SAP WM: On the Use of the ‘Ref.stor.type search’ Field in the Movement Type

I am responsible for implementing MM and WM in my current project. Based on the actual layout of the customer's warehouse, I enabled the following storage types in the WM storage type design:

001: high-rack storage area for raw materials

005: fixed-bin storage area for raw materials

PBL: isolation area beside the production line (production red area)

QBL: quality isolation area (quality red area)

There are also storage areas for in-house semi-finished products and finished products.

The storage type indicator is enabled for raw materials. Different types of material carry different indicators, so that at putaway or picking the system automatically proposes the storage type specified for that material.

For example, when some raw materials are picked for production staging, the system should propose stock from 001 first and then from 005; for other raw materials, it should propose 005 first and then 001. Based on these requirements, I completed the relevant configuration in the storage type search.

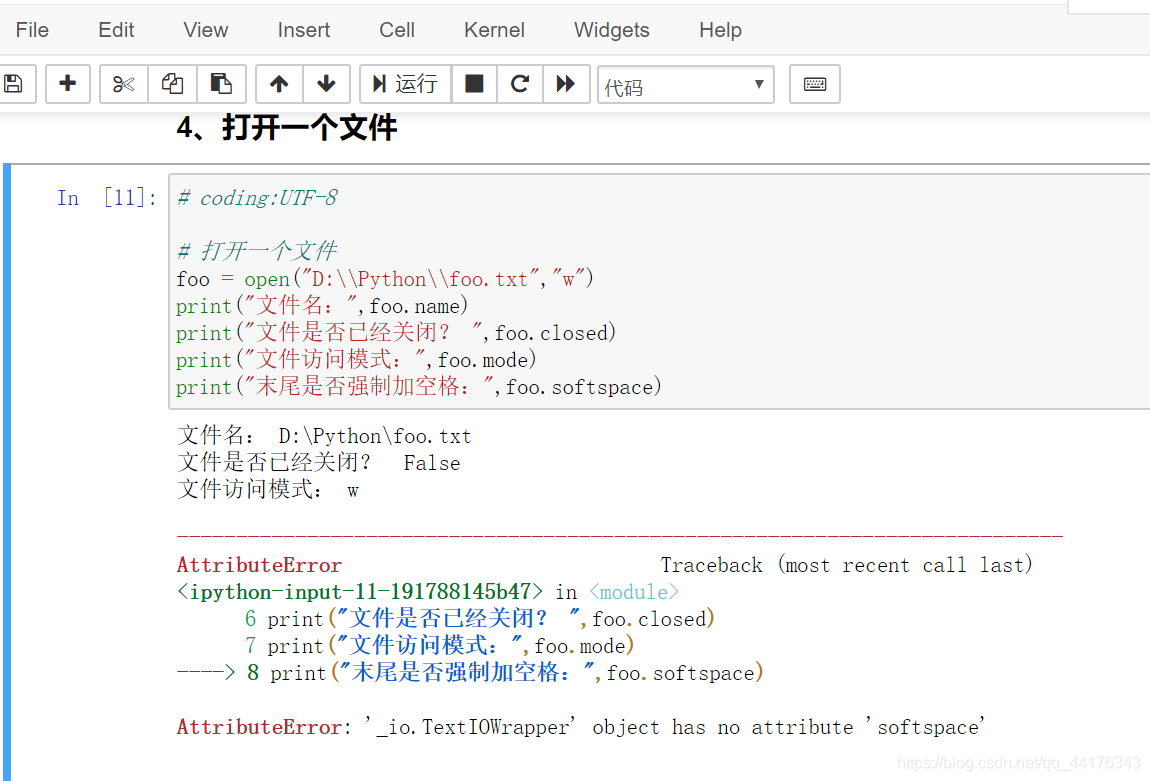
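The effect of the storage type indicator on stock removal can be sketched as a simple lookup. This is a simplified model in Python, not SAP's actual implementation. The indicator names `R01` and `R02` and the function `propose_source` are hypothetical, since the article does not name the indicators; only the storage types 001 and 005 come from the text.

```python
# Simplified model of a stock removal storage type search.
# "R01" and "R02" are hypothetical storage type indicators.
REMOVAL_SEARCH = {
    "R01": ["001", "005"],  # search 001 first, then 005
    "R02": ["005", "001"],  # search 005 first, then 001
}


def propose_source(indicator, stock_by_type):
    """Return the first storage type in the search sequence that holds stock.

    stock_by_type maps a storage type to the available quantity.
    Returns None if no storage type in the sequence has stock.
    """
    for storage_type in REMOVAL_SEARCH.get(indicator, []):
        if stock_by_type.get(storage_type, 0) > 0:
            return storage_type
    return None


if __name__ == "__main__":
    stock = {"001": 40, "005": 15}
    print(propose_source("R01", stock))
    print(propose_source("R02", stock))
```

With stock in both storage types, the two indicators lead to different proposals for otherwise identical requests, which is the behavior the configuration above is meant to achieve.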

When a business department needs to isolate materials for some reason, it moves them to PBL or QBL. If the quality department decides that the isolated materials must be scrapped, they are issued for scrapping. When the transfer order (TO) is created in this scrap scenario, the business does not want the system to propose 001 or 005, as it does by default; it requires the system to automatically propose PBL and QBL as the source.

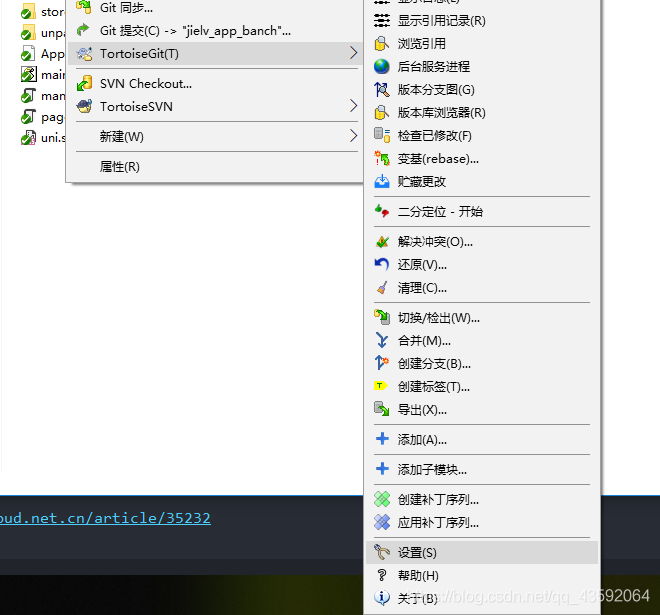

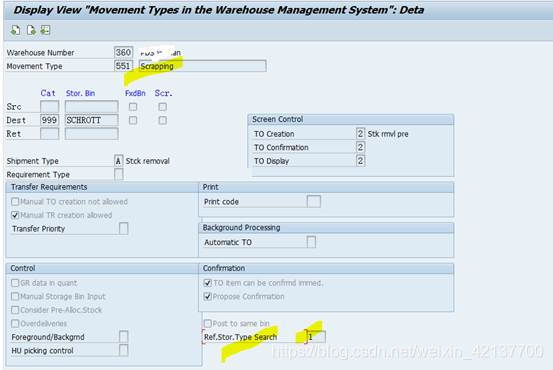

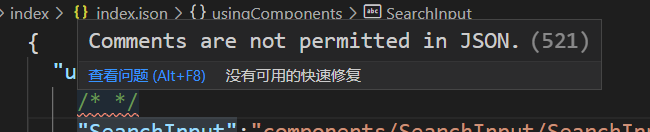

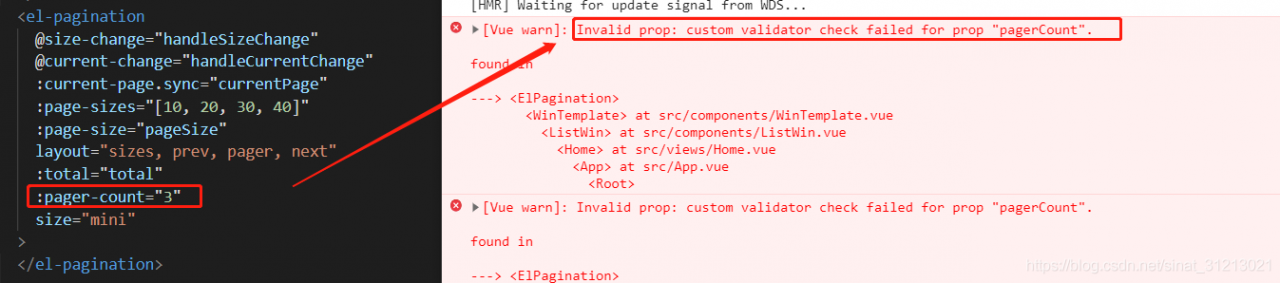

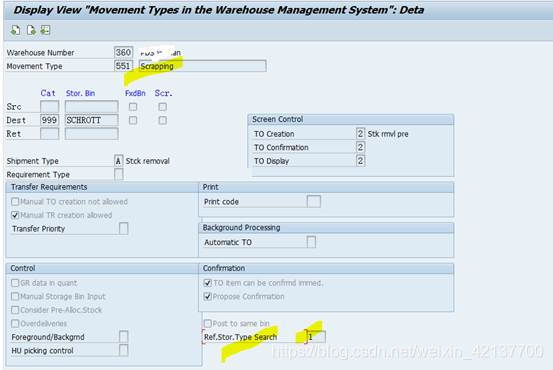

To meet this requirement, you can set ‘Ref.stor.type search’ in the WM movement type settings. In other words, for the same material, different storage types are proposed in different business scenarios.

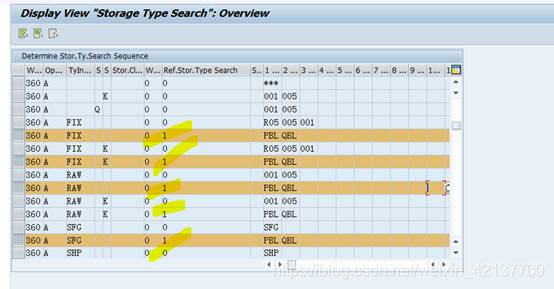

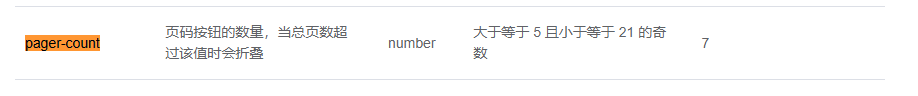

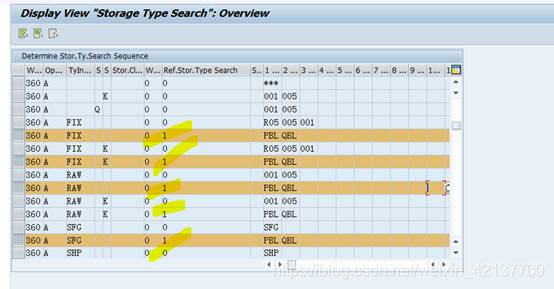

Then, in the storage type search configuration, add the following entries:

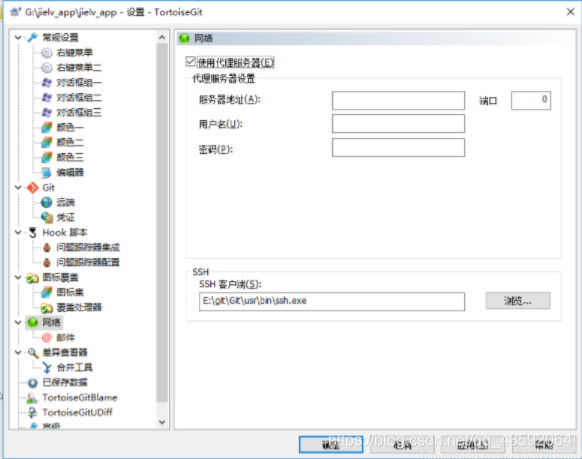

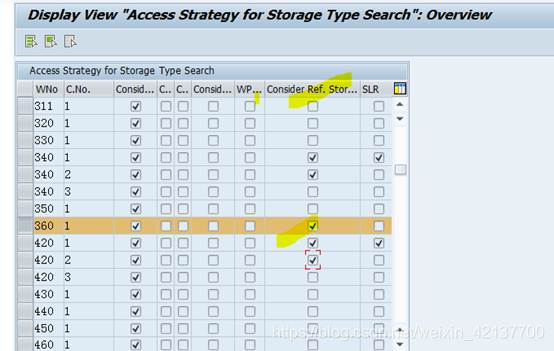

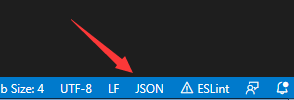

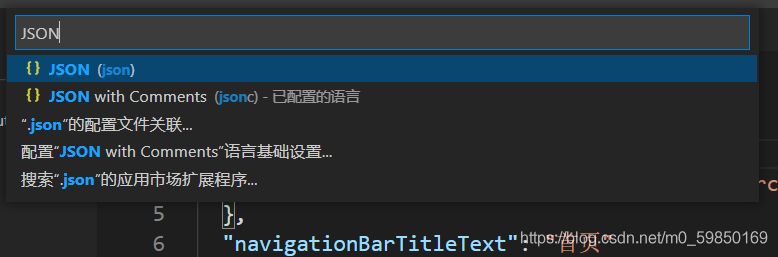

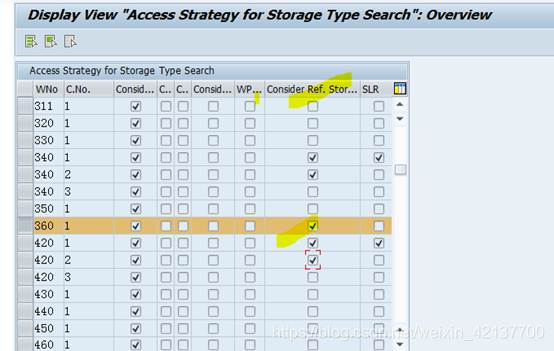

Of course, this only works if the storage type search is set up to take ‘Ref.stor.type search’ into account, as shown in the following figures:

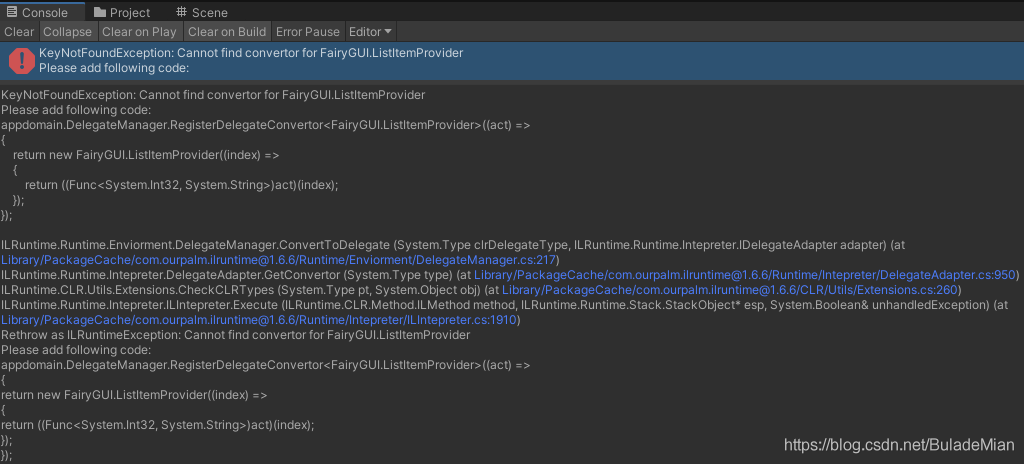

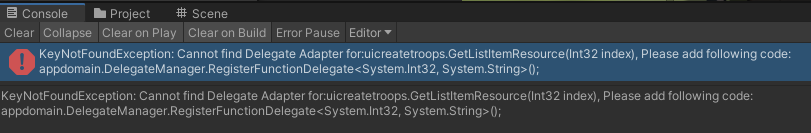

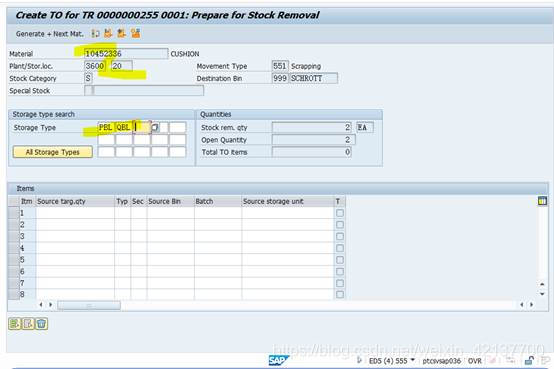

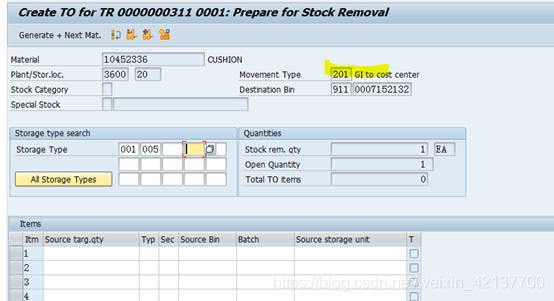

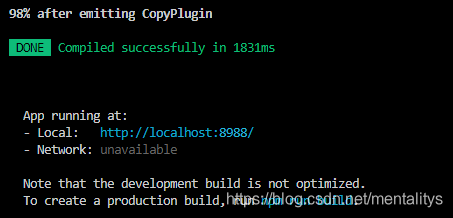

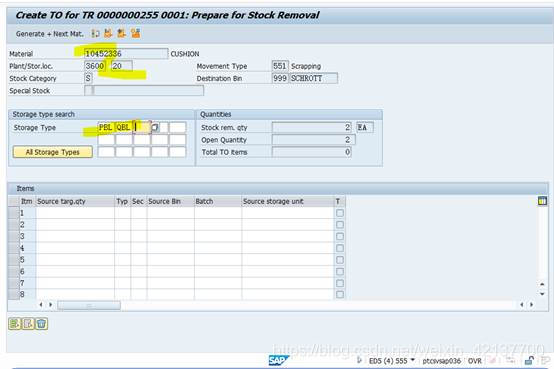
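The interplay of the three settings (a reference value on the movement type, additional search entries for that reference, and a search that actually evaluates the reference) can be sketched as follows. This is a simplified Python model under stated assumptions, not SAP's internal logic: the reference value `"1"`, the indicator `R01`, and the function name `search_sequence` are hypothetical, and movement type 201 is assumed here for the goods issue to a cost center. Only movement type 555 and the storage types come from the article.

```python
# Reference value carried by each WM movement type.
# "555" (scrapping) is from the article; the value "1" is hypothetical.
# "201" is assumed for a goods issue to a cost center; it has no reference.
MOVEMENT_TYPE_REF = {"555": "1", "201": ""}

# Stock removal search entries keyed by (storage type indicator, reference).
# "R01" is a hypothetical storage type indicator.
SEARCH_ENTRIES = {
    ("R01", ""): ["001", "005"],   # normal stock removal
    ("R01", "1"): ["PBL", "QBL"],  # scrapping from the isolation areas
}


def search_sequence(movement_type, indicator, consider_reference=True):
    """Return the storage types to search, in order, for a stock removal.

    If consider_reference is False, the reference on the movement type is
    ignored and the lookup falls back to the entry without a reference.
    """
    ref = MOVEMENT_TYPE_REF.get(movement_type, "") if consider_reference else ""
    return SEARCH_ENTRIES.get((indicator, ref), [])


if __name__ == "__main__":
    print(search_sequence("555", "R01"))
    print(search_sequence("201", "R01"))
    print(search_sequence("555", "R01", consider_reference=False))
```

The last call illustrates why the premise matters: if the search does not consider the reference, the scrap movement falls back to the normal sequence even though the extra entries exist.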

With this in place, after a scrap goods issue is posted in the front end with MB1A and movement type 555, executing LT06 to create the TO makes the screen automatically propose PBL and QBL as the source:

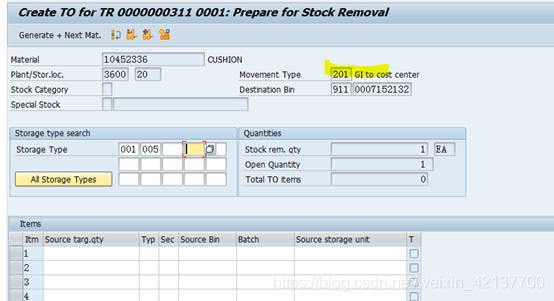

In other scenarios, for example a goods issue to a cost center, the system proposes stock from 001/005 and the other storage areas according to the normal stock removal strategy.

2016-12-01 Written in Wuhan Economic Development Zone