Today I noticed a red exclamation mark on an ESXi host in vCSA (vCenter Server Appliance).

So I selected the ESXi host, opened [Monitoring] > [Problems and Alarms], and found a critical alarm named "Status of other host hardware objects".

That alarm alone does not say what is actually wrong, so I went on to [Hardware Operational Status], which showed the entry "System Management Software 1 SEL Fullness".

This error is hard to interpret unless you know what SEL means. Here SEL is the System Event Log: the IPMI hardware event log kept by the server's BMC, which ESXi reads through IPMI.

In other words, the host's system event log is full, and in that case the fix is simply to clear it.

So first enable the SSH service and the ESXi Shell on the host, then log in to the ESXi host over SSH. Because this ESXi host is not in production, I cleared the log directly with `localcli hardware ipmi sel clear`:

```
[root@EX123:~] localcli hardware ipmi sel get
   IpmiSELConfig:
         Enabled: true
         Formatted-Raw:
         Last Added: 2022-09-05T06:18:40
         Last Cleared: 2018-12-16T07:51:30
         Maximum Records: 512
         Overflow: false
         Raw:
         Sel-Clock: 2022-11-07T08:42:57
         Total Records: 456
         Version: 0x51 (1.5)
[root@EX123:~] localcli hardware ipmi sel clear
[root@EX123:~] localcli hardware ipmi sel get
   IpmiSELConfig:
         Enabled: true
         Formatted-Raw:
         Last Added: 2022-11-07T08:43:45
         Last Cleared: 2022-11-07T08:43:44
         Maximum Records: 512
         Overflow: false
         Raw:
         Sel-Clock: 2022-11-07T08:43:52
         Total Records: 1
         Version: 0x51 (1.5)
[root@EX123:~]
```
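In the `sel get` output, the SEL held 456 of its 512 maximum records before clearing. As a rough way to check how full the log is, you can divide `Total Records` by `Maximum Records`. This is my own approximation and not necessarily how the BMC's "SEL Fullness" sensor is computed; `sel_fullness` is a hypothetical helper name, and the sketch assumes the field labels look exactly like the output shown here.

```shell
#!/bin/sh
# Hypothetical helper: print SEL usage as an integer percentage
# (Total Records * 100 / Maximum Records), truncated toward zero.
# Reads the text output of `localcli hardware ipmi sel get` on stdin.
# Prints "unknown" and returns 1 if Maximum Records cannot be found.
sel_fullness() {
    awk -F': *' '
        /Total Records:/   { total = $2 }
        /Maximum Records:/ { max = $2 }
        END {
            if (max > 0) {
                printf "%d\n", (total * 100) / max
            } else {
                print "unknown"
                exit 1
            }
        }'
}

# Example usage on the ESXi host:
#   localcli hardware ipmi sel get | sel_fullness
```

With the figures above this reports 89 before the clear and 0 afterwards.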

After running the command, I went back to vCSA and the alarm on the ESXi host had cleared. If the host is important to production, however, you can set a larger value, or export and back up the event log before clearing it.
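For the "back up first, then clear" approach, here is a minimal sketch. It assumes your ESXi build provides the `localcli hardware ipmi sel list` subcommand (run `localcli hardware ipmi sel` to see which subcommands exist). The function name `backup_and_clear_sel` and the example datastore path are hypothetical. The backup should go to persistent storage such as a datastore, because `/tmp` on ESXi lives in RAM and is lost on reboot.

```shell
#!/bin/sh
# Hypothetical helper: save the current SEL entries to a timestamped file,
# and clear the SEL only if that file was written and is non-empty.
# Assumes `localcli hardware ipmi sel list` exists on this ESXi build.
backup_and_clear_sel() {
    backup_dir="$1"
    mkdir -p "$backup_dir" || return 1
    backup_file="$backup_dir/sel-$(uname -n)-$(date +%Y%m%dT%H%M%S).txt"
    # A full SEL should never produce an empty listing, so treat an
    # empty file as a failed backup and leave the log untouched.
    if localcli hardware ipmi sel list > "$backup_file" && [ -s "$backup_file" ]; then
        echo "SEL saved to $backup_file"
        localcli hardware ipmi sel clear
    else
        echo "SEL backup failed; not clearing" >&2
        return 1
    fi
}

# Example usage (datastore1 is a hypothetical datastore name):
#   backup_and_clear_sel /vmfs/volumes/datastore1/sel-backup
```

Clearing is chained to a successful backup on purpose: once the BMC's log is cleared, the old entries cannot be recovered.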

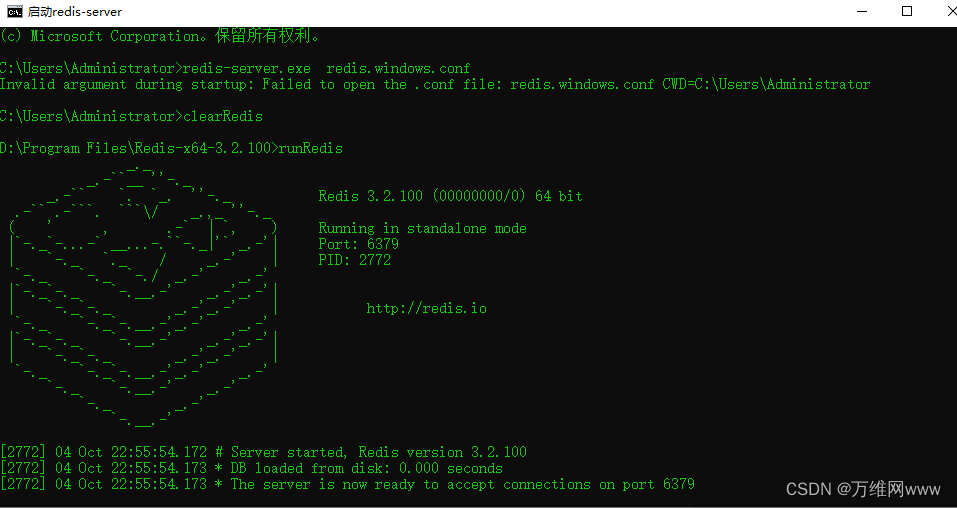

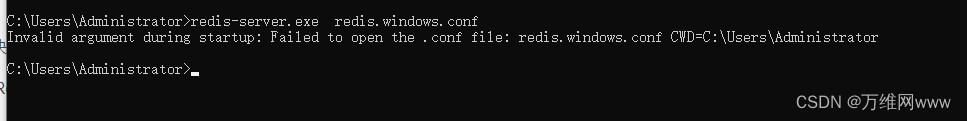

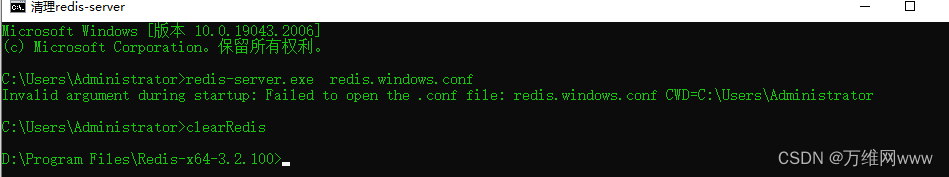