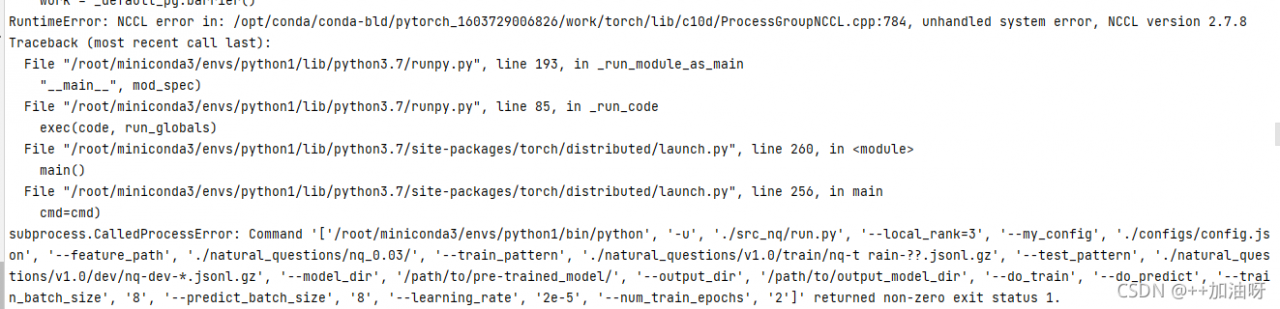

Project scenario:

PyTorch reports an error: TypeError: exceptions must derive from BaseException

Problem description:

In base_options.py, the --netG parameter can only be selected from the following choices:

    self.parser.add_argument('--netG', type=str, default='p2hed', choices=['p2hed', 'refineD', 'p2hed_att'], help='selects model to use for netG')

However, when selecting netG, the code is written as follows:

    def define_G(input_nc, output_nc, ngf, netG, n_downsample_global=3, n_blocks_global=9, n_local_enhancers=1,
                 n_blocks_local=3, norm='instance', gpu_ids=[]):
        norm_layer = get_norm_layer(norm_type=norm)
        if netG == 'p2hed':
            netG = DDNet_p2hED(input_nc, output_nc, ngf, n_downsample_global, n_blocks_global, norm_layer)
        elif netG == 'refineDepth':
            netG = DDNet_RefineDepth(input_nc, output_nc, ngf, n_downsample_global, n_blocks_global, n_local_enhancers, n_blocks_local, norm_layer)
        elif netG == 'p2h_noatt':
            netG = DDNet_p2hed_noatt(input_nc, output_nc, ngf, n_downsample_global, n_blocks_global, n_local_enhancers, n_blocks_local, norm_layer)
        else:
            raise('generator not implemented!')
        #print(netG)
        if len(gpu_ids) > 0:
            assert(torch.cuda.is_available())
            netG.cuda(gpu_ids[0])
        netG.apply(weights_init)
        return netG

Cause analysis:

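Note that define_G has no branch for 'refineD'; it checks for 'refineDepth' instead. When --netG refineD is passed, no branch matches and execution falls through to the else clause. There, raise('generator not implemented!') tries to raise a plain string, which is not an exception object, so Python 3 reports TypeError: exceptions must derive from BaseException rather than the intended message. The choice 'p2hed_att' has the same mismatch, because the code checks for 'p2h_noatt'.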
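To see why the message is a TypeError and not "generator not implemented!", here is a minimal sketch that reproduces the behaviour without PyTorch. The function pick_generator and its return value are hypothetical stand-ins for define_G, not the project's code.

```python
def pick_generator(name):
    # Hypothetical stand-in for define_G: only 'p2hed' is handled here.
    if name == 'p2hed':
        return 'DDNet_p2hED'
    else:
        # Same mistake as in the original code: a str is not an exception.
        raise('generator not implemented!')


try:
    pick_generator('refineD')
except TypeError as err:
    # Python 3 rejects the raise itself, so the intended text is never shown.
    message = str(err)
    print(message)
```

The string passed to raise never reaches the user; the interpreter complains about the raise statement itself.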

Solution:

Change "elif netG == 'refineDepth':" to "elif netG == 'refineD':" so that the branch matches the name listed in choices, and the error goes away.
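The fix can be sketched as follows. This is an illustration under assumptions, not the project's actual code: select_generator is a hypothetical helper, and the network classes are replaced by their names as strings so the snippet runs without PyTorch. It also raises a real exception class in the else branch, so that any future name mismatch produces a readable message instead of a TypeError.

```python
def select_generator(netG):
    # Hypothetical helper mirroring the branch structure of define_G.
    if netG == 'p2hed':
        return 'DDNet_p2hED'
    elif netG == 'refineD':  # was 'refineDepth'; now matches the choices list
        return 'DDNet_RefineDepth'
    else:
        # NotImplementedError derives from BaseException, so this raise is valid.
        raise NotImplementedError(f"generator '{netG}' not implemented!")


print(select_generator('refineD'))

try:
    select_generator('unknown')
except NotImplementedError as err:
    error_text = str(err)
    print(error_text)
```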