Error background:

When setting up Flink on a YARN cluster, the Flink cluster fails to start.

Versions:

flink-1.14.6

hadoop-3.2.3

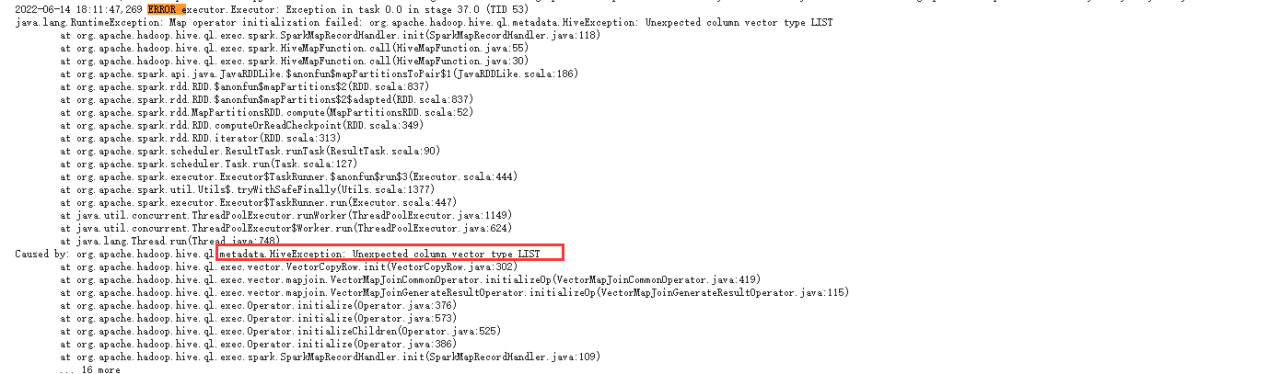

```
org.apache.flink.runtime.entrypoint.ClusterEntrypointException: Failed to initialize the cluster entrypoint StandaloneSessionClusterEntrypoint.
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.startCluster(ClusterEntrypoint.java:216) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.runClusterEntrypoint(ClusterEntrypoint.java:617) [flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.StandaloneSessionClusterEntrypoint.main(StandaloneSessionClusterEntrypoint.java:59) [flink-dist_2.12-1.14.6.jar:1.14.6]
Caused by: java.io.IOException: Could not create FileSystem for highly available storage path (hdfs:/flink/ha/default)
	at org.apache.flink.runtime.blob.BlobUtils.createFileSystemBlobStore(BlobUtils.java:92) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.blob.BlobUtils.createBlobStoreFromConfig(BlobUtils.java:76) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.highavailability.HighAvailabilityServicesUtils.createHighAvailabilityServices(HighAvailabilityServicesUtils.java:121) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.createHaServices(ClusterEntrypoint.java:361) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.initializeServices(ClusterEntrypoint.java:318) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.runCluster(ClusterEntrypoint.java:243) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.lambda$startCluster$1(ClusterEntrypoint.java:193) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.security.contexts.NoOpSecurityContext.runSecured(NoOpSecurityContext.java:28) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.startCluster(ClusterEntrypoint.java:190) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	... 2 more
Caused by: org.apache.flink.core.fs.UnsupportedFileSystemSchemeException: Could not find a file system implementation for scheme 'hdfs'. The scheme is not directly supported by Flink and no Hadoop file system to support this scheme could be loaded. For a full list of supported file systems, please see https://nightlies.apache.org/flink/flink-docs-stable/ops/filesystems/.
	at org.apache.flink.core.fs.FileSystem.getUnguardedFileSystem(FileSystem.java:532) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.core.fs.FileSystem.get(FileSystem.java:409) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.core.fs.Path.getFileSystem(Path.java:274) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.blob.BlobUtils.createFileSystemBlobStore(BlobUtils.java:89) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.blob.BlobUtils.createBlobStoreFromConfig(BlobUtils.java:76) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.highavailability.HighAvailabilityServicesUtils.createHighAvailabilityServices(HighAvailabilityServicesUtils.java:121) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.createHaServices(ClusterEntrypoint.java:361) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.initializeServices(ClusterEntrypoint.java:318) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.runCluster(ClusterEntrypoint.java:243) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.lambda$startCluster$1(ClusterEntrypoint.java:193) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.security.contexts.NoOpSecurityContext.runSecured(NoOpSecurityContext.java:28) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.startCluster(ClusterEntrypoint.java:190) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	... 2 more
Caused by: org.apache.flink.core.fs.UnsupportedFileSystemSchemeException: Hadoop is not in the classpath/dependencies.
	at org.apache.flink.core.fs.UnsupportedSchemeFactory.create(UnsupportedSchemeFactory.java:55) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.core.fs.FileSystem.getUnguardedFileSystem(FileSystem.java:528) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.core.fs.FileSystem.get(FileSystem.java:409) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.core.fs.Path.getFileSystem(Path.java:274) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.blob.BlobUtils.createFileSystemBlobStore(BlobUtils.java:89) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.blob.BlobUtils.createBlobStoreFromConfig(BlobUtils.java:76) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.highavailability.HighAvailabilityServicesUtils.createHighAvailabilityServices(HighAvailabilityServicesUtils.java:121) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.createHaServices(ClusterEntrypoint.java:361) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.initializeServices(ClusterEntrypoint.java:318) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.runCluster(ClusterEntrypoint.java:243) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.lambda$startCluster$1(ClusterEntrypoint.java:193) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.security.contexts.NoOpSecurityContext.runSecured(NoOpSecurityContext.java:28) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	at org.apache.flink.runtime.entrypoint.ClusterEntrypoint.startCluster(ClusterEntrypoint.java:190) ~[flink-dist_2.12-1.14.6.jar:1.14.6]
	... 2 more
```

The reason for the error:

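The innermost cause in the log, "Hadoop is not in the classpath/dependencies", shows that Flink could not load a Hadoop file system for the hdfs scheme used by the high-availability storage path. Flink needs two extra JAR dependencies to access HDFS. The Flink distribution does not ship them, so you have to add them yourself: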

- flink-shaded-hadoop-3-3.1.1.7.2.9.0-173-9.0.jar

- commons-cli-1.5.0.jar
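To check whether an installation already has these two files, the following Python sketch reports which of them are missing from a lib directory. It only compares file names: the names are the two listed above, and /flink/lib is the path used in this setup, so adjust both if your versions or layout differ.

```python
from pathlib import Path

# The two JAR files this note says Flink needs for HDFS access.
REQUIRED_JARS = [
    "flink-shaded-hadoop-3-3.1.1.7.2.9.0-173-9.0.jar",
    "commons-cli-1.5.0.jar",
]


def missing_jars(lib_dir, required=REQUIRED_JARS):
    """Return the required JAR file names that are not present in lib_dir."""
    present = {p.name for p in Path(lib_dir).glob("*.jar")}
    return [name for name in required if name not in present]


if __name__ == "__main__":
    # /flink/lib is the lib directory used in this setup; change it for yours.
    lib = "/flink/lib"
    if Path(lib).is_dir():
        print("missing:", missing_jars(lib))
    else:
        print(f"{lib} does not exist")
```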

Solution:

Search the Maven repository site for these two JAR files and download them: https://mvnrepository.com/
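A Maven repository stores each JAR under a fixed path derived from its coordinates (groupId, artifactId, version), so the download link can also be built directly. The Python sketch below does that. The example uses commons-cli 1.5.0 from Maven Central; for the shaded Hadoop JAR, the file name suggests artifactId flink-shaded-hadoop-3 and version 3.1.1.7.2.9.0-173-9.0, but its groupId and hosting repository are not given in this note, so take them from its page on mvnrepository.com.

```python
# Maven 2 repository layout:
#   <repo>/<groupId, dots replaced by slashes>/<artifactId>/<version>/<artifactId>-<version>.jar
# Assumption: Maven Central is used as the example repository.
MAVEN_CENTRAL = "https://repo1.maven.org/maven2"


def maven_jar_url(repo, group_id, artifact_id, version):
    """Build the download URL of a JAR from its Maven coordinates."""
    group_path = group_id.replace(".", "/")
    file_name = f"{artifact_id}-{version}.jar"
    return f"{repo.rstrip('/')}/{group_path}/{artifact_id}/{version}/{file_name}"


if __name__ == "__main__":
    # commons-cli:commons-cli:1.5.0, one of the two JARs listed above.
    print(maven_jar_url(MAVEN_CENTRAL, "commons-cli", "commons-cli", "1.5.0"))
```

Running it prints https://repo1.maven.org/maven2/commons-cli/commons-cli/1.5.0/commons-cli-1.5.0.jar, which can be fetched with any HTTP client.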

Put both JAR files into Flink's lib directory (/flink/lib in this setup) and restart the Flink cluster.
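If you script this step, a minimal Python sketch could look like the following. The function name install_jars is hypothetical; it copies the downloaded files into the lib directory and overwrites any file with the same name. The JARs in lib are put on the classpath only when Flink's processes start, which is why the restart is needed.

```python
import shutil
from pathlib import Path


def install_jars(jar_paths, lib_dir):
    """Copy each downloaded JAR into Flink's lib directory (hypothetical helper).

    Returns the destination paths. Existing files with the same name are
    overwritten. Restart the Flink cluster afterwards so the JARs are loaded.
    """
    lib = Path(lib_dir)
    if not lib.is_dir():
        raise NotADirectoryError(f"Flink lib directory not found: {lib}")
    installed = []
    for jar in map(Path, jar_paths):
        if jar.suffix != ".jar":
            raise ValueError(f"not a JAR file: {jar}")
        dest = lib / jar.name
        shutil.copy2(jar, dest)  # copy contents and file metadata
        installed.append(dest)
    return installed
```

For example, from the directory holding the downloads: install_jars(["flink-shaded-hadoop-3-3.1.1.7.2.9.0-173-9.0.jar", "commons-cli-1.5.0.jar"], "/flink/lib").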