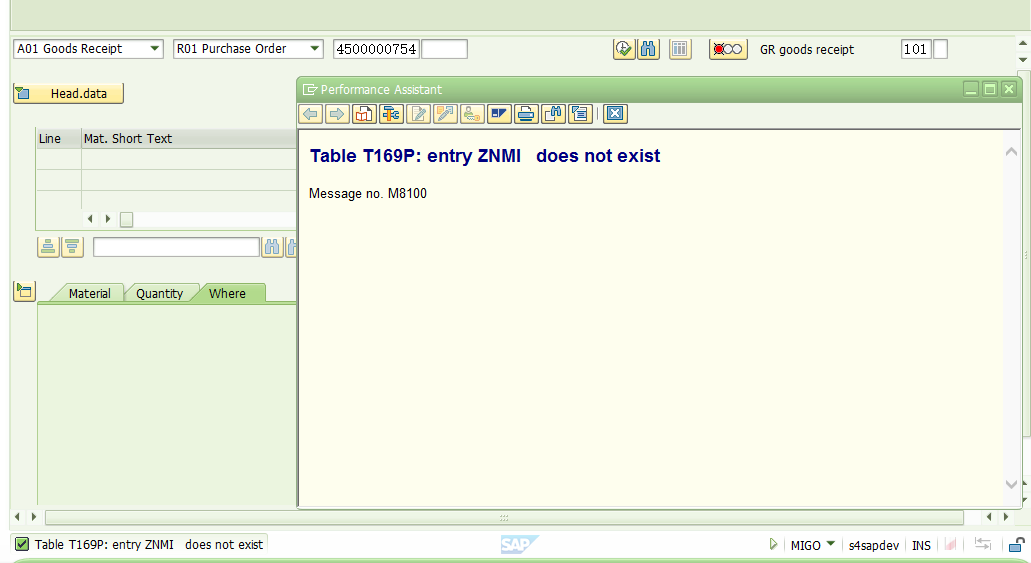

1. Identifying the problem

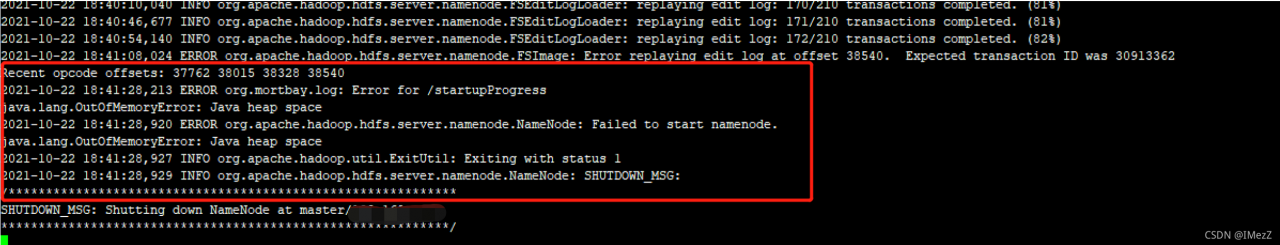

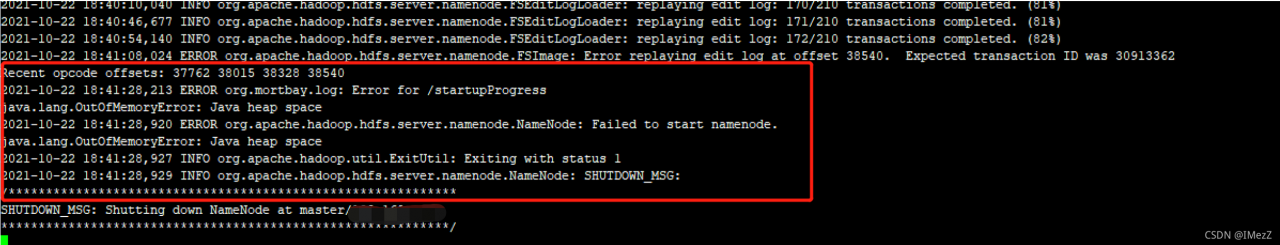

Symptom: after restarting the Hadoop cluster, the NameNode reports an error and fails to start.

Error reported:

2. Analyzing the problem

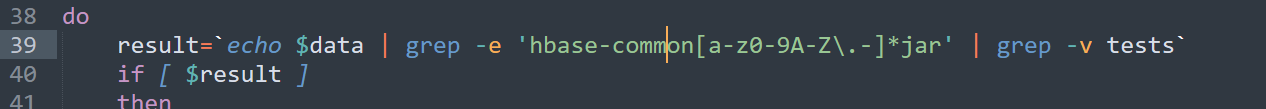

The error report contains "OutOfMemoryError: Java heap space", which points to the JVM heap parameters. Check the hadoop-env.sh configuration file, where the relevant settings are as follows:

export HADOOP_NAMENODE_OPTS="-Dhadoop.security.logger=${HADOOP_SECURITY_LOGGER:-INFO,RFAS} -Dhdfs.audit.logger=${HDFS_AUDIT_LOGGER:-INFO,NullAppender} $HADOOP_NAMENODE_OPTS"

export HADOOP_DATANODE_OPTS="-Dhadoop.security.logger=ERROR,RFAS $HADOOP_DATANODE_OPTS"

Neither setting specifies a heap size.

When no heap size is set, each role in the HDFS cluster (NameNode, SecondaryNameNode, DataNode) runs with the default heap size of 1000 MB, which was not enough for this NameNode to start.
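A quick way to confirm that an options string carries no heap limit is to scan it for `-Xmx`. The following is a minimal sketch, not part of Hadoop: `effective_xmx` is a hypothetical helper name, and the option strings passed to it are shortened stand-ins for the values in hadoop-env.sh. It reports the last `-Xmx` it finds, on the assumption that a HotSpot JVM honors the last occurrence when the flag is repeated.

```shell
# Hypothetical helper (not a Hadoop command): print the -Xmx value that would
# take effect for a JVM options string, or "unset" if the string has none.
# Assumption: when -Xmx appears more than once, the last occurrence wins.
effective_xmx() {
  result="unset"
  # $1 is intentionally unquoted so the shell splits it into single options.
  for opt in $1; do
    case "$opt" in
      -Xmx*) result="${opt#-Xmx}" ;;
    esac
  done
  printf '%s\n' "$result"
}

# Options without a heap setting, as in the original configuration.
effective_xmx "-Dhadoop.security.logger=INFO,RFAS -Dhdfs.audit.logger=INFO,NullAppender"   # prints: unset

# Options with an explicit heap setting.
effective_xmx "-Xms4096m -Xmx4096m -Dhadoop.security.logger=INFO,RFAS"   # prints: 4096m
```

If the helper prints "unset" for a role, that role falls back to the default heap size.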

3. Solving the problem

Change the settings to the following and restart the cluster; this time it starts successfully.

export HADOOP_NAMENODE_OPTS="-Xms4096m -Xmx4096m -Dhadoop.security.logger=${HADOOP_SECURITY_LOGGER:-INFO,RFAS} -Dhdfs.audit.logger=${HDFS_AUDIT_LOGGER:-INFO,NullAppender} $HADOOP_NAMENODE_OPTS"

export HADOOP_SECONDARYNAMENODE_OPTS="-Xms4096m -Xmx4096m -Dhadoop.security.logger=${HADOOP_SECURITY_LOGGER:-INFO,RFAS} -Dhdfs.audit.logger=${HDFS_AUDIT_LOGGER:-INFO,NullAppender} $HADOOP_SECONDARYNAMENODE_OPTS"

export HADOOP_DATANODE_OPTS="-Xms2048M -Xmx2048M -Dhadoop.security.logger=ERROR,RFAS $HADOOP_DATANODE_OPTS"

Parameter Description:

-Xmx4096m: maximum heap size the JVM may use (here 4096 MB)

-Xms4096m: initial heap size allocated when the JVM starts (here 4096 MB)
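The JVM accepts the size suffix in either case, so 2048M and 2048m mean the same thing, and 4g is another way to write 4096m. The sketch below normalizes such values to megabytes so that settings for different roles can be compared; `heap_to_mb` is a hypothetical helper introduced only for illustration, and it assumes the value is a whole number with an optional k, m, or g suffix.

```shell
# Hypothetical helper: convert a JVM heap size value (the part after -Xms or
# -Xmx) to whole megabytes. A value without a suffix is treated as bytes.
heap_to_mb() {
  value="$1"
  number="${value%[kKmMgG]}"      # strip one trailing unit letter, if any
  suffix="${value#"$number"}"     # whatever was stripped (may be empty)
  case "$suffix" in
    k|K) echo $(( number / 1024 )) ;;
    m|M) echo "$number" ;;
    g|G) echo $(( number * 1024 )) ;;
    "")  echo $(( number / 1024 / 1024 )) ;;
  esac
}

heap_to_mb 4096m   # prints: 4096
heap_to_mb 2048M   # prints: 2048
heap_to_mb 4g      # prints: 4096
```

Setting -Xms equal to -Xmx, as done for the NameNode above, means the JVM requests the full heap at startup instead of growing it later.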

Reference: HDFS memory configuration – flowers are not fully opened * months are not round – blog Park