In a recent project I used a MinIO image-hosting server to upload pictures, so here is a record of how it was set up. The project environment is as follows:

Nacos, Gradle, Spring Boot, MyBatis, MySQL

First, add the MinIO dependency in Gradle. This project uses version 3.0.10 (on Gradle 7 and later, declare it with implementation instead of compile, which has been removed):

    compile 'io.minio:minio:3.0.10'

Next, add a MinioUtils component to the project. It wraps the MinIO client and exposes the method used to upload pictures. All the parameters the project needs are kept in the Nacos configuration center, so the class reads them with the @NacosValue annotation. The upload method returns the URL of the object stored in the bucket, in the form MinIO domain name/bucket name/file name.
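
To make the configuration side concrete, here is a minimal sketch in plain Java (no Nacos involved) that reads the four keys used by MinioUtils from properties text and fails fast when one is missing. The class name `MinioSettings` and any values passed to it are hypothetical; only the key names come from the class shown below.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.Properties;

/** Holds the four settings MinioUtils reads; a hypothetical helper, not part of Nacos or MinIO. */
final class MinioSettings {
    final String endpoint;
    final String accessKey;
    final String secretKey;
    final String preUrl;

    private MinioSettings(String endpoint, String accessKey, String secretKey, String preUrl) {
        this.endpoint = endpoint;
        this.accessKey = accessKey;
        this.secretKey = secretKey;
        this.preUrl = preUrl;
    }

    /** Parses key=value text and rejects configurations with a missing or blank key. */
    static MinioSettings parse(String propertiesText) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(propertiesText));
        } catch (IOException e) {
            throw new IllegalStateException("Could not read configuration", e);
        }
        return new MinioSettings(
                required(props, "ymukj.minio.endpoint"),
                required(props, "ymukj.minio.accessKey"),
                required(props, "ymukj.minio.secretKey"),
                required(props, "ymukj.minio.preUrl"));
    }

    private static String required(Properties props, String key) {
        String value = props.getProperty(key);
        if (value == null || value.trim().isEmpty()) {
            throw new IllegalArgumentException("Missing configuration key: " + key);
        }
        return value.trim();
    }
}
```

Failing at startup when a key is absent is a design choice of this sketch. It makes a misconfigured endpoint visible immediately instead of at the first upload.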

    import com.alibaba.nacos.api.config.annotation.NacosValue;
    import io.minio.MinioClient;
    import io.minio.errors.*;
    import lombok.extern.slf4j.Slf4j;
    import org.springframework.stereotype.Component;

    import javax.annotation.PostConstruct;
    import java.io.InputStream;

    /**
     * @ClassName MinioUtils
     * @Description: Wraps MinioClient and uploads files to a bucket
     * @Author XuJianSong
     * @Date 2021-01-07
     * @Version V1.0
     **/
    @Slf4j
    @Component
    public class MinioUtils {

        private MinioClient minioClient;

        // Connection settings are read from the Nacos configuration center.
        @NacosValue(value = "${ymukj.minio.endpoint}")
        private String endPoint;

        @NacosValue(value = "${ymukj.minio.accessKey}")
        private String accessKey;

        @NacosValue(value = "${ymukj.minio.secretKey}")
        private String secretKey;

        @NacosValue(value = "${ymukj.minio.preUrl}")
        private String preUrl;

        // Build the client once, after the configuration values have been injected.
        @PostConstruct
        public void initMinioClient() {
            try {
                minioClient = new MinioClient(endPoint, accessKey, secretKey);
            } catch (InvalidEndpointException | InvalidPortException e) {
                log.error(e.getMessage(), e);
            }
        }

        public String uploadFile(String bucketName, String objName, InputStream inputStream, Long lenght, String contentType) {
            try {
                // Create the bucket first if it is missing; the upload needs an existing bucket.
                boolean isExist = minioClient.bucketExists(bucketName);
                if (!isExist) {
                    minioClient.makeBucket(bucketName);
                }
                // Use putObject to upload a file to the storage bucket.
                minioClient.putObject(bucketName, objName, inputStream, lenght, contentType);
                // preUrl is expected to already contain the MinIO domain name and bucket name.
                return preUrl + "/" + objName;
            } catch (Exception e) {
                log.error("Upload of " + objName + " to bucket " + bucketName + " failed", e);
                return null;
            }
        }
    }

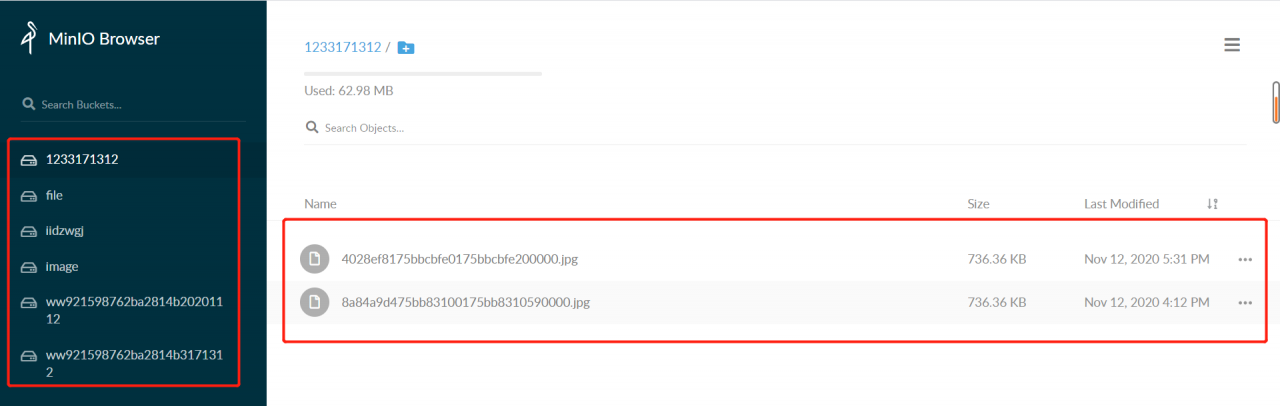
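
The returned URL has the shape MinIO domain name/bucket name/file name. As a rough illustration of that layout, the sketch below joins the three parts with exactly one slash between them. `ObjectUrls` is a hypothetical helper, the values used with it are placeholders, and it does no URL encoding, so it assumes object names without spaces or special characters.

```java
/** Hypothetical helper that assembles a path-style object URL. */
final class ObjectUrls {
    private ObjectUrls() {
    }

    /** Joins base URL, bucket and object name, tolerating stray leading or trailing slashes. */
    static String build(String baseUrl, String bucket, String objectName) {
        String base = baseUrl.replaceAll("/+$", "");        // drop trailing slashes
        String cleanBucket = bucket.replaceAll("^/+|/+$", ""); // drop slashes on both ends
        String cleanName = objectName.replaceAll("^/+", "");  // drop leading slashes
        return base + "/" + cleanBucket + "/" + cleanName;
    }
}
```

For example, `ObjectUrls.build("http://localhost:9000/", "pic", "1610000000000a.png")` yields `http://localhost:9000/pic/1610000000000a.png`.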

MinIO has the concept of a “bucket”, which is roughly a folder on the image host. If the bucket name passed in already exists on the server, the uploaded picture is stored in that bucket.

If the bucket name passed in does not exist on the server, the upload method creates the bucket first and then saves the picture.
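
The order matters here: check for the bucket, create it if needed, and only then upload. The sketch below shows that order against an in-memory stand-in. `InMemoryStore` is a hypothetical class written for this illustration, not the MinIO client API. It only borrows the method names bucketExists, makeBucket and putObject.

```java
import java.util.HashMap;
import java.util.Map;

/** In-memory stand-in for an object store; NOT the MinIO client. */
final class InMemoryStore {
    private final Map<String, Map<String, byte[]>> buckets = new HashMap<>();

    boolean bucketExists(String bucket) {
        return buckets.containsKey(bucket);
    }

    void makeBucket(String bucket) {
        buckets.put(bucket, new HashMap<>());
    }

    /** Refuses to write into a bucket that has not been created. */
    void putObject(String bucket, String name, byte[] data) {
        Map<String, byte[]> objects = buckets.get(bucket);
        if (objects == null) {
            throw new IllegalStateException("No such bucket: " + bucket);
        }
        objects.put(name, data.clone());
    }

    int objectCount(String bucket) {
        Map<String, byte[]> objects = buckets.get(bucket);
        return objects == null ? 0 : objects.size();
    }

    /** Check-then-create first, upload second. */
    void upload(String bucket, String name, byte[] data) {
        if (!bucketExists(bucket)) {
            makeBucket(bucket);
        }
        putObject(bucket, name, data);
    }
}
```

Calling `putObject` directly on a missing bucket fails in this stand-in, while `upload` succeeds because it creates the bucket before writing.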

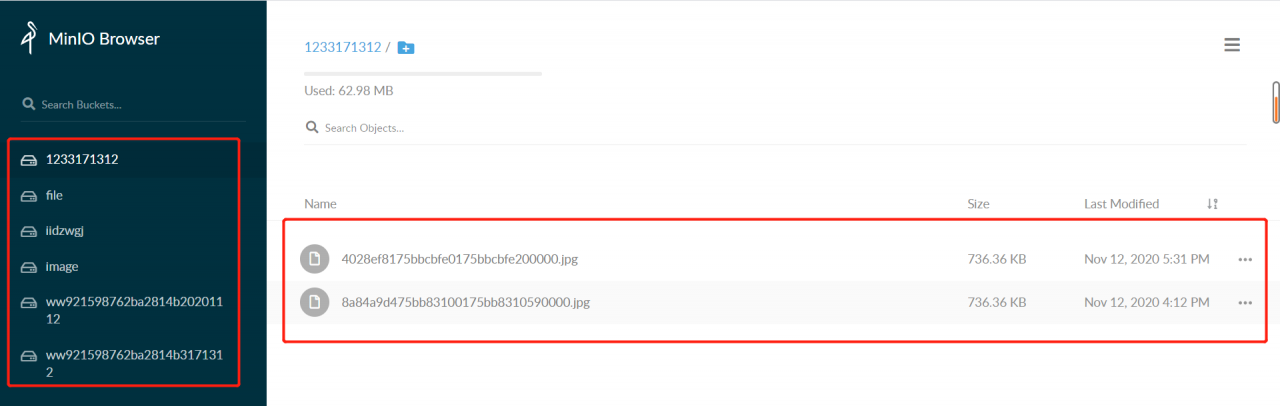

As the figure shows, the buckets on the MinIO server are listed on the left and the files in the selected bucket on the right. MinIO accepts any kind of file, including videos and documents, but this project does not use those features, so I will look at them later.

The next step is to write the upload endpoint in the project. Because it does not touch the database, I wrote the whole thing in the controller layer and did not call any service-layer method.

The controller method receives the file from the front end and prepares the arguments that the upload method in MinioUtils expects. That method takes five parameters: bucketName, objName, inputStream, lenght and contentType. They are the bucket name, the name under which the file is saved in the bucket, the file input stream, the length of the stream in bytes, and the file's content type. If the upload succeeds, a URL is returned. Open the URL in a browser to view the picture directly. If the picture is needed elsewhere in the project, store the URL in the database and read it from there later.

    public GlobalResponse uploadPic(MultipartFile file) {
        String bucket = "pic";
        String filename = file.getOriginalFilename();
        // Split the original name into base name and extension;
        // a name without a dot is kept whole instead of failing in substring().
        int dot = filename.lastIndexOf('.');
        String caselsh = dot < 0 ? filename : filename.substring(0, dot);
        String ext = dot < 0 ? "" : filename.substring(dot);
        // Prefix a timestamp so repeated uploads of the same file get distinct names.
        String objName = SystemHelper.now() + caselsh + ext;
        log.info("objName: {}", objName);
        String contentType = file.getContentType();
        // getSize() is the real size of the upload; InputStream.available() is only an estimate.
        Long lenght = file.getSize();
        String picUrl;
        try (InputStream inputStream = file.getInputStream()) {
            picUrl = minioUtils.uploadFile(bucket, objName, inputStream, lenght, contentType);
        } catch (IOException e) {
            // Surface the failure instead of passing a null stream to the upload.
            log.error("Failed to read uploaded file " + filename, e);
            throw new IllegalStateException("Failed to read uploaded file", e);
        }
        log.info("picUrl: {}", picUrl);
        return GlobalResponse.success(picUrl);
    }
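
The upload needs the exact byte length of the stream. `InputStream.available()` only estimates how many bytes can be read without blocking, and the base `InputStream` implementation simply returns 0, so taking the size from the upload itself (for a Spring `MultipartFile`, `getSize()`) is the safer source. The sketch below shows the gap with plain JDK classes. `StreamLengths` and its methods are hypothetical names for this illustration.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UncheckedIOException;

/** Hypothetical helper comparing available() with the real stream length. */
final class StreamLengths {
    private StreamLengths() {
    }

    /** Wraps bytes in a stream that keeps the default available(), which returns 0. */
    static InputStream plainStream(byte[] data) {
        ByteArrayInputStream source = new ByteArrayInputStream(data);
        return new InputStream() {
            @Override
            public int read() {
                return source.read();
            }
        };
    }

    /** Returns what the stream itself reports as available. */
    static int availableOf(InputStream in) {
        try {
            return in.available();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Counts the bytes by reading the stream to its end. */
    static long countBytes(InputStream in) {
        try {
            long total = 0;
            byte[] buffer = new byte[8192];
            int n;
            while ((n = in.read(buffer)) != -1) {
                total += n;
            }
            return total;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

For a five-byte payload wrapped by `plainStream`, `availableOf` reports 0 while `countBytes` reports 5, which is why the length passed to the upload should not come from `available()`.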

Note that file names in a bucket must not repeat! Must not repeat! Must not repeat!

Duplicate file names cause a problem. Suppose you upload a picture a.png and save it in the bucket under the name 111.png. Then MinIO domain name/bucket name/111.png opens a.png. If you later upload a picture B.png and save it under the same name 111.png, that same URL opens B.png instead. If the URL for a.png has already been stored in the database and is in use, you can imagine the consequences.
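
The effect can be reproduced with a map standing in for the bucket: writing under an existing name replaces what was there. This is only a simulation of the behavior described above. `OverwriteDemo` is a hypothetical class and does not talk to MinIO.

```java
import java.util.HashMap;
import java.util.Map;

/** Simulates a bucket as a map from object name to content. */
final class OverwriteDemo {
    private OverwriteDemo() {
    }

    /** Uploads each content under the same object name and returns what the name resolves to afterwards. */
    static String contentAfterUploads(String objectName, String... contents) {
        Map<String, String> bucket = new HashMap<>();
        for (String content : contents) {
            bucket.put(objectName, content); // same key: the previous content is replaced
        }
        return bucket.get(objectName);
    }
}
```

Here `OverwriteDemo.contentAfterUploads("111.png", "a.png", "B.png")` returns `"B.png"`: the name that used to open the first picture now opens the second.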

The solution used in the endpoint is therefore to name the object timestamp + original file name + suffix. The timestamp has 13 digits (millisecond precision), so even when a file with the same original name is uploaded again, the name saved in the bucket will not repeat unless both uploads fall within the same millisecond.
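
As a sketch of this naming scheme, the helper below builds timestamp + original file name + suffix from an explicit millisecond value. The second method is an addition of mine, not something the project does: it appends a random UUID so that two uploads of the same file in the same millisecond still get different names. `ObjectNames` and both method names are hypothetical.

```java
import java.util.UUID;

/** Hypothetical helper for building object names that do not collide. */
final class ObjectNames {
    private ObjectNames() {
    }

    /** timestamp + base name + extension; names without a dot are kept whole. */
    static String timestamped(long epochMillis, String originalFilename) {
        int dot = originalFilename.lastIndexOf('.');
        String base = dot < 0 ? originalFilename : originalFilename.substring(0, dot);
        String ext = dot < 0 ? "" : originalFilename.substring(dot);
        return epochMillis + base + ext;
    }

    /** Same scheme plus a random UUID before the extension (an extra safeguard, not in the original). */
    static String timestampedUnique(long epochMillis, String originalFilename) {
        int dot = originalFilename.lastIndexOf('.');
        String base = dot < 0 ? originalFilename : originalFilename.substring(0, dot);
        String ext = dot < 0 ? "" : originalFilename.substring(dot);
        return epochMillis + base + "-" + UUID.randomUUID() + ext;
    }
}
```

With a timestamp of 1610000000000 and the file a.png, `timestamped` produces `1610000000000a.png`. In real use the timestamp would come from something like `System.currentTimeMillis()`.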

That’s all for this post. If you spot any mistakes, please point them out!