    Watching for file changes with StatReloader
    Exception in thread django-main-thread:
    Traceback (most recent call last):
      File "/home/delta/.local/lib/python3.6/site-packages/django/db/backends/mysql/base.py", line 15, in <module>
        import MySQLdb as Database
    ModuleNotFoundError: No module named 'MySQLdb'

    The above exception was the direct cause of the following exception:

    Traceback (most recent call last):
      File "/usr/lib/python3.6/threading.py", line 916, in _bootstrap_inner
        self.run()
      File "/usr/lib/python3.6/threading.py", line 864, in run
        self._target(*self._args, **self._kwargs)
      File "/home/delta/.local/lib/python3.6/site-packages/django/utils/autoreload.py", line 64, in wrapper
        fn(*args, **kwargs)
      File "/home/delta/.local/lib/python3.6/site-packages/django/core/management/commands/runserver.py", line 110, in inner_run
        autoreload.raise_last_exception()
      File "/home/delta/.local/lib/python3.6/site-packages/django/utils/autoreload.py", line 87, in raise_last_exception
        raise _exception[1]
      File "/home/delta/.local/lib/python3.6/site-packages/django/core/management/__init__.py", line 375, in execute
        autoreload.check_errors(django.setup)()
      File "/home/delta/.local/lib/python3.6/site-packages/django/utils/autoreload.py", line 64, in wrapper
        fn(*args, **kwargs)
      File "/home/delta/.local/lib/python3.6/site-packages/django/__init__.py", line 24, in setup
        apps.populate(settings.INSTALLED_APPS)
      File "/home/delta/.local/lib/python3.6/site-packages/django/apps/registry.py", line 114, in populate
        app_config.import_models()
      File "/home/delta/.local/lib/python3.6/site-packages/django/apps/config.py", line 301, in import_models
        self.models_module = import_module(models_module_name)
      File "/usr/lib/python3.6/importlib/__init__.py", line 126, in import_module
        return _bootstrap._gcd_import(name[level:], package, level)
      File "<frozen importlib._bootstrap>", line 994, in _gcd_import
      File "<frozen importlib._bootstrap>", line 971, in _find_and_load
      File "<frozen importlib._bootstrap>", line 955, in _find_and_load_unlocked
      File "<frozen importlib._bootstrap>", line 665, in _load_unlocked
      File "<frozen importlib._bootstrap_external>", line 678, in exec_module
      File "<frozen importlib._bootstrap>", line 219, in _call_with_frames_removed
      File "/home/delta/.local/lib/python3.6/site-packages/django/contrib/auth/models.py", line 3, in <module>
        from django.contrib.auth.base_user import AbstractBaseUser, BaseUserManager
      File "/home/delta/.local/lib/python3.6/site-packages/django/contrib/auth/base_user.py", line 48, in <module>
        class AbstractBaseUser(models.Model):
      File "/home/delta/.local/lib/python3.6/site-packages/django/db/models/base.py", line 122, in __new__
        new_class.add_to_class('_meta', Options(meta, app_label))
      File "/home/delta/.local/lib/python3.6/site-packages/django/db/models/base.py", line 326, in add_to_class
        value.contribute_to_class(cls, name)
      File "/home/delta/.local/lib/python3.6/site-packages/django/db/models/options.py", line 207, in contribute_to_class
        self.db_table = truncate_name(self.db_table, connection.ops.max_name_length())
      File "/home/delta/.local/lib/python3.6/site-packages/django/utils/connection.py", line 15, in __getattr__
        return getattr(self._connections[self._alias], item)
      File "/home/delta/.local/lib/python3.6/site-packages/django/utils/connection.py", line 62, in __getitem__
        conn = self.create_connection(alias)
      File "/home/delta/.local/lib/python3.6/site-packages/django/db/utils.py", line 204, in create_connection
        backend = load_backend(db['ENGINE'])
      File "/home/delta/.local/lib/python3.6/site-packages/django/db/utils.py", line 111, in load_backend
        return import_module('%s.base' % backend_name)
      File "/usr/lib/python3.6/importlib/__init__.py", line 126, in import_module
        return _bootstrap._gcd_import(name[level:], package, level)
      File "/home/delta/.local/lib/python3.6/site-packages/django/db/backends/mysql/base.py", line 20, in <module>
        ) from err
    django.core.exceptions.ImproperlyConfigured: Error loading MySQLdb module.
    Did you install mysqlclient?

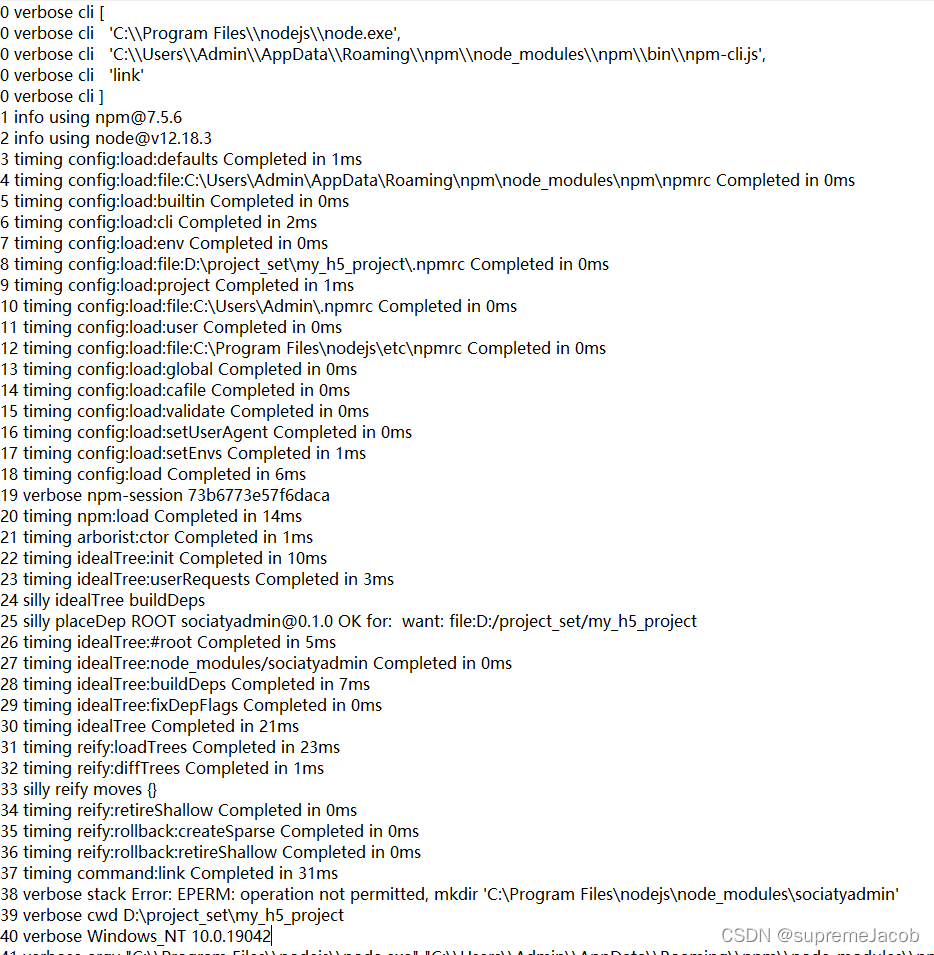

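One cause of this error when starting a Django project is that PyMySQL is not installed, or is installed but not configured. Django's MySQL backend imports the `MySQLdb` module, which normally comes from the mysqlclient package; PyMySQL can be used in its place, but only after it has been registered under that name.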
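To confirm which case you are in, a quick check like the one below can help. It is a minimal sketch, not part of Django: the function name `mysql_driver_status` is hypothetical, and it only asks Python's import machinery (`importlib.util.find_spec`) whether each module can be found, without importing or connecting to anything.

```python
import importlib.util


def mysql_driver_status():
    """Report which MySQL driver modules this interpreter can find.

    Django's MySQL backend runs `import MySQLdb as Database`, so the
    "MySQLdb" entry decides whether runserver can load the backend.
    """
    return {
        # Installed by the mysqlclient package (or aliased to PyMySQL via sys.modules)
        "MySQLdb": importlib.util.find_spec("MySQLdb") is not None,
        # Pure-Python driver that can stand in for MySQLdb once registered
        "pymysql": importlib.util.find_spec("pymysql") is not None,
    }


if __name__ == "__main__":
    status = mysql_driver_status()
    for name, found in status.items():
        print("{}: {}".format(name, "found" if found else "missing"))
    if not status["MySQLdb"] and not status["pymysql"]:
        print("Install mysqlclient, or install PyMySQL and register it as MySQLdb.")
```

Run it with the same interpreter that runs `manage.py` (here Python 3.6 with packages under `~/.local`), otherwise it may inspect a different set of installed packages.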

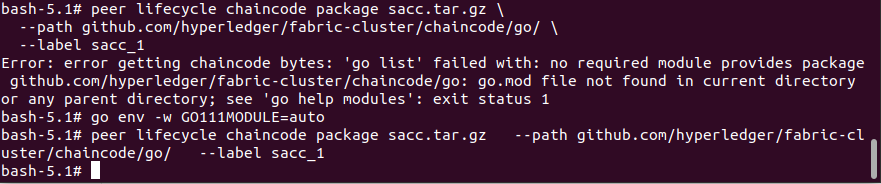

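If PyMySQL is not installed, install it into the environment the project runs in (for example with `pip install pymysql`). Then make sure the following lines run before Django loads its database backend; a common place for them is the `__init__.py` of the project package, next to `settings.py`: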

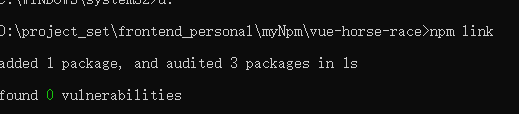

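    # Register PyMySQL under the module name "MySQLdb" so that
    # Django's MySQL backend picks it up when it imports MySQLdb
    import pymysql

    pymysql.install_as_MySQLdb()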
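Why do these two lines fix the error? Python's import system looks in `sys.modules` before searching the disk, and `pymysql.install_as_MySQLdb()` in essence places the `pymysql` module into `sys.modules` under the name `MySQLdb`. The sketch below reproduces that aliasing with a hypothetical stand-in module (`fake_pymysql`, aliased as `FakeMySQLdb`) so it runs without PyMySQL installed; `install_alias` is an illustrative helper, not PyMySQL's actual source.

```python
import sys
import types


def install_alias(real_name, alias):
    """Make `import <alias>` resolve to the already-imported module `real_name`.

    Simplified illustration of sys.modules aliasing; hypothetical helper,
    not taken from PyMySQL.
    """
    sys.modules[alias] = sys.modules[real_name]


# Hypothetical stand-in for pymysql so the sketch runs without it installed
fake_driver = types.ModuleType("fake_pymysql")
sys.modules["fake_pymysql"] = fake_driver

install_alias("fake_pymysql", "FakeMySQLdb")

# Same import shape as Django's backend (`import MySQLdb as Database`);
# it succeeds because the name is already present in sys.modules
import FakeMySQLdb as Database

print(Database is fake_driver)  # True
```

This is also why the registration has to happen before Django imports its MySQL backend: once `import MySQLdb` has already failed, registering the alias afterwards does not retry the import.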

Done!

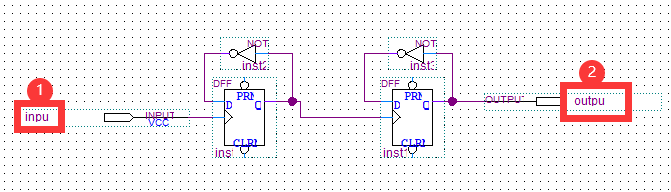

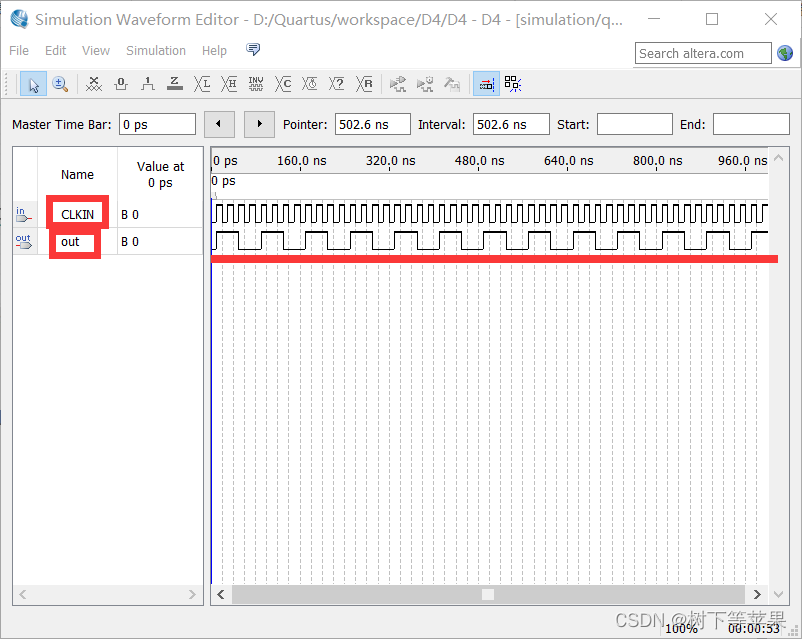

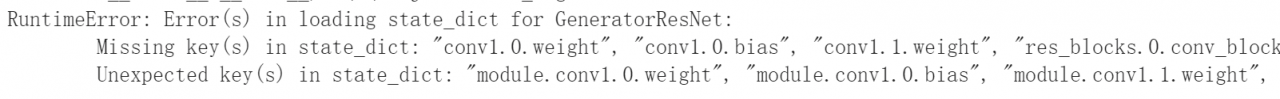

You can see that the key values in the model now include more modules.