1. Scenario

The production environment requires modifying a large number of JSON files by inserting an attribute into a specified node. A sample file looks like this:

    {
      "dataSources":{
        "test_dataSource_hod":{
          "spec":{
            "dataSchema":{
              "dataSource":"test_dataSource_hod",
              "parser":{
                "type":"string",
                "parseSpec":{
                  "timestampSpec":{
                    "column":"timestamp",
                    "format":"yyyy-MM-dd HH:mm:ss"
                  },
                  "dimensionsSpec":{
                    "dimensions":[
                      "method",
                      "key"
                    ]
                  },
                  "format":"json"
                }
              },
              "granularitySpec":{
                "type":"uniform",
                "segmentGranularity":"hour",
                "queryGranularity":"none"
              },
              "metricsSpec":[
                {
                  "name":"count",
                  "type":"count"
                },
                {
                  "name":"call_count",
                  "type":"longSum",
                  "fieldName":"call_count"
                },
                {
                  "name":"succ_count",
                  "type":"longSum",
                  "fieldName":"succ_count"
                },
                {
                  "name":"fail_count",
                  "type":"longSum",
                  "fieldName":"fail_count"
                }
              ]
            },
            "ioConfig":{
              "type":"realtime"
            },
            "tuningConfig":{
              "type":"realtime",
              "maxRowsInMemory":"100000",
              "intermediatePersistPeriod":"PT10M",
              "windowPeriod":"PT10M"
            }
          },
          "properties":{
            "task.partitions":"1",
            "task.replicants":"1",
            "topicPattern":"test_topic"
          }
        }
      },
      "properties":{
        "zookeeper.connect":"zookeeper.com:2015",
        "druid.discovery.curator.path":"/druid/discovery",
        "druid.selectors.indexing.serviceName":"druid/overlord",
        "commit.periodMillis":"12500",
        "consumer.numThreads":"1",
        "kafka.zookeeper.connect":"kafkaka.com:2181,kafka.com:2181,kafka.com:2181",
        "kafka.group.id":"test_dataSource_hod_dd"
      }
    }

The task is to add a "druidBeam.randomizeTaskId":"true" property to the top-level properties node (the one at the end of the file).
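The files are valid JSON, so one alternative is to parse each file with Python's standard json module and set the key directly on the top-level properties dict. This is not the approach the script in this post takes. The sketch below assumes each file fits in memory and has a top-level "properties" object. The function name add_randomize_task_id is hypothetical:

```python
import json


def add_randomize_task_id(text):
    """Return the JSON text with druidBeam.randomizeTaskId set in the
    top-level "properties" node (hypothetical helper, for illustration)."""
    config = json.loads(text)
    # Only the top-level node is touched, not dataSources.<name>.properties.
    # Dicts keep insertion order (Python 3.7+), so the new key ends up last.
    config["properties"]["druidBeam.randomizeTaskId"] = "true"
    # json.dumps rewrites the whole document, so the original layout is lost
    return json.dumps(config, indent=2)


sample = ('{"dataSources": {"x": {"properties": {"topicPattern": "t"}}}, '
          '"properties": {"kafka.group.id": "g"}}')
result = json.loads(add_randomize_task_id(sample))
print(result["properties"])
# {'kafka.group.id': 'g', 'druidBeam.randomizeTaskId': 'true'}
```

The trade-off is that json.dumps reformats every line of the file (indentation, spacing around colons). That may be unwelcome for hand-maintained config files. A plain string insertion leaves everything else byte-for-byte unchanged.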

2. Approach

The general idea is as follows:

- scan all the files that need to be changed
- locate the position in each file where the property should go
- insert the new text into the file at that position
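For the scanning step, the script later in this post calls os.listdir on a single directory. If the files were spread across subdirectories, a recursive scan with pathlib would be one option. Here is a minimal sketch. The function name find_json_files and the recursive flag are hypothetical:

```python
import tempfile
from pathlib import Path


def find_json_files(directory, recursive=False):
    """Return a sorted list of .json files under directory (hypothetical helper)."""
    # "**" matches the directory itself and every subdirectory below it
    pattern = "**/*.json" if recursive else "*.json"
    # is_file() drops any directories whose names happen to end in .json
    return sorted(p for p in Path(directory).glob(pattern) if p.is_file())


# Usage on a throwaway directory tree:
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "a.json").write_text("{}")
    (Path(tmp) / "notes.txt").write_text("x")
    (Path(tmp) / "sub").mkdir()
    (Path(tmp) / "sub" / "b.json").write_text("{}")
    top_level = [p.name for p in find_json_files(tmp)]
    everything = [p.name for p in find_json_files(tmp, recursive=True)]
```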

The part I found a little tricky is locating the insertion point. We know that the entry "druid.selectors.indexing.serviceName":"druid/overlord" is always present in that node. So as long as I can find this string, I can insert the new property right after it.
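The locating step can be made stricter than a blind replace. The sketch below first checks that the anchor appears exactly once, so an unexpected file is skipped instead of being changed in several places. It assumes the anchor is written with no spaces around the colon, as in the sample file. The function name insert_after_anchor, and returning None for skipped content, are illustrative choices:

```python
import json

ANCHOR = '"druid.selectors.indexing.serviceName":"druid/overlord"'
NEW_PROPERTY = '"druidBeam.randomizeTaskId":"true"'


def insert_after_anchor(content, anchor=ANCHOR, new_property=NEW_PROPERTY):
    """Insert new_property right after anchor (hypothetical helper).

    Returns the new text, or None when the anchor is missing or ambiguous.
    """
    if content.count(anchor) != 1:
        return None
    pos = content.index(anchor) + len(anchor)
    # Writing "," + new property directly after the anchor keeps the JSON
    # valid whether or not the anchor was already followed by a comma.
    return content[:pos] + ",\n" + new_property + content[pos:]


sample = ('{"properties":{\n'
          '"druid.selectors.indexing.serviceName":"druid/overlord",\n'
          '"commit.periodMillis":"12500"\n'
          '}}')
updated = insert_after_anchor(sample)
json.loads(updated)  # raises ValueError if the result were not valid JSON
```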

OK, with the idea in place, let's write the code.

    #!/usr/bin/python
    # coding:utf-8

    import os

    old_string = '"druid/overlord"'
    # No trailing comma: the comma that already follows "druid/overlord"
    # in the file ends up after the new property (a trailing comma here
    # would produce ",," and break the JSON).
    new_string = ('"druid/overlord",' +
                  '\n ' +
                  '"druidBeam.randomizeTaskId":"true"')


    def insertrandomproperty(file_name):
        # only handle .json files
        if file_name.endswith('.json'):
            with open(file_name, 'r') as oldfile:
                content = oldfile.read()
            checkandinsert(content, file_name)


    def checkandinsert(content, file_name):
        # skip files that already contain the property
        if 'druidBeam.randomizeTaskId' not in content:
            # to avoid ^M appearing in the new file because of different OSes,
            # we replace \r with ''
            new_content = content.replace(old_string, new_string).replace('\r', '')
            with open(file_name, 'w') as newfile:
                newfile.write(new_content)


    if __name__ == '__main__':
        conf_dir = '/home/tranquility/conf/service_bak'
        os.chdir(conf_dir)
        for file_name in os.listdir(conf_dir):
            insertrandomproperty(file_name)

The script simply reads each file into memory, updates the content there, and writes it back to the file. The code is only a rough sketch of the idea and can be modified and optimized as needed.
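One possible optimization has two parts. First, check that the modified text still parses as JSON before overwriting anything. Second, write through a temporary file, so that a crash mid-write cannot leave a truncated config behind. The sketch below uses only the standard library (json.loads, tempfile.NamedTemporaryFile, shutil.copymode, os.replace). os.replace is atomic on POSIX when both paths are on the same filesystem. The function name update_file is hypothetical. It keeps the same string replacement as the script above:

```python
import json
import os
import shutil
import tempfile

OLD = '"druid/overlord"'
# No trailing comma: the comma already following "druid/overlord" is reused.
NEW = '"druid/overlord",\n    "druidBeam.randomizeTaskId":"true"'


def update_file(path):
    """Add the property to one file; return True if the file was rewritten."""
    with open(path, "r") as f:
        content = f.read()
    if "druidBeam.randomizeTaskId" in content or OLD not in content:
        return False  # already updated, or not a file we know how to change
    new_content = content.replace(OLD, NEW).replace("\r", "")
    json.loads(new_content)  # raises ValueError before anything is overwritten
    # Write a temp file in the same directory, then swap it in with
    # os.replace (atomic on POSIX within one filesystem).
    directory = os.path.dirname(os.path.abspath(path))
    with tempfile.NamedTemporaryFile("w", dir=directory, delete=False) as tmp:
        tmp.write(new_content)
    shutil.copymode(path, tmp.name)  # keep the original file permissions
    os.replace(tmp.name, path)
    return True


# Usage on a throwaway file:
with tempfile.TemporaryDirectory() as d:
    path = os.path.join(d, "sample.json")
    with open(path, "w") as f:
        f.write('{"properties":{\n'
                '    "druid.selectors.indexing.serviceName":"druid/overlord",\n'
                '    "commit.periodMillis":"12500"\n'
                '}}')
    first = update_file(path)   # True: property added
    second = update_file(path)  # False: already present
    with open(path) as f:
        props = json.load(f)["properties"]
```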

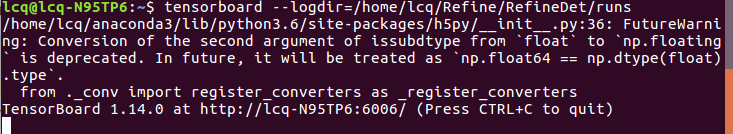

At this point, you can click the URL to open it over HTTP. But when I tried it, I found that it didn't work.
