[error 1]:

    java.lang.RuntimeException: HMaster Aborted
        at org.apache.hadoop.hbase.master.HMasterCommandLine.startMaster(HMasterCommandLine.java:261)
        at org.apache.hadoop.hbase.master.HMasterCommandLine.run(HMasterCommandLine.java:149)
        at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:76)
        at org.apache.hadoop.hbase.util.ServerCommandLine.doMain(ServerCommandLine.java:149)
        at org.apache.hadoop.hbase.master.HMaster.main(HMaster.java:2971)

    2021-08-26 12:25:35,269 INFO [main-EventThread] zookeeper.ClientCnxn: EventThread shut down for session: 0x37b80a4f6560008

[attempt 1]: Delete the HBase znode (/hbase by default) in ZooKeeper.

This did not solve the problem.

[attempt 2]: Reinstall HBase.

This did not solve the problem either.

[attempt 3]: Take HDFS out of safe mode:

hdfs dfsadmin -safemode leave

Still not solved.

[attempt 4]: Check ZooKeeper. I could connect to it normally and create new znodes, so ZooKeeper itself was fine.

Scrolling further up in the log revealed another error:

[error 2]:

    master.HMaster: Failed to become active master
    org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.ipc.StandbyException): Operation category READ is not supported in state standby. Visit https://s.apache.org/sbnn-error
        at org.apache.hadoop.hdfs.server.namenode.ha.StandbyState.checkOperation(StandbyState.java:108)
        at org.apache.hadoop.hdfs.server.namenode.NameNode$NameNodeHAContext.checkOperation(NameNode.java:2044)
        at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkOperation(FSNamesystem.java:1409)
        at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getFileInfo(FSNamesystem.java:2961)
        at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.getFileInfo(NameNodeRpcServer.java:1160)
        at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.getFileInfo(ClientNamenodeProtocolServerSideTranslatorPB.java:880)
        at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)
        at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:507)
        at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:1034)
        at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:1003)
        at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:931)
        at java.security.AccessController.doPrivileged(Native Method)
        at javax.security.auth.Subject.doAs(Subject.java:422)
        at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1926)
        at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2854)

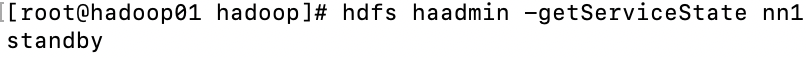

Here's the key point: the log says the master failed to become the active HMaster, and the reason is "Operation category READ is not supported in state standby". In other words, HBase's read request to HDFS was rejected because the NameNode it reached was in standby state, and a standby NameNode does not serve client reads. So check the state of nn1:

hdfs haadmin -getServiceState nn1

Sure enough, it returned standby.
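HBase only needs one active NameNode, so it is worth checking every NameNode rather than nn1 alone. The following is a minimal sketch, assuming two NameNodes with the service IDs nn1 and nn2 (nn2 is an assumption; the real IDs are listed under dfs.ha.namenodes.<nameservice> in hdfs-site.xml). The function names are illustrative, not part of Hadoop:

```shell
#!/bin/sh
# Print the HA state of every NameNode so you can see whether any is active.
# NN_IDS is an assumed default; override it with your own service IDs.
NN_IDS="${NN_IDS:-nn1 nn2}"

report_nn_states() {
  for id in $NN_IDS; do
    # -getServiceState prints "active" or "standby" for one service ID
    state=$(hdfs haadmin -getServiceState "$id" 2>/dev/null) || state="unreachable"
    echo "$id: $state"
  done
}

# Exit status 0 if at least one NameNode reports "active", 1 otherwise
has_active_nn() {
  report_nn_states | grep -q ': active$'
}
```

If every line says standby, nothing can serve HBase's reads, which is exactly the situation behind the second error.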

[solution 1]:

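Manually switch nn1 to active. When automatic failover is enabled, haadmin refuses a manual transition unless you pass --forcemanual, and it warns that doing so can cause a split-brain if another NameNode is already active, so make sure no NameNode is active first: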

hdfs haadmin -transitionToActive --forcemanual nn1

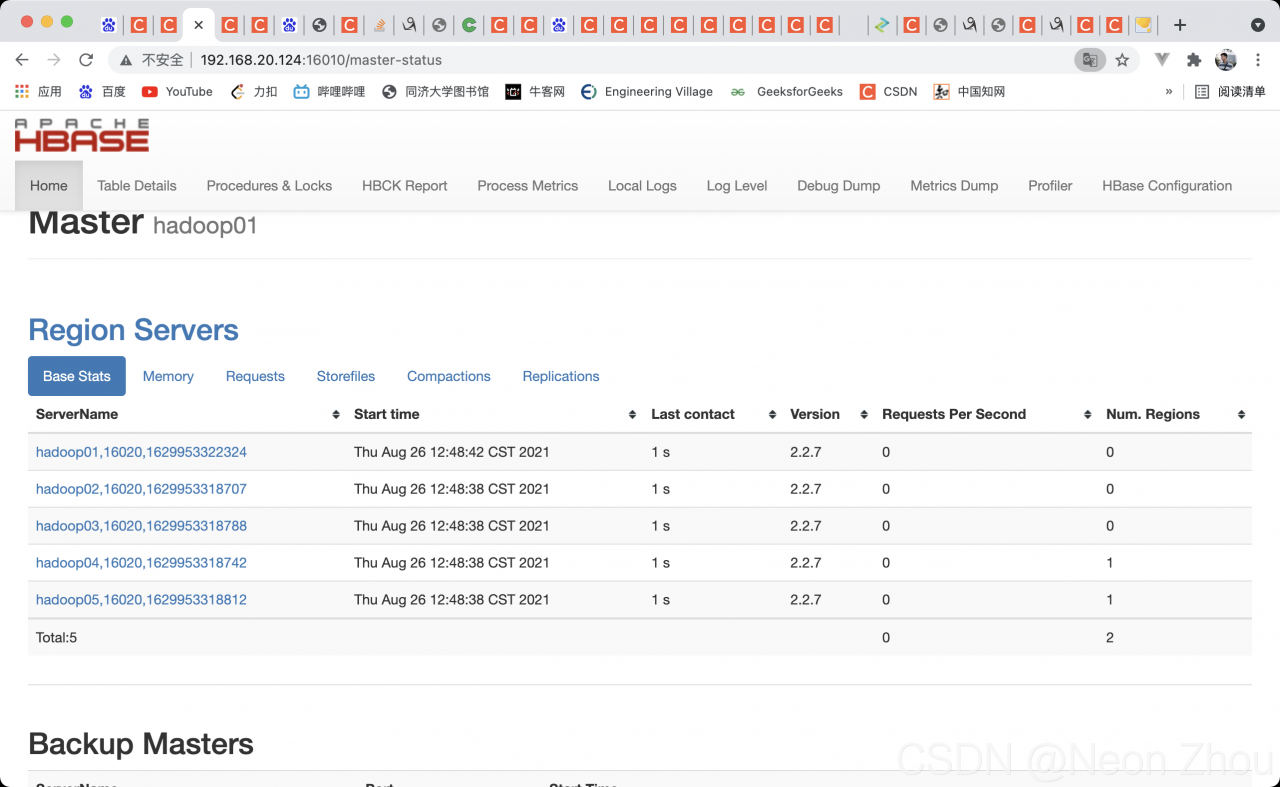

Kill all HBase processes, restart HBase, use jps to confirm that the HMaster process is running, and then open the HBase web UI port in a browser.
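The kill-and-restart step can be scripted roughly as follows. This sketch only acts on the local host, assumes the JDK's jps and HBase's start-hbase.sh are on PATH, and uses made-up function names for illustration:

```shell
#!/bin/sh
# Helpers for the "kill, restart, check with jps" step (local host only).

# PIDs of HBase daemons on this host (jps prints "<pid> <MainClass>")
hbase_pids() {
  jps | awk '$2 == "HMaster" || $2 == "HRegionServer" {print $1}'
}

# Exit status 0 if an HMaster JVM is running on this host
hmaster_running() {
  jps | awk '{print $2}' | grep -qx 'HMaster'
}

restart_hbase() {
  # Kill any leftover HBase daemons found by jps
  for pid in $(hbase_pids); do
    kill "$pid"
  done
  sleep 5
  start-hbase.sh
  # Give the master time to come up (or abort again) before checking
  sleep "${HBASE_START_WAIT:-15}"
  if hmaster_running; then
    echo "HMaster is running"
  else
    echo "HMaster is not running; check the master log under \$HBASE_HOME/logs" >&2
    return 1
  fi
}
```

Checking jps after a delay matters here because an HMaster that cannot reach an active NameNode starts and then aborts, so an immediate check can be misleading.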

Bravo!!!

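After a few hours of fiddling, it finally works. The root cause was that I had forgotten the correct startup order:

ZooKeeper -> Hadoop -> HBase

I kept starting Hadoop first and wasted a lot of time. Most likely the reason is that, with automatic failover enabled, the ZKFC failover controllers need ZooKeeper to elect an active NameNode; if HDFS comes up before ZooKeeper, no election happens and both NameNodes stay in standby, which is exactly what HBase then runs into. I hope this helps.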
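The startup order above can be captured in a small script so it is not forgotten again. This is a sketch, not the author's script: the host names node1..node3, the service IDs nn1/nn2, and the assumption that zkServer.sh, start-dfs.sh and start-hbase.sh are on PATH (including for non-interactive ssh sessions) are all placeholders to adapt. The extra wait step starts HBase only after HDFS reports an active NameNode, which is the condition that failed here:

```shell
#!/bin/sh
# Start the stack in dependency order: ZooKeeper -> HDFS -> HBase.
# ZK_HOSTS and NN_IDS are hypothetical defaults; override them for your cluster.
ZK_HOSTS="${ZK_HOSTS:-node1 node2 node3}"
NN_IDS="${NN_IDS:-nn1 nn2}"

# Poll until some NameNode reports "active"; give up after $1 rounds,
# sleeping $2 seconds (default 5) between rounds.
wait_for_active_nn() {
  attempts=$1
  interval=${2:-5}
  i=0
  while [ "$i" -lt "$attempts" ]; do
    for id in $NN_IDS; do
      if [ "$(hdfs haadmin -getServiceState "$id" 2>/dev/null)" = "active" ]; then
        echo "$id is active"
        return 0
      fi
    done
    i=$((i + 1))
    sleep "$interval"
  done
  echo "no active NameNode after $attempts attempts" >&2
  return 1
}

start_cluster_in_order() {
  # 1. ZooKeeper first: the ZKFCs and HBase both depend on it
  for host in $ZK_HOSTS; do
    ssh "$host" 'zkServer.sh start'
  done
  # 2. HDFS (with HA configured, start-dfs.sh also starts JournalNodes and ZKFCs)
  start-dfs.sh
  # 3. HBase only once HDFS has an active NameNode to serve hbase.rootdir
  wait_for_active_nn 24 5 || return 1
  start-hbase.sh
}
```

Source the file and call start_cluster_in_order from the node that holds the Hadoop and HBase start scripts; if the wait times out, fix the NameNode state before starting HBase instead of letting the HMaster abort again.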
