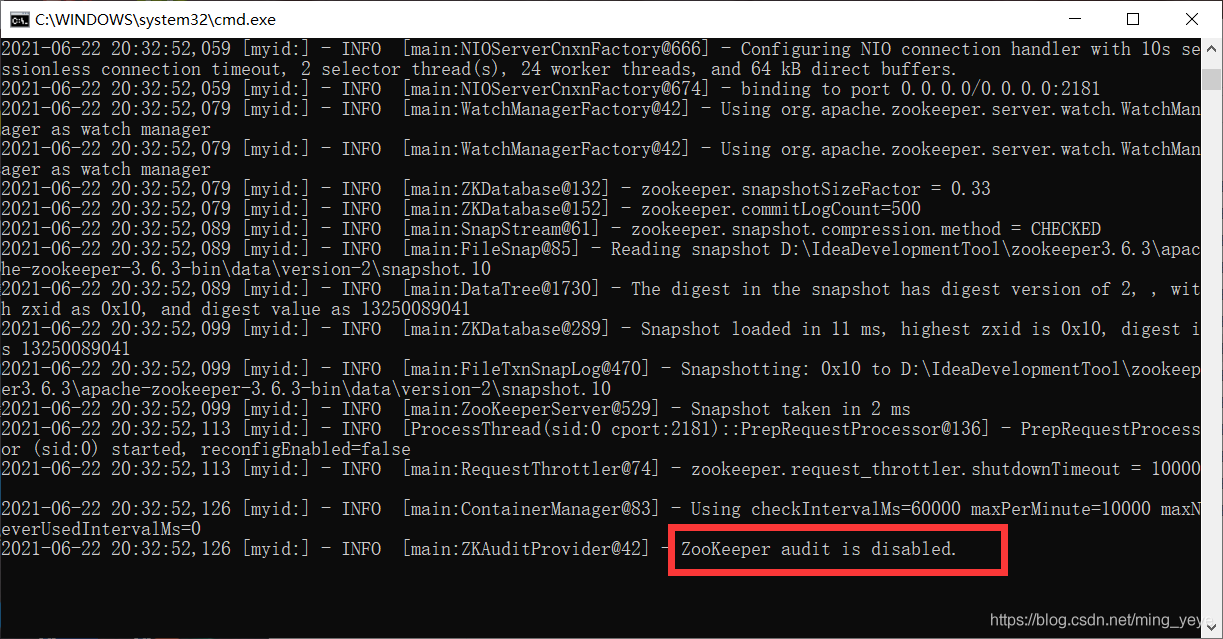

Starting ZooKeeper on Windows prints "ZooKeeper audit is disabled", and the startup appears to have failed, as follows:

Reason: I'm running ZooKeeper 3.6.3, and this seems to happen in versions 3.6 and later. These versions add an audit log that is turned off by default, so the message is printed at startup.

Solution:

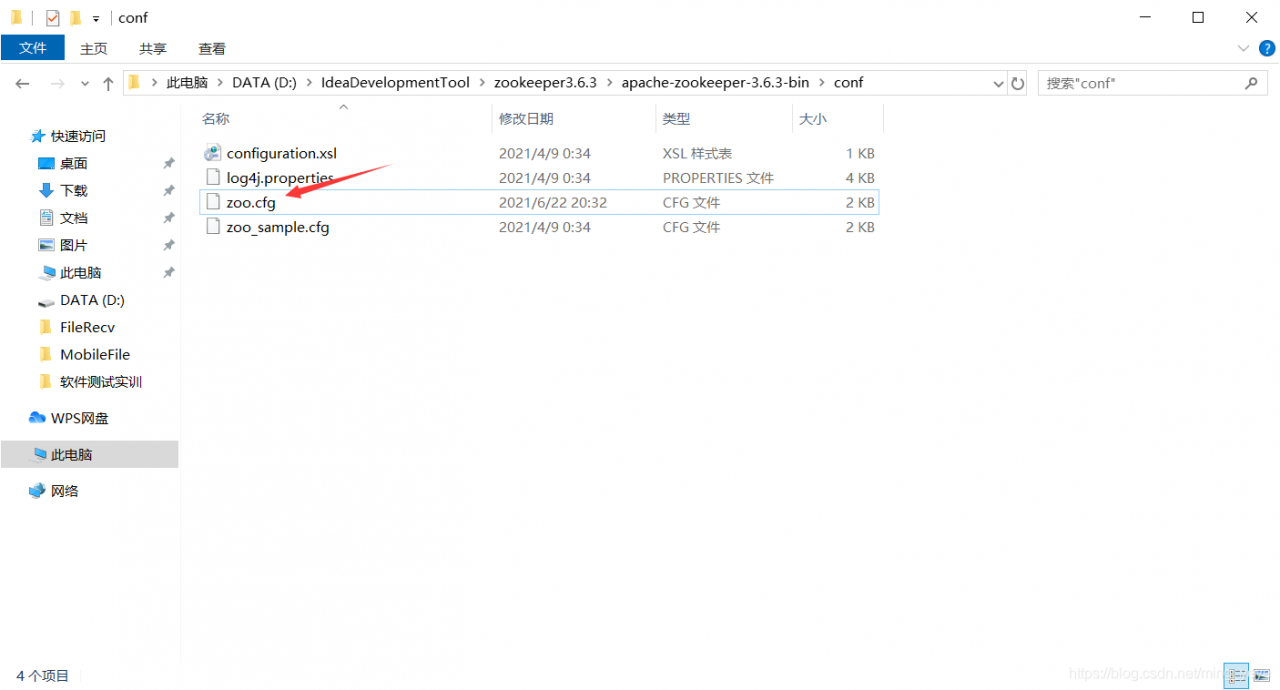

1. In ZooKeeper's conf directory, open the file zoo.cfg:

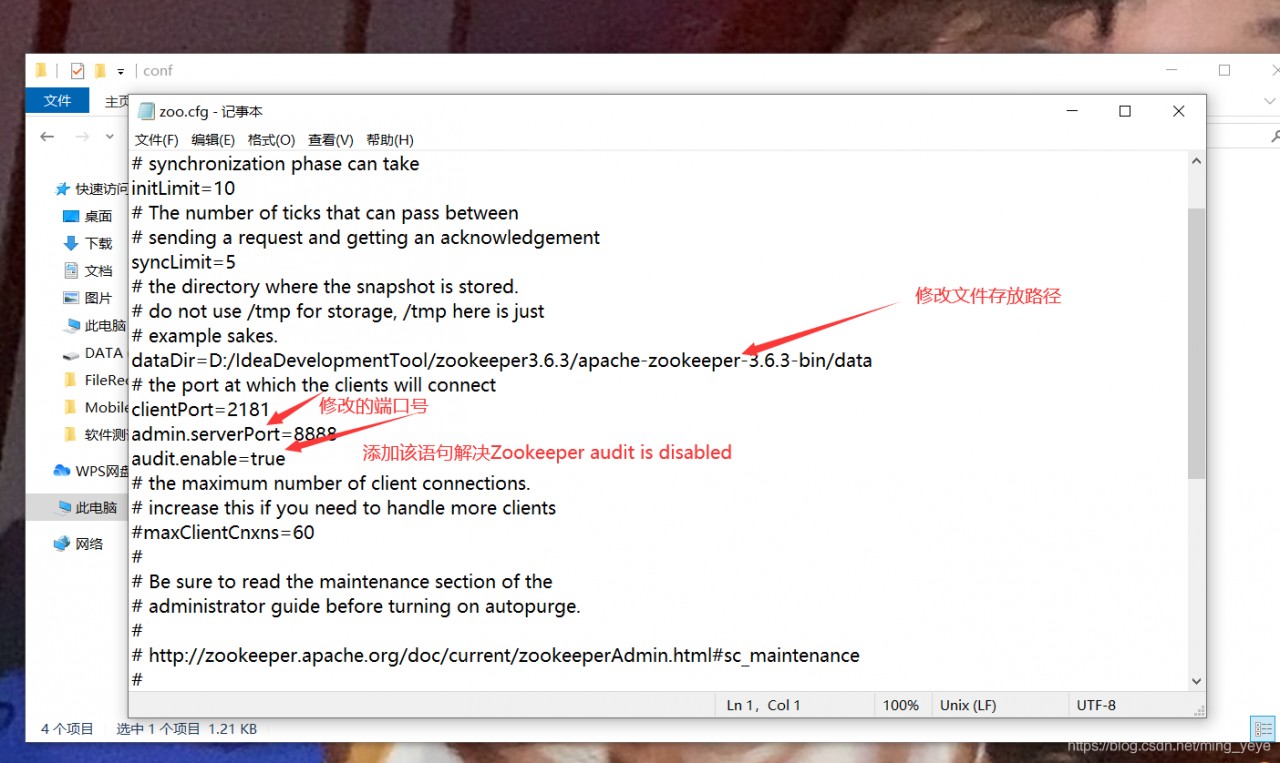

Note: zoo.cfg is a copy of zoo_sample.cfg in which the data storage path and the port number used when starting ZooKeeper have been modified.

2. Modify the zoo.cfg file by adding audit.enable=true

3. Save and restart ZooKeeper (double-click zkServer.cmd in the bin directory). It starts successfully!

Hope this helps!

Nacos failed to start [How to Solve]


- Modify conf/application.properties to configure the corresponding database information:

#*************** Config Module Related Configurations ***************#

### If use MySQL as datasource:

spring.datasource.platform=mysql

### Count of DB:

db.num=1

### Connect URL of DB:

db.url.0=jdbc:mysql://127.0.0.1:3306/nacos?characterEncoding=utf8&connectTimeout=1000&socketTimeout=3000&autoReconnect=true&useUnicode=true&useSSL=false&serverTimezone=UTC

db.user=root

db.password=123456

- Create the corresponding database nacos and execute conf/nacos-mysql.sql in it.

- For single-node startup, run startup.cmd -m standalone. To make single-node mode the default, modify bin/startup.cmd so that line 26 reads as follows:

rem set MODE="cluster"

set MODE="standalone"

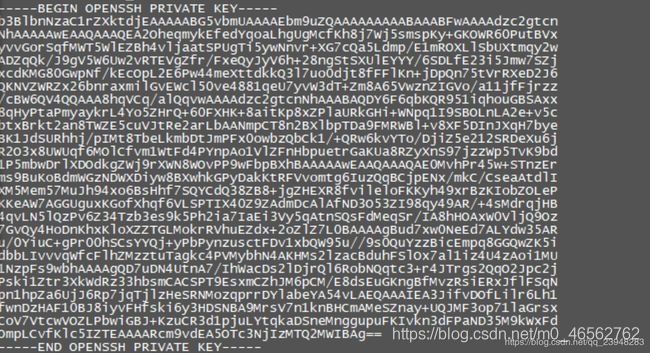

git@gitlab invalid privatekey

Pulling code over SSH with JGit fails with: org.eclipse.jgit.errors.TransportException: git@gitlab invalid privatekey

When pulling code over SSH, we ran into an invalid private key error:


Caused by: org.eclipse.jgit.errors.TransportException: [email protected]:xxx.git: invalid privatekey: [B@2fc74d70

at org.eclipse.jgit.transport.JschConfigSessionFactory.getSession(JschConfigSessionFactory.java:158)

at org.eclipse.jgit.transport.SshTransport.getSession(SshTransport.java:107)

at org.eclipse.jgit.transport.TransportGitSsh$SshFetchConnection.<init>(TransportGitSsh.java:247)

at org.eclipse.jgit.transport.TransportGitSsh.openFetch(TransportGitSsh.java:137)

at org.eclipse.jgit.transport.FetchProcess.executeImp(FetchProcess.java:105)

at org.eclipse.jgit.transport.FetchProcess.execute(FetchProcess.java:91)

at org.eclipse.jgit.transport.Transport.fetch(Transport.java:1260)

at org.eclipse.jgit.api.FetchCommand.call(FetchCommand.java:211)

... 60 common frames omitted

Caused by: com.jcraft.jsch.JSchException: invalid privatekey: [B@2fc74d70

at com.jcraft.jsch.KeyPair.load(KeyPair.java:664)

at com.jcraft.jsch.KeyPair.load(KeyPair.java:561)

at com.jcraft.jsch.IdentityFile.newInstance(IdentityFile.java:40)

at com.jcraft.jsch.JSch.addIdentity(JSch.java:406)

at com.dmall.autotestcenter.service.impl.GitServiceImpl$1.createDefaultJSch(GitServiceImpl.java:81)

at org.eclipse.jgit.transport.JschConfigSessionFactory.getJSch(JschConfigSessionFactory.java:350)

at org.eclipse.jgit.transport.JschConfigSessionFactory.createSession(JschConfigSessionFactory.java:308)

at org.eclipse.jgit.transport.JschConfigSessionFactory.createSession(JschConfigSessionFactory.java:175)

at org.eclipse.jgit.transport.JschConfigSessionFactory.getSession(JschConfigSessionFactory.java:105)

... 67 common frames omitted

The cause is that the key was generated with a recent version of OpenSSH, which writes the private key in a newer format that the JSch library in the stack trace cannot parse.

Solution:

ssh-keygen -m PEM -t rsa regenerates the key in the old format. The -m parameter specifies the key format, and PEM (that is, the traditional RSA format) is the format that was used before.
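Before regenerating a key, it can help to confirm which format the existing one is in. The sketch below is illustrative only: it assumes the two formats can be told apart by the first line of the key file, and the function and constant names are made up for this example.

```python
# Header lines that mark the two private-key container formats.
OPENSSH_HEADER = "-----BEGIN OPENSSH PRIVATE KEY-----"
PEM_RSA_HEADER = "-----BEGIN RSA PRIVATE KEY-----"


def private_key_format(key_text: str) -> str:
    """Classify a private key by its first non-empty line."""
    lines = [line.strip() for line in key_text.splitlines() if line.strip()]
    first_line = lines[0] if lines else ""
    if first_line == OPENSSH_HEADER:
        return "openssh"  # newer format, the likely cause of "invalid privatekey"
    if first_line == PEM_RSA_HEADER:
        return "pem-rsa"  # old format written by `ssh-keygen -m PEM -t rsa`
    return "unknown"
```

Calling private_key_format() on the contents of the key file that the application loads should return "pem-rsa" once the key has been regenerated with the command above.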

UnicodeEncodeError encountered in Python 3

Ran a business script locally today: perfect!

Then deployed the script to the server and ran it: perfect!

Added it to crontab as a scheduled task: error!

UnicodeEncodeError: 'ascii' codec can't encode characters in position 57-60: ordinal not in range(128)

I then tried all kinds of methods from various blog posts, and none of them solved it.

After a lot of trial and error, I had no choice but to use a crude method: put the command that runs the script into a shell script and add the following two lines in front of it. Now it runs normally! It's a solution anyway.

export LANG="en_US.UTF-8"

export PYTHONIOENCODING=utf-8
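A likely explanation, which is an assumption since the post does not state it, is that cron runs the script without a UTF-8 locale, so Python chooses ASCII for standard output. The sketch below reproduces the effect with an in-memory stream instead of the real stdout; write_text and sample are names invented for the example.

```python
import io


def write_text(text: str, encoding: str) -> bytes:
    """Write text through a stream with the given encoding, as print() does with stdout."""
    raw = io.BytesIO()
    stream = io.TextIOWrapper(raw, encoding=encoding)
    stream.write(text)
    stream.flush()
    return raw.getvalue()


sample = "status: \u6210\u529f"  # contains two non-ASCII characters

try:
    write_text(sample, "ascii")
    ascii_ok = True
except UnicodeEncodeError:
    ascii_ok = False  # same error class as in the crontab run

utf8_bytes = write_text(sample, "utf-8")  # succeeds with a UTF-8 stream
```

On Python 3.7 and later, calling sys.stdout.reconfigure(encoding="utf-8") at the top of the script is another way to get a UTF-8 stdout without the wrapper shell script.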

The back end cannot receive the parameters passed by the front end

Error description

The front-end sending code is as follows

login() {

let {userCode, userPwd} = this.ruleForm;

// this.$axios.post('/UserInfoService/login',

// this.$qs.stringify({

// account: userCode,

// password: userPwd

// })).then(res => {

// console.log(res);

// })

this.$axios({

method: 'post',

url: '/UserInfoService/login',

data: {

account: userCode,

password: userPwd

}

}).then(res => {

console.log(res);

})

},

The content of the request body is as follows

{account: "111", password: "222"}

account: "111"

password: "222"

Whether the back end receives the attributes as separate parameters or as a UserInfo entity, the values it gets are null.

Cause of error

The cause is that Axios sends data as JSON by default, not as form data. The back end therefore receives a JSON request body, which it cannot bind directly to separate parameters or to a plain entity parameter. When the result is returned, the data sent by the front end is passed back to the front end.

Solution

There are two solutions, as follows

The first is to change the format of the data sent by the front end to form data:

axios.defaults.headers.post['Content-Type'] = 'application/x-www-form-urlencoded';

This method is not recommended, however, because communication between the front end and the back end normally uses JSON. The second method is to receive the parameters from the front end with an entity class and annotate that parameter with @RequestBody.
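To make the difference between the two body formats concrete, here is a small sketch using only the Python standard library. It is not the back end's actual binding code: parse_qs merely stands in for a form-parameter parser, and the payload mirrors the request body shown earlier.

```python
import json
from urllib.parse import parse_qs, urlencode

payload = {"account": "111", "password": "222"}

# Body sent for Content-Type: application/json (the Axios default for objects).
json_body = json.dumps(payload)

# Body sent for Content-Type: application/x-www-form-urlencoded.
form_body = urlencode(payload)

# A form parser finds named fields only in the form-encoded body.
fields_from_form = parse_qs(form_body)
fields_from_json = parse_qs(json_body)
```

Here fields_from_form contains both account and password, while fields_from_json is empty, which matches the null values seen when the back end treats a JSON body as form parameters.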

OpenCV: undefined identifier CV_CAP_PROP_FRAME_COUNT

This happens with newer versions of OpenCV, where the constant was renamed. It took me a long time to track down, and it appeared because I had changed my OpenCV version.

It used to be CV_CAP_PROP_FRAME_COUNT

Now the CV_ prefix is gone:

cv::CAP_PROP_FRAME_COUNT

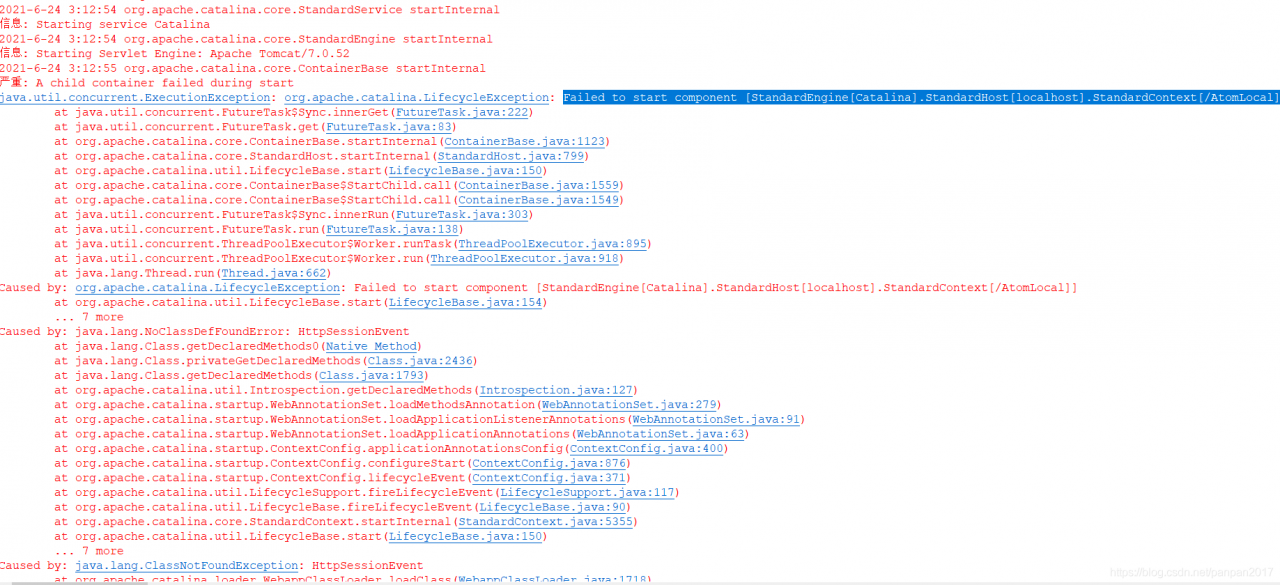

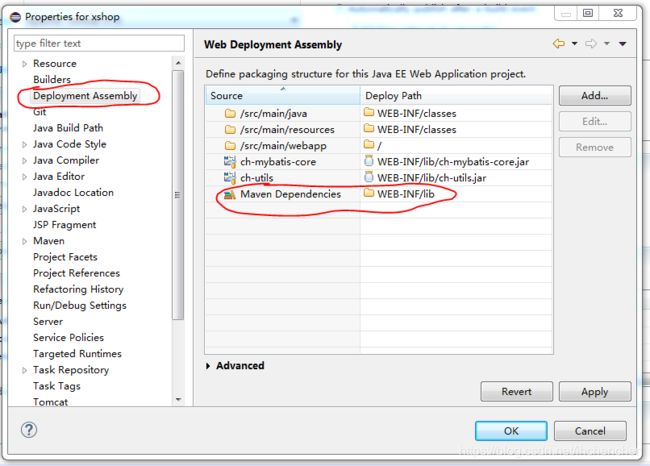

[Solved] Tomcat7 Start Error: Failed to start component [StandardEngine[Catalina].StandardHost[localhost].StandardCon

While converting a project in Eclipse, starting Tomcat 7 always failed with:

Failed to start component [StandardEngine[Catalina].StandardHost[localhost].StandardContext[/AtomLocal]]

Solution:

Right-click the project, open Properties, find Deployment Assembly, click Add, choose Java Build Path Entries, and add Maven Dependencies.

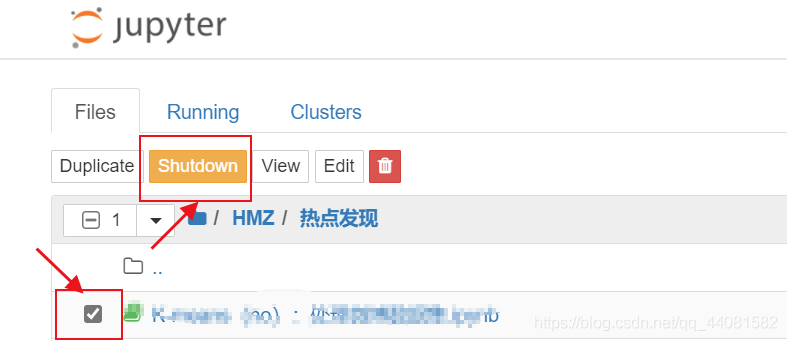

Jupyter does not respond to Interrupt Kernel

Sometimes, when a program is computation-heavy or stuck in an infinite loop, clicking the interrupt button has no effect. There are three solutions:

1. Press Ctrl + C to exit Jupyter

2. Select the notebook and click Shutdown

3. Click Restart the Kernel (recommended)

Some problems in the development of HBase MapReduce

For a recent course project, the main workflow was to collect data from CSV files, store it in HBase, and then use MapReduce for statistical analysis. Along the way I ran into some problems, which were eventually solved after a lot of searching. The problems and their solutions are recorded here.

1. HBase HMaster shuts down automatically

Open the ZooKeeper CLI, delete the HBase data (use with caution), and restart HBase

./zkCli.sh

rmr /hbase

stop-hbase.sh

start-hbase.sh

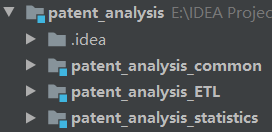

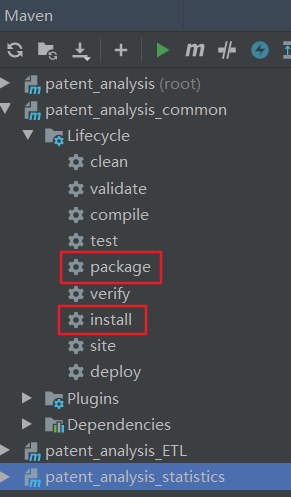

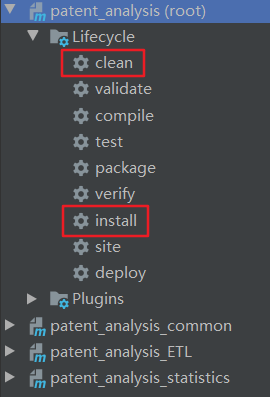

2. Dealing with multi module dependency when packaging with Maven

The project structure is shown in the figure below

The ETL and statistics modules both depend on the common module. When either is packaged on its own, Maven reports that the common dependency cannot be found and packaging fails.

Solution steps:

1. Run Maven package and Maven install on common. I did this directly from the Maven panel on the right side of IDEA.

2. Run the Maven clean and Maven install commands on the outermost parent project (root)

After completing these two steps, the problem can be solved.

3. When Chinese text is stored in HBase, it is displayed in a form similar to "\xE5\x8F\x91\xE6\x98\x8E"

This is the classic Chinese encoding problem. It can be solved by passing the value through the following method before using it.

public static String decodeUTF8Str(String xStr) throws UnsupportedEncodingException {

return URLDecoder.decode(xStr.replaceAll("\\\\x", "%"), "utf-8");

}
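For checking values outside the Java project, the same idea can be sketched in Python: replace each literal \x with % and percent-decode the result as UTF-8. The function name below simply mirrors the Java method and is not part of any library. One difference to be aware of is that Java's URLDecoder also turns + into a space, while unquote does not.

```python
from urllib.parse import unquote


def decode_utf8_str(x_str: str) -> str:
    """Turn text such as \\xE5\\x8F\\x91 into the characters those UTF-8 bytes encode."""
    # Rewrite each literal "\x" as "%", then percent-decode the result as UTF-8.
    return unquote(x_str.replace("\\x", "%"), encoding="utf-8")


escaped = r"\xE5\x8F\x91\xE6\x98\x8E"
decoded = decode_utf8_str(escaped)
```

For the sample string from this section, decoded is the two-character Chinese word whose UTF-8 bytes are E5 8F 91 and E6 98 8E.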

4. Error in job submission of MapReduce

I wrote the code locally, packaged it into a jar, and ran it on the server. The error is as follows:

Exception in thread "main" java.lang.NoSuchMethodError: org.apache.hadoop.mapreduce.Job.getArchiveSharedCacheUploadPolicies(Lorg/apache/hadoop/conf/Configuration;)Ljava/util/Map;

at org.apache.hadoop.mapreduce.v2.util.MRApps.setupDistributedCache(MRApps.java:491)

at org.apache.hadoop.mapred.LocalDistributedCacheManager.setup(LocalDistributedCacheManager.java:92)

at org.apache.hadoop.mapred.LocalJobRunner$Job.<init>(LocalJobRunner.java:172)

at org.apache.hadoop.mapred.LocalJobRunner.submitJob(LocalJobRunner.java:788)

at org.apache.hadoop.mapreduce.JobSubmitter.submitJobInternal(JobSubmitter.java:240)

at org.apache.hadoop.mapreduce.Job$10.run(Job.java:1290)

at org.apache.hadoop.mapreduce.Job$10.run(Job.java:1287)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1762)

at org.apache.hadoop.mapreduce.Job.submit(Job.java:1287)

at org.apache.hadoop.mapreduce.Job.waitForCompletion(Job.java:1308)

at MapReduce.main(MapReduce.java:49)

Solution: add dependencies

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-mapreduce-client-core</artifactId>

<version>3.1.3</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-mapreduce-client-common</artifactId>

<version>3.1.3</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>3.1.3</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-mapreduce-client-jobclient</artifactId>

<version>3.1.3</version>

<scope>provided</scope>

</dependency>

Of these:

hadoop-mapreduce-client-core.jar supports running on a cluster

hadoop-mapreduce-client-common.jar supports running locally

After solving the above problems, my code can run smoothly on the server.

Finally, note that the MapReduce output path must not already exist; otherwise an error is reported.

I hope this article can help you with similar problems.

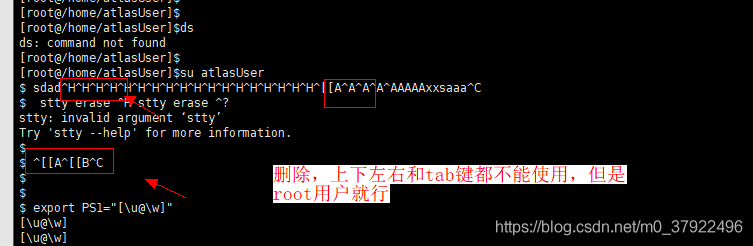

In a new user's shell, Backspace prints ^H, the up and down arrow keys print ^[[A and ^[[B, and the Tab key does nothing

Symptom

Cause

The shell interpreter used by the new user is sh, not bash

1. Switch the shell

Run chsh

2. Enter the path to bash

At the Login Shell [*] prompt, enter /bin/bash
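To confirm the change took effect, the login shell can be read from the user's passwd entry, where it is the seventh colon-separated field. The sketch below parses such a line; the two sample entries are hypothetical and only illustrate the before and after state.

```python
def login_shell(passwd_line: str) -> str:
    """Return the login shell, the seventh colon-separated field of a passwd entry."""
    fields = passwd_line.strip().split(":")
    if len(fields) != 7:
        raise ValueError("expected 7 colon-separated fields")
    return fields[6]


# Hypothetical entries for illustration; real ones live in /etc/passwd.
before = "newuser:x:1001:1001::/home/newuser:/bin/sh"
after = "newuser:x:1001:1001::/home/newuser:/bin/bash"
```

After chsh has been run, the user's entry should look like the second sample, and the new shell applies from the next login.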

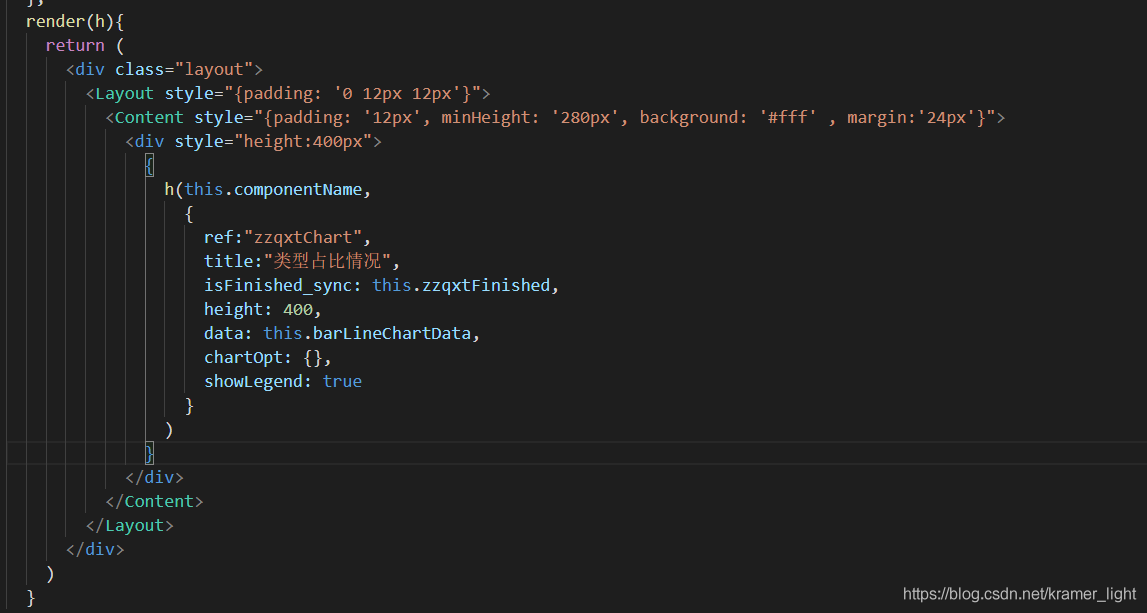

Unknown custom element: <component> – did you register the component correctly

This error occurs when creating dynamic components using JSX:

Unknown custom element: <component> - did you register the component correctly

The reason is that we take it for granted that in template syntax, dynamic components are created like this:

<component :is='dev-chart'></component>

so we assume the JSX equivalent should be:

<component is={'dev-chart'}></component>

But then the above error appears.

Therefore, the correct way to write it in JSX is to call createElement instead of using component is. That is the price of working closer to the underlying layer.

Source: https://blog.csdn.net/kw269937519/article/details/114080530

Maven project fails to download jar packages (Could not transfer artifact org.mybatis:mybatis)

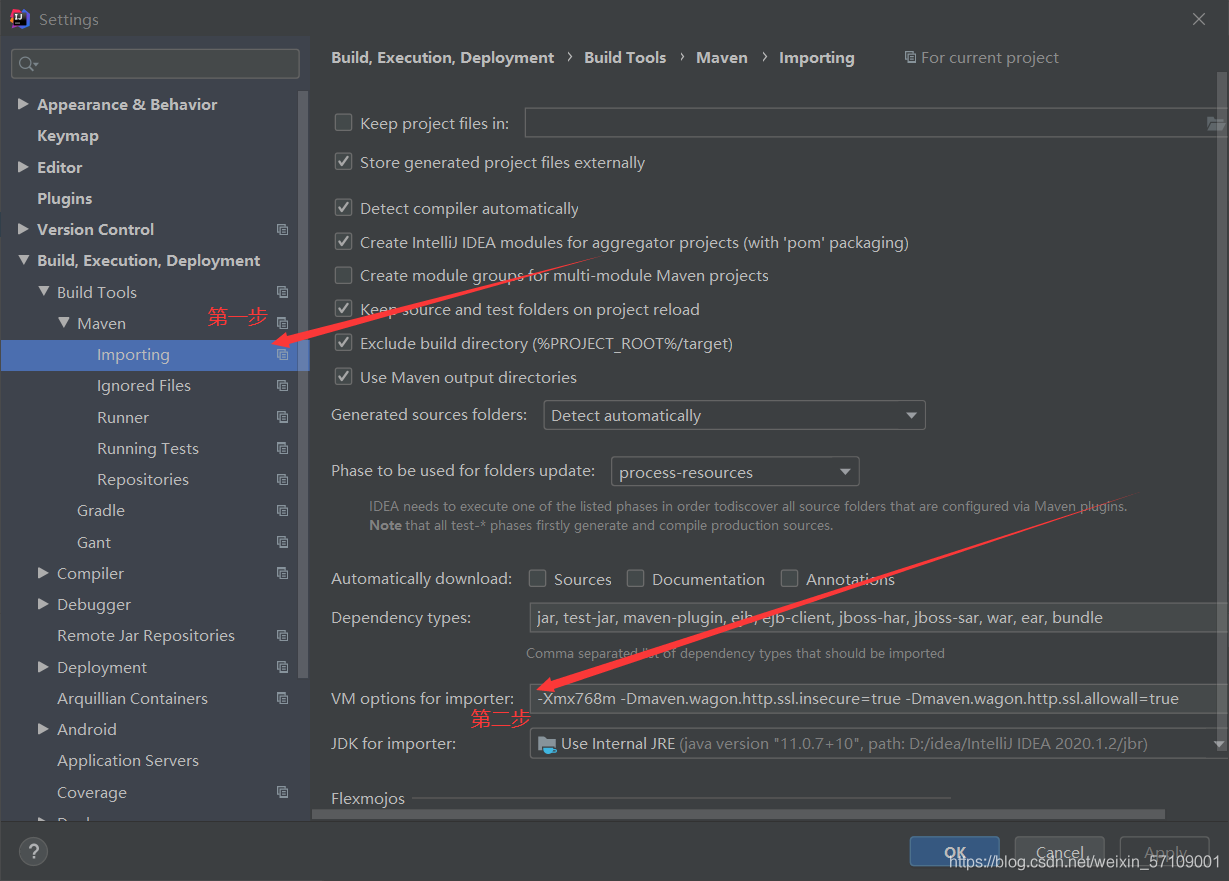

1. Under File, click Settings

2. Find Importing under Maven

3. In the settings found in step 2, add -Dmaven.wagon.http.ssl.insecure=true -Dmaven.wagon.http.ssl.allowall=true

That completes the fix.