Problem Description:

In the experiment of digital image processing course, SIFT algorithm is used in the feature matching experiment, and opencv4 is used for the first time with vscode 4 and python 3 8. An error is reported

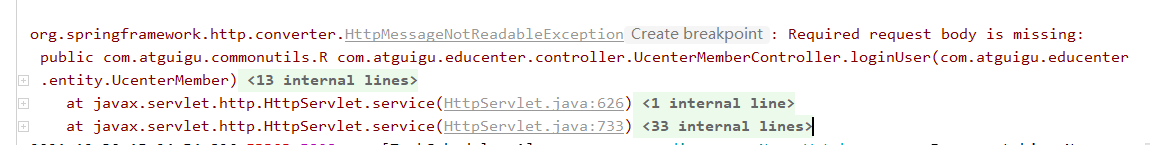

module 'cv2.cv2' has no attribute 'xfeatures2d'

Then install the opencv Python 3.4.2.16 command in Anaconda prompt as follows, and an error occurs that the relevant version cannot be found

pip install opencv-python==3.4.2.16And report an error as

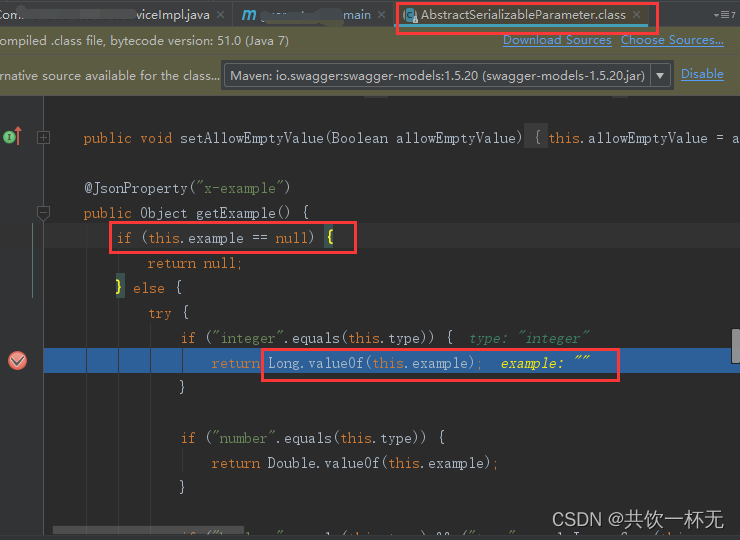

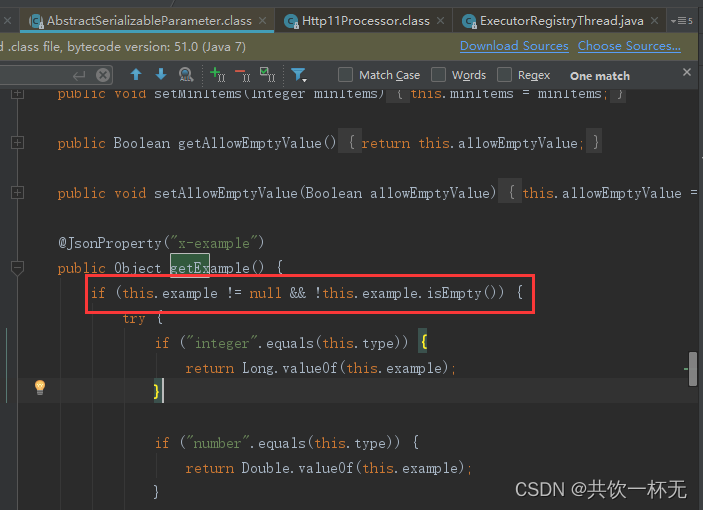

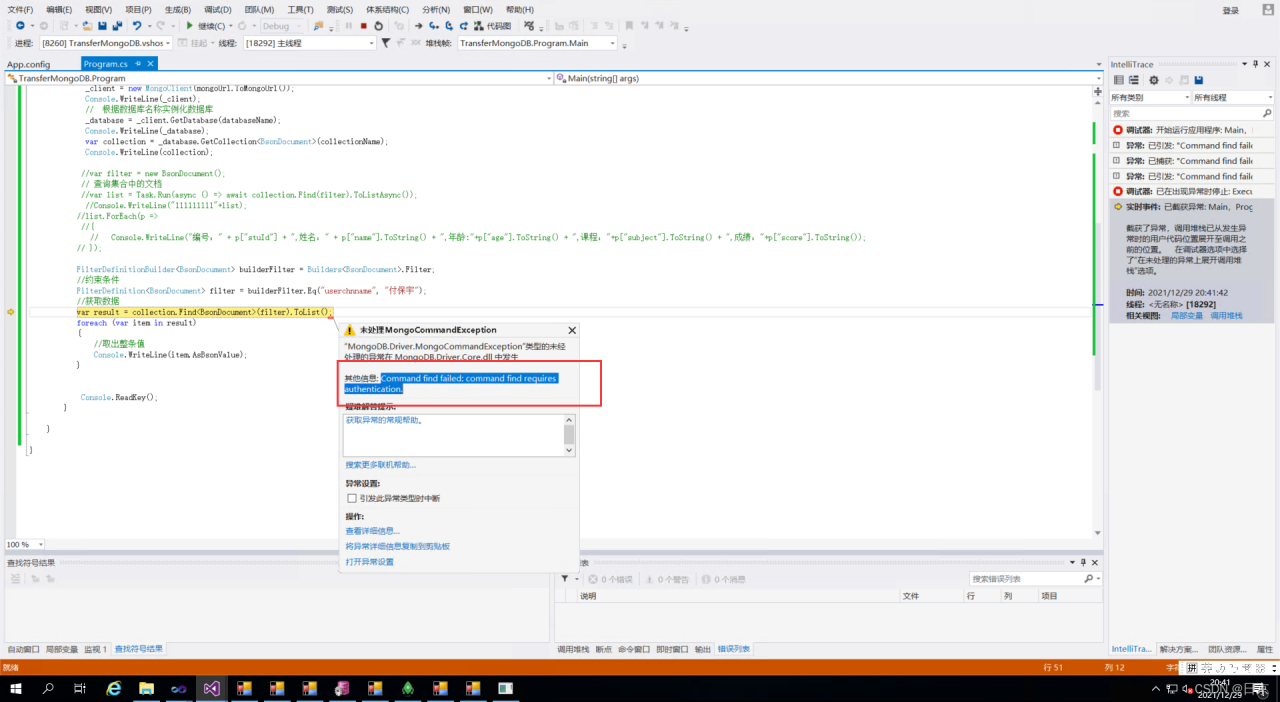

cv2.error: OpenCV(3.4.8) C:\projects\opencv-python\opencv_contrib\modules\xfeatures2d\src\sift.cpp:1207: error: (-213:The function/feature is not implemented) This algorithm is patented and is excluded in this configuration; Set OPENCV_ENABLE_NONFREE CMake option and rebuild the library in function 'cv::xfeatures2d::SIFT::create'

Cause analysis

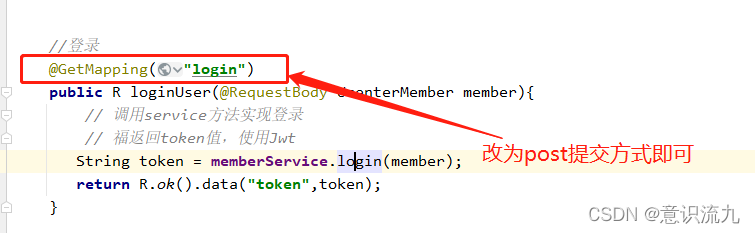

The reason for the first error is opencv4 Version 4 cannot be used due to sift patent; The second error is due to opencv4 2 and python 3 8 mismatch, need to be reduced to 3.6; This error is still a problem with the opencv version

Solution:

If it’s Python 3.6. You only need to install the package of the relevant version of OpenCV. Execute the following command in Anaconda prompt

pip install opencv-python==3.4.2.16

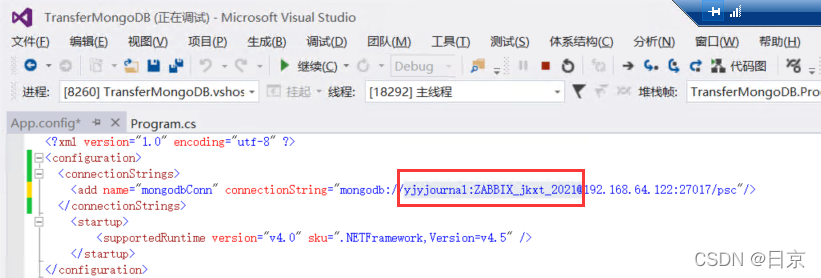

pip install opencv-contrib-python==3.4.2.16Because I’m Python 3.8. Therefore, a virtual environment py36 is created for related configuration. For creating a virtual environment and activating a virtual environment, please refer to the creating a virtual environment – brief book in Anaconda blog, and then execute the above command in the activated virtual environment

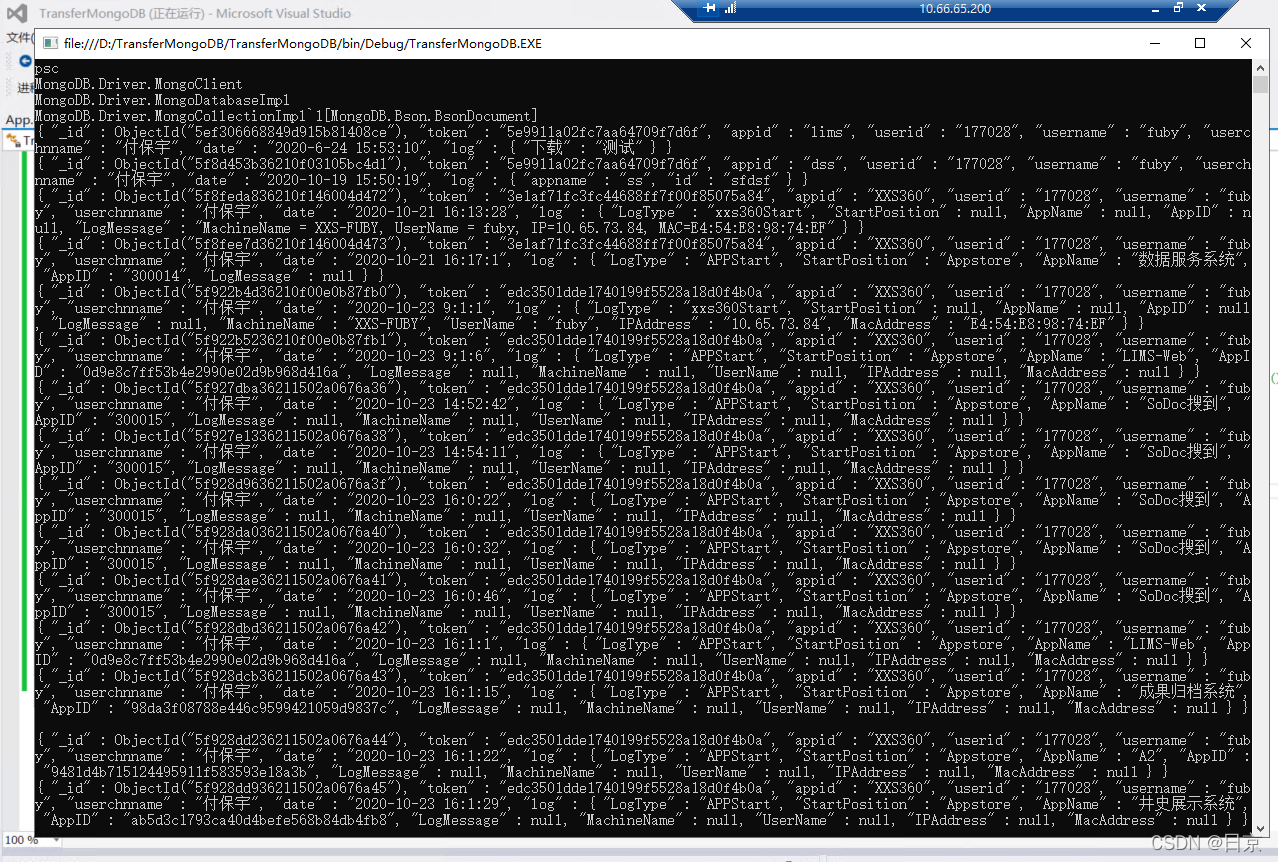

Finally, run the PY file in the vs Code terminal

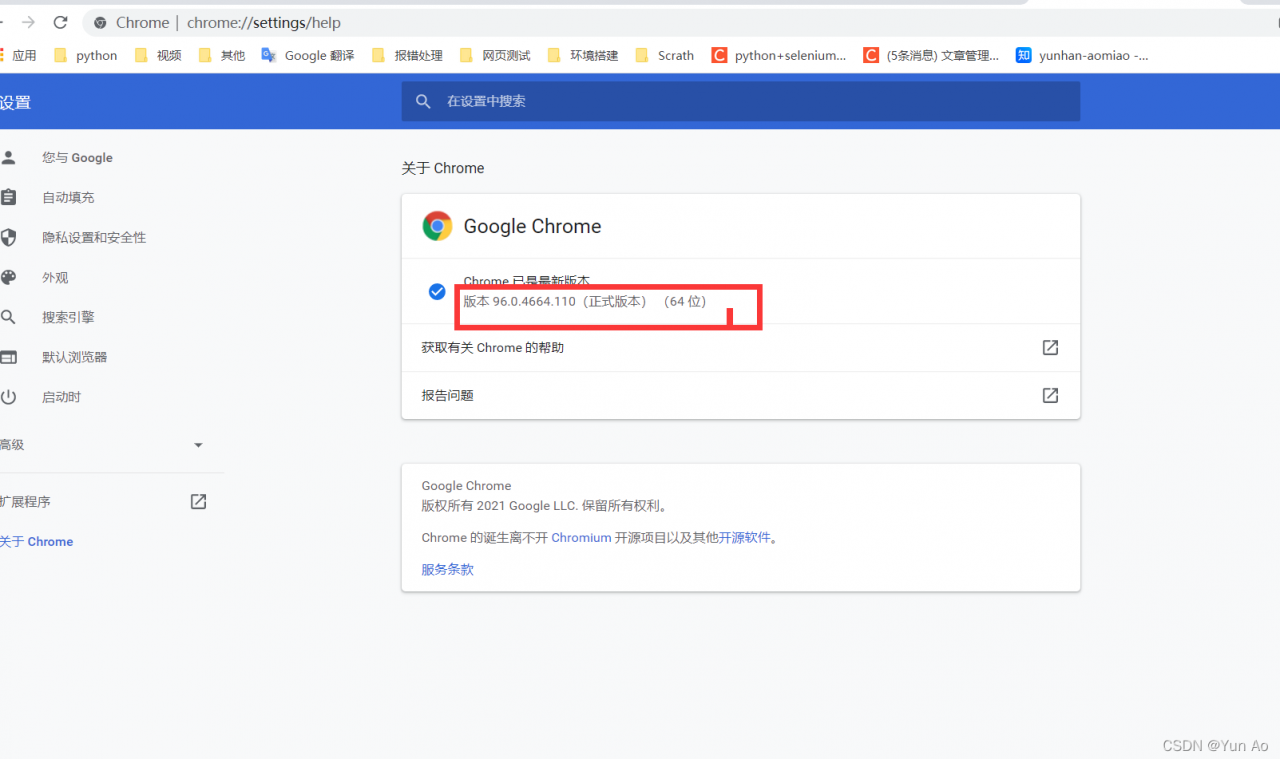

## Replace or Save chromedriver.exe

## Replace or Save chromedriver.exe