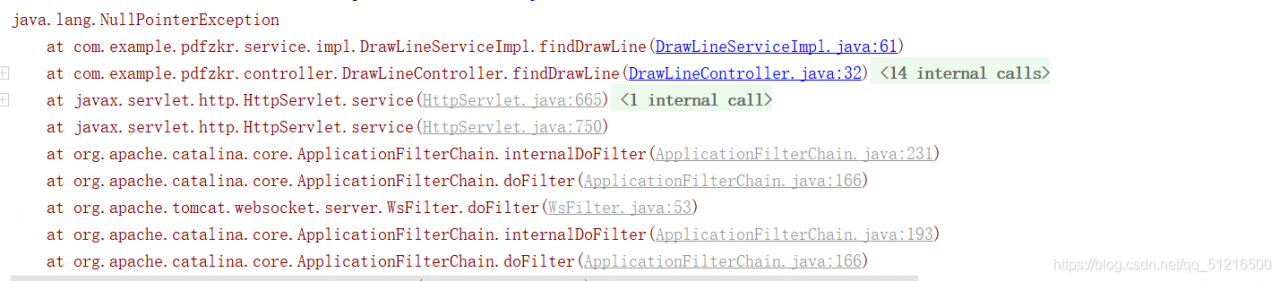

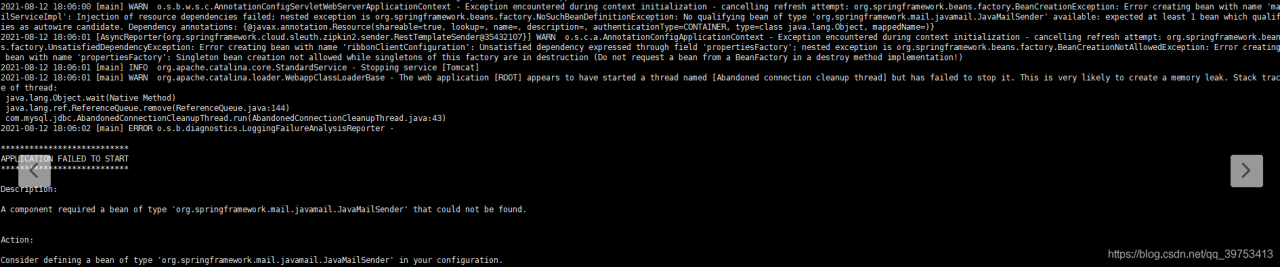

Packaging the Java project fails with the following error:

Caused by: org.springframework.beans.factory.BeanCreationException: Error creating bean with name 'org.springframework.transaction.annotation.ProxyTransactionManagementConfiguration': Initialization of bean failed; nested exception is org.springframework.beans.factory.NoSuchBeanDefinitionException: No bean named 'org.springframework.context.annotation.ConfigurationClassPostProcessor.importRegistry' available

A closer look at the screenshot shows that the error actually comes from `JavaMailSender`. First, check whether the mail-sending settings are present in the configuration file.
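One plausible reason missing mail settings break startup: as far as I know, Spring Boot's mail auto-configuration (Boot 2.x) only creates a `JavaMailSender` bean when `spring.mail.host` or `spring.mail.jndi-name` is set, so any component that injects `JavaMailSender` fails when those keys are absent. The sketch below is a plain-Java imitation of that rule for illustration only; `MailConfigCheck` and its methods are hypothetical helpers, not a Spring API, and the property values are made up.

```java
import java.util.Map;

/**
 * Hypothetical helper imitating one condition of Spring Boot's mail auto-configuration:
 * a JavaMailSender bean is only created when spring.mail.host or spring.mail.jndi-name is set.
 * This is an illustration, not Spring code.
 */
public class MailConfigCheck {

    // Imitates @ConditionalOnProperty without havingValue:
    // the property must exist and must not be "false".
    static boolean propertySet(Map<String, String> props, String key) {
        String value = props.get(key);
        return value != null && !"false".equalsIgnoreCase(value);
    }

    // True when the mail properties would allow a JavaMailSender to be auto-configured.
    static boolean mailSenderWouldBeCreated(Map<String, String> props) {
        return propertySet(props, "spring.mail.host")
                || propertySet(props, "spring.mail.jndi-name");
    }

    public static void main(String[] args) {
        // Mail settings were loaded (for example from Nacos); values are hypothetical.
        Map<String, String> loaded = Map.of(
                "spring.mail.host", "smtp.example.com",
                "spring.mail.username", "noreply@example.com");
        // Nacos config was not loaded, so no spring.mail.* keys exist at all.
        Map<String, String> missing = Map.of();

        System.out.println(mailSenderWouldBeCreated(loaded));  // true
        System.out.println(mailSenderWouldBeCreated(missing)); // false
    }
}
```

If the second case matches your project, the mail settings are likely stored somewhere that is not being loaded, which leads to the Nacos check below.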

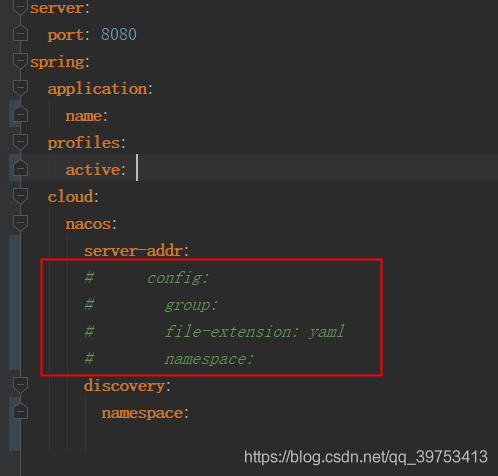

If the project uses Nacos, check the local configuration file xxx.yml to see whether the Nacos configuration has been commented out, as shown in the following figure. If it has, uncomment it.
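For a large local config file, a quick text scan can point you at a commented-out Nacos block. This is a minimal sketch under stated assumptions: it is a rough line scan, not a YAML parser, and it only flags commented lines that contain the word "nacos" (nested keys such as `server-addr` are not flagged on their own). `NacosCommentScan` and the sample file contents are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;

/**
 * Hypothetical helper: rough text scan (not a YAML parser) that flags
 * commented-out lines mentioning Nacos in a local config file.
 */
public class NacosCommentScan {

    // Returns 1-based line numbers of lines that start with '#' after indentation
    // and mention "nacos" in any letter case.
    static List<Integer> commentedNacosLines(List<String> lines) {
        List<Integer> hits = new ArrayList<>();
        for (int i = 0; i < lines.size(); i++) {
            String trimmed = lines.get(i).strip();
            if (trimmed.startsWith("#") && trimmed.toLowerCase(Locale.ROOT).contains("nacos")) {
                hits.add(i + 1);
            }
        }
        return hits;
    }

    public static void main(String[] args) {
        // Hypothetical local config whose Nacos block was commented out
        // (8848 is the default Nacos port).
        List<String> sample = List.of(
                "spring:",
                "  application:",
                "    name: demo-service",
                "#  cloud:",
                "#    nacos:",
                "#      config:",
                "#        server-addr: 127.0.0.1:8848");
        System.out.println(commentedNacosLines(sample)); // [5]
    }
}
```

To check a real file, pass the result of `java.nio.file.Files.readAllLines(...)` to `commentedNacosLines`; each reported line marks where a commented-out block starts, and the indented lines beneath it usually need uncommenting too.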