Key error information

/usr/local/include/c++/8.2.0/bits/basic_string.tcc:1067:1: error: cannot call member function 'void std::basic_string<_CharT, _Traits, _Alloc>::_Rep::_M_set_sharable() [with _CharT = char32_t; _Traits = std::char_traits<char32_t>; _Alloc = std::allocator<char32_t>]' without object

ninja: build stopped: subcommand failed.

Traceback (most recent call last):

File "/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/utils/cpp_extension.py", line 1423, in _run_ninja_build

check=True)

File "/home/miniconda3/envs/motr/lib/python3.7/subprocess.py", line 512, in run

output=stdout, stderr=stderr)

subprocess.CalledProcessError: Command '['ninja', '-v']' returned non-zero exit status 1.

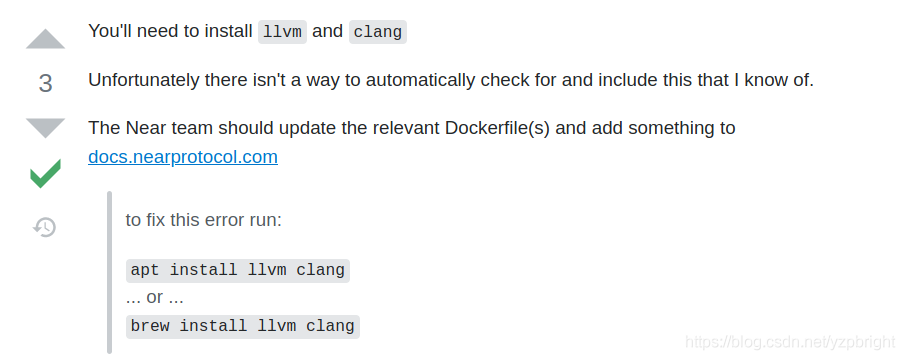
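
The key lines above were picked out of a long build log by hand. A minimal sketch of doing the same filtering automatically, assuming the log has been saved as text; the function name `key_error_lines` and the marker strings are illustrative choices, not part of PyTorch or ninja:

```python
def key_error_lines(log_text):
    """Return only the lines of a build log that report a hard failure.

    Hypothetical helper: the markers below match the compiler and ninja
    messages seen in this log and may need adjusting for other toolchains.
    """
    markers = (": error:", "FAILED:", "ninja: build stopped")
    return [
        line.strip()
        for line in log_text.splitlines()
        if any(marker in line for marker in markers)
    ]
```

Warnings such as the `q_col` "declared but never referenced" messages are skipped, which leaves the `basic_string.tcc:1067` errors and the ninja failure line.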

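Solution:

Edit line 1067 of /usr/local/include/c++/8.2.0/bits/basic_string.tcc. The original line is:

__p->_M_set_sharable();

Change it to:

(*__p)._M_set_sharable();

For a raw pointer the two expressions are equivalent, so the behavior of the header does not change. In the log, the step that fails is the nvcc compile of ms_deform_attn_cuda.cu.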
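
Because this edits a system header, it is safer to keep a backup and to touch the line only if it still contains the original text. A minimal sketch of that, assuming write permission on the file; the function name `patch_header` and the `.bak` suffix are illustrative choices:

```python
from pathlib import Path
import shutil

OLD = "__p->_M_set_sharable();"
NEW = "(*__p)._M_set_sharable();"


def patch_header(path, line_no=1067, backup_suffix=".bak"):
    """Replace OLD with NEW on one line of a header, keeping a backup copy.

    Returns True if the file was changed, and False if the expected text
    was not found on that line (for example, the file is already patched
    or belongs to a different GCC version).
    """
    path = Path(path)
    lines = path.read_text().splitlines(keepends=True)
    idx = line_no - 1  # line numbers in compiler messages are 1-based
    if idx < 0 or idx >= len(lines) or OLD not in lines[idx]:
        return False
    # Save the untouched header next to the original before writing.
    shutil.copy2(path, path.with_name(path.name + backup_suffix))
    lines[idx] = lines[idx].replace(OLD, NEW)
    path.write_text("".join(lines))
    return True
```

With the path from the error message, the call would be `patch_header("/usr/local/include/c++/8.2.0/bits/basic_string.tcc")`, run with enough privileges to write under /usr/local/include. Checking the line content first means the script does nothing if the header differs from the one in this log.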

Appendix: the full build log follows.

which: no hipcc in (/home/miniconda3/envs/motr/bin:/home/miniconda3/bin:/usr/local/nvidia/bin:/usr/local/cuda/bin:/usr/local/bin:/usr/bin:/home/bin:/usr/local/sbin:/usr/sbin)

running build

running build_py

creating build/lib.linux-x86_64-3.7

creating build/lib.linux-x86_64-3.7/functions

copying functions/__init__.py -> build/lib.linux-x86_64-3.7/functions

copying functions/ms_deform_attn_func.py -> build/lib.linux-x86_64-3.7/functions

creating build/lib.linux-x86_64-3.7/modules

copying modules/__init__.py -> build/lib.linux-x86_64-3.7/modules

copying modules/ms_deform_attn.py -> build/lib.linux-x86_64-3.7/modules

running build_ext

building 'MultiScaleDeformableAttention' extension

creating /github/MOTR/models/ops/build/temp.linux-x86_64-3.7

creating /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/nfs

creating /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/nfs/volume-95-4

creating /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/nfs/volume-95-4/liushuai

creating /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github

creating /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR

creating /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR/models

creating /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR/models/ops

creating /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR/models/ops/src

creating /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR/models/ops/src/cpu

creating /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR/models/ops/src/cuda

/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/utils/cpp_extension.py:220: UserWarning:

!! WARNING !!

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

Your compiler (c++) is not compatible with the compiler Pytorch was

built with for this platform, which is g++ on linux. Please

use g++ to to compile your extension. Alternatively, you may

compile PyTorch from source using c++, and then you can also use

c++ to compile your extension.

See https://github.com/pytorch/pytorch/blob/master/CONTRIBUTING.md for help

with compiling PyTorch from source.

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

!! WARNING !!

platform=sys.platform))

Emitting ninja build file /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/build.ninja...

Compiling objects...

Allowing ninja to set a default number of workers... (overridable by setting the environment variable MAX_JOBS=N)

[1/3] c++ -MMD -MF /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR/models/ops/src/cpu/ms_deform_attn_cpu.o.d -pthread -B /home/miniconda3/envs/motr/compiler_compat -Wl,--sysroot=/ -Wsign-compare -DNDEBUG -g -fwrapv -O3 -Wall -Wstrict-prototypes -fPIC -DWITH_CUDA -I/github/MOTR/models/ops/src -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/TH -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/THC -I/usr/local/cuda/include -I/home/miniconda3/envs/motr/include/python3.7m -c -c /github/MOTR/models/ops/src/cpu/ms_deform_attn_cpu.cpp -o /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR/models/ops/src/cpu/ms_deform_attn_cpu.o -DTORCH_API_INCLUDE_EXTENSION_H -DTORCH_EXTENSION_NAME=MultiScaleDeformableAttention -D_GLIBCXX_USE_CXX11_ABI=0 -std=c++14

cc1plus: warning: command line option '-Wstrict-prototypes' is valid for C/ObjC but not for C++

[2/3] c++ -MMD -MF /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR/models/ops/src/vision.o.d -pthread -B /home/miniconda3/envs/motr/compiler_compat -Wl,--sysroot=/ -Wsign-compare -DNDEBUG -g -fwrapv -O3 -Wall -Wstrict-prototypes -fPIC -DWITH_CUDA -I/github/MOTR/models/ops/src -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/TH -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/THC -I/usr/local/cuda/include -I/home/miniconda3/envs/motr/include/python3.7m -c -c /github/MOTR/models/ops/src/vision.cpp -o /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR/models/ops/src/vision.o -DTORCH_API_INCLUDE_EXTENSION_H -DTORCH_EXTENSION_NAME=MultiScaleDeformableAttention -D_GLIBCXX_USE_CXX11_ABI=0 -std=c++14

cc1plus: warning: command line option '-Wstrict-prototypes' is valid for C/ObjC but not for C++

In file included from /github/MOTR/models/ops/src/vision.cpp:11:

/github/MOTR/models/ops/src/ms_deform_attn.h: In function 'at::Tensor ms_deform_attn_forward(const at::Tensor&, const at::Tensor&, const at::Tensor&, const at::Tensor&, const at::Tensor&, int)':

/github/MOTR/models/ops/src/ms_deform_attn.h:29:20: warning: 'at::DeprecatedTypeProperties& at::Tensor::type() const' is deprecated: Tensor.type() is deprecated. Instead use Tensor.options(), which in many cases (e.g. in a constructor) is a drop-in replacement. If you were using data from type(), that is now available from Tensor itself, so instead of tensor.type().scalar_type(), use tensor.scalar_type() instead and instead of tensor.type().backend() use tensor.device(). [-Wdeprecated-declarations]

if (value.type().is_cuda())

^

In file included from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/ATen/Tensor.h:11,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/ATen/Context.h:4,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/ATen/ATen.h:5,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/types.h:3,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/data/dataloader_options.h:4,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/data/dataloader/base.h:3,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/data/dataloader/stateful.h:3,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/data/dataloader.h:3,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/data.h:3,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/all.h:4,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/extension.h:4,

from /github/MOTR/models/ops/src/cpu/ms_deform_attn_cpu.h:12,

from /github/MOTR/models/ops/src/ms_deform_attn.h:13,

from /github/MOTR/models/ops/src/vision.cpp:11:

/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/ATen/core/TensorBody.h:262:30: note: declared here

DeprecatedTypeProperties & type() const {

^~~~

In file included from /github/MOTR/models/ops/src/vision.cpp:11:

/github/MOTR/models/ops/src/ms_deform_attn.h: In function 'std::vector<at::Tensor> ms_deform_attn_backward(const at::Tensor&, const at::Tensor&, const at::Tensor&, const at::Tensor&, const at::Tensor&, const at::Tensor&, int)':

/github/MOTR/models/ops/src/ms_deform_attn.h:51:20: warning: 'at::DeprecatedTypeProperties& at::Tensor::type() const' is deprecated: Tensor.type() is deprecated. Instead use Tensor.options(), which in many cases (e.g. in a constructor) is a drop-in replacement. If you were using data from type(), that is now available from Tensor itself, so instead of tensor.type().scalar_type(), use tensor.scalar_type() instead and instead of tensor.type().backend() use tensor.device(). [-Wdeprecated-declarations]

if (value.type().is_cuda())

^

In file included from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/ATen/Tensor.h:11,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/ATen/Context.h:4,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/ATen/ATen.h:5,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/types.h:3,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/data/dataloader_options.h:4,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/data/dataloader/base.h:3,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/data/dataloader/stateful.h:3,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/data/dataloader.h:3,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/data.h:3,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include/torch/all.h:4,

from /home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/extension.h:4,

from /github/MOTR/models/ops/src/cpu/ms_deform_attn_cpu.h:12,

from /github/MOTR/models/ops/src/ms_deform_attn.h:13,

from /github/MOTR/models/ops/src/vision.cpp:11:

/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/ATen/core/TensorBody.h:262:30: note: declared here

DeprecatedTypeProperties & type() const {

^~~~

[3/3] /usr/local/cuda/bin/nvcc -DWITH_CUDA -I/github/MOTR/models/ops/src -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/TH -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/THC -I/usr/local/cuda/include -I/home/miniconda3/envs/motr/include/python3.7m -c -c /github/MOTR/models/ops/src/cuda/ms_deform_attn_cuda.cu -o /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR/models/ops/src/cuda/ms_deform_attn_cuda.o -D__CUDA_NO_HALF_OPERATORS__ -D__CUDA_NO_HALF_CONVERSIONS__ -D__CUDA_NO_HALF2_OPERATORS__ --expt-relaxed-constexpr --compiler-options ''"'"'-fPIC'"'"'' -DCUDA_HAS_FP16=1 -D__CUDA_NO_HALF_OPERATORS__ -D__CUDA_NO_HALF_CONVERSIONS__ -D__CUDA_NO_HALF2_OPERATORS__ -DTORCH_API_INCLUDE_EXTENSION_H -DTORCH_EXTENSION_NAME=MultiScaleDeformableAttention -D_GLIBCXX_USE_CXX11_ABI=0 -gencode=arch=compute_61,code=sm_61 -std=c++14

FAILED: /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR/models/ops/src/cuda/ms_deform_attn_cuda.o

/usr/local/cuda/bin/nvcc -DWITH_CUDA -I/github/MOTR/models/ops/src -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/torch/csrc/api/include -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/TH -I/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/include/THC -I/usr/local/cuda/include -I/home/miniconda3/envs/motr/include/python3.7m -c -c /github/MOTR/models/ops/src/cuda/ms_deform_attn_cuda.cu -o /github/MOTR/models/ops/build/temp.linux-x86_64-3.7/github/MOTR/models/ops/src/cuda/ms_deform_attn_cuda.o -D__CUDA_NO_HALF_OPERATORS__ -D__CUDA_NO_HALF_CONVERSIONS__ -D__CUDA_NO_HALF2_OPERATORS__ --expt-relaxed-constexpr --compiler-options ''"'"'-fPIC'"'"'' -DCUDA_HAS_FP16=1 -D__CUDA_NO_HALF_OPERATORS__ -D__CUDA_NO_HALF_CONVERSIONS__ -D__CUDA_NO_HALF2_OPERATORS__ -DTORCH_API_INCLUDE_EXTENSION_H -DTORCH_EXTENSION_NAME=MultiScaleDeformableAttention -D_GLIBCXX_USE_CXX11_ABI=0 -gencode=arch=compute_61,code=sm_61 -std=c++14

/github/MOTR/models/ops/src/cuda/ms_deform_im2col_cuda.cuh(261): warning: variable "q_col" was declared but never referenced

detected during instantiation of "void ms_deformable_im2col_cuda(cudaStream_t, const scalar_t *, const int64_t *, const int64_t *, const scalar_t *, const scalar_t *, int, int, int, int, int, int, int, scalar_t *) [with scalar_t=double]"

/github/MOTR/models/ops/src/cuda/ms_deform_attn_cuda.cu(64): here

/github/MOTR/models/ops/src/cuda/ms_deform_im2col_cuda.cuh(762): warning: variable "q_col" was declared but never referenced

detected during instantiation of "void ms_deformable_col2im_cuda(cudaStream_t, const scalar_t *, const scalar_t *, const int64_t *, const int64_t *, const scalar_t *, const scalar_t *, int, int, int, int, int, int, int, scalar_t *, scalar_t *, scalar_t *) [with scalar_t=double]"

/github/MOTR/models/ops/src/cuda/ms_deform_attn_cuda.cu(134): here

/github/MOTR/models/ops/src/cuda/ms_deform_im2col_cuda.cuh(872): warning: variable "q_col" was declared but never referenced

detected during instantiation of "void ms_deformable_col2im_cuda(cudaStream_t, const scalar_t *, const scalar_t *, const int64_t *, const int64_t *, const scalar_t *, const scalar_t *, int, int, int, int, int, int, int, scalar_t *, scalar_t *, scalar_t *) [with scalar_t=double]"

/github/MOTR/models/ops/src/cuda/ms_deform_attn_cuda.cu(134): here

/github/MOTR/models/ops/src/cuda/ms_deform_im2col_cuda.cuh(331): warning: variable "q_col" was declared but never referenced

detected during instantiation of "void ms_deformable_col2im_cuda(cudaStream_t, const scalar_t *, const scalar_t *, const int64_t *, const int64_t *, const scalar_t *, const scalar_t *, int, int, int, int, int, int, int, scalar_t *, scalar_t *, scalar_t *) [with scalar_t=double]"

/github/MOTR/models/ops/src/cuda/ms_deform_attn_cuda.cu(134): here

/github/MOTR/models/ops/src/cuda/ms_deform_im2col_cuda.cuh(436): warning: variable "q_col" was declared but never referenced

detected during instantiation of "void ms_deformable_col2im_cuda(cudaStream_t, const scalar_t *, const scalar_t *, const int64_t *, const int64_t *, const scalar_t *, const scalar_t *, int, int, int, int, int, int, int, scalar_t *, scalar_t *, scalar_t *) [with scalar_t=double]"

/github/MOTR/models/ops/src/cuda/ms_deform_attn_cuda.cu(134): here

/github/MOTR/models/ops/src/cuda/ms_deform_im2col_cuda.cuh(544): warning: variable "q_col" was declared but never referenced

detected during instantiation of "void ms_deformable_col2im_cuda(cudaStream_t, const scalar_t *, const scalar_t *, const int64_t *, const int64_t *, const scalar_t *, const scalar_t *, int, int, int, int, int, int, int, scalar_t *, scalar_t *, scalar_t *) [with scalar_t=double]"

/github/MOTR/models/ops/src/cuda/ms_deform_attn_cuda.cu(134): here

/github/MOTR/models/ops/src/cuda/ms_deform_im2col_cuda.cuh(649): warning: variable "q_col" was declared but never referenced

detected during instantiation of "void ms_deformable_col2im_cuda(cudaStream_t, const scalar_t *, const scalar_t *, const int64_t *, const int64_t *, const scalar_t *, const scalar_t *, int, int, int, int, int, int, int, scalar_t *, scalar_t *, scalar_t *) [with scalar_t=double]"

/github/MOTR/models/ops/src/cuda/ms_deform_attn_cuda.cu(134): here

/usr/local/include/c++/8.2.0/bits/basic_string.tcc: In instantiation of 'static std::basic_string<_CharT, _Traits, _Alloc>::_Rep* std::basic_string<_CharT, _Traits, _Alloc>::_Rep::_S_create(std::basic_string<_CharT, _Traits, _Alloc>::size_type, std::basic_string<_CharT, _Traits, _Alloc>::size_type, const _Alloc&) [with _CharT = char16_t; _Traits = std::char_traits<char16_t>; _Alloc = std::allocator<char16_t>; std::basic_string<_CharT, _Traits, _Alloc>::size_type = long unsigned int]':

/usr/local/include/c++/8.2.0/bits/basic_string.tcc:578:28: required from 'static _CharT* std::basic_string<_CharT, _Traits, _Alloc>::_S_construct(_InIterator, _InIterator, const _Alloc&, std::forward_iterator_tag) [with _FwdIterator = const char16_t*; _CharT = char16_t; _Traits = std::char_traits<char16_t>; _Alloc = std::allocator<char16_t>]'

/usr/local/include/c++/8.2.0/bits/basic_string.h:5043:20: required from 'static _CharT* std::basic_string<_CharT, _Traits, _Alloc>::_S_construct_aux(_InIterator, _InIterator, const _Alloc&, std::__false_type) [with _InIterator = const char16_t*; _CharT = char16_t; _Traits = std::char_traits<char16_t>; _Alloc = std::allocator<char16_t>]'

/usr/local/include/c++/8.2.0/bits/basic_string.h:5064:24: required from 'static _CharT* std::basic_string<_CharT, _Traits, _Alloc>::_S_construct(_InIterator, _InIterator, const _Alloc&) [with _InIterator = const char16_t*; _CharT = char16_t; _Traits = std::char_traits<char16_t>; _Alloc = std::allocator<char16_t>]'

/usr/local/include/c++/8.2.0/bits/basic_string.tcc:656:134: required from 'std::basic_string<_CharT, _Traits, _Alloc>::basic_string(const _CharT*, std::basic_string<_CharT, _Traits, _Alloc>::size_type, const _Alloc&) [with _CharT = char16_t; _Traits = std::char_traits<char16_t>; _Alloc = std::allocator<char16_t>; std::basic_string<_CharT, _Traits, _Alloc>::size_type = long unsigned int]'

/usr/local/include/c++/8.2.0/bits/basic_string.h:6716:95: required from here

/usr/local/include/c++/8.2.0/bits/basic_string.tcc:1067:1: error: cannot call member function 'void std::basic_string<_CharT, _Traits, _Alloc>::_Rep::_M_set_sharable() [with _CharT = char16_t; _Traits = std::char_traits<char16_t>; _Alloc = std::allocator<char16_t>]' without object

__p->_M_set_sharable();

^ ~~~~~~~~~

/usr/local/include/c++/8.2.0/bits/basic_string.tcc: In instantiation of 'static std::basic_string<_CharT, _Traits, _Alloc>::_Rep* std::basic_string<_CharT, _Traits, _Alloc>::_Rep::_S_create(std::basic_string<_CharT, _Traits, _Alloc>::size_type, std::basic_string<_CharT, _Traits, _Alloc>::size_type, const _Alloc&) [with _CharT = char32_t; _Traits = std::char_traits<char32_t>; _Alloc = std::allocator<char32_t>; std::basic_string<_CharT, _Traits, _Alloc>::size_type = long unsigned int]':

/usr/local/include/c++/8.2.0/bits/basic_string.tcc:578:28: required from 'static _CharT* std::basic_string<_CharT, _Traits, _Alloc>::_S_construct(_InIterator, _InIterator, const _Alloc&, std::forward_iterator_tag) [with _FwdIterator = const char32_t*; _CharT = char32_t; _Traits = std::char_traits<char32_t>; _Alloc = std::allocator<char32_t>]'

/usr/local/include/c++/8.2.0/bits/basic_string.h:5043:20: required from 'static _CharT* std::basic_string<_CharT, _Traits, _Alloc>::_S_construct_aux(_InIterator, _InIterator, const _Alloc&, std::__false_type) [with _InIterator = const char32_t*; _CharT = char32_t; _Traits = std::char_traits<char32_t>; _Alloc = std::allocator<char32_t>]'

/usr/local/include/c++/8.2.0/bits/basic_string.h:5064:24: required from 'static _CharT* std::basic_string<_CharT, _Traits, _Alloc>::_S_construct(_InIterator, _InIterator, const _Alloc&) [with _InIterator = const char32_t*; _CharT = char32_t; _Traits = std::char_traits<char32_t>; _Alloc = std::allocator<char32_t>]'

/usr/local/include/c++/8.2.0/bits/basic_string.tcc:656:134: required from 'std::basic_string<_CharT, _Traits, _Alloc>::basic_string(const _CharT*, std::basic_string<_CharT, _Traits, _Alloc>::size_type, const _Alloc&) [with _CharT = char32_t; _Traits = std::char_traits<char32_t>; _Alloc = std::allocator<char32_t>; std::basic_string<_CharT, _Traits, _Alloc>::size_type = long unsigned int]'

/usr/local/include/c++/8.2.0/bits/basic_string.h:6721:95: required from here

/usr/local/include/c++/8.2.0/bits/basic_string.tcc:1067:1: error: cannot call member function 'void std::basic_string<_CharT, _Traits, _Alloc>::_Rep::_M_set_sharable() [with _CharT = char32_t; _Traits = std::char_traits<char32_t>; _Alloc = std::allocator<char32_t>]' without object

ninja: build stopped: subcommand failed.

Traceback (most recent call last):

File "/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/utils/cpp_extension.py", line 1423, in _run_ninja_build

check=True)

File "/home/miniconda3/envs/motr/lib/python3.7/subprocess.py", line 512, in run

output=stdout, stderr=stderr)

subprocess.CalledProcessError: Command '['ninja', '-v']' returned non-zero exit status 1.

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "setup.py", line 70, in <module>

cmdclass={"build_ext": torch.utils.cpp_extension.BuildExtension},

File "/home/miniconda3/envs/motr/lib/python3.7/site-packages/setuptools/__init__.py", line 153, in setup

return distutils.core.setup(**attrs)

File "/home/miniconda3/envs/motr/lib/python3.7/distutils/core.py", line 148, in setup

dist.run_commands()

File "/home/miniconda3/envs/motr/lib/python3.7/distutils/dist.py", line 966, in run_commands

self.run_command(cmd)

File "/home/miniconda3/envs/motr/lib/python3.7/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "/home/miniconda3/envs/motr/lib/python3.7/distutils/command/build.py", line 135, in run

self.run_command(cmd_name)

File "/home/miniconda3/envs/motr/lib/python3.7/distutils/cmd.py", line 313, in run_command

self.distribution.run_command(command)

File "/home/miniconda3/envs/motr/lib/python3.7/distutils/dist.py", line 985, in run_command

cmd_obj.run()

File "/home/miniconda3/envs/motr/lib/python3.7/site-packages/setuptools/command/build_ext.py", line 79, in run

_build_ext.run(self)

File "/home/miniconda3/envs/motr/lib/python3.7/site-packages/Cython/Distutils/old_build_ext.py", line 186, in run

_build_ext.build_ext.run(self)

File "/home/miniconda3/envs/motr/lib/python3.7/distutils/command/build_ext.py", line 340, in run

self.build_extensions()

File "/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/utils/cpp_extension.py", line 603, in build_extensions

build_ext.build_extensions(self)

File "/home/miniconda3/envs/motr/lib/python3.7/site-packages/Cython/Distutils/old_build_ext.py", line 195, in build_extensions

_build_ext.build_ext.build_extensions(self)

File "/home/miniconda3/envs/motr/lib/python3.7/distutils/command/build_ext.py", line 449, in build_extensions

self._build_extensions_serial()

File "/home/miniconda3/envs/motr/lib/python3.7/distutils/command/build_ext.py", line 474, in _build_extensions_serial

self.build_extension(ext)

File "/home/miniconda3/envs/motr/lib/python3.7/site-packages/setuptools/command/build_ext.py", line 196, in build_extension

_build_ext.build_extension(self, ext)

File "/home/miniconda3/envs/motr/lib/python3.7/distutils/command/build_ext.py", line 534, in build_extension

depends=ext.depends)

File "/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/utils/cpp_extension.py", line 437, in unix_wrap_ninja_compile

with_cuda=with_cuda)

File "/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/utils/cpp_extension.py", line 1163, in _write_ninja_file_and_compile_objects

error_prefix='Error compiling objects for extension')

File "/home/miniconda3/envs/motr/lib/python3.7/site-packages/torch/utils/cpp_extension.py", line 1436, in _run_ninja_build

raise RuntimeError(message)

RuntimeError: Error compiling objects for extension