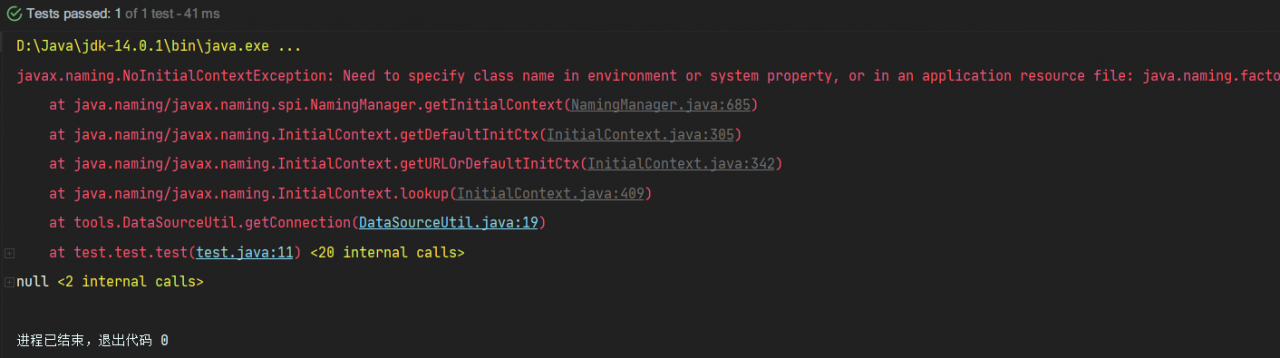

While configuring a data source with DBCP, I wrote a test class. Calling DBCP from the test class to get a database connection failed with: javax.naming.NoInitialContextException: Need to specify class name in environment or system property, ...

First, check that the required jar packages are imported:

commons-dbcp2-2.8.0.jar

commons-pool2-2.10.0.jar

MySQL 8.0 driver jar

MySQL 5.0 driver jar

Test code:

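    package test;

    import org.junit.Test;
    import tools.DataSourceUtil;

    import java.sql.Connection;

    public class test {

        // Runs as a plain JUnit test, outside any web server
        @Test
        public void test() {
            Connection connection = DataSourceUtil.getConnection();
            System.out.println(connection);
            // Release the connection (the helper ignores null)
            DataSourceUtil.closeConnection(connection);
        }
    }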

Data source utility class:

    package tools;

    import javax.naming.InitialContext;
    import javax.naming.NamingException;
    import javax.sql.DataSource;
    import java.sql.Connection;
    import java.sql.SQLException;

    public class DataSourceUtil {

        // Get a connection from the pool registered in JNDI
        public static Connection getConnection() {
            Connection connection = null;
            try {
                // Create the initial naming context
                InitialContext initialContext = new InitialContext();
                // Look up the configured resource; it comes back as a plain Object
                Object lookup = initialContext.lookup("java:comp/env/jdbc/easybuy");
                // Cast it to DataSource to get the connection pool
                DataSource ds = (DataSource) lookup;
                // Ask the connection pool for a connection
                connection = ds.getConnection();
            } catch (NamingException e) {
                e.printStackTrace();
            } catch (SQLException throwables) {
                throwables.printStackTrace();
            }
            // Return the connection (null if the lookup or the pool failed)
            return connection;
        }

        // Close the connection
        public static void closeConnection(Connection connection) {
            if (connection != null) {
                try {
                    connection.close();
                } catch (SQLException throwables) {
                    throwables.printStackTrace();
                }
            }
        }
    }

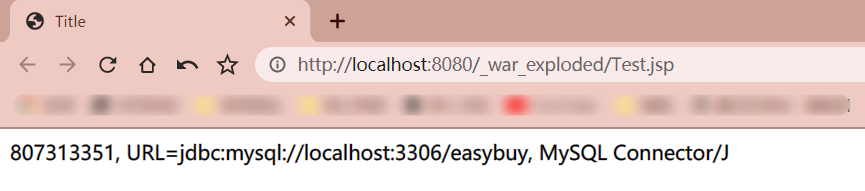

Error screenshot:

Cause:

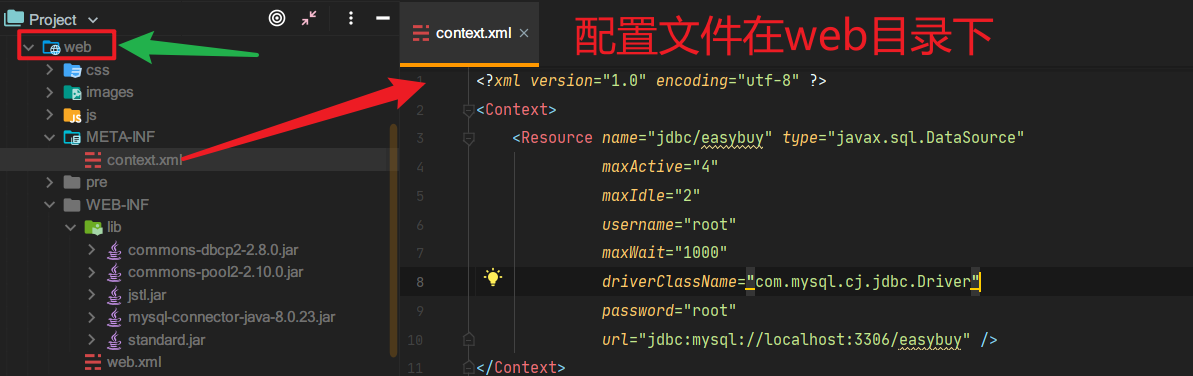

The DBCP connection code was being tested from a main function (or a plain unit test), that is, in a standalone JVM. An InitialContext that can resolve java:comp/env/jdbc/easybuy is only available inside a web application server, which sets up the JNDI naming environment. The data source configuration file also lives in the web directory and is read by the server. So for InitialContext to reach that configuration, the code must run on the web server; only then can it connect to the database and return a connection object.

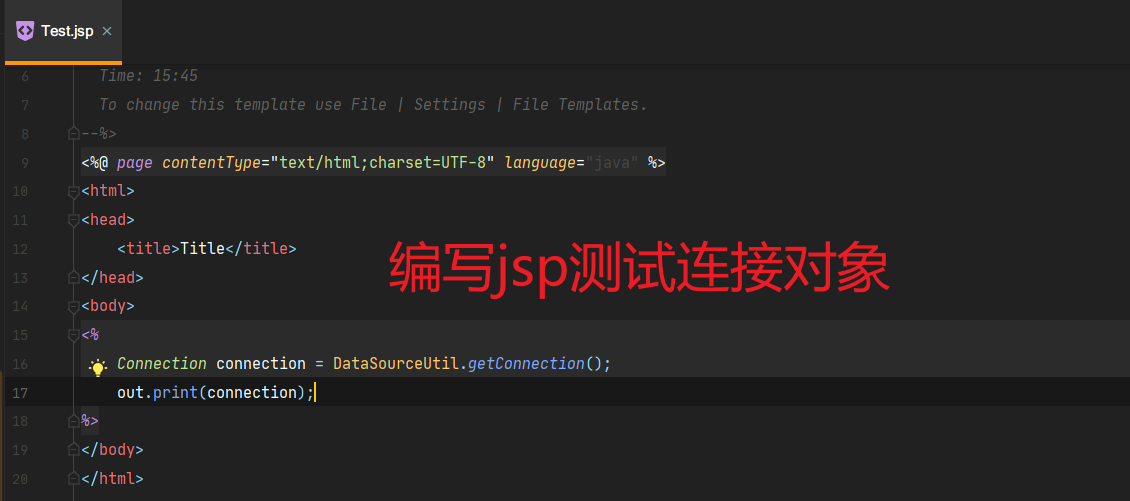

So this code can't be tested directly with the main function; it can only be run inside Tomcat, for example from a servlet or a JSP.
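To see the cause in isolation, here is a minimal sketch that performs the same lookup from a plain JVM. The class name JndiOutsideContainer and the method tryLookup are made up for this illustration, and the sketch assumes no JNDI provider is configured (no java.naming.factory.initial system property and no jndi.properties on the classpath). Under that assumption the lookup should fail with NoInitialContextException, the same exception the test class hit.

```java
import javax.naming.InitialContext;
import javax.naming.NamingException;
import javax.naming.NoInitialContextException;

// Hypothetical helper class, for illustration only
class JndiOutsideContainer {

    // Tries the JNDI lookup and reports what happened as a short label
    static String tryLookup(String name) {
        try {
            // Creating the context succeeds; the failure surfaces on lookup
            new InitialContext().lookup(name);
            return "found";
        } catch (NoInitialContextException e) {
            // No initial context factory is configured in this JVM
            return "no-initial-context";
        } catch (NamingException e) {
            // A provider exists but the name could not be resolved
            return "other-naming-error";
        }
    }

    public static void main(String[] args) {
        // Same JNDI name as in DataSourceUtil
        System.out.println(tryLookup("java:comp/env/jdbc/easybuy"));
    }
}
```

Run with a plain `java` command, this should print no-initial-context. The same lookup inside Tomcat goes through the naming environment the server provides, which is why DataSourceUtil works there.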

Result:

The connection object is obtained successfully!