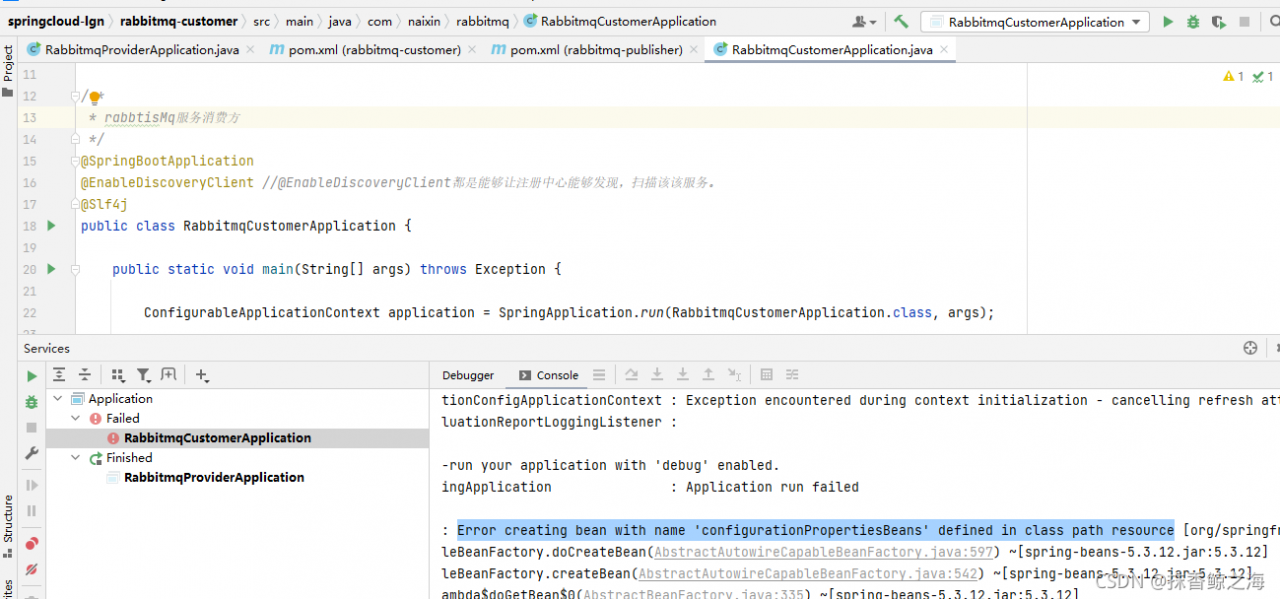

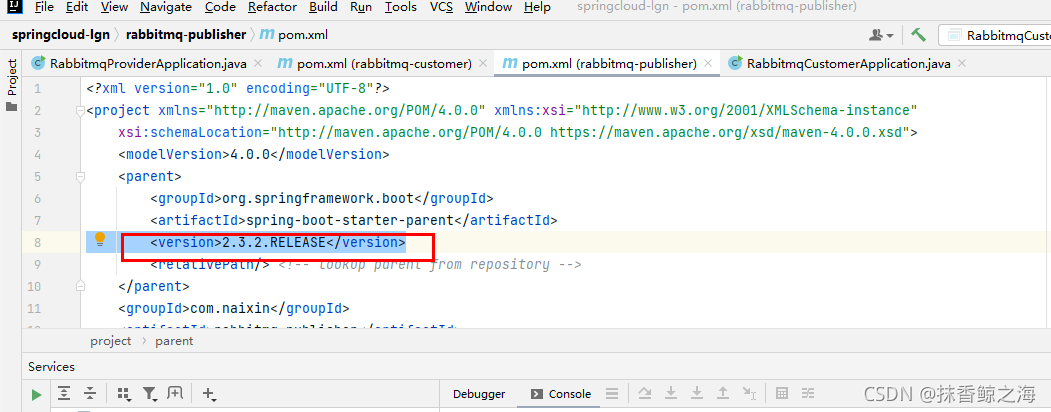

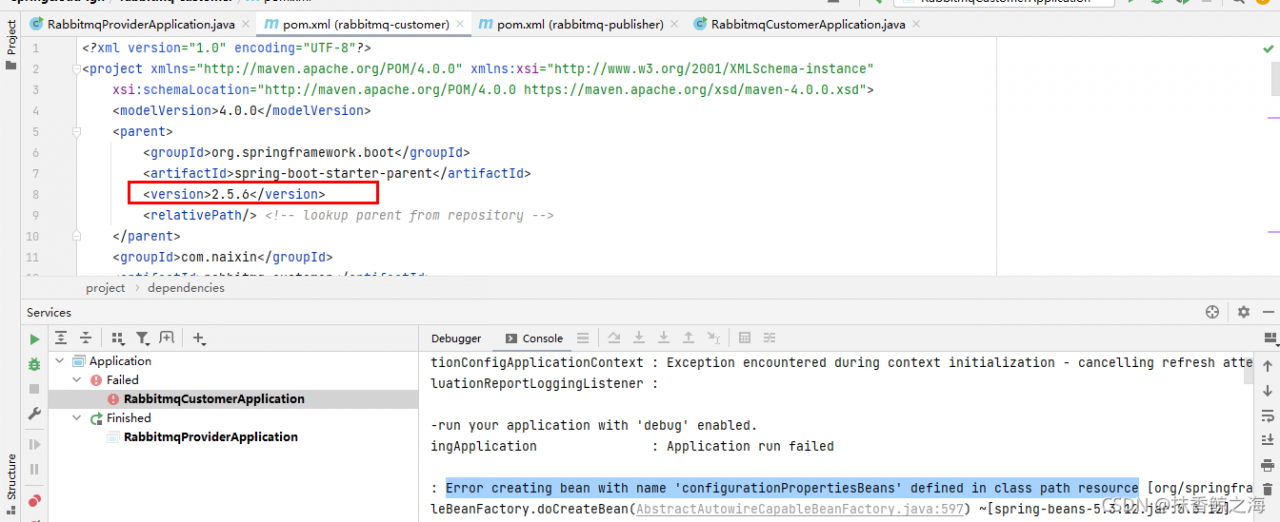

After integrating the Nacos registry, the following errors are reported when starting the provider service:

Check the Spring Boot version:

Changing it to a compatible version resolves the error.

Author Archives: Robins

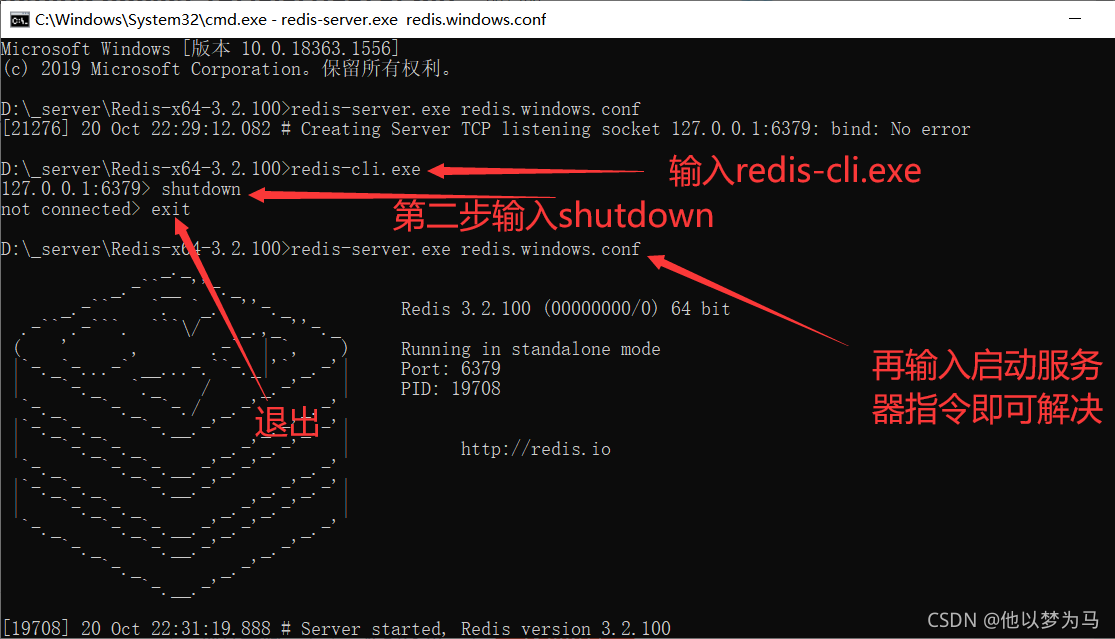

Creating Server TCP listening socket 127.0.0.1:6379: bind: No error

Error when starting the Redis service on Windows: Creating Server TCP listening socket 127.0.0.1:6379: bind: No error

Solution:
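This message means Redis could not bind 127.0.0.1:6379. A common cause is that another process, often an earlier redis-server instance, is still holding that port. Here is a minimal Python sketch, using only the standard library, that checks whether a local TCP port can currently be bound. The helper name `port_is_free` is hypothetical and is not part of Redis.

```python
import socket

def port_is_free(host: str, port: int) -> bool:
    """Return True if a TCP socket can be bound to (host, port) right now."""
    # SO_REUSEADDR is deliberately NOT set: on Windows it can let a second
    # socket bind a port that is already in use, which would hide the problem.
    with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
        try:
            sock.bind((host, port))
        except OSError:
            return False  # another socket already owns this address/port
    return True

if __name__ == "__main__":
    host, port = "127.0.0.1", 6379
    if port_is_free(host, port):
        print(f"{host}:{port} is free; redis-server should be able to bind it")
    else:
        print(f"{host}:{port} is already in use; stop the process holding it first")
```

If the check reports the port as in use, find and stop the process that owns it, then start Redis again.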

[Solved] K8s Error: ERROR: Unable to access datastore to query node configuration

When starting the kube-calico service in K8s, the following error is reported:

Skipping datastore connection test

ERROR: Unable to access datastore to query node configuration

Terminating

Calico node failed to start

Solution:

The main cause of this problem is that the firewall on the primary node is enabled. Turn off the firewall on the primary node (that is, the server where etcd is installed):

systemctl stop firewalld

Other possible causes:

1. The etcd address in the Calico configuration file is incorrect.

2. The etcd service is not running.
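The firewall and both causes listed above have the same effect: the node cannot open a TCP connection to etcd. Here is a minimal Python sketch you can run on a node to test that connection. The address is a placeholder, and port 2379 is assumed because it is etcd's default client port. Use the values from your Calico configuration.

```python
import socket

def can_reach(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to (host, port) succeeds within timeout seconds."""
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections (nothing listening, e.g. etcd stopped),
        # timeouts (often a firewall dropping packets) and DNS failures.
        return False
```

For example, call `can_reach("192.0.2.10", 2379)` with your own etcd address. A result of False that comes back immediately usually means nothing is listening on that port. A result of False after the full timeout points more toward a firewall.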

Installing software with brew cask reports: Error: Unknown command: cask


Problem:

➜ /Users/test > brew cask install mounty

Error: Unknown command: cask


Solution:

Use brew install --cask mounty instead:

➜ /Users/test >brew install --cask mounty

Updating Homebrew...

Reference: Installing software through brew cask on Mac prompts "Error: Unknown command: cask"

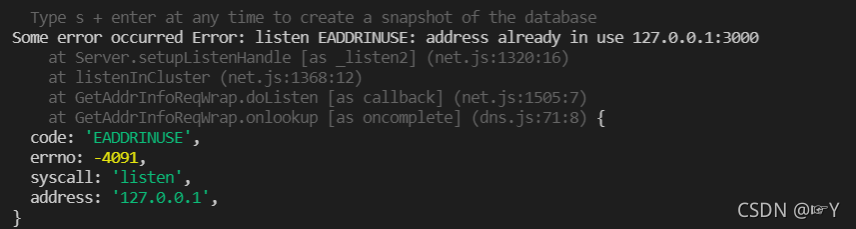

Some error occurred Error: listen EADDRINUSE: address already in use 127.0.0.1:3000

This error means that port 3000 is already occupied by another process.

Solution:

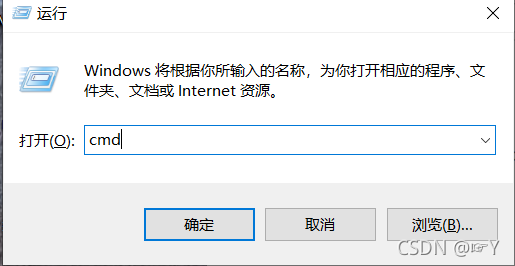

Press Win + R to open the Run dialog, type cmd and press Enter to open a Command Prompt window.

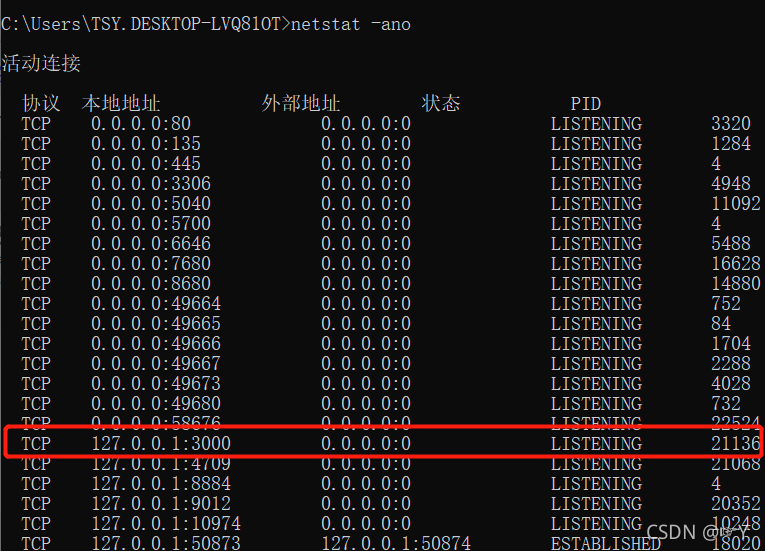

Enter netstat -ano to list the ports in use, together with the PID of the process holding each one.

The entry marked in red shows that the port is occupied.

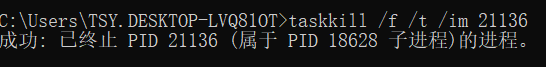

In CMD, run taskkill /F /T /PID followed by the PID (the last column of the netstat output) of the process occupying the port. This ends that process and frees the port.

After that, npm run serve runs normally.
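You can also look up the PID from a script instead of reading the netstat output by eye. Here is a minimal Python sketch that scans `netstat -ano` text for a local port and returns the PIDs of the matching TCP rows. It assumes the usual Windows column layout (Proto, Local Address, Foreign Address, State, PID). The helper names are hypothetical.

```python
import subprocess

def pids_on_port(netstat_output: str, port: int) -> set[int]:
    """Return PIDs of TCP rows whose local address ends with :<port>."""
    pids = set()
    for line in netstat_output.splitlines():
        cols = line.split()
        # Expected TCP row: Proto, Local Address, Foreign Address, State, PID
        if len(cols) == 5 and cols[0].upper() == "TCP":
            local_addr, pid = cols[1], cols[4]
            # rsplit keeps IPv6 addresses such as [::1]:3000 working
            if local_addr.rsplit(":", 1)[-1] == str(port) and pid.isdigit():
                # PID 0 shows up on TIME_WAIT rows and is not a real owner
                if int(pid) != 0:
                    pids.add(int(pid))
    return pids

def netstat_ano() -> str:
    """Run `netstat -ano` (Windows) and return its text output."""
    return subprocess.run(["netstat", "-ano"], capture_output=True, text=True).stdout
```

For example, `pids_on_port(netstat_ano(), 3000)` returns the PIDs to pass to `taskkill /F /T /PID <pid>`.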

error: ‘CLOCK_MONOTONIC’ undeclared (first use in this function)

Error message:

/home/xx/test/main.c: In function ‘main’:

/home/xx/test/main.c:37:21: error: storage size of ‘start’ isn’t known

37 | struct timespec start, end; //nanoseconds

| ^~~~~

/home/xx/test/main.c:37:28: error: storage size of ‘end’ isn’t known

37 | struct timespec start, end; //nanoseconds

| ^~~

/home/xx/test/main.c:43:5: warning: implicit declaration of function ‘clock_gettime’ [-Wimplicit-function-declaration]

43 | clock_gettime(CLOCK_MONOTONIC, &start);

| ^~~~~~~~~~~~~

/home/xx/test/main.c:43:19: error: ‘CLOCK_MONOTONIC’ undeclared (first use in this function)

43 | clock_gettime(CLOCK_MONOTONIC, &start);

| ^~~~~~~~~~~~~~~

/home/xx/test/main.c:43:19: note: each undeclared identifier is reported only once for each function it appears in

make[2]: *** [CMakeFiles/WBSM4.dir/build.make:63: CMakeFiles/xx.dir/test/main.c.o] Error 1

make[1]: *** [CMakeFiles/Makefile2:78: CMakeFiles/xx.dir/all] Error 2

make: *** [Makefile:84: all] Error 2

Solution:

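Add a compile option to the CMakeLists.txt file:

add_compile_options(-D_POSIX_C_SOURCE=199309L)

Defining _POSIX_C_SOURCE as 199309L (or higher) makes <time.h> declare clock_gettime() and CLOCK_MONOTONIC. In a strict ISO C mode such as -std=c99, glibc does not expose these POSIX declarations unless a feature-test macro like this one is defined.

Solution sources: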

c++ - error: ‘CLOCK_MONOTONIC’ undeclared (first use in this function) - Stack Overflow

error: ‘CLOCK_MONOTONIC’ undeclared problem solving, Whahu 1989 column, CSDN blog

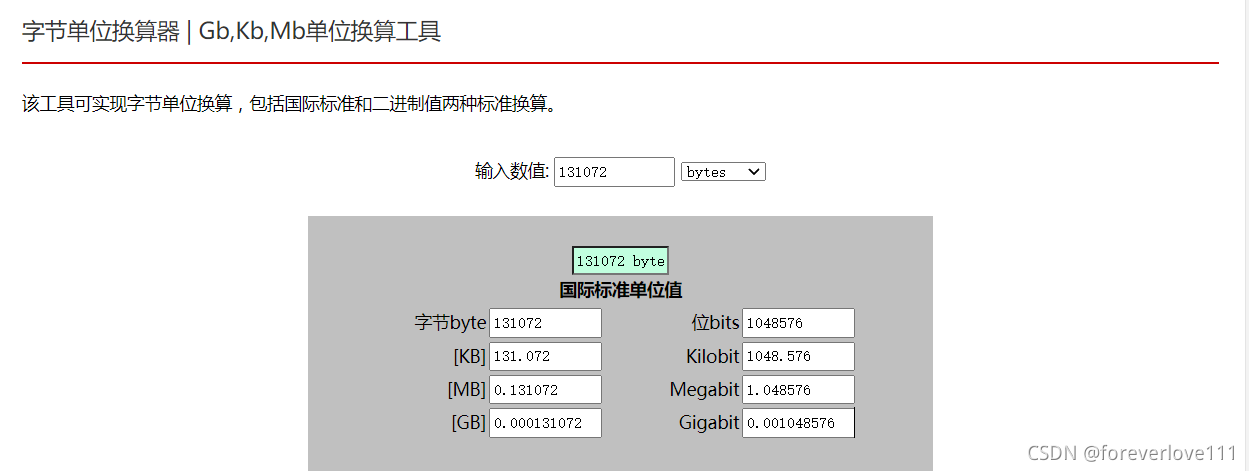

Error: The size of the connection buffer (131072) was not large enough

When using R for GEO data mining and analysis, I tried to download GEO data online with

gset <- getGEO("GSE94994", GSEMatrix = TRUE, AnnotGPL = FALSE)

and the following error was reported:

Found 1 file(s)

GSE94994_series_matrix.txt.gz

Using locally cached version: C:\Users\ENMONS~1\AppData\Local\Temp\Rtmpe27iLR/GSE94994_series_matrix.txt.gz

Error: The size of the connection buffer (131072) was not large enough

to fit a complete line:

* Increase it by setting `Sys.setenv("VROOM_CONNECTION_SIZE")`

The default connection buffer size is 131072 bytes, that is 128 KiB (about 0.131 MB).

However, at least one line in the downloaded file is longer than 131072 bytes, so the buffer size has to be increased before the file can be read.

Solution:
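The limit applies to a single line, so one way to choose a value is to measure the longest line in the cached file. Here is a minimal Python sketch using the standard gzip module. The path to pass in is whatever getGEO reports as the locally cached version, and the helper name is hypothetical.

```python
import gzip

def longest_line_bytes(path: str) -> int:
    """Return the length in bytes of the longest line in a gzip-compressed text file."""
    longest = 0
    # Binary mode so lengths are counted in bytes, like the connection buffer.
    with gzip.open(path, "rb") as fh:
        for line in fh:
            longest = max(longest, len(line))
    return longest
```

If the result is above 131072, set VROOM_CONNECTION_SIZE in R to a value comfortably larger than it. The post uses 99999999.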

Sys.setenv("VROOM_CONNECTION_SIZE"=99999999)

Code implementation:

> gset <- getGEO("GSE94994", GSEMatrix =TRUE, AnnotGPL=FALSE)

Found 1 file(s)

GSE94994_series_matrix.txt.gz

Using locally cached version: C:\Users\ENMONS~1\AppData\Local\Temp\Rtmpe27iLR/GSE94994_series_matrix.txt.gz

Error: The size of the connection buffer (111) was not large enough

to fit a complete line:

* Increase it by setting `Sys.setenv("VROOM_CONNECTION_SIZE")`

> Sys.setenv("VROOM_CONNECTION_SIZE"=99999999)

> gset <- getGEO("GSE94994", GSEMatrix =TRUE, AnnotGPL=FALSE)

Found 1 file(s)

GSE94994_series_matrix.txt.gz

Using locally cached version: C:\Users\ENMONS~1\AppData\Local\Temp\Rtmpe27iLR/GSE94994_series_matrix.txt.gz

Rows: 18 Columns: 160

0s-- Column specification --------------------------------------------------------------------------------------------------------

Delimiter: "\t"

chr (1): ID_REF

dbl (159): GSM2493904, GSM2493905, GSM2493906, GSM2493907, GSM2493908, GSM2493909, GSM2493910, GSM2493911, GSM2493912, GSM24...

i Use `spec()` to retrieve the full column specification for this data.

i Specify the column types or set `show_col_types = FALSE` to quiet this message.

Using locally cached version of GPL23075 found here:

C:\Users\ENMONS~1\AppData\Local\Temp\Rtmpe27iLR/GPL23075.soft

Vue CLI 4: creating a project from CMD fails with "ERROR command failed: npm install --loglevel error"

Solution: on the CMD command line, enter npm cache clean --force

Vue project startup error: cannot find module XXX

Cause: a module that the project depends on cannot be found

Solution:

1. Delete the node_modules folder, where the modules are stored.

2. Clear the cache with npm cache clean.

If an error is reported, force the cleanup with npm cache clean --force.

If an error is still reported, delete the package-lock.json file.

3. Reinstall the modules with npm install (the package-lock.json file is regenerated automatically).

Then run npm run dev again.

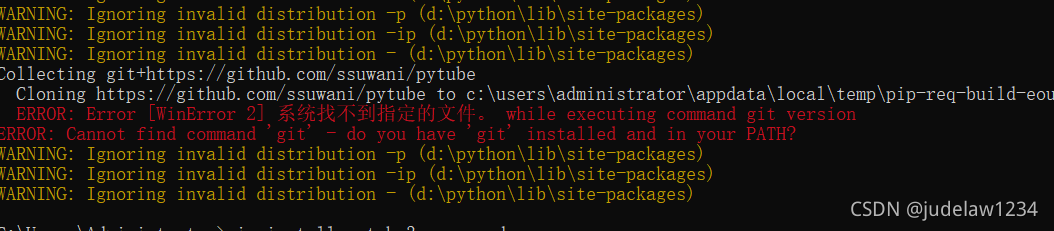

ERROR: Could not find a version that satisfies the requirement pytube (from versions: none)ERROR: N

This problem may be related to the package version. Install pytube3 and upgrade it:

pip install pytube3 --upgrade

That is, just add --upgrade after the package name.

KAFKA – ERROR Failed to write meta.properties due to (kafka.server.BrokerMetadataCheckpoint)

I set up a Kafka source-code reading environment on Windows 10 and encountered the error below. What is wrong? I am new to Kafka.

[2021-04-29 19:57:42,957] ERROR Failed to write meta.properties due to (kafka.server.BrokerMetadataCheckpoint)

java.nio.file.AccessDeniedException: C:\Users\a\workspace\kafka\logs

at sun.nio.fs.WindowsException.translateToIOException(WindowsException.java:83)

at sun.nio.fs.WindowsException.rethrowAsIOException(WindowsException.java:97)

at sun.nio.fs.WindowsException.rethrowAsIOException(WindowsException.java:102)

at sun.nio.fs.WindowsFileSystemProvider.newFileChannel(WindowsFileSystemProvider.java:115)

[2021-04-29 19:57:42,973] ERROR [KafkaServer id=0] Fatal error during KafkaServer startup. Prepare to shutdown (kafka.server.KafkaServer)

java.nio.file.AccessDeniedException: C:\Users\a\workspace\kafka\logs

at sun.nio.fs.WindowsException.translateToIOException(WindowsException.java:83)

at sun.nio.fs.WindowsException.rethrowAsIOException(WindowsException.java:97)

at sun.nio.fs.WindowsException.rethrowAsIOException(WindowsException.java:102)

at sun.nio.fs.WindowsFileSystemProvider.newFileChannel(WindowsFileSystemProvider.java:115)

And another error below:

[2021-04-29 19:57:43,821] ERROR Error while writing to checkpoint file C:\Users\a\workspace\kafka\logs\recovery-point-offset-checkpoint (kafka.server.LogDirFailureChannel)

java.nio.file.AccessDeniedException: C:\Users\a\workspace\kafka\logs

at sun.nio.fs.WindowsException.translateToIOException(WindowsException.java:83)

at sun.nio.fs.WindowsException.rethrowAsIOException(WindowsException.java:97)

at sun.nio.fs.WindowsException.rethrowAsIOException(WindowsException.java:102)

at sun.nio.fs.WindowsFileSystemProvider.newFileChannel(WindowsFileSystemProvider.java:115)

[2021-04-29 19:57:43,823] ERROR Disk error while writing recovery offsets checkpoint in directory C:\Users\a\workspace\kafka\logs: Error while writing to checkpoint file C:\Users\a\workspace\kafka\logs\recovery-point-offset-checkpoint (kafka.log.LogManager)

[2021-04-29 19:57:43,833] ERROR Error while writing to checkpoint file C:\Users\a\workspace\kafka\logs\log-start-offset-checkpoint (kafka.server.LogDirFailureChannel)

java.nio.file.AccessDeniedException: C:\Users\a\workspace\kafka\logs

at sun.nio.fs.WindowsException.translateToIOException(WindowsException.java:83)

at sun.nio.fs.WindowsException.rethrowAsIOException(WindowsException.java:97)

at sun.nio.fs.WindowsException.rethrowAsIOException(WindowsException.java:102)

at sun.nio.fs.WindowsFileSystemProvider.newFileChannel(WindowsFileSystemProvider.java:115)

[2021-04-29 19:57:43,834] ERROR Disk error while writing log start offsets checkpoint in directory C:\Users\a\workspace\kafka\logs: Error while writing to checkpoint file C:\Users\a\workspace\kafka\logs\log-start-offset-checkpoint (kafka.log.LogManager)

I downgraded to the Kafka 2.8.1 version (Index of /kafka/2.8.1) and after that everything is working perfectly.


This is a common error when log retention happens.

Kafka doesn’t have good support for Windows filesystems.

You can use WSL2 or Docker to work around these limitations.

This seems to be a bug affecting some Kafka versions. Try installing kafka_2.12-2.8.1.tgz version. It will solve your problem.

I have tried kafka_2.12-3.0.0.tgz and the same issue occurred. It seems 2.12-3.0.0 has issues with Windows.

Try downgrading to kafka_2.12-2.8.1.tgz. This resolved all the issues and everything works fine.

CompilationFailureException: Compilation failure error: cannot access NotThreadSafe

Error content:

Caused by: org.apache.maven.plugin.compiler.CompilationFailureException: Compilation failure

error: cannot access NotThreadSafe

Cause: the project declared the following dependencies:

<dependency>

<groupId>org.apache.httpcomponents</groupId>

<artifactId>httpclient</artifactId>

<version>4.5.2</version>

</dependency>

<dependency>

<groupId>org.apache.httpcomponents</groupId>

<artifactId>httpcore</artifactId>

<version>4.4.5</version>

</dependency>

The versions of the httpclient and httpcore packages do not match.

Be careful! Many online posts say that downgrading httpcore to 4.4.4 solves the problem, because httpclient 4.5.2 and httpcore 4.4.4 match.

However, httpclient versions earlier than 4.5.13 have a security vulnerability of their own.

For details of the httpclient vulnerability, see: https://github.com/advisories/GHSA-7r82-7xv7-xcpj

Apache HttpClient versions prior to version 4.5.13 and 5.0.3 can misinterpret malformed authority component in request URIs passed to the library as java.net.URI object and pick the wrong target host for request execution.

Therefore, upgrade httpclient to 4.5.13 instead. It works with httpcore 4.4.5, so it satisfies both requirements.

Correct dependency declaration:

<dependency>

<groupId>org.apache.httpcomponents</groupId>

<artifactId>httpclient</artifactId>

<version>4.5.13</version>

</dependency>

<dependency>

<groupId>org.apache.httpcomponents</groupId>

<artifactId>httpcore</artifactId>

<version>4.4.5</version>

</dependency>
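You can catch the vulnerable range before it reaches a build by checking the declared versions mechanically. Here is a minimal Python sketch that reads a pom.xml with the standard library. It reports any httpclient version below 4.5.13, the fixed 4.x release named in the advisory quoted above. It only handles literal version numbers. It does not resolve ${...} properties or versions inherited from a parent POM. The function names are hypothetical.

```python
import xml.etree.ElementTree as ET

MIN_SAFE = (4, 5, 13)  # first 4.x httpclient release outside the advisory's range

def _local(tag: str) -> str:
    """Strip the XML namespace, e.g. '{http://maven.apache.org/POM/4.0.0}version' -> 'version'."""
    return tag.rsplit("}", 1)[-1]

def vulnerable_httpclient(pom_text: str) -> list[str]:
    """Return declared httpclient versions that are lower than 4.5.13."""
    found = []
    root = ET.fromstring(pom_text)
    for dep in root.iter():
        if _local(dep.tag) != "dependency":
            continue
        # Map child element names (groupId, artifactId, version) to their text.
        fields = {_local(child.tag): (child.text or "").strip() for child in dep}
        if (fields.get("groupId") == "org.apache.httpcomponents"
                and fields.get("artifactId") == "httpclient"):
            version = fields.get("version", "")
            try:
                parsed = tuple(int(part) for part in version.split("."))
            except ValueError:
                continue  # ${property} or qualified versions are not compared here
            if parsed < MIN_SAFE:
                found.append(version)
    return found
```

Running it against the first dependency list in this post would flag 4.5.2. It would flag nothing for the corrected declaration.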