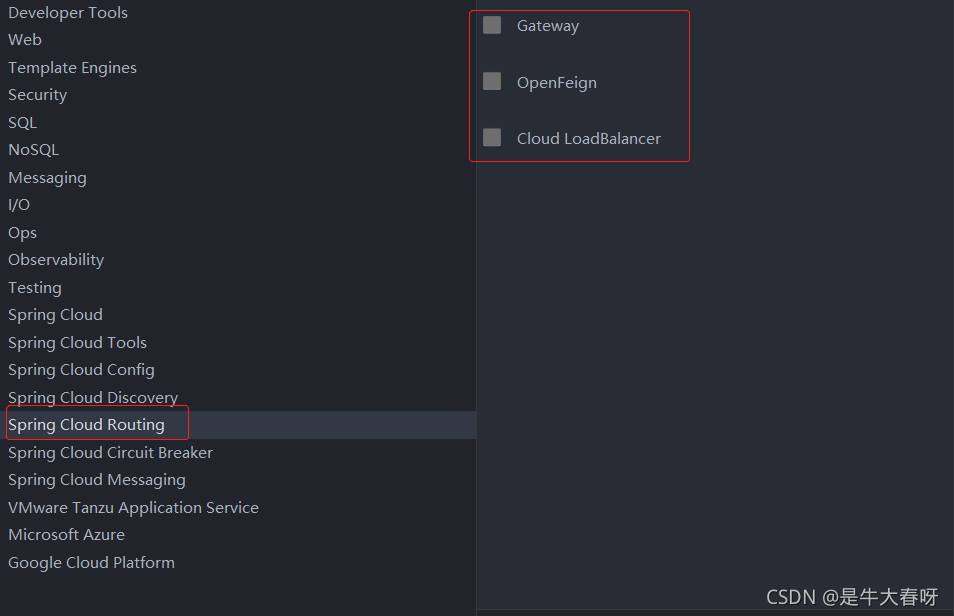

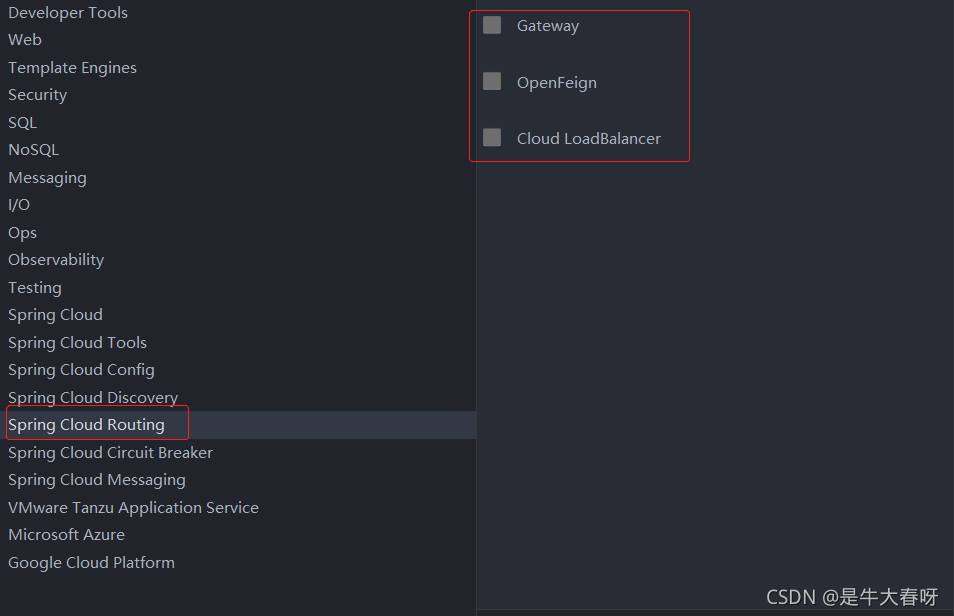

When creating the Zuul project, I found that the Spring Cloud Routing category in the project wizard does not list Zuul, so I clicked Next without selecting it. I figured the Zuul dependency could be introduced by writing the dependency directly in the POM file.

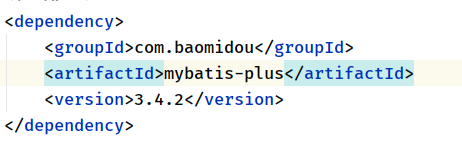

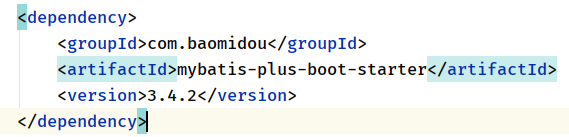

Then I added the following dependency to the POM file:

    <dependency>
        <groupId>org.springframework.cloud</groupId>
        <artifactId>spring-cloud-starter-netflix-zuul</artifactId>
        <version>2.2.10.RELEASE</version>
    </dependency>

Then I added @EnableZuulProxy to the startup class. When I started the application, an error was reported.

Solution:

①: configure the version by adding the spring-cloud.version property

    <properties>
        <java.version>1.8</java.version>
        <spring-cloud.version>2021.0.0-RC1</spring-cloud.version>
    </properties>

②: add unified version configuration

    <dependencyManagement>
        <dependencies>
            <dependency>
                <groupId>org.springframework.cloud</groupId>
                <artifactId>spring-cloud-dependencies</artifactId>
                <version>${spring-cloud.version}</version>
                <type>pom</type>
                <scope>import</scope>
            </dependency>
        </dependencies>
    </dependencyManagement>

③: add the repository configuration (2021.0.0-RC1 is a release candidate, which is published to the Spring milestone repository)

    <repositories>
        <repository>
            <id>spring-milestones</id>
            <name>Spring Milestones</name>
            <url>https://repo.spring.io/milestone</url>
            <snapshots>
                <enabled>false</enabled>
            </snapshots>
        </repository>
    </repositories>

Steps ①, ② and ③ all seem to be required; if any one of them is missing, the project will not start.

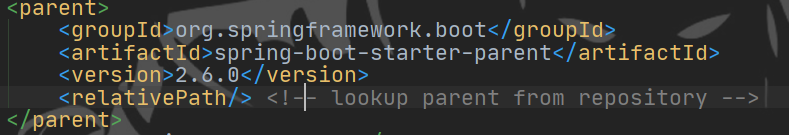

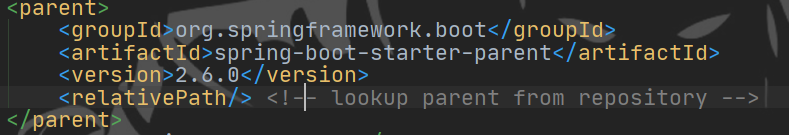

(In fact, the underlying problem is that the Spring Boot version conflicts with the Spring Cloud version.)

If we open the Zuul dependency, we can see that it already pulls in its own Spring Boot starter.

Therefore, one option is to remove the parent tag so that the parent's Spring Boot version is not introduced at all.

However, I don't think removing the parent is a good idea. With the parent in place, fewer versions have to be declared, and version conflicts among the dependencies it manages do not need to be considered. So, rather than removing the parent tag, we can choose Spring Boot and Spring Cloud versions that do not conflict with each other.
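To make the idea of "versions that do not conflict" more concrete, here is a minimal sketch of a compatibility lookup. The class name CompatibilityCheck and its methods are hypothetical, and the table is only my recollection of the Spring Boot lines that each Spring Cloud release train is generally documented to support, so treat it as an assumption and check the official compatibility table before relying on it.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper, not part of Spring: looks up whether a Spring Boot
// version falls on a minor line that a Spring Cloud release train supports.
public class CompatibilityCheck {

    // Assumed mapping: release train -> supported Spring Boot "major.minor" lines.
    private static final Map<String, List<String>> SUPPORTED_BOOT = new LinkedHashMap<>();
    static {
        SUPPORTED_BOOT.put("Finchley", Arrays.asList("2.0"));
        SUPPORTED_BOOT.put("Greenwich", Arrays.asList("2.1"));
        SUPPORTED_BOOT.put("Hoxton", Arrays.asList("2.2", "2.3"));
        SUPPORTED_BOOT.put("2020.0", Arrays.asList("2.4", "2.5"));
        SUPPORTED_BOOT.put("2021.0", Arrays.asList("2.6", "2.7"));
    }

    // Reduces a version such as "2.0.6.RELEASE" to its "major.minor" line ("2.0").
    public static String minorLine(String version) {
        String[] parts = version.split("\\.");
        if (parts.length < 2) {
            throw new IllegalArgumentException("Unexpected version format: " + version);
        }
        return parts[0] + "." + parts[1];
    }

    // True when the train is known and lists the Boot version's minor line.
    public static boolean isCompatible(String train, String bootVersion) {
        List<String> lines = SUPPORTED_BOOT.get(train);
        return lines != null && lines.contains(minorLine(bootVersion));
    }

    public static void main(String[] args) {
        // Boot 2.0.6.RELEASE against the Finchley train
        System.out.println(isCompatible("Finchley", "2.0.6.RELEASE")); // prints true
        // Boot 2.0.6.RELEASE against the 2021.0 train
        System.out.println(isCompatible("2021.0", "2.0.6.RELEASE"));   // prints false
    }
}
```

Under this assumed table, Spring Boot 2.0.6.RELEASE lines up with the Finchley train and not with 2021.0. As far as I know, the 2.0.x Zuul starter belongs to the Finchley line, which would explain why pairing it with a 2.0.x parent avoids the conflict.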

The complete POM configuration is attached below:

    <?xml version="1.0" encoding="UTF-8"?>
    <project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
             xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">
        <modelVersion>4.0.0</modelVersion>
        <parent>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-parent</artifactId>
            <version>2.0.6.RELEASE</version>
            <relativePath/> <!-- lookup parent from repository -->
        </parent>
        <groupId>cn.itcast.zuul</groupId>
        <artifactId>itcast-zuul</artifactId>
        <version>0.0.1-SNAPSHOT</version>
        <name>itcast-zuul</name>
        <description>Demo project for Spring Boot</description>
        <properties>
            <java.version>1.8</java.version>
        </properties>
        <dependencies>
            <dependency>
                <groupId>org.springframework.cloud</groupId>
                <artifactId>spring-cloud-starter-netflix-zuul</artifactId>
                <version>2.0.2.RELEASE</version>
            </dependency>
        </dependencies>
    </project>


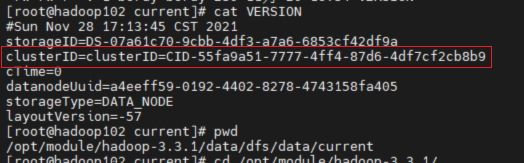

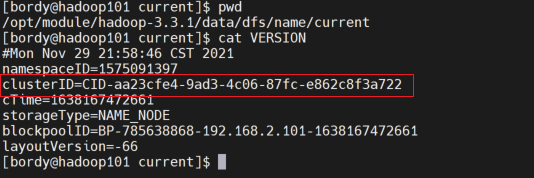
