1. Scenario

Nginx separates the front end from the back end: it serves the static front-end pages itself and acts as a reverse proxy that distributes API requests across the back-end service cluster.

2. Nginx configuration example

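    # Back-end service cluster; requests are balanced round-robin by default.
    # After 3 failed attempts within 30s a server is skipped for the next 30s.
    upstream portal-system {
        server 127.0.0.1:8061 max_fails=3 fail_timeout=30s;
        server 172.31.88.30:8061 max_fails=3 fail_timeout=30s;
    }

    server {
        listen 80;
        server_name 47.102.168.177;
        root /opt/pages/dispatch-portal-system/;

        # Default: anything not matched by a more specific location goes to the cluster.
        location / {
            proxy_set_header Host $host:$server_port;
            proxy_pass http://portal-system;
        }

        # /images/x.png is read from /opt/images/dispatch-portal-system/x.png.
        location /images/ {
            alias /opt/images/dispatch-portal-system/;
        }

        location /favicon.ico {
            root /opt/images/dispatch-portal-system/;
        }

        # Pinned to the local node instead of the cluster. Because proxy_pass ends
        # with "/", the matched /api/user/updateImage/ prefix is replaced by "/"
        # before the request is forwarded.
        location /api/user/updateImage/ {
            proxy_set_header Host $host:$server_port;
            proxy_pass http://127.0.0.1:8061/;
        }

        # Entry page: served from disk and sent with caching disabled.
        location = / {
            root /opt/pages/dispatch-portal-system/;
            add_header Cache-Control "no-cache, no-store";
        }

        location /index.html {
            root /opt/pages/dispatch-portal-system/;
            add_header Cache-Control "no-cache, no-store";
        }

        # Static front-end assets.
        location /static/ {
            root /opt/pages/dispatch-portal-system/;
        }
    }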
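
To see which location block handles a given request, the matching rules can be modeled in a few lines. The following is only a rough Python sketch, not Nginx code: the `LOCATIONS` table and the functions `select_location` and `forwarded_path` are hypothetical names introduced for illustration. It assumes the URI starts with `/` and covers only what this configuration uses (one exact-match location plus prefix locations, no regex locations). An exact match wins first; otherwise the longest matching prefix is chosen, regardless of the order in the file.

```python
# Hypothetical model of the locations in the server block above.
# "=" marks an exact-match location, "" marks a plain prefix location.
LOCATIONS = [
    ("", "/"),
    ("", "/images/"),
    ("", "/favicon.ico"),
    ("", "/api/user/updateImage/"),
    ("=", "/"),
    ("", "/index.html"),
    ("", "/static/"),
]


def select_location(uri):
    """Return the location that would handle `uri` (regex locations not modeled)."""
    # Step 1: an exact-match location wins immediately.
    for modifier, path in LOCATIONS:
        if modifier == "=" and uri == path:
            return "= " + path
    # Step 2: otherwise the longest matching prefix is used.
    prefixes = [path for modifier, path in LOCATIONS
                if modifier == "" and uri.startswith(path)]
    return max(prefixes, key=len)


def forwarded_path(uri, location_prefix, proxy_pass_path=None):
    """Return the path sent upstream by proxy_pass.

    proxy_pass_path is the URI part of the proxy_pass directive, or None when
    the directive has no URI part (as in `proxy_pass http://portal-system;`).
    """
    if proxy_pass_path is None:
        # No URI part: the request path is passed through unchanged.
        return uri
    # URI part present: the matched location prefix is replaced by it.
    return proxy_pass_path + uri[len(location_prefix):]


if __name__ == "__main__":
    for uri in ["/", "/index.html", "/static/js/app.js",
                "/api/user/login", "/api/user/updateImage/avatar"]:
        print(uri, "->", select_location(uri))
```

Under this model, `/api/user/login` falls through to `location /` and reaches the cluster with its path unchanged, while `/api/user/updateImage/avatar` is sent to `127.0.0.1:8061` as `/avatar` because that `proxy_pass` ends with `/`. If the back end expects the full path, dropping the trailing slash from that `proxy_pass` would preserve it.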

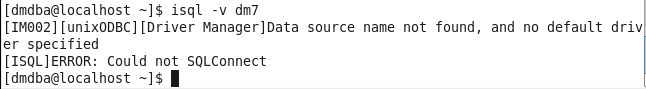

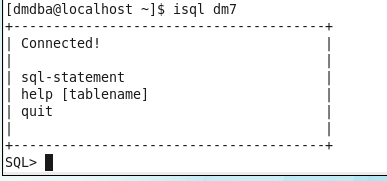

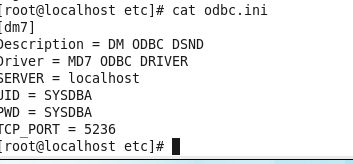

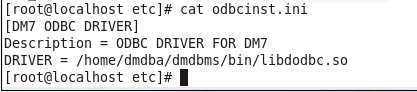

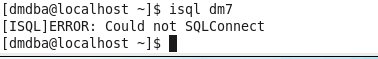

3. Test the ODBC connection
