Today my program kept failing with an error saying that CUDA was out of memory. After a long time debugging, it turned out to be a problem with which GPUs the process could see.

At first I suspected that the graphics cards on the server were already in use, but when I checked `nvidia-smi` I found that none of the three GPUs was being used, so that could not be the cause. So why the error?

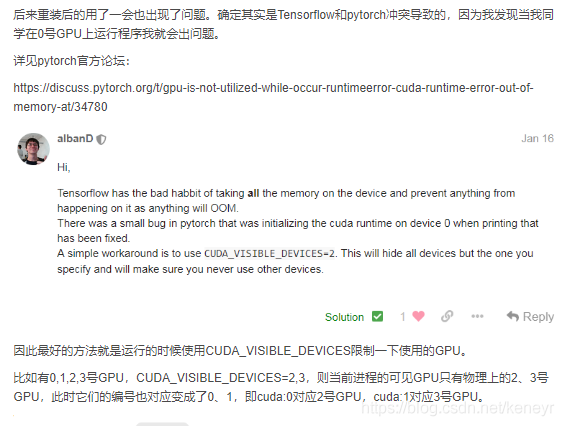
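The same check can be scripted. Below is a minimal sketch, assuming `nvidia-smi` is on the `PATH`; the helper names `parse_gpu_memory` and `query_gpu_memory` are made up for this example and are not part of any library.

```python
import shutil
import subprocess


def parse_gpu_memory(csv_text):
    """Parse 'index, used, total' rows (values in MiB) into a list of dicts."""
    rows = []
    for line in csv_text.strip().splitlines():
        index, used, total = (field.strip() for field in line.split(","))
        rows.append(
            {"index": int(index), "used_mib": int(used), "total_mib": int(total)}
        )
    return rows


def query_gpu_memory():
    """Return per-GPU memory usage, or None if nvidia-smi cannot be run."""
    if shutil.which("nvidia-smi") is None:
        return None
    result = subprocess.run(
        [
            "nvidia-smi",
            "--query-gpu=index,memory.used,memory.total",
            "--format=csv,noheader,nounits",
        ],
        capture_output=True,
        text=True,
    )
    if result.returncode != 0:
        return None
    return parse_gpu_memory(result.stdout)


usage = query_gpu_memory()
if usage is None:
    print("nvidia-smi is not available on this machine")
else:
    for gpu in usage:
        print(f"GPU {gpu['index']}: {gpu['used_mib']} / {gpu['total_mib']} MiB used")
```

If every GPU reports almost no memory in use, as in my case, the error is probably not caused by another job occupying the card.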

Others say it is caused by a conflict between the TensorFlow and PyTorch versions. But I don't even have TensorFlow installed.

In the end I referred to this post: http://www.cnblogs.com/jisongxie/p/10276742.html

Yes, like that blogger, I was also using GPU 0, yet for some reason my PyTorch process could only see physical GPU 2. I don't have a GPU 3 on this machine, so is that where things went wrong?

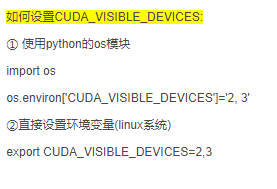
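One thing that can explain this kind of confusion: CUDA renumbers the visible devices starting from 0, so the index in `cuda:N` is a logical index into `CUDA_VISIBLE_DEVICES`, not a physical GPU number. The sketch below only illustrates that mapping; `physical_gpu_for` is a hypothetical helper, and it assumes the variable holds plain integer ids. I can't say for certain this is exactly what happened on my server.

```python
def physical_gpu_for(logical_index, cuda_visible_devices):
    """Map the N in 'cuda:N' to a physical GPU id.

    cuda_visible_devices is the value of CUDA_VISIBLE_DEVICES,
    for example "2" or "0,1,2" (integer ids only, by assumption).
    """
    visible = [int(tok) for tok in cuda_visible_devices.split(",") if tok.strip()]
    if not 0 <= logical_index < len(visible):
        raise IndexError(
            f"cuda:{logical_index} does not exist; "
            f"only {len(visible)} device(s) are visible"
        )
    return visible[logical_index]


# With only physical GPU 2 visible, cuda:0 is physical GPU 2.
print(physical_gpu_for(0, "2"))
# With all three GPUs visible, cuda:0 is physical GPU 0.
print(physical_gpu_for(0, "0,1,2"))
```

Note that by default CUDA may order devices differently from `nvidia-smi`; setting `CUDA_DEVICE_ORDER=PCI_BUS_ID` makes the two numberings agree.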

So I changed the code to set explicitly which GPU PyTorch can see on the server:

`os.environ['CUDA_VISIBLE_DEVICES'] = '0'`

After that, the program ran happily on physical GPU 0.
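For completeness, here is a minimal sketch of how the setting might sit in a script. The important assumption is ordering: the variable has to be set before CUDA is initialized, so the safest place is before `import torch`. The helper `restrict_visible_gpus` is made up for this example, and the `torch` import is guarded so the snippet also runs on a machine without PyTorch.

```python
import os


def restrict_visible_gpus(physical_ids):
    """Set CUDA_VISIBLE_DEVICES to the given physical GPU ids and return the value."""
    value = ",".join(str(i) for i in physical_ids)
    os.environ["CUDA_VISIBLE_DEVICES"] = value
    return value


# Do this before the first CUDA call (safest: before importing torch).
restrict_visible_gpus([0])

try:
    import torch
except ImportError:
    torch = None  # PyTorch not installed; nothing more to check

if torch is not None and torch.cuda.is_available():
    # Only the GPUs listed above are counted, renumbered from 0.
    print("visible GPUs:", torch.cuda.device_count())
    print("cuda:0 is", torch.cuda.get_device_name(0))
else:
    print("CUDA_VISIBLE_DEVICES =", os.environ["CUDA_VISIBLE_DEVICES"])
```

Setting the variable in the shell instead, for example `CUDA_VISIBLE_DEVICES=0 python train.py` (where `train.py` stands in for your own script), avoids the ordering concern entirely.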
