The server, which has a 10 Gbps port, has two NVMe drives installed, and they have been causing frequent problems.

The kernel log from one disk-drop incident is as follows:

Mar 10 13:10:10 10Gbps kernel: nvme nvme0: I/O 183 QID 3 timeout, aborting

Mar 10 13:10:10 10Gbps kernel: nvme nvme0: Abort status: 0x0

Mar 10 13:10:13 10Gbps kernel: nvme nvme0: I/O 384 QID 4 timeout, aborting

Mar 10 13:10:13 10Gbps kernel: nvme nvme0: Abort status: 0x0

Mar 10 13:10:14 10Gbps kernel: nvme nvme0: I/O 626 QID 5 timeout, aborting

Mar 10 13:10:14 10Gbps kernel: nvme nvme0: Abort status: 0x0

Mar 10 13:10:15 10Gbps kernel: nvme nvme0: I/O 879 QID 7 timeout, aborting

Mar 10 13:10:15 10Gbps kernel: nvme nvme0: Abort status: 0x0

Mar 10 13:10:28 10Gbps kernel: nvme nvme0: I/O 221 QID 5 timeout, aborting

Mar 10 13:10:28 10Gbps kernel: nvme nvme0: Abort status: 0x0

Mar 10 13:10:34 10Gbps kernel: nvme nvme0: I/O 510 QID 1 timeout, reset controller

Mar 10 13:10:55 10Gbps kernel: nvme nvme0: Device not ready; aborting reset, CSTS=0x1

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 271133736 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 2052266888 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 2175930376 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 7072889400 op 0x0:(READ) flags 0x80700 phys_seg 17 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 238328656 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 3954686056 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 6440531640 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 7301389616 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 6288979456 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 2149595472 op 0x0:(READ) flags 0x80700 phys_seg 6 prio class 0

Mar 10 13:11:16 10Gbps kernel: nvme nvme0: Device not ready; aborting reset, CSTS=0x1

Mar 10 13:11:16 10Gbps kernel: nvme nvme0: Removing after probe failure status: -19

Mar 10 13:11:36 10Gbps kernel: nvme nvme0: Device not ready; aborting reset, CSTS=0x1

Mar 10 13:11:36 10Gbps kernel: print_req_error: 6 callbacks suppressed

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 2000203632 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 68158880 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 4036854464 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 78773728 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 1987798736 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 1986001320 op 0x0:(READ) flags 0x1000 phys_seg 1 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 4082978496 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 5906970992 op 0x0:(READ) flags 0x1000 phys_seg 1 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 3962823040 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 6902965512 op 0x1:(WRITE) flags 0x100000 phys_seg 40 prio class 0

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): metadata I/O error in "xfs_trans_read_buf_map" at daddr 0x160153970 len 8 error 5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): metadata I/O error in "xfs_trans_read_buf_map" at daddr 0xf09d82c0 len 32 error 5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): metadata I/O error in "xfs_trans_read_buf_map" at daddr 0x98fd6a0 len 32 error 5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): metadata I/O error in "xfs_trans_read_buf_map" at daddr 0x4b1fde0 len 32 error 5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: nvme0n1: writeback error on inode 6619987520, offset 0, sector 6902965832

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): metadata I/O error in "xfs_trans_read_buf_map" at daddr 0xec33e180 len 32 error 5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_do_force_shutdown(0x2) called from line 1250 of file fs/xfs/xfs_log.c. Return address = 00000000147aed99

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): Log I/O Error Detected. Shutting down filesystem

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): Please unmount the filesystem and rectify the problem(s)

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: nvme0n1: writeback error on inode 77133302, offset 0, sector 684881640

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:39 10Gbps kernel: VFS: busy inodes on changed media or resized disk nvme0n1

Mar 10 13:11:39 10Gbps kernel: nvme nvme0: failed to set APST feature (-19)

Mar 10 13:11:39 10Gbps systemd[1]: Stopped target Local File Systems.
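The log above follows a recognizable sequence: I/O timeouts with aborts, a controller reset that fails, removal of the controller, and finally block-layer errors and an XFS shutdown. As a rough sketch (not a tool used in the original investigation), the Python below counts those stages in a syslog excerpt and translates the numeric error codes. The category names and the `summarize` and `errno_name` helpers are made up for illustration; the regular expressions are based only on the message shapes shown in this log.

```python
import errno
import re
from collections import Counter

# Category names are labels invented for this sketch; the patterns mirror
# the message shapes that appear in the log above.
PATTERNS = {
    "io_timeout_abort": re.compile(r"nvme nvme\d+: I/O \d+ QID \d+ timeout, aborting"),
    "io_timeout_reset": re.compile(r"nvme nvme\d+: I/O \d+ QID \d+ timeout, reset controller"),
    "reset_failed": re.compile(r"Device not ready; aborting reset"),
    "controller_removed": re.compile(r"Removing after probe failure"),
    "block_io_error": re.compile(r"blk_update_request: I/O error"),
    "xfs_shutdown": re.compile(r"Shutting down filesystem"),
}


def summarize(lines):
    """Count each event category and record the timestamp of its first hit."""
    counts = Counter()
    first_seen = {}
    for line in lines:
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                counts[name] += 1
                # Classic syslog timestamps ("Mar 10 13:10:10") are 15 characters.
                first_seen.setdefault(name, line[:15])
                break
    return counts, first_seen


def errno_name(code):
    """Map a kernel-style error number such as -19 or 5 to its symbolic name."""
    return errno.errorcode.get(abs(code), "UNKNOWN")


SAMPLE = """\
Mar 10 13:10:10 10Gbps kernel: nvme nvme0: I/O 183 QID 3 timeout, aborting
Mar 10 13:10:34 10Gbps kernel: nvme nvme0: I/O 510 QID 1 timeout, reset controller
Mar 10 13:10:55 10Gbps kernel: nvme nvme0: Device not ready; aborting reset, CSTS=0x1
Mar 10 13:11:16 10Gbps kernel: nvme nvme0: Removing after probe failure status: -19
Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): Log I/O Error Detected. Shutting down filesystem
""".splitlines()

if __name__ == "__main__":
    counts, first_seen = summarize(SAMPLE)
    for name, count in counts.items():
        print(f"{first_seen[name]}  {name}: {count}")
    print("status -19 is", errno_name(-19), "and error -5 is", errno_name(-5))
```

In this log, `-19` corresponds to `ENODEV` (the device is gone) and `-5` to `EIO`, which matches the order of events: the controller disappears first, and the filesystem errors follow.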

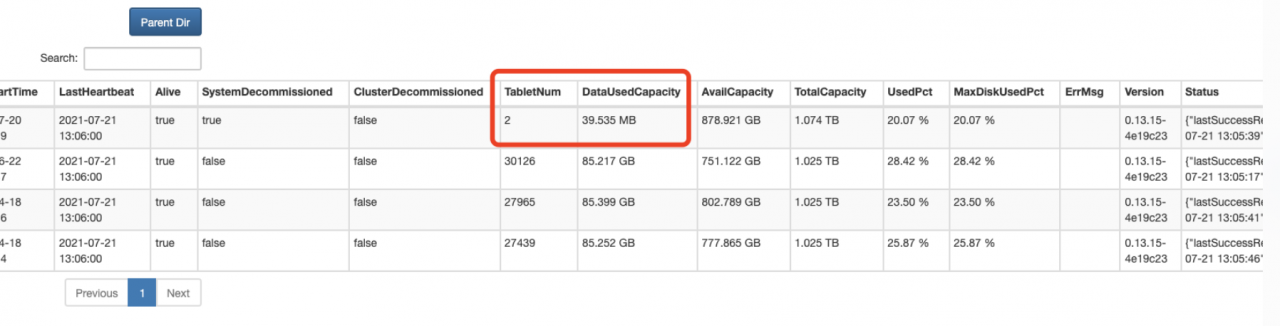

Analysis

Most failures of this kind are caused by intensive reading and writing.

This server is a CDN cache node, so its workload is read-heavy.

The NVMe temperature is relatively high. If that continues, the drive starts to throttle and then gradually fails.

In addition, these are ADATA XPG consumer drives, not enterprise-grade products.
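To confirm the temperature theory, it helps to record drive temperature alongside the kernel log. The following is a minimal sketch, assuming a Linux kernel that exposes NVMe sensors through the hwmon sysfs interface (a `name` file containing `nvme` and a `temp1_input` file in millidegrees Celsius). The function names and the 70 °C limit are hypothetical choices for illustration, not values taken from the drive's specification.

```python
from pathlib import Path


def read_nvme_temps(hwmon_root="/sys/class/hwmon"):
    """Return {hwmon_entry: temperature_in_celsius} for NVMe hwmon devices."""
    temps = {}
    root = Path(hwmon_root)
    if not root.is_dir():
        return temps  # hwmon is not available on this system
    for dev in sorted(root.iterdir()):
        name_file = dev / "name"
        temp_file = dev / "temp1_input"
        if not (name_file.is_file() and temp_file.is_file()):
            continue
        if name_file.read_text().strip() != "nvme":
            continue  # skip CPU, chipset, and other sensors
        # temp1_input is reported in millidegrees Celsius.
        temps[dev.name] = int(temp_file.read_text().strip()) / 1000.0
    return temps


def overheated(temps, limit_c=70.0):
    """Return the entries at or above limit_c (an assumed threshold)."""
    return {name: t for name, t in temps.items() if t >= limit_c}


if __name__ == "__main__":
    readings = read_nvme_temps()
    for name, celsius in readings.items():
        print(f"{name}: {celsius:.1f} C")
    hot = overheated(readings)
    if hot:
        print("Above the assumed limit:", ", ".join(hot))
```

Run from cron or a systemd timer, the output can be lined up against the timestamps of the I/O timeouts to see whether drops coincide with high readings.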

Solution

1. Replace the drives with Samsung PRO series models, because the other two, which use Samsung products, have had no problems.

2. Try to improve heat dissipation.