Error:

```
20:1  error  Expected indentation of 2 spaces but found 4  indent
21:1  error  Expected indent
```

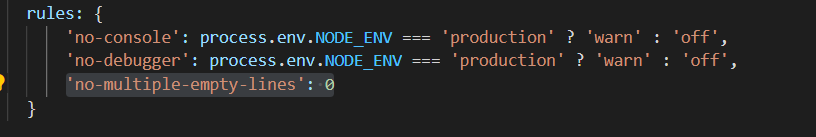

Solution:

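Open `.eslintrc.js` and, in its `rules` section, turn off the rule named at the end of the message (`indent`):

`'indent': 0`

The similar setting `'no-multiple-empty-lines': 0` only disables the check for consecutive blank lines. On its own it does not silence indentation errors.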

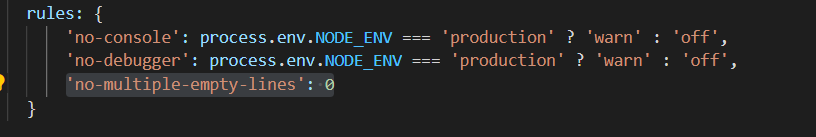


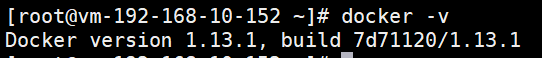

Error description

My Docker version is 1.13.1.

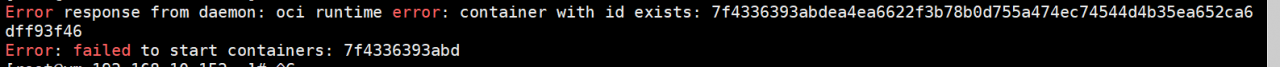

Today someone carelessly force-upgraded several libraries on the server. This brought down all my containers, and they could not be started again. The error is as follows:

```
Error response from daemon: oci runtime error: container with id exists: 7f4336393abdea4ea6622f3b78b0d755a474ec74544d4b35ea652ca6dff93f46
Error: failed to start containers: 7f4336393abd
```

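Solution: move the container's leftover runtime state out of the way, then start the container again.

```shell
# Step 1: go to the runc state directory
cd /run/runc/

# Step 2: create a backup directory and move the container's state into it
mkdir bak
mv 7f4336393abdea4ea6622f3b78b0d755a474ec74544d4b35ea652ca6dff93f46 bak/

# Step 3: start the container again
docker start 7f4336393abdea4ea6622f3b78b0d755a474ec74544d4b35ea652ca6dff93f46
```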
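The manual cleanup can also be scripted. The Python sketch below assumes, as in this case, that the stale state is a directory named after the full container ID under `/run/runc`. The helper name `backup_stale_state` is hypothetical. The script only moves the directory, so you still run `docker start` yourself afterwards.

```python
import shutil
from pathlib import Path


def backup_stale_state(container_id: str, state_root: str = "/run/runc") -> Path:
    """Move <state_root>/<container_id> into <state_root>/bak/ and return the new path.

    Hypothetical helper: assumes one state directory per container ID under
    state_root, as observed with Docker 1.13.1 in this note.
    """
    root = Path(state_root)
    src = root / container_id
    if not src.is_dir():
        raise FileNotFoundError(f"no state directory for {container_id} under {root}")
    bak = root / "bak"
    bak.mkdir(exist_ok=True)  # like `mkdir bak`, but safe to run twice
    dest = bak / container_id
    if dest.exists():
        raise FileExistsError(f"{dest} already exists; remove or rename it first")
    shutil.move(str(src), str(dest))  # like `mv <id> bak/`
    return dest
```

Run it as root with the full container ID, then start the container as before.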

After this the container should start normally.

Error message:

```
org.springframework.beans.factory.UnsatisfiedDependencyException: Error creating bean with name 'com.cy.mapper.UserMapperTests': Unsatisfied dependency expressed through field 'userMapper'; nested exception is org.springframework.beans.factory.NoSuchBeanDefinitionException: No qualifying bean of type 'com.cy.mapper.UserMapper' available: expected at least 1 bean which qualifies as autowire candidate. Dependency annotations: {@org.springframework.beans.factory.annotation.Autowired(required=true)}
    at org.springframework.beans.factory.annotation.AutowiredAnnotationBeanPostProcessor$AutowiredFieldElement.resolveFieldValue(AutowiredAnnotationBeanPostProcessor.java:659) ~[spring-beans-5.3.19.jar:5.3.19]
    at org.springframework.beans.factory.annotation.AutowiredAnnotationBeanPostProcessor$AutowiredFieldElement.inject(AutowiredAnnotationBeanPostProcessor.java:639) ~[spring-beans-5.3.19.jar:5.3.19]
    at org.springframework.beans.factory.annotation.InjectionMetadata.inject(InjectionMetadata.java:119) ~[spring-beans-5.3.19.jar:5.3.19]
    at org.springframework.beans.factory.annotation.AutowiredAnnotationBeanPostProcessor.postProcessProperties(AutowiredAnnotationBeanPostProcessor.java:399) ~[spring-beans-5.3.19.jar:5.3.19]
    at org.springframework.beans.factory.support.AbstractAutowireCapableBeanFactory.populateBean(AbstractAutowireCapableBeanFactory.java:1431) ~[spring-beans-5.3.19.jar:5.3.19]
    at org.springframework.beans.factory.support.AbstractAutowireCapableBeanFactory.autowireBeanProperties(AbstractAutowireCapableBeanFactory.java:417) ~[spring-beans-5.3.19.jar:5.3.19]
    at org.springframework.test.context.support.DependencyInjectionTestExecutionListener.injectDependencies(DependencyInjectionTestExecutionListener.java:119) ~[spring-test-5.3.19.jar:5.3.19]
    at org.springframework.test.context.support.DependencyInjectionTestExecutionListener.prepareTestInstance(DependencyInjectionTestExecutionListener.java:83) ~[spring-test-5.3.19.jar:5.3.19]
    at org.springframework.boot.test.autoconfigure.SpringBootDependencyInjectionTestExecutionListener.prepareTestInstance(SpringBootDependencyInjectionTestExecutionListener.java:43) ~[spring-boot-test-autoconfigure-2.6.7.jar:2.6.7]
    at org.springframework.test.context.TestContextManager.prepareTestInstance(TestContextManager.java:248) ~[spring-test-5.3.19.jar:5.3.19]
    at org.springframework.test.context.junit4.SpringJUnit4ClassRunner.createTest(SpringJUnit4ClassRunner.java:227) [spring-test-5.3.19.jar:5.3.19]
    at org.springframework.test.context.junit4.SpringJUnit4ClassRunner$1.runReflectiveCall(SpringJUnit4ClassRunner.java:289) [spring-test-5.3.19.jar:5.3.19]
    at org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12) [junit-4.13.2.jar:4.13.2]
    at org.springframework.test.context.junit4.SpringJUnit4ClassRunner.methodBlock(SpringJUnit4ClassRunner.java:291) [spring-test-5.3.19.jar:5.3.19]
    at org.springframework.test.context.junit4.SpringJUnit4ClassRunner.runChild(SpringJUnit4ClassRunner.java:246) [spring-test-5.3.19.jar:5.3.19]
    at org.springframework.test.context.junit4.SpringJUnit4ClassRunner.runChild(SpringJUnit4ClassRunner.java:97) [spring-test-5.3.19.jar:5.3.19]
    at org.junit.runners.ParentRunner$4.run(ParentRunner.java:331) [junit-4.13.2.jar:4.13.2]
    at org.junit.runners.ParentRunner$1.schedule(ParentRunner.java:79) [junit-4.13.2.jar:4.13.2]
    at org.junit.runners.ParentRunner.runChildren(ParentRunner.java:329) [junit-4.13.2.jar:4.13.2]
    at org.junit.runners.ParentRunner.access$100(ParentRunner.java:66) [junit-4.13.2.jar:4.13.2]
    at org.junit.runners.ParentRunner$2.evaluate(ParentRunner.java:293) [junit-4.13.2.jar:4.13.2]
    at org.springframework.test.context.junit4.statements.RunBeforeTestClassCallbacks.evaluate(RunBeforeTestClassCallbacks.java:61) [spring-test-5.3.19.jar:5.3.19]
    at org.springframework.test.context.junit4.statements.RunAfterTestClassCallbacks.evaluate(RunAfterTestClassCallbacks.java:70) [spring-test-5.3.19.jar:5.3.19]
    at org.junit.runners.ParentRunner$3.evaluate(ParentRunner.java:306) [junit-4.13.2.jar:4.13.2]
    at org.junit.runners.ParentRunner.run(ParentRunner.java:413) [junit-4.13.2.jar:4.13.2]
    at org.springframework.test.context.junit4.SpringJUnit4ClassRunner.run(SpringJUnit4ClassRunner.java:190) [spring-test-5.3.19.jar:5.3.19]
    at org.junit.runner.JUnitCore.run(JUnitCore.java:137) [junit-4.13.2.jar:4.13.2]
    at com.intellij.junit4.JUnit4IdeaTestRunner.startRunnerWithArgs(JUnit4IdeaTestRunner.java:69) [junit-rt.jar:na]
    at com.intellij.rt.junit.IdeaTestRunner$Repeater.startRunnerWithArgs(IdeaTestRunner.java:33) [junit-rt.jar:na]
    at com.intellij.rt.junit.JUnitStarter.prepareStreamsAndStart(JUnitStarter.java:235) [junit-rt.jar:na]
    at com.intellij.rt.junit.JUnitStarter.main(JUnitStarter.java:54) [junit-rt.jar:na]
Caused by: org.springframework.beans.factory.NoSuchBeanDefinitionException: No qualifying bean of type 'com.cy.mapper.UserMapper' available: expected at least 1 bean which qualifies as autowire candidate. Dependency annotations: {@org.springframework.beans.factory.annotation.Autowired(required=true)}
    at org.springframework.beans.factory.support.DefaultListableBeanFactory.raiseNoMatchingBeanFound(DefaultListableBeanFactory.java:1799) ~[spring-beans-5.3.19.jar:5.3.19]
    at org.springframework.beans.factory.support.DefaultListableBeanFactory.doResolveDependency(DefaultListableBeanFactory.java:1355) ~[spring-beans-5.3.19.jar:5.3.19]
    at org.springframework.beans.factory.support.DefaultListableBeanFactory.resolveDependency(DefaultListableBeanFactory.java:1309) ~[spring-beans-5.3.19.jar:5.3.19]
    at org.springframework.beans.factory.annotation.AutowiredAnnotationBeanPostProcessor$AutowiredFieldElement.resolveFieldValue(AutowiredAnnotationBeanPostProcessor.java:656) ~[spring-beans-5.3.19.jar:5.3.19]
    ... 30 common frames omitted
```

No qualifying bean of type 'com.cy.mapper.UserMapper' available: expected at least 1 bean which qualifies as autowire candidate. Dependency annotations: {@org.springframework.beans.factory.annotation.Autowired(required=true)}

The message shows that injecting the `userMapper` field failed because Spring found no bean of type `com.cy.mapper.UserMapper`.

Error reason:

The package path given to `@MapperScan` on the Spring Boot startup class is wrong:

```java
package com.cy;

import org.mybatis.spring.annotation.MapperScan;
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;

@SpringBootApplication
// @MapperScan loads all mapper interfaces automatically when the project starts
@MapperScan("mapper")
public class StoreApplication {

    public static void main(String[] args) {
        SpringApplication.run(StoreApplication.class, args);
    }
}
```

Solution: change `@MapperScan("mapper")` to the fully qualified package of the mapper interfaces. The missing bean is `com.cy.mapper.UserMapper`, so that package is `com.cy.mapper`: `@MapperScan("com.cy.mapper")`.

Error message:

```
[2022-04-25 23:07:20,681][ERROR][SERVER][TakeToExecute][reqsched_thread] Request failed with code: Error code(30100): Collection xxx does not exist. Use milvus.has_collection to verify whether the collection exists. You also can check whether the collection name exists.
```

Solution

When dropping, drop the index first and then the collection. In the reverse order, an error is reported.

When creating, create the collection first and then the index. In the reverse order, an error is reported.

```python
def insert_ini(self, collection_name, vectors, ids=None, partition_tag=None):
    try:
        # Drop: index first, then the collection
        if self.has_collection(collection_name):
            self.client.drop_index(collection_name)
            self.client.drop_collection(collection_name)
        # Create: collection first, then the index
        self.creat_collection(collection_name)
        self.create_index(collection_name)
        print('collection info: {}'.format(self.client.get_collection_info(collection_name)[1]))
        self.create_partition(collection_name, partition_tag)
        if vectors != -1:
            status, ids = self.client.insert(
                collection_name=collection_name,
                records=vectors,
                ids=ids,
                partition_tag=partition_tag)
            self.client.flush([collection_name])
            print(
                'Insert {} entities, there are {} entities after insert data.'.
                format(
                    len(ids), self.client.count_entities(collection_name)[1]))
            return status, ids
        else:
            return -1, ids
    except Exception as e:
        print("Milvus insert error:", e)
```
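The ordering rule can be made explicit in a small helper. This is a sketch, not the pymilvus API. `recreate_collection` and `RecordingStub` are hypothetical names. The helper takes the existence check and the four operations as callables. A recording stub stands in for the server so the call order can be checked without Milvus.

```python
from typing import Callable, List


def recreate_collection(
    name: str,
    exists: Callable[[str], bool],
    drop_index: Callable[[str], object],
    drop_collection: Callable[[str], object],
    create_collection: Callable[[str], object],
    create_index: Callable[[str], object],
) -> None:
    """Drop and rebuild a collection in the order that avoids the errors above."""
    if exists(name):
        drop_index(name)        # drop the index first ...
        drop_collection(name)   # ... then the collection
    create_collection(name)     # create the collection first ...
    create_index(name)          # ... then its index


class RecordingStub:
    """Stand-in for a Milvus client wrapper; it only records the calls it receives."""

    def __init__(self, existing: bool) -> None:
        self.existing = existing
        self.calls: List[str] = []

    def exists(self, name: str) -> bool:
        return self.existing

    def drop_index(self, name: str) -> None:
        self.calls.append("drop_index")

    def drop_collection(self, name: str) -> None:
        self.calls.append("drop_collection")

    def create_collection(self, name: str) -> None:
        self.calls.append("create_collection")

    def create_index(self, name: str) -> None:
        self.calls.append("create_index")


stub = RecordingStub(existing=True)
recreate_collection("call_12345_prd", stub.exists, stub.drop_index,
                    stub.drop_collection, stub.create_collection, stub.create_index)
print(stub.calls)
```

With the wrapper class from the code above, you could pass `self.has_collection`, `self.client.drop_index`, `self.client.drop_collection`, `self.creat_collection` and `self.create_index`. This assumes `self.has_collection` returns a plain bool, as the `if` in that code implies.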

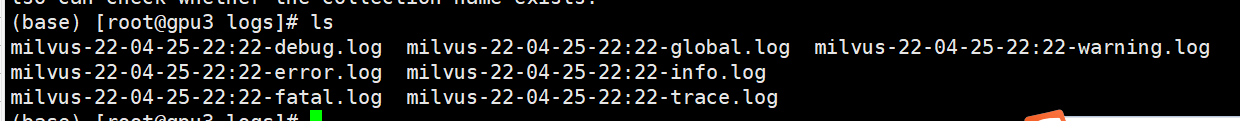

When everything is finished, check the server-side logs of each component for exceptions.

Standalone version:

```shell
cd /home/$USER/milvus/logs
cat milvus-22-04-25-22:22-error.log
```

The service is working correctly only when this error log contains no error entries.
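You can also check the log with a short Python script instead of reading it by eye. The sketch below assumes the `[timestamp][ERROR][...]` line format shown in the logs in this note. The helper names `error_lines` and `check_log` are hypothetical.

```python
from typing import Iterable, List


def error_lines(lines: Iterable[str]) -> List[str]:
    """Return the log lines tagged with the [ERROR] level."""
    return [line.rstrip("\n") for line in lines if "][ERROR][" in line]


def check_log(path: str) -> List[str]:
    """Return the error entries of one log file; an empty list means nothing was logged as an error."""
    with open(path, encoding="utf-8", errors="replace") as f:
        return error_lines(f)
```

For example, run `check_log("milvus-22-04-25-22:22-error.log")` from the logs directory. An empty result matches the "no error entries" condition above.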

Mishards cluster version (errors were still reported):

```
[2022-04-26 01:13:47,971][ERROR][SERVER][TakeToExecute][reqsched_thread] Request failed with code: Error: Collection already exists and it is in delete state, please wait a second
[2022-04-26 01:13:47,975][ERROR][SERVER][TakeToExecute][reqsched_thread] Request failed with code: Error code(30100): Collection call_12345_prd does not exist. Use milvus.has_collection to verify whether the collection exists. You also can check whether the collection name exists.
[2022-04-26 01:13:47,978][ERROR][SERVER][TakeToExecute][reqsched_thread] Request failed with code: Error code(30100): Collection call_12345_prd does not exist. Use milvus.has_collection to verify whether the collection exists. You also can check whether the collection name exists.
[2022-04-26 01:13:47,981][ERROR][SERVER][TakeToExecute][reqsched_thread] Request failed with code: Error code(30100): Collection call_12345_prd does not exist. Use milvus.has_collection to verify whether the collection exists. You also can check whether the collection name exists.
[2022-04-26 01:13:47,985][ERROR][SERVER][OnExecute][reqsched_thread] [insert][0] Collection call_12345_prd not found
[2022-04-26 01:13:47,985][ERROR][SERVER][TakeToExecute][reqsched_thread] Request failed with code: Error code(30100): Collection call_12345_prd does not exist. Use milvus.has_collection to verify whether the collection exists. You also can check whether the collection name exists.
[2022-04-26 01:13:47,987][ERROR][SERVER][TakeToExecute][reqsched_thread] Request failed with code: Error code(30100): Collection call_12345_prd does not exist. Use milvus.has_collection to verify whether the collection exists. You also can check whether the collection name exists.
[2022-04-26 01:13:47,990][ERROR][SERVER][TakeToExecute][reqsched_thread] Request failed with code: Error code(30100): Collection call_12345_prd does not exist. Use milvus.has_collection to verify whether the collection exists. You also can check whether the collection name exists.
```

Log into each node's pod and check its log files one by one:

```shell
kubectl get pods
kubectl exec -it milvus-release-mishards-767dc476bf-fxz8v -- /bin/bash
kubectl exec -it milvus-release-readonly-6cb6c5bcd9-9m4c5 -- /bin/bash
kubectl exec -it milvus-release-readonly-6cb6c5bcd9-mzrh6 -- /bin/bash
kubectl exec -it milvus-release-writable-7776777fcc-dsdmv -- /bin/bash
```

Problem analysis

`Error: error:0308010C:digital envelope routines::unsupported`

This error appears because Node.js v17 ships with OpenSSL 3.0. OpenSSL 3.0 places strict restrictions on the allowed algorithms and key sizes, which can affect parts of the ecosystem.

Some applications that worked fine before Node.js v17 may throw the exception above on v17.

For now, the problem can be worked around by running:

```shell
export NODE_OPTIONS=--openssl-legacy-provider
```
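If a Python script launches the Node build instead of a shell, you can pass the same option through the child process's environment. The sketch below uses the hypothetical helper `with_node_option`. Unlike a plain `export`, it appends to any existing `NODE_OPTIONS` instead of replacing them. The `npm run build` command in the comment is only an example.

```python
import os
from typing import Dict, Mapping, Optional


def with_node_option(option: str, base: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Return a copy of the environment with `option` added to NODE_OPTIONS (space-separated)."""
    env = dict(os.environ if base is None else base)
    current = env.get("NODE_OPTIONS", "").split()
    if option not in current:
        current.append(option)
    env["NODE_OPTIONS"] = " ".join(current)
    return env


# Example (not run here):
# subprocess.run(["npm", "run", "build"],
#                env=with_node_option("--openssl-legacy-provider"), check=True)
```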

While working with detectron2 recently, I needed pycocotools >= 2.0.2 on Python 3.7, and the installation failed:

```
ERROR: Error expected str, bytes or os.PathLike object, not NoneType while executing command pip subprocess to install build dependencies
```

It turned out that pycocotools had been updated to 2.0.4. The older version installs normally:

```shell
pip install pycocotools==2.0.2
```

Project scenario:

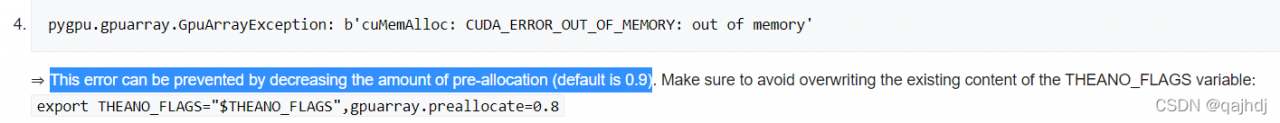

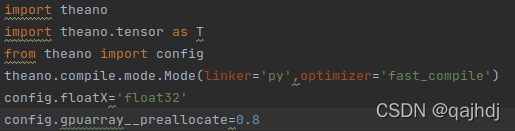

The l2t code uses the Theano library and needs the GPU to speed up computation.

Environment: non-root user, Ubuntu 18.04 + CUDA 8.0 (GCC must be downgraded to match; I used 5.3) + cuDNN v5.1 + Theano 1.0.4 + pygpu 0.7.6

Problem description

```
pygpu.gpuarray.GpuArrayException: b'cuMemAlloc: CUDA_ERROR_OUT_OF_MEMORY: out of memory
Training... 2448.24 sec.
Traceback (most recent call last):
  File ".../python3.8/site-packages/theano/compile/function_module.py", line 903, in __call__
    self.fn() if output_subset is None else\
  File "pygpu/gpuarray.pyx", line 689, in pygpu.gpuarray.pygpu_zeros
  File "pygpu/gpuarray.pyx", line 700, in pygpu.gpuarray.pygpu_empty
  File "pygpu/gpuarray.pyx", line 301, in pygpu.gpuarray.array_empty
pygpu.gpuarray.GpuArrayException: b'cuMemAlloc: CUDA_ERROR_OUT_OF_MEMORY: out of memory'

During handling of the above exception, another exception occurred:

Traceback (most recent call last):
  File "learn.py", line 535, in <module>
    main()
  File "learn.py", line 531, in main
    trainer.train()
  File "..l2t/lib/python3.8/site-packages/smartlearner-0.1.0-py3.8.egg/smartlearner/trainer.py", line 31, in train
  File ../l2t/lib/python3.8/site-packages/smartlearner-0.1.0-py3.8.egg/smartlearner/trainer.py", line 90, in _learning
  File "..l2t/lib/python3.8/site-packages/theano/compile/function_module.py", line 914, in __call__
    gof.link.raise_with_op(
  File "../l2t/lib/python3.8/site-packages/theano/gof/link.py", line 325, in raise_with_op
    reraise(exc_type, exc_value, exc_trace)
  File "../l2t/lib/python3.8/site-packages/six.py", line 718, in reraise
    raise value.with_traceback(tb)
  File "../l2t/lib/python3.8/site-packages/theano/compile/function_module.py", line 903, in __call__
    self.fn() if output_subset is None else\
  File "pygpu/gpuarray.pyx", line 689, in pygpu.gpuarray.pygpu_zeros
  File "pygpu/gpuarray.pyx", line 700, in pygpu.gpuarray.pygpu_empty
  File "pygpu/gpuarray.pyx", line 301, in pygpu.gpuarray.array_empty
pygpu.gpuarray.GpuArrayException: b'cuMemAlloc: CUDA_ERROR_OUT_OF_MEMORY: out of memory'
Apply node that caused the error: GpuAlloc<None>{memset_0=True}(GpuArrayConstant{[[0.]]}, Elemwise{Composite{((i0 * i1 * i2 * i3) // maximum(i3, i4))}}[(0, 0)].0, Shape_i{3}.0)
Toposort index: 98
Inputs types: [GpuArrayType<None>(float64, (True, True)), TensorType(int64, scalar), TensorType(int64, scalar)]
Inputs shapes: [(1, 1), (), ()]
Inputs strides: [(8, 8), (), ()]
Inputs values: [gpuarray.array([[0.]]), array(2383800), array(100)]
Outputs clients: [[forall_inplace,gpu,grad_of_scan_fn}(Shape_i{1}.0, InplaceGpuDimShuffle{0,2,1}.0, InplaceGpuDimShuffle{0,2,1}.0, InplaceGpuDimShuffle{0,2,1}.0, GpuAlloc<None>{memset_0=True}.0, GpuSubtensor{int64:int64:int64}.0, GpuSubtensor{int64:int64:int64}.0, GpuSubtensor{int64:int64:int64}.0, GpuSubtensor{int64:int64:int64}.0, GpuSubtensor{int64:int64:int64}.0, GpuAlloc<None>{memset_0=True}.0, GpuAlloc<None>{memset_0=True}.0, GpuAlloc<None>{memset_0=True}.0, GpuAlloc<None>{memset_0=True}.0, GpuAlloc<None>{memset_0=True}.0, Shape_i{1}.0, Shape_i{1}.0, Shape_i{1}.0, Shape_i{1}.0, Shape_i{1}.0, Shape_i{1}.0, Shape_i{1}.0, Shape_i{1}.0, Shape_i{1}.0, Shape_i{1}.0, GRU0_W, GRU0_U, GRU0_Uh, GRU1_W, GRU1_U, GRU1_Uh, InplaceGpuDimShuffle{x,0}.0, GpuFromHost<None>.0, GpuElemwise{add,no_inplace}.0, GpuElemwise{add,no_inplace}.0, GpuElemwise{Add}[(0, 1)]<gpuarray>.0, GpuReshape{2}.0, GpuFromHost<None>.0, GpuElemwise{add,no_inplace}.0, GpuElemwise{add,no_inplace}.0, GpuElemwise{Add}[(0, 1)]<gpuarray>.0, GpuReshape{2}.0, GpuFromHost<None>.0, GpuElemwise{add,no_inplace}.0, GpuElemwise{add,no_inplace}.0, GpuElemwise{Add}[(0, 1)]<gpuarray>.0, GpuReshape{2}.0, InplaceGpuDimShuffle{1,0}.0, InplaceGpuDimShuffle{1,0}.0, InplaceGpuDimShuffle{x,0}.0, InplaceGpuDimShuffle{1,0}.0, InplaceGpuDimShuffle{1,0}.0, InplaceGpuDimShuffle{1,0}.0, InplaceGpuDimShuffle{1,0}.0, GpuFromHost<None>.0, InplaceGpuDimShuffle{1,0}.0, GpuAlloc<None>{memset_0=True}.0, GpuFromHost<None>.0, GpuAlloc<None>{memset_0=True}.0, GpuFromHost<None>.0, GpuAlloc<None>{memset_0=True}.0)]]

HINT: Re-running with most Theano optimization disabled could give you a back-trace of when this node was created. This can be done with by setting the Theano flag 'optimizer=fast_compile'. If that does not work, Theano optimizations can be disabled with 'optimizer=None'.
HINT: Use the Theano flag 'exception_verbosity=high' for a debugprint and storage map footprint of this apply node.
```

Solution:

Reduce `gpuarray.preallocate` (I lowered it to 0.8). Following the hint in the error message, also use a lighter graph optimization level (`optimizer=fast_compile`). Set these flags in the code before Theano is imported.

After that, training ran successfully.
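The sketch below sets these options from Python. Theano reads the `THEANO_FLAGS` environment variable when it is imported, so the variable must be set before `import theano`. The flag names follow Theano 1.0's configuration options (`device`, `gpuarray.preallocate`, `optimizer`). `device=cuda` is an assumption about this setup, and `build_theano_flags` is a hypothetical helper.

```python
import os


def build_theano_flags(preallocate: float = 0.8,
                       optimizer: str = "fast_compile",
                       device: str = "cuda") -> str:
    """Build a THEANO_FLAGS string of comma-separated key=value pairs."""
    flags = {
        "device": device,                          # assumed GPU device name
        "gpuarray.preallocate": str(preallocate),  # between 0 and 1: fraction of GPU memory to preallocate
        "optimizer": optimizer,                    # lighter graph optimization, as the HINT suggests
    }
    return ",".join(f"{key}={value}" for key, value in flags.items())


# Must run before `import theano`, because the flags are read at import time.
os.environ["THEANO_FLAGS"] = build_theano_flags()
```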

Problem description:

Migrating the database with `flask db` reports the error `KeyError: 'migrate'`.

Solution:

The `Migrate` object was never initialized, or was initialized with the wrong arguments. Initialize it with the app and the database:

```python
from flask_migrate import Migrate

# app is the Flask application, db the Flask-SQLAlchemy instance
migrate = Migrate(app, db)
```

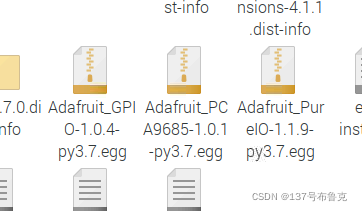

After I installed the PCA9685 library following an online guide, running it produced the following errors:

```python
>>> pwm = Adafruit_PCA9685.PCA9685()
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
NameError: name 'Adafruit_PCA9685' is not defined
>>> import Adafruit_PCA9685
>>> pwm = Adafruit_PCA9685.PCA9685()
Traceback (most recent call last):
  File "<stdin>", line 1, in <module>
  File "/usr/local/lib/python3.7/dist-packages/Adafruit_PCA9685-1.0.1-py3.7.egg/Adafruit_PCA9685/PCA9685.py", line 75, in __init__
    self.set_all_pwm(0, 0)
  File "/usr/local/lib/python3.7/dist-packages/Adafruit_PCA9685-1.0.1-py3.7.egg/Adafruit_PCA9685/PCA9685.py", line 111, in set_all_pwm
    self._device.write8(ALL_LED_ON_L, on & 0xFF)
  File "/usr/local/lib/python3.7/dist-packages/Adafruit_GPIO-1.0.4-py3.7.egg/Adafruit_GPIO/I2C.py", line 116, in write8
    self._bus.write_byte_data(self._address, register, value)
  File "/usr/local/lib/python3.7/dist-packages/Adafruit_PureIO-1.1.9-py3.7.egg/Adafruit_PureIO/smbus.py", line 327, in write_byte_data
OSError: [Errno 121] Remote I/O error
```

The workaround below assumes the Adafruit_PCA9685 package is present under `/usr/local/lib/python3.7/dist-packages/`. If it is, proceed as follows.

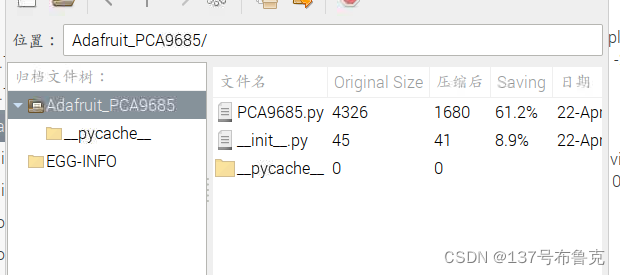

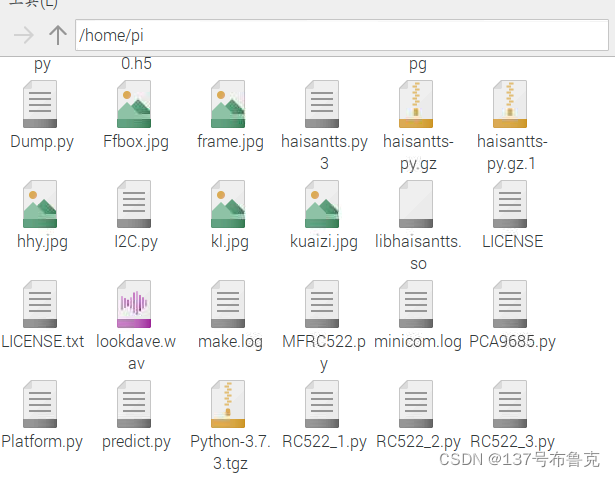

My Raspberry Pi is a 4B. The path above contains three .egg packages, one of which is Adafruit_PCA9685, as shown.

Open the middle one, the Adafruit_PCA9685 .egg package, which contains a PCA9685.py file. Create a new PCA9685.py under /home/pi on the Raspberry Pi and paste the contents of the original PCA9685.py into it. In your code, use `import PCA9685` instead of `import Adafruit_PCA9685`.

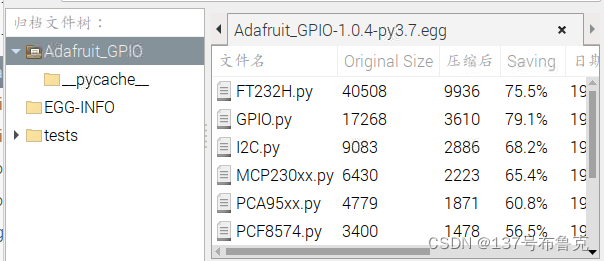

`import PCA9685`

PCA9685.py turns out to import I2C.py (I forgot to take a screenshot). Open the first .egg package (Adafruit_GPIO) under `/usr/local/lib/python3.7/dist-packages/`.

Copy the contents of its I2C.py and Platform.py into new I2C.py and Platform.py files under /home/pi. Then open PCA9685.py in that directory and find the I2C import near the middle of the file, which appears to be `from Adafruit_GPIO import I2C as I2C`. Change it to simply `import I2C`.

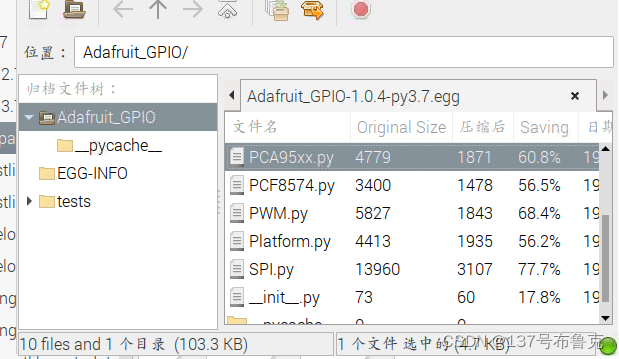

Then open I2C.py. It imports Platform.py, so make the same change there: replace that import with `import Platform`, as shown. In the end, the directory contains I2C.py, PCA9685.py and Platform.py.

You can now test it directly on the Raspberry Pi:

```python
import PCA9685

pwm = PCA9685.PCA9685()
```

Check whether it works. It worked for me.
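Finding the module files to copy inside the .egg packages can also be scripted. The sketch below assumes the `<package>-<version>.egg/<package>/<module>.py` layout seen in the traceback above. `find_in_eggs` is a hypothetical helper. Copying the files and editing their imports is still done by hand, as described.

```python
from pathlib import Path
from typing import List


def find_in_eggs(site_dir: str, filename: str) -> List[Path]:
    """Return every copy of `filename` one package level inside the .egg directories under site_dir."""
    return sorted(Path(site_dir).glob(f"*.egg/*/{filename}"))


# Example: find_in_eggs("/usr/local/lib/python3.7/dist-packages", "PCA9685.py")
```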

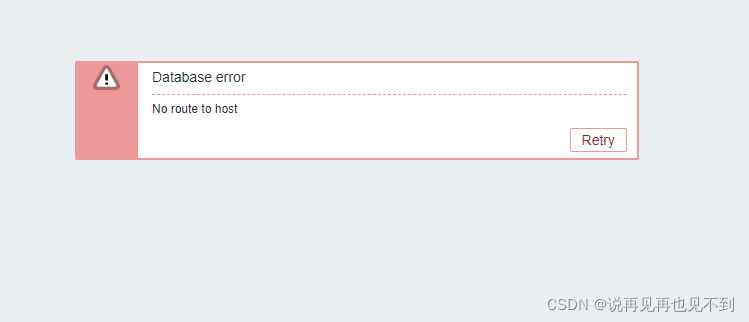

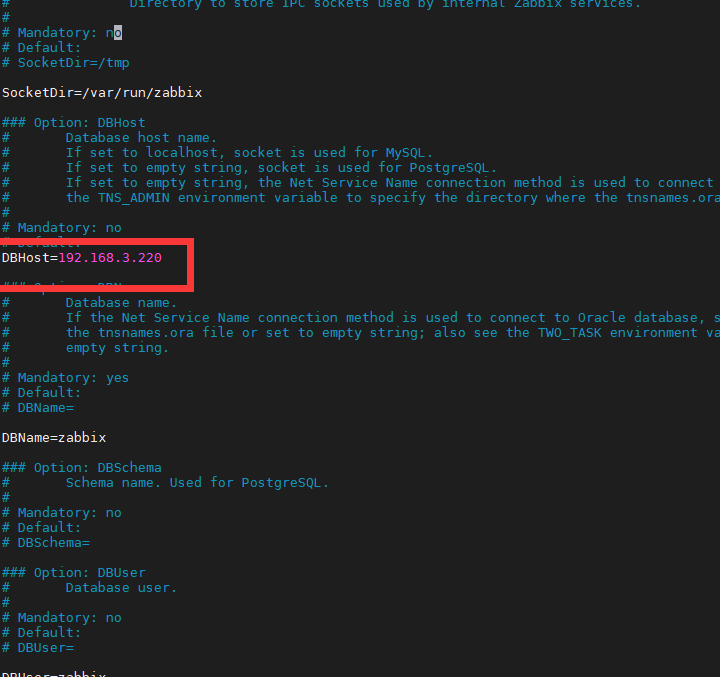

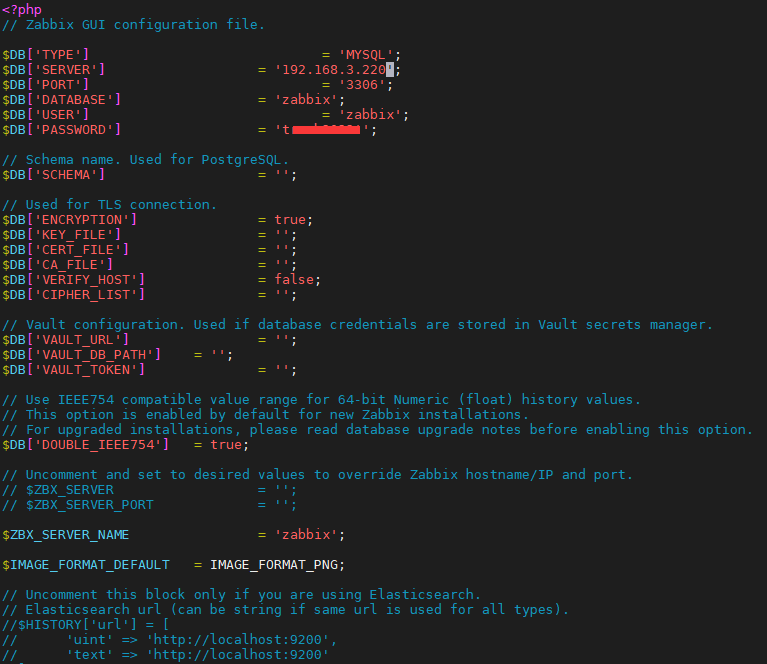

Usually the cause is that the address of the Zabbix database has changed, so the Zabbix configuration files must be updated.

1. Update `DBHost` in the zabbix_server.conf file:

vim /etc/zabbix/zabbix_server.conf

2. Update the database server (`$DB['SERVER']`) in the zabbix.conf.php file.

3. Restart the Zabbix server:

systemctl restart zabbix-server.service
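Step 1 can be scripted as well. The sketch below rewrites the `DBHost=` line in the text of zabbix_server.conf. It prefers an active line, otherwise uncomments the commented default, otherwise appends a new line. `set_db_host` is a hypothetical helper, and it does not touch zabbix.conf.php.

```python
import re

_ACTIVE = re.compile(r"^DBHost=.*$", re.MULTILINE)
_COMMENTED = re.compile(r"^#\s*DBHost=.*$", re.MULTILINE)


def set_db_host(conf_text: str, new_host: str) -> str:
    """Return conf_text with DBHost set to new_host.

    An active DBHost= line is replaced first; otherwise a commented one
    (for example "# DBHost=localhost") is uncommented; otherwise a line is appended.
    """
    line = f"DBHost={new_host}"
    for pattern in (_ACTIVE, _COMMENTED):
        if pattern.search(conf_text):
            # A function replacement keeps backslashes in new_host from being read as escapes.
            return pattern.sub(lambda _match: line, conf_text, count=1)
    return conf_text.rstrip("\n") + "\n" + line + "\n"
```

To use it, read `/etc/zabbix/zabbix_server.conf`, keep a backup copy, write back the result of `set_db_host(text, "<new database address>")`, and then restart the service as in step 3.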

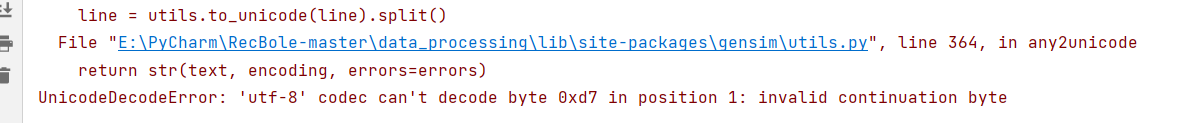

1. Decoding issue

1. Error message: `UnicodeDecodeError: 'utf-8' codec can't decode byte 0xd7 in position 1`

2. Code that raised the error:

```python
# -*- coding: utf8 -*-
import logging

from gensim.models import word2vec

# cut_words, remove_punctuation and remove_stop_words are helper functions defined elsewhere in the project


def write_review(review, stop_words_address, stop_address, no_stop_address):
    """Write out the processed data"""
    no_stop_review = remove_stop_words(review, stop_words_address)
    with open(no_stop_address, 'a') as f:  # no encoding given, so the platform default is used
        for row in no_stop_review:
            f.write(row[-1])
            f.write("\n")


if __name__ == '__main__':
    segment_text = cut_words('audito_whole.csv')
    review_text = remove_punctuation(segment_text)
    write_review(review_text, 'stop_words.txt', 'no_stop.txt', 'stop.txt')

    # Model training main program
    logging.basicConfig(format='%(asctime)s : %(levelname)s : %(message)s', level=logging.INFO)
    sentences_1 = word2vec.LineSentence('no_stop.txt')
    model_1 = word2vec.Word2Vec(sentences_1)
    # model.wv.save_word2vec_format('test_01.model.txt', 'test_01.vocab.txt', binary=False)  # save the model so it can be loaded directly later
    # model = word2vec.Word2Vec.load("test_01.model")  # load the model

    # List the words most related to a word
    a_1 = model_1.wv.most_similar(u"Kongjian", topn=20)
    print(a_1)

    # Similarity between two words
    b_1 = model_1.wv.similarity(u"Kongjian", u"Houzuo")
    print(b_1)
```

3. Analysis: the byte 0xd7 cannot be decoded as UTF-8. The file was written without specifying an encoding, so Python used the platform's default encoding. That default may be GBK (for example on a Chinese-locale Windows system), while word2vec reads the file as UTF-8.

4. Solution: specify `encoding='utf-8'` when writing out the data:

```python
def write_review(review, stop_words_address, stop_address, no_stop_address):
    """Write out the processed data as UTF-8"""
    # Text with stop words kept
    with open(stop_address, 'a', encoding='utf-8') as f:
        for row in review:
            f.write(row[-1])
            f.write("\n")
    # Text with stop words removed
    no_stop_review = remove_stop_words(review, stop_words_address)
    with open(no_stop_address, 'a', encoding='utf-8') as f:
        for row in no_stop_review:
            f.write(row[-1])
            f.write("\n")
```

5. Expansion – why did this mistake happen

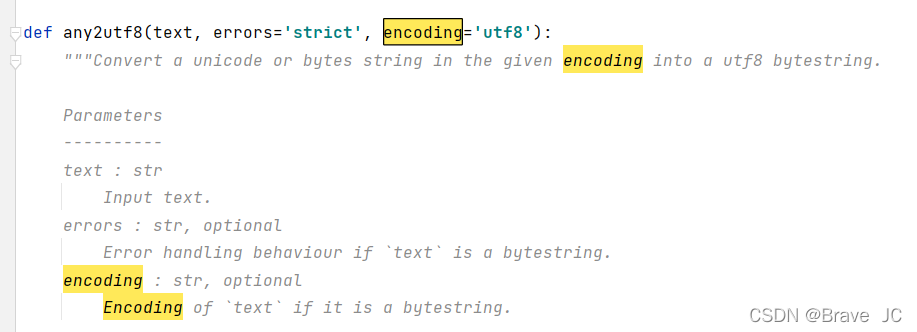

first of all, we click the source code file of the error report, as shown in the following figure:

open utils, and we find the following code

it can be seen that the encoding format set by word2vec is UTF-8. If it is not UTF-8 during decoding, errors = ‘strict’ will be triggered, and then UnicodeDecodeError will be reported
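The mismatch can be reproduced in a few lines. The sketch below forces GBK explicitly so it behaves the same on every platform. It stands in for a system whose default encoding is GBK. The script writes Chinese text as GBK and shows that a strict UTF-8 read fails, similar to word2vec's decoding. It then shows that writing with `encoding='utf-8'` avoids the error. `write_then_read` is a hypothetical helper.

```python
import os
import tempfile

TEXT = "中文\n"  # sample Chinese text


def write_then_read(write_encoding: str) -> str:
    """Write TEXT using write_encoding, then read it back as strict UTF-8."""
    fd, path = tempfile.mkstemp(suffix=".txt")
    os.close(fd)
    try:
        with open(path, "w", encoding=write_encoding) as f:
            f.write(TEXT)
        with open(path, encoding="utf-8", errors="strict") as f:
            return f.read()
    finally:
        os.remove(path)


try:
    write_then_read("gbk")  # what an unspecified encoding amounts to on a GBK system
except UnicodeDecodeError as exc:
    print("GBK bytes are not valid UTF-8:", exc.reason)

print(write_then_read("utf-8") == TEXT)  # True
```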

2. Attribute error

1. Error: `AttributeError: 'Word2Vec' object has no attribute 'most_similar'`

2. Code that caused the error:

```python
import logging

from gensim.models import word2vec

# Model training main program
logging.basicConfig(format='%(asctime)s : %(levelname)s : %(message)s', level=logging.INFO)
sentences_1 = word2vec.LineSentence('no_stop.txt')
model_1 = word2vec.Word2Vec(sentences_1)
# model.wv.save_word2vec_format('test_01.model.txt', 'test_01.vocab.txt', binary=False)  # save the model so it can be loaded directly later
# model = word2vec.Word2Vec.load("test_01.model")  # load the model

# List the words most related to a word
a_1 = model_1.most_similar(u"Kongjian", topn=20)  # raises AttributeError in newer gensim
print(a_1)
```

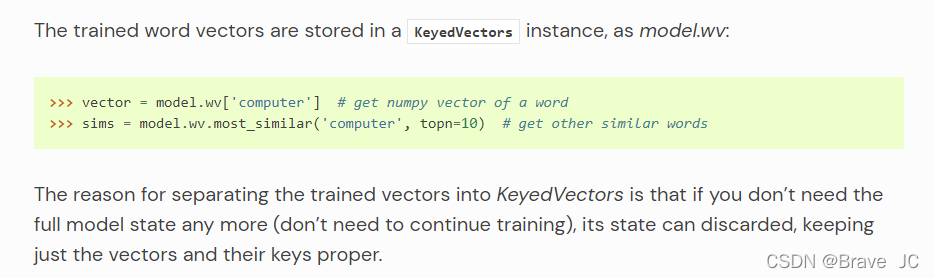

**3. Analysis:** this is an attribute error. The source code structure of word2vec changed in newer versions of gensim, and the official documentation explains the change as shown.

**4. Solution:** call the method on `model_1.wv` instead:

```python
import logging

from gensim.models import word2vec

# Model training main program
logging.basicConfig(format='%(asctime)s : %(levelname)s : %(message)s', level=logging.INFO)
sentences_1 = word2vec.LineSentence('no_stop.txt')
model_1 = word2vec.Word2Vec(sentences_1)
# model.wv.save_word2vec_format('test_01.model.txt', 'test_01.vocab.txt', binary=False)  # save the model so it can be loaded directly later
# model = word2vec.Word2Vec.load("test_01.model")  # load the model

# List the words most related to a word
a_1 = model_1.wv.most_similar(u"Kongjian", topn=20)
print(a_1)
```

5. Why is it `model_1.wv.most_similar()`? I could not find where `wv` is defined in word2vec. Does anyone know?
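On the question above: in gensim 4.x, `Word2Vec` stores its trained vectors in a `KeyedVectors` object assigned to its `wv` attribute. The similarity methods (`most_similar`, `similarity`) live on that object. Some code accepts either a full `Word2Vec` model or a bare `KeyedVectors` object, for example one loaded with `KeyedVectors.load_word2vec_format`. Such code can normalize to the vectors first, as in the sketch below. `keyed_vectors` is a hypothetical helper, and the stub classes stand in for gensim objects so the example runs without gensim installed.

```python
from typing import Any, List, Tuple


def keyed_vectors(model_or_vectors: Any) -> Any:
    """Return model.wv for a full model, or the object itself if it has no .wv."""
    return getattr(model_or_vectors, "wv", model_or_vectors)


class StubVectors:
    """Stands in for gensim's KeyedVectors."""

    def most_similar(self, word: str, topn: int = 10) -> List[Tuple[str, float]]:
        return [(f"{word}_neighbor", 0.9)][:topn]


class StubModel:
    """Stands in for gensim's Word2Vec, which keeps its vectors in .wv."""

    def __init__(self) -> None:
        self.wv = StubVectors()


for obj in (StubModel(), StubVectors()):
    print(keyed_vectors(obj).most_similar("Kongjian", topn=1))
```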

Problem description

Running a dnf command on CentOS 8 produces an error:

```
[root@VM-16-14-centos ~]# dnf update epel-release
Repository epel is listed more than once in the configuration
Last metadata expiration check: 2:24:16 ago on Mon 25 Apr 2022 07:27:51 AM CST.
Module yaml error: Unexpected key in data: static_context [line 9 col 3]
Module yaml error: Unexpected key in data: static_context [line 9 col 3]
Dependencies resolved.
Nothing to do.
Complete!
```

Solution:

Upgrade libmodulemd with `dnf upgrade libmodulemd`. The problem is fixed in libmodulemd-2.13.0-1.fc33.