Running `docker pull xx.xx.xx.xx/xx/xx` to pull an image from a private registry fails with the following error:

```
Error response from daemon: Get https://xx.xx.xx.xx/v2/: Service Unavailable
```

The cause is that Docker talks to registries over HTTPS by default, while this private registry only serves plain HTTP.
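To confirm this diagnosis before changing any configuration, you can check which scheme the registry actually answers on. The sketch below uses only the Python standard library; `probe_registry` is a hypothetical helper, not part of Docker. It requests the registry API root `/v2/` over HTTPS and over HTTP, assumes direct connectivity (environment proxy settings are bypassed), and treats any HTTP status, including 401 from a registry that requires login, as "the server answers on this scheme".

```python
import http.client
import urllib.error
import urllib.request
from typing import Dict, Optional


def _status(url: str, timeout: float) -> Optional[int]:
    """Return the HTTP status the server sends for `url`, or None if there is no HTTP answer."""
    # Bypass proxy settings from the environment so we talk to the registry directly.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    try:
        with opener.open(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        # The server did answer over this scheme, just not with 2xx (e.g. 401 when login is required).
        return err.code
    except (OSError, http.client.HTTPException):
        # TLS handshake failure, refused connection, timeout, malformed response, ...
        return None


def probe_registry(host: str, timeout: float = 3.0) -> Dict[str, Optional[int]]:
    """Hypothetical helper: report which scheme the registry at `host` answers /v2/ on."""
    return {scheme: _status(f"{scheme}://{host}/v2/", timeout) for scheme in ("https", "http")}
```

For example, if `probe_registry("xx.xx.xx.xx")` returns a status for `"http"` and `None` for `"https"`, that is consistent with an HTTP-only registry. `None` for HTTPS can also mean the registry presents a certificate the client does not trust, which is a different problem.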

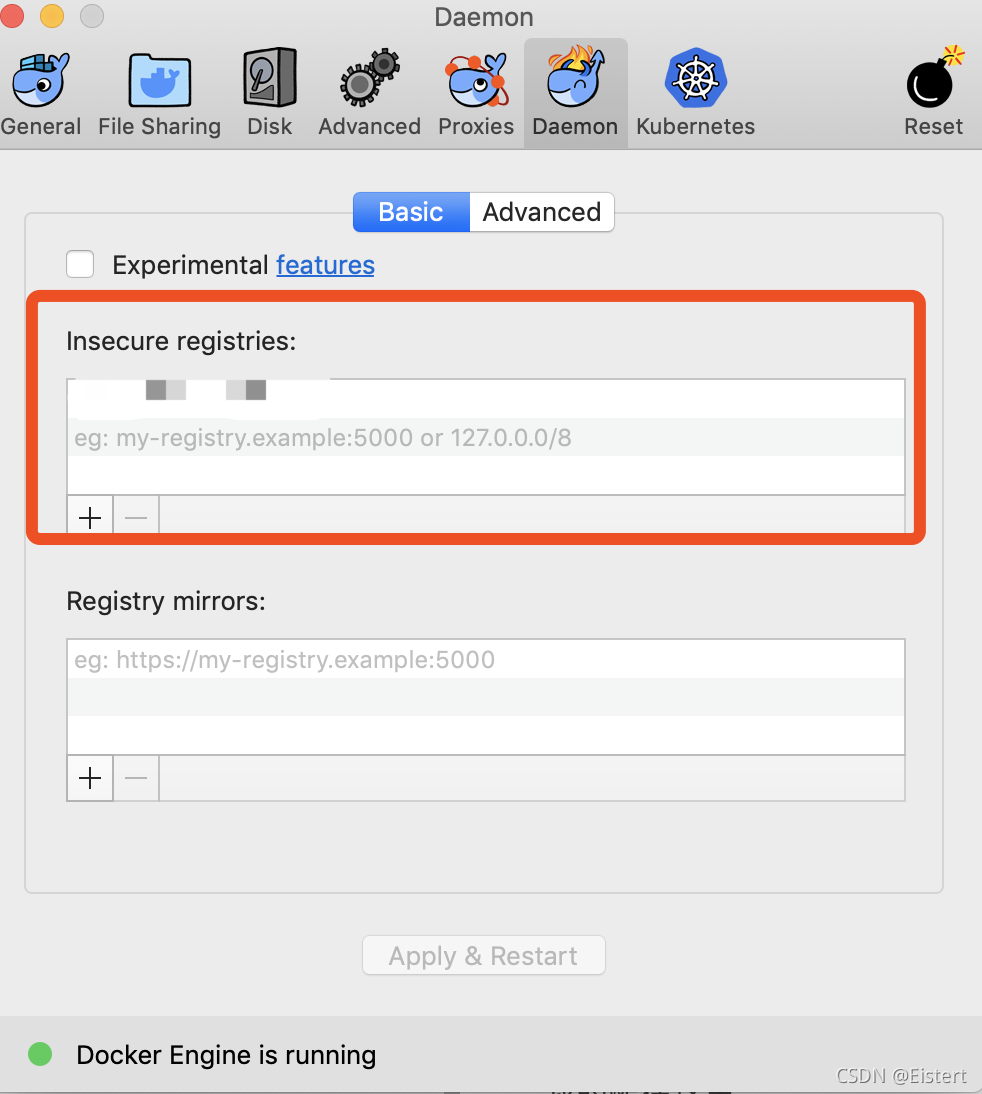

On Mac, open Docker Desktop, go to Preferences > Docker Engine, add the following to the JSON configuration shown there (xx.xx.xx.xx is the address of your private registry), and click Apply & Restart.

```json
{
  "insecure-registries": [
    "xx.xx.xx.xx"
  ]
}
```

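On CentOS, edit /etc/docker/daemon.json (create the file if it does not exist) and add the following. Restart the Docker daemon afterwards, for example with `sudo systemctl restart docker`, so the change takes effect.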

```json
{
  "insecure-registries": [
    "xx.xx.xx.xx"
  ]
}
```
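On a server, daemon.json may already hold other options, and a JSON syntax error in it stops dockerd from starting, so merging the key in can be safer than overwriting the file by hand. A minimal sketch under that assumption, using only the Python standard library; `add_insecure_registry` is a hypothetical helper, not a Docker tool, and it needs permission to write /etc/docker/daemon.json (typically root):

```python
import json
import os
import tempfile


def add_insecure_registry(path: str, registry: str) -> dict:
    """Hypothetical helper: add `registry` to "insecure-registries" in daemon.json, keeping other keys."""
    config = {}
    if os.path.exists(path):
        with open(path, encoding="utf-8") as f:
            text = f.read().strip()
        if text:  # an empty file is treated as an empty config
            config = json.loads(text)  # raises if the existing file is not valid JSON

    registries = config.setdefault("insecure-registries", [])
    if registry not in registries:  # do not add duplicates on repeated runs
        registries.append(registry)

    # Write to a temporary file in the same directory, then swap it in,
    # so an interrupted write never leaves a half-written daemon.json behind.
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as f:
        json.dump(config, f, indent=2)
        f.write("\n")
    os.chmod(tmp_path, 0o644)  # mkstemp creates the file as 0600
    os.replace(tmp_path, path)
    return config
```

For example, call `add_insecure_registry("/etc/docker/daemon.json", "xx.xx.xx.xx")` as root, then restart the Docker daemon. If the image name includes a port (for example `xx.xx.xx.xx:5000/xx/xx`), the registry entry should include the same port.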
