Cause of the vulnerability

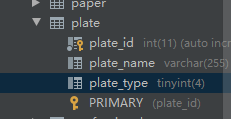

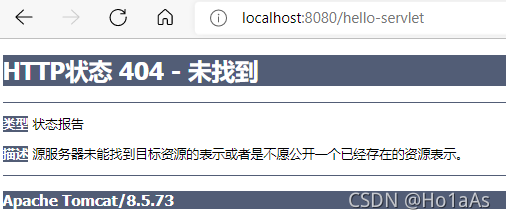

Normally, you request /article with a numeric ID and get the article content back. If a SpEL expression is passed in instead, the request is routed to the error page, where the expression is parsed and its result is reflected in the page.

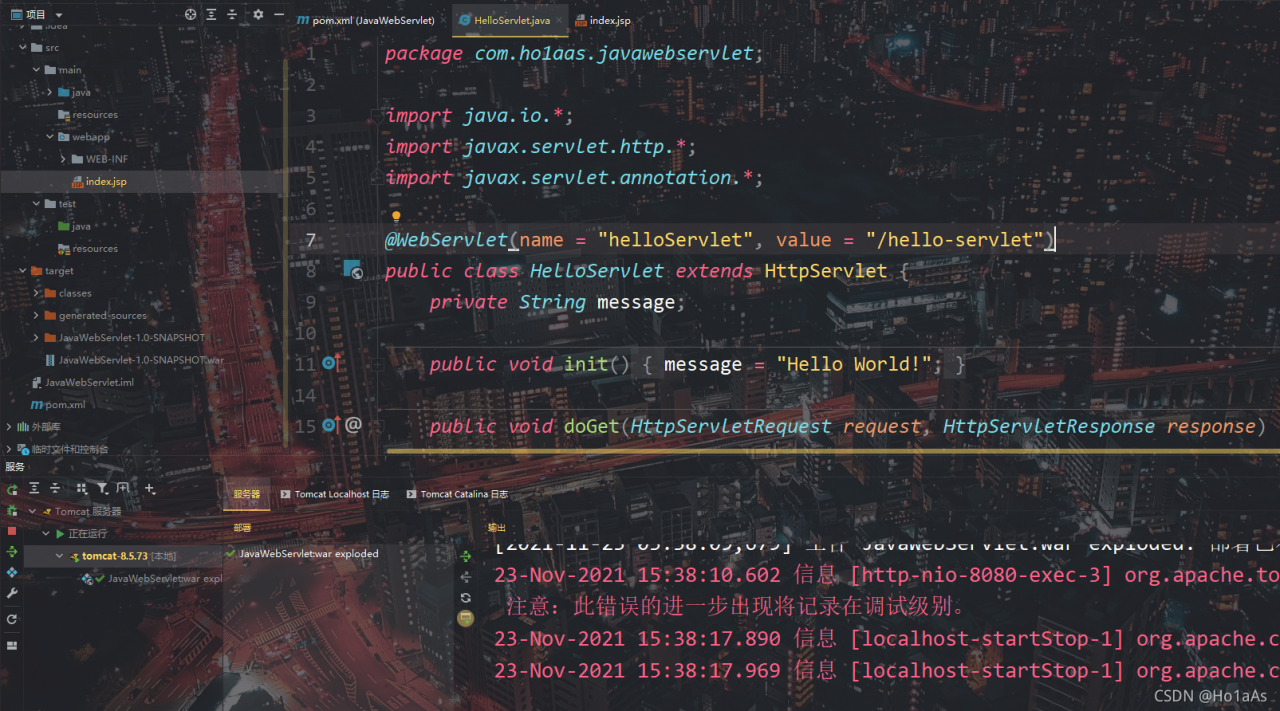

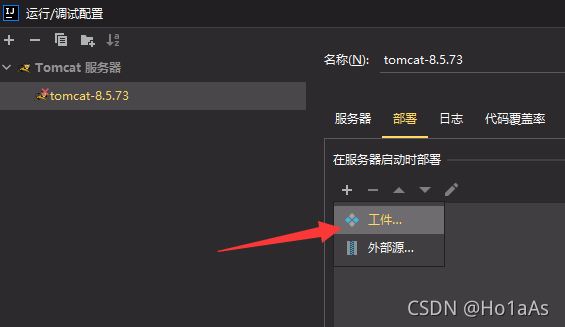

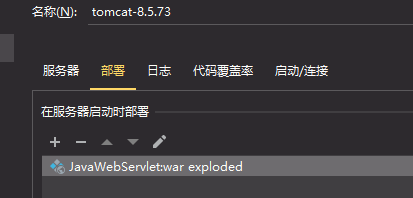

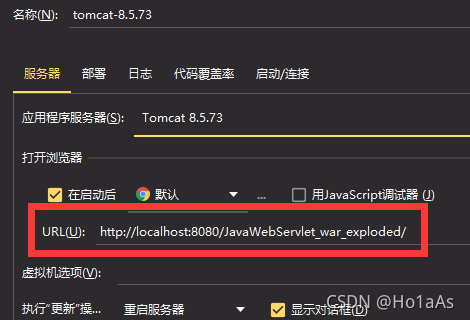

Debug process

Similarly, starting from the DispatcherServlet.doDispatch() method, we quickly locate the key class: PropertyPlaceholderHelper.

In DispatcherServlet, the error is reported because of the following check:

    protected void doDispatch(HttpServletRequest request, HttpServletResponse response) throws Exception {
        ...
        if (asyncManager.isConcurrentHandlingStarted()) {
            return;
        }
        ...
    }

The error is a NumberFormatException, raised because the submitted value cannot be converted to a number.
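The conversion failure can be reproduced outside Spring with a few lines of plain Java. This is only a sketch: it assumes the controller binds the ID to an integer type, and the class name IdConversionDemo and the sample inputs are hypothetical, not taken from the application.

```java
class IdConversionDemo {
    // Hypothetical stand-in for the type conversion applied to the id parameter.
    static String describe(String rawId) {
        try {
            int id = Integer.parseInt(rawId);
            return "article " + id;
        } catch (NumberFormatException e) {
            // The exception message echoes the raw input, which is how
            // user-supplied text ends up in the error model.
            return "error: " + e.getMessage();
        }
    }

    public static void main(String[] args) {
        System.out.println(describe("12"));      // numeric ID, converted normally
        System.out.println(describe("${1+1}"));  // non-numeric input, conversion fails
    }
}
```

The point of interest is the catch branch: the rejected input is carried along in the exception message rather than discarded.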

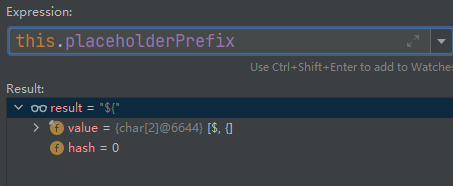

First, look at the PropertyPlaceholderHelper.parseStringValue() method:

    protected String parseStringValue(String strVal, PropertyPlaceholderHelper.PlaceholderResolver placeholderResolver, Set<String> visitedPlaceholders) {
        StringBuilder result = new StringBuilder(strVal);
        int startIndex = strVal.indexOf(this.placeholderPrefix);
        while (startIndex != -1) {
            int endIndex = this.findPlaceholderEndIndex(result, startIndex);
            if (endIndex != -1) {
                String placeholder = result.substring(startIndex + this.placeholderPrefix.length(), endIndex);
                String originalPlaceholder = placeholder;
                // Guard against circular placeholder references
                if (!visitedPlaceholders.add(placeholder)) {
                    throw new IllegalArgumentException("Circular placeholder reference '" + placeholder + "' in property definitions");
                }
                // Recursively resolve placeholders nested inside the placeholder name
                placeholder = this.parseStringValue(placeholder, placeholderResolver, visitedPlaceholders);
                // Here: the resolver is asked for the placeholder's value
                String propVal = placeholderResolver.resolvePlaceholder(placeholder);
                // Fall back to the default value after the separator, if one is configured
                if (propVal == null && this.valueSeparator != null) {
                    int separatorIndex = placeholder.indexOf(this.valueSeparator);
                    if (separatorIndex != -1) {
                        String actualPlaceholder = placeholder.substring(0, separatorIndex);
                        String defaultValue = placeholder.substring(separatorIndex + this.valueSeparator.length());
                        propVal = placeholderResolver.resolvePlaceholder(actualPlaceholder);
                        if (propVal == null) {
                            propVal = defaultValue;
                        }
                    }
                }
                ......
        }
        return result.toString();
    }

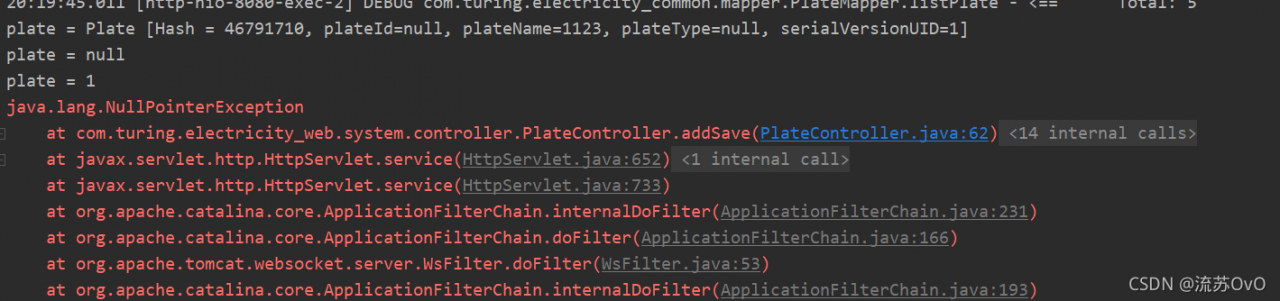

After that, control returns to the view, which contains ${timestamp}, ${error}, ${status}, and ${message}. The view loops over these values and checks whether each one starts with "${".

Anything that starts with "${" enters the SpEL expression evaluation stage. When we set the value of message to a payload that also starts with "${", the payload is evaluated too, because the user-controllable parameter message is never validated.
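The effect of re-parsing resolved values can be modelled in plain Java. The sketch below is a simplified model, not Spring's code: the class RecursivePlaceholderDemo, its methods, and the two-field template are hypothetical, nested braces are not handled, and the resolver only tags an expression as "[evaluated: ...]" instead of running SpEL.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;
import java.util.function.Function;

class RecursivePlaceholderDemo {
    // Replaces each ${...} in the template, then parses the resolved value again.
    static String render(String template, Function<String, String> resolver, Set<String> visited) {
        StringBuilder result = new StringBuilder(template);
        int start = result.indexOf("${");
        while (start != -1) {
            int end = result.indexOf("}", start);
            if (end == -1) {
                break; // unterminated placeholder: leave the rest untouched
            }
            String placeholder = result.substring(start + 2, end);
            if (!visited.add(placeholder)) {
                throw new IllegalArgumentException("Circular placeholder reference '" + placeholder + "'");
            }
            // The risky step: the resolved value is itself treated as a template.
            String value = render(resolver.apply(placeholder), resolver, visited);
            visited.remove(placeholder);
            result.replace(start, end + 1, value);
            start = result.indexOf("${", start + value.length());
        }
        return result.toString();
    }

    // Hypothetical error view with one fixed and one user-controlled field.
    static String renderErrorPage(String userMessage) {
        Map<String, String> model = new HashMap<>();
        model.put("status", "400");
        model.put("message", userMessage);
        // Known names come from the model; anything else stands in for
        // "evaluate this as an expression".
        Function<String, String> resolver = name ->
                model.containsKey(name) ? model.get(name) : "[evaluated: " + name + "]";
        return render("status=${status}, message=${message}", resolver, new HashSet<>());
    }

    public static void main(String[] args) {
        System.out.println(renderErrorPage("hello"));
        System.out.println(renderErrorPage("${7*7}"));
    }
}
```

With the input "hello" the message is inserted as text. With "${7*7}" the inserted value is parsed again and reaches the expression branch of the resolver, which is the behaviour the payload relies on.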

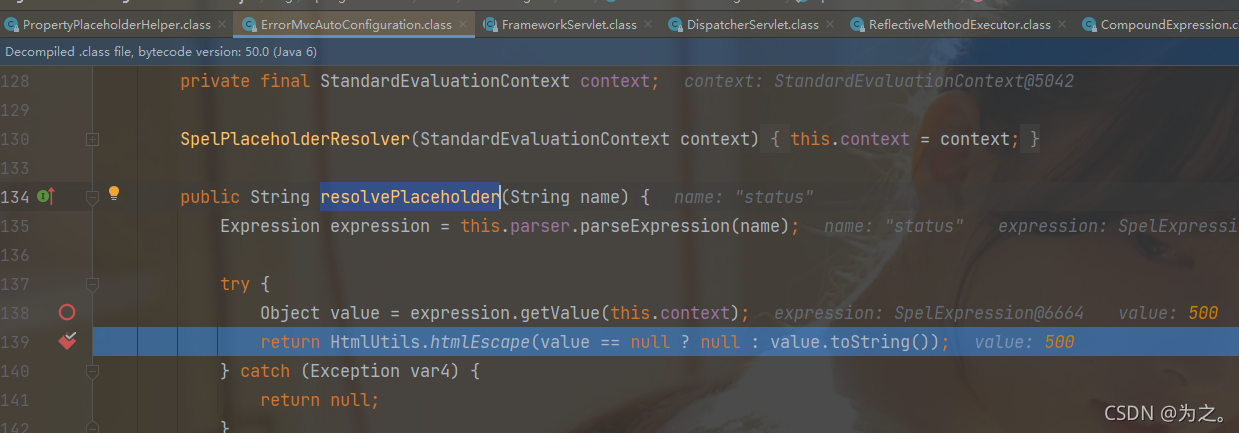

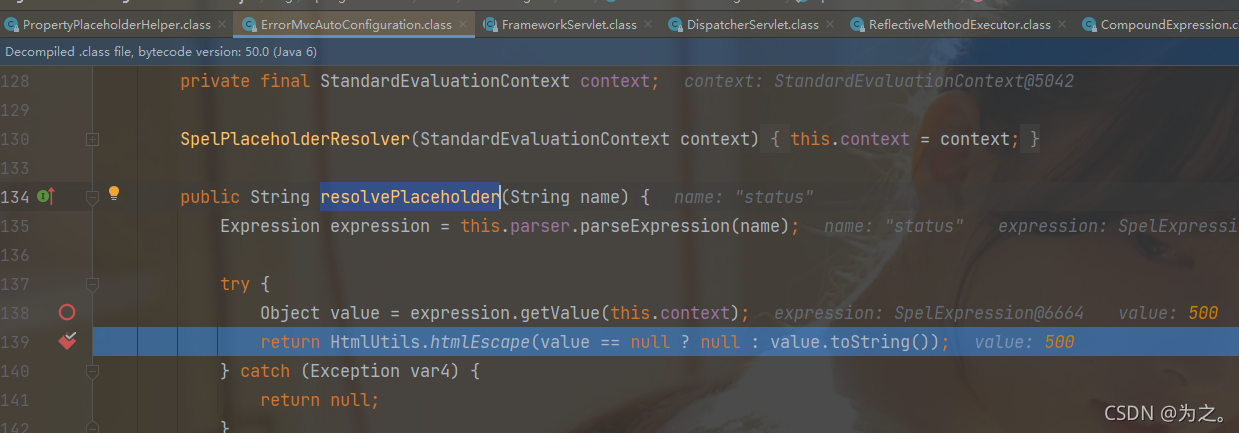

After that, the resolvePlaceholder() method in ErrorMvcAutoConfiguration is used to parse the SpEL expression and get its value.

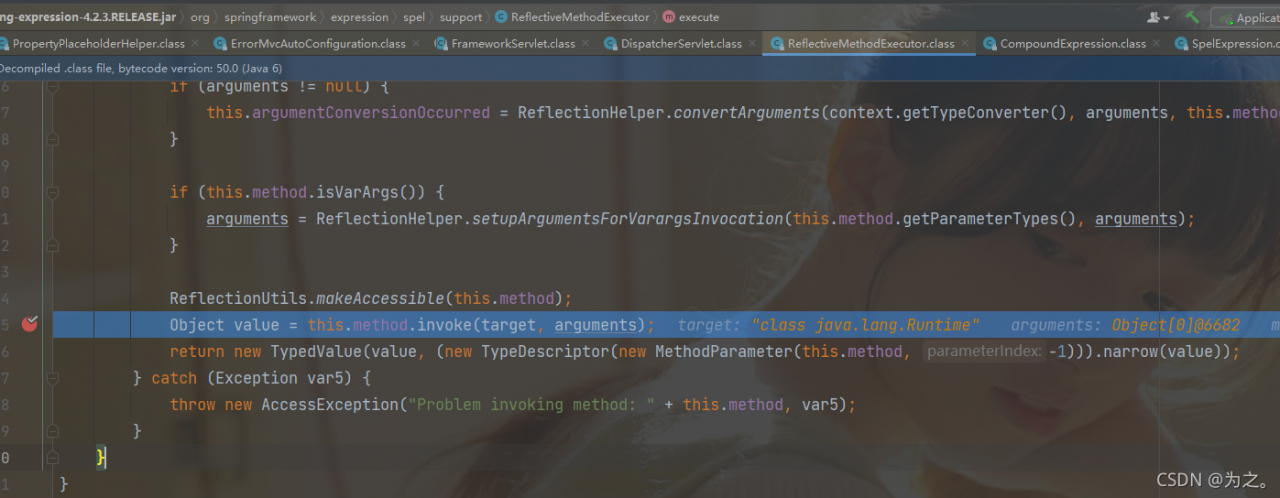

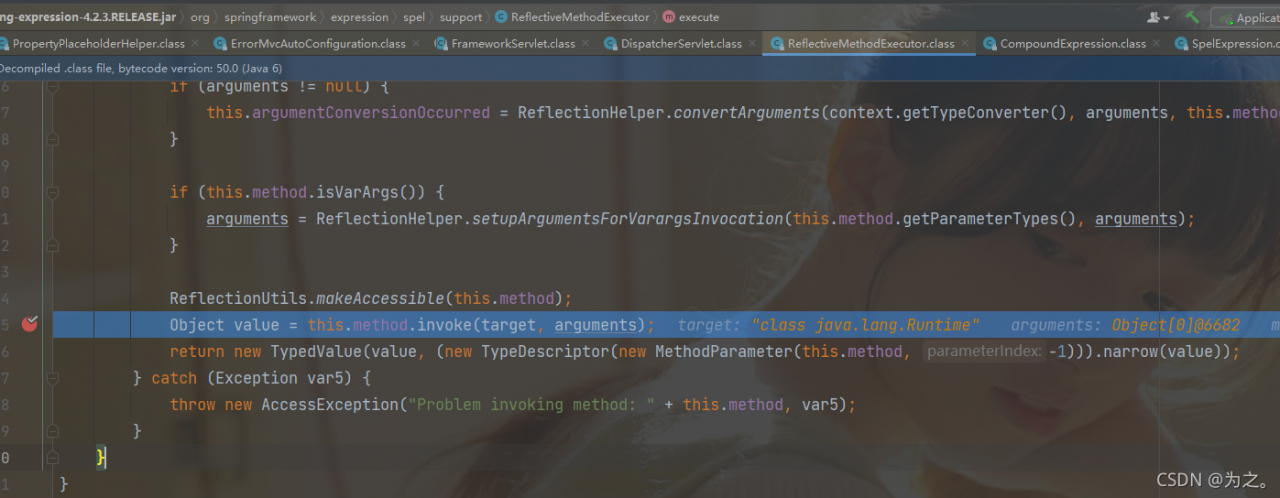

This step gets the value of status. When the payload is reached, it is resolved to the Runtime class and its exec method is executed. The Runtime instance is obtained through reflection.
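For readers unfamiliar with the reflection step, the lookup is roughly equivalent to the plain Java below. This is a sketch of the general technique only: the class RuntimeReflectionDemo is hypothetical, SpEL's own resolution logic inside Spring is not reproduced, and no command is executed.

```java
import java.lang.reflect.Method;

class RuntimeReflectionDemo {
    // Resolves the class by name, then invokes its static getRuntime() method.
    static Object lookupRuntime() {
        try {
            Class<?> runtimeClass = Class.forName("java.lang.Runtime");
            Method getRuntime = runtimeClass.getMethod("getRuntime");
            return getRuntime.invoke(null); // static method, so no target object
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("reflection lookup failed", e);
        }
    }

    public static void main(String[] args) {
        Object runtime = lookupRuntime();
        // The reflective call returns the same singleton as the direct call.
        System.out.println(runtime == Runtime.getRuntime());
    }
}
```

Nothing in the lookup requires the class to be imported or referenced at compile time, which is why an expression supplied as a string is enough to reach it.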

Summary

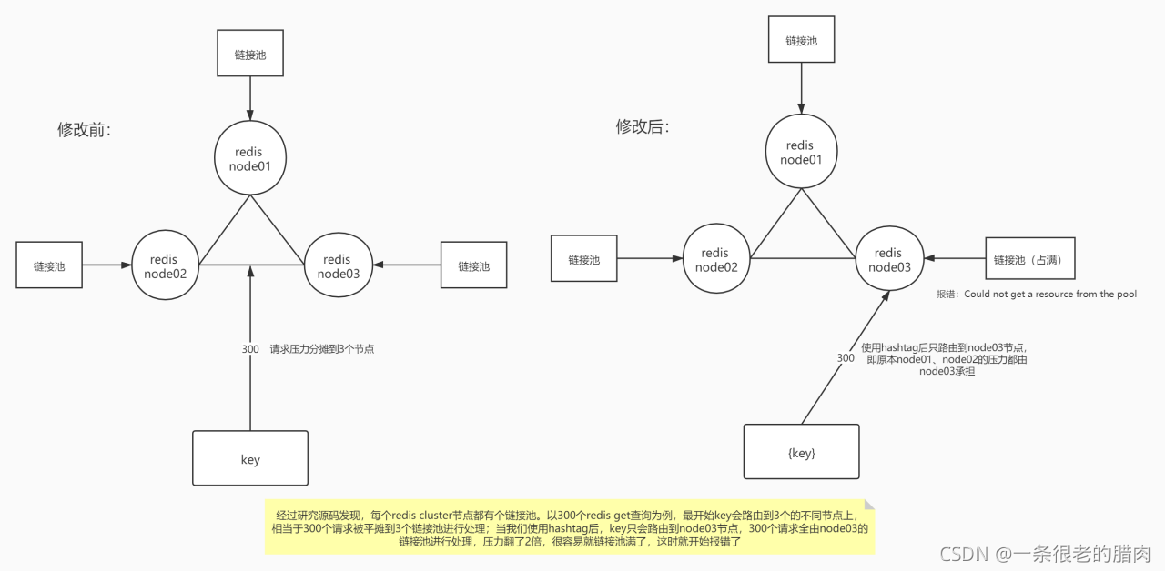

The value passed in is checked to see whether it is a number. If it is not, an error is raised and the request lands on the error page. The error page contains several SpEL expressions, which are found and parsed in a loop and finally rendered into the page. The problem is that if the value of ${message} is itself a SpEL expression, the parser keeps going and evaluates it as well, which results in command injection. In other words, the user-controllable parameter is never validated.

Repair suggestions

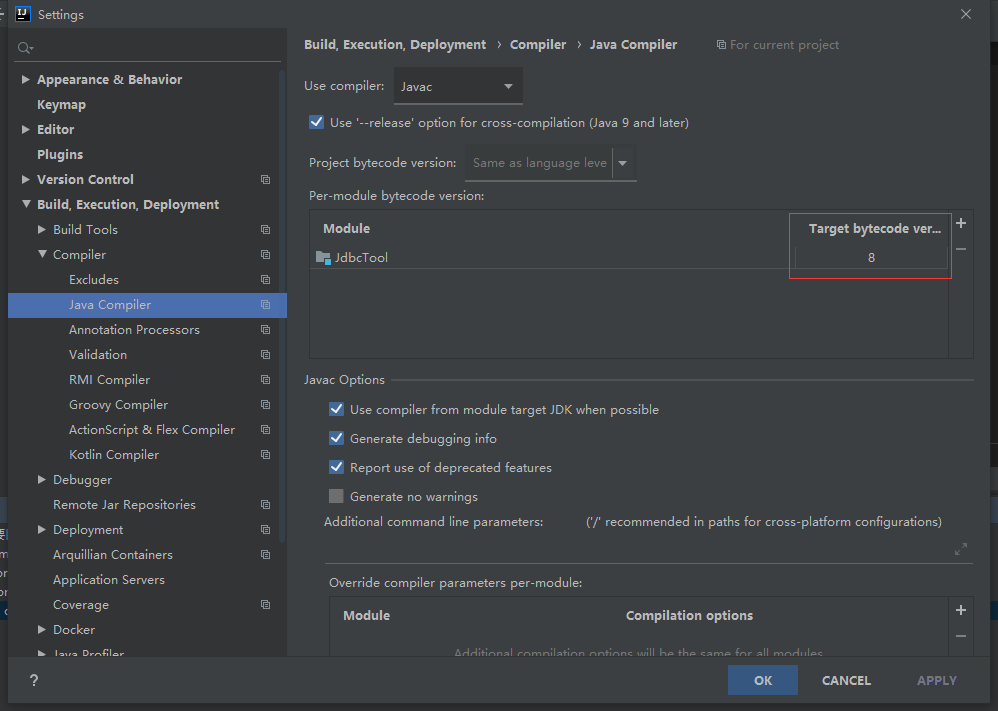

The root cause is that the ID is not filtered for SpEL expressions. I therefore suggest the following:

Filter the ID, apply blacklists and whitelists, filter values such as ${, upgrade the framework version or apply the patch, and customize the error page.
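The first suggestions can be sketched in plain Java. This is one possible shape under stated assumptions, not the framework's official fix: the class ErrorPageInputGuard, the nine-digit limit, and the choice of replacing "$" with the HTML entity &#36; are all hypothetical.

```java
import java.util.regex.Pattern;

class ErrorPageInputGuard {
    // Whitelist: one to nine ASCII digits, nothing else (the limit is an assumption).
    private static final Pattern NUMERIC_ID = Pattern.compile("\\d{1,9}");

    // Accepts only short, purely numeric IDs before any conversion is attempted.
    static boolean isValidId(String rawId) {
        return rawId != null && NUMERIC_ID.matcher(rawId).matches();
    }

    // Breaks up "${" so reflected text can no longer be read as a placeholder.
    // The entity still renders as "$" in an HTML page.
    static String neutralizePlaceholders(String value) {
        return value == null ? "" : value.replace("${", "&#36;{");
    }

    public static void main(String[] args) {
        System.out.println(isValidId("12"));
        System.out.println(isValidId("${7*7}"));
        System.out.println(neutralizePlaceholders("${7*7}"));
    }
}
```

Validation rejects the bad input early, while the escaping step is a second line of defence for any value that is still reflected into the error page. Neither replaces upgrading to a patched framework version.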
