Turn off ESLint verification

1. In index.js in the config directory, set the following option:

useEslint: false,

2. A universal method is to add the following comment as the first line of the JS file that reports the error:

/* eslint-disable */
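The comment above switches off every rule for the whole file. As a hedged sketch, ESLint also accepts narrower directive comments that silence one rule for a region or for a single line. The function `logTotal`, the variable name, and the choice of the `no-console` and `no-unused-vars` rules are illustrative assumptions, not taken from the original.

```javascript
/* eslint-disable no-console */
// Only the no-console rule is disabled from here on; other rules still apply.
function logTotal(items) {
  const total = items.reduce((sum, n) => sum + n, 0);
  console.log('total:', total);
  return total;
}
/* eslint-enable no-console */

// The next directive silences one rule for the following line only.
// eslint-disable-next-line no-unused-vars
const unusedForNow = logTotal([1, 2, 3]);
```

Narrow directives keep the rest of the file checked, which is usually preferable when only one rule is causing trouble.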

3. There is an .eslintignore file in the root directory. Add the files you do not want verified to it.

For example, if you do not want Vue files verified, add `*.vue`. This excludes every Vue file from verification. Similarly, `*.js` excludes every JS file.

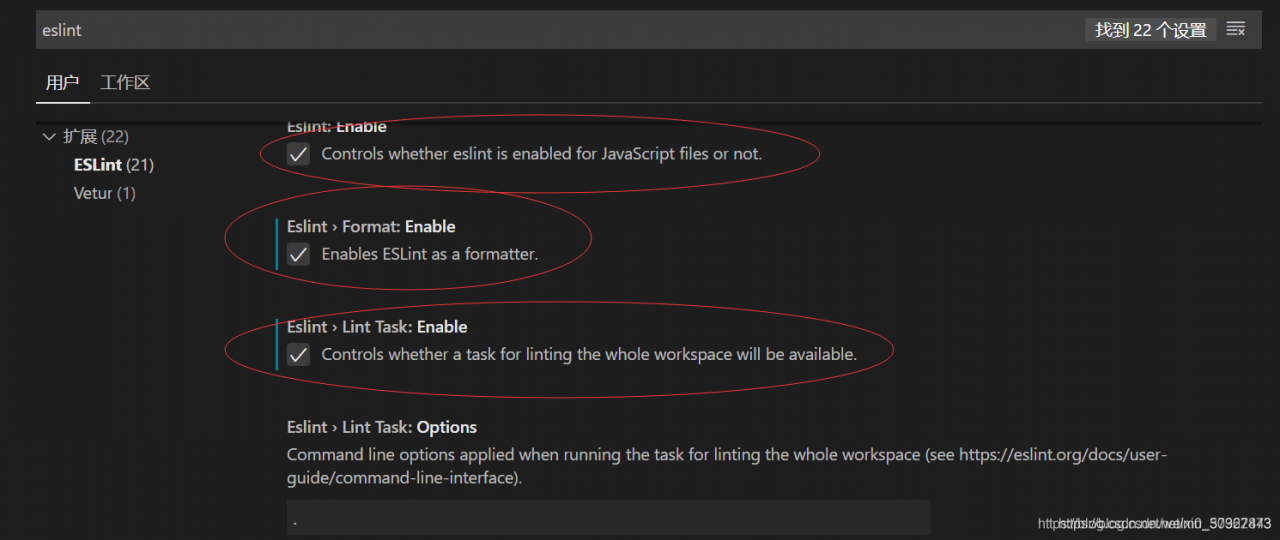

4. Open the Extensions view in the VS Code editor, search for eslint, and disable the ESLint extension. This removes the errors reported in the editor at their source.

5. Check the option shown in the figure to cancel error reporting, then restart VS Code; no errors will be reported during compilation.

6. Set the severity of the offending rule to 0 (0 turns the rule off, 1 reports a warning, 2 reports an error):

    rules: {
      'vue/html-self-closing': 0,
      'vue/html-indent': 0,
      'vue/max-attributes-per-line': [
        1,
        {
          singleline: 10,
          multiline: {
            max: 4,
            allowFirstLine: true
          }
        }
      ],
    }

7. Directly modify the configuration file vue.config.js:

    module.exports = {
      lintOnSave: false
    }

Causes and solutions of the configuration conflict between ESLint and Prettier in VS Code

VS Code uses both the ESLint extension and the Prettier extension. The editor's settings.json is configured as follows:

    {
      "editor.formatOnSave": true, // format automatically on save
      "[javascript]": {
        "editor.defaultFormatter": "esbenp.prettier-vscode" // use Prettier when formatting
      },
      "editor.codeActionsOnSave": {
        "source.fixAll.eslint": true // apply ESLint auto-fixes when saving
      }
    }

The packages eslint, prettier, eslint-config-prettier, and eslint-plugin-prettier are installed in the project, and a .prettierrc file is added to the root directory:

    {
      "singleQuote": true,
      "semi": true
    }