import pandas as pd

try:
    pd.DataFrame(output_data).to_csv(output_path, index=False)
except Exception as exp:
    print("exp=", exp)

Error message:

arrays must all be same length

Cause: symbols in Country_Code. After removing them, the problem seems to go away.
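pandas typically raises this error when output_data is a dict whose values are lists of different lengths. A minimal sketch for spotting the offending columns before building the DataFrame, assuming output_data maps column names to lists (the helper name mismatched_columns and the sample data are hypothetical, not from the original code):

```python
from collections import Counter


def mismatched_columns(output_data):
    """Return {column: length} for columns whose length differs from the most common one."""
    lengths = {name: len(values) for name, values in output_data.items()}
    if not lengths:
        return {}
    # Treat the most common length as the expected row count.
    expected, _ = Counter(lengths.values()).most_common(1)[0]
    return {name: n for name, n in lengths.items() if n != expected}


# Hypothetical sample: Country_Code is one entry short.
output_data = {
    "Name": ["a", "b", "c"],
    "Value": [1, 2, 3],
    "Country_Code": ["US", "CN"],
}
print(mismatched_columns(output_data))
```

An empty result means every column has the same length, so the DataFrame constructor should not fail for this reason.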

Linux: Rust dependency download error

The Cargo error message hints that, if a proxy or similar is necessary, net.git-fetch-with-cli may help.

Create a Cargo config file:

vi ~/.cargo/config

Add a proxy:

[http]

proxy = "127.0.0.1:7891"

[https]

proxy = "127.0.0.1:7891"

Or switch to a mirror registry:

[source.crates-io]

registry = "https://github.com/rust-lang/crates.io-index"

replace-with = 'ustc'

[source.ustc]

registry = "git://mirrors.ustc.edu.cn/crates.io-index"

Tested personally: switching to the mirror registry works.

Fixing the "couldn't resolve host name (could not resolve host: crates)" error when compiling Rust

When compiling a Rust program, the error "couldn't resolve host name (could not resolve host: crates)" may occur (see https://github.com/ustclug/discussions/issues/294).

A temporary workaround is to set the environment variable CARGO_HTTP_MULTIPLEXING=false when running cargo, which disables parallel downloads. For the underlying reason, see the issue linked above, which reports an error building rustc after switching to the mirror.

Tested and working!
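When cargo is launched from a script, the variable can be set only for that child process. A minimal sketch, assuming cargo is started through Python's subprocess module (the helper name cargo_env is hypothetical):

```python
import os


def cargo_env(base_env=None):
    """Return a copy of the environment with Cargo's HTTP multiplexing disabled."""
    env = dict(os.environ if base_env is None else base_env)
    env["CARGO_HTTP_MULTIPLEXING"] = "false"
    return env


# Show the resulting environment for a small hypothetical base environment.
print(cargo_env({"PATH": "/usr/bin"}))
# To build with it:
#   import subprocess
#   subprocess.run(["cargo", "build"], env=cargo_env(), check=True)
```

Copying the environment keeps the setting out of the parent shell, so other tools are unaffected.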

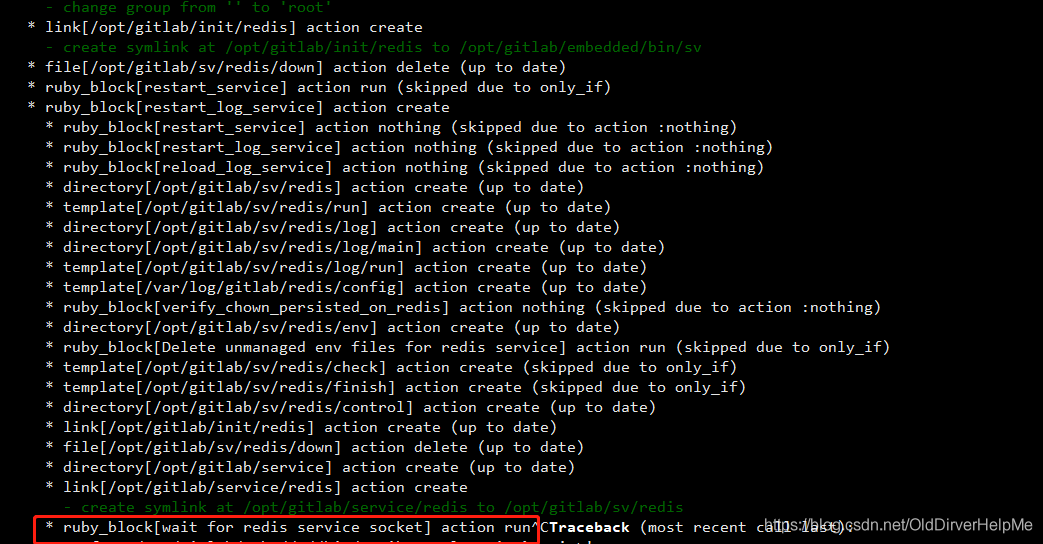

GitLab is stuck at ruby_block[wait for redis service socket] action run

Environment: Ubuntu 20

When installing GitLab, once /etc/gitlab/gitlab.rb is configured, running sudo gitlab-ctl reconfigure gets stuck at this step. Even after a long wait, the log output on the console does not change.

Solution:

Open another terminal and run the following command:

sudo /opt/gitlab/embedded/bin/runsvdir-start

Or execute the above command in the background in the current terminal window

nohup /opt/gitlab/embedded/bin/runsvdir-start &

Then execute

sudo gitlab-ctl reconfigure

type MeshStatus struct {
    // INSERT ADDITIONAL STATUS FIELD - define observed state of cluster
    // Important: Run "make" to regenerate code after modifying this file
    AvailableReplicas int `json:"available_replicas,omitempty"`
    readyReplicas     int `json:"ready_replicas,omitempty"`
    Replicas          int `json:"replicas,omitempty"`
}

Error: struct field readyReplicas has json tag but is not exported

Cause: the field name readyReplicas starts with a lowercase letter, so it is not exported. Rename it to ReadyReplicas.

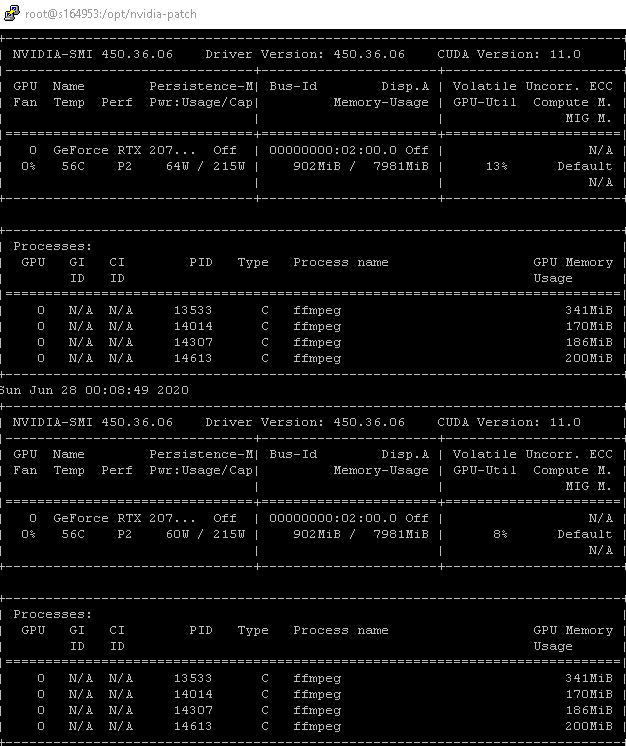

This problem occurs because NVIDIA officially limits its various desktop-class products to 2 concurrent processing sessions. For the detailed restrictions on each product, see https://developer.nvidia.com/video-encode-decode-gpu-support-matrix#Encoder

The restriction can be removed with the following patch:

cd /opt
git clone https://github.com/keylase/nvidia-patch
cd nvidia-patch
# apply the patch to remove the 2-session limit
bash ./patch.sh
# roll back the patch to restore the 2-session limit
bash ./patch.sh -r

Start ffmpeg

The picture above shows 4 ffmpeg hardware transcoding processes running with no error message.

Pay special attention here: the video memory used by the processes must not exceed the card's total video memory. If it does, frame loss, green screens, and other problems occur.

As shown in the figure, our RTX 2070 Super has 8 GB of video memory, and 4 processes take up about 10%, so at roughly 400 MB per process, at most about 18-20 processes can run.
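The estimate can be reproduced with a quick calculation. This is a sketch under stated assumptions: "8G" is taken as 8 GiB (8192 MiB), the 400 figure is taken as MiB per process, and the 800 MiB reserve in the second call is a hypothetical safety margin, not a value from the original text.

```python
TOTAL_VRAM_MIB = 8 * 1024   # assumption: "8G" means 8 GiB
PER_PROCESS_MIB = 400       # per-process estimate from the text


def max_processes(total_mib, per_process_mib, reserve_mib=0):
    """Upper bound on processes that fit in video memory after an optional reserve."""
    return (total_mib - reserve_mib) // per_process_mib


# Without any reserve, and with a hypothetical 800 MiB kept free.
print(max_processes(TOTAL_VRAM_MIB, PER_PROCESS_MIB))
print(max_processes(TOTAL_VRAM_MIB, PER_PROCESS_MIB, reserve_mib=800))
```

With these assumptions the two results bracket the 18-20 range quoted above; actual per-process usage depends on resolution and codec, so measure before relying on it.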

A server with a 10 Gbps port had 2 NVMe drives installed, and one of them frequently dropped offline.

The log from a drive drop is as follows:

Mar 10 13:10:10 10Gbps kernel: nvme nvme0: I/O 183 QID 3 timeout, aborting

Mar 10 13:10:10 10Gbps kernel: nvme nvme0: Abort status: 0x0

Mar 10 13:10:13 10Gbps kernel: nvme nvme0: I/O 384 QID 4 timeout, aborting

Mar 10 13:10:13 10Gbps kernel: nvme nvme0: Abort status: 0x0

Mar 10 13:10:14 10Gbps kernel: nvme nvme0: I/O 626 QID 5 timeout, aborting

Mar 10 13:10:14 10Gbps kernel: nvme nvme0: Abort status: 0x0

Mar 10 13:10:15 10Gbps kernel: nvme nvme0: I/O 879 QID 7 timeout, aborting

Mar 10 13:10:15 10Gbps kernel: nvme nvme0: Abort status: 0x0

Mar 10 13:10:28 10Gbps kernel: nvme nvme0: I/O 221 QID 5 timeout, aborting

Mar 10 13:10:28 10Gbps kernel: nvme nvme0: Abort status: 0x0

Mar 10 13:10:34 10Gbps kernel: nvme nvme0: I/O 510 QID 1 timeout, reset controller

Mar 10 13:10:55 10Gbps kernel: nvme nvme0: Device not ready; aborting reset, CSTS=0x1

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 271133736 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 2052266888 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 2175930376 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 7072889400 op 0x0:(READ) flags 0x80700 phys_seg 17 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 238328656 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 3954686056 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 6440531640 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 7301389616 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 6288979456 op 0x0:(READ) flags 0x80700 phys_seg 4 prio class 0

Mar 10 13:10:55 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 2149595472 op 0x0:(READ) flags 0x80700 phys_seg 6 prio class 0

Mar 10 13:11:16 10Gbps kernel: nvme nvme0: Device not ready; aborting reset, CSTS=0x1

Mar 10 13:11:16 10Gbps kernel: nvme nvme0: Removing after probe failure status: -19

Mar 10 13:11:36 10Gbps kernel: nvme nvme0: Device not ready; aborting reset, CSTS=0x1

Mar 10 13:11:36 10Gbps kernel: print_req_error: 6 callbacks suppressed

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 2000203632 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 68158880 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 4036854464 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 78773728 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 1987798736 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 1986001320 op 0x0:(READ) flags 0x1000 phys_seg 1 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 4082978496 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 5906970992 op 0x0:(READ) flags 0x1000 phys_seg 1 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 3962823040 op 0x0:(READ) flags 0x1000 phys_seg 4 prio class 0

Mar 10 13:11:36 10Gbps kernel: blk_update_request: I/O error, dev nvme0n1, sector 6902965512 op 0x1:(WRITE) flags 0x100000 phys_seg 40 prio class 0

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): metadata I/O error in "xfs_trans_read_buf_map" at daddr 0x160153970 len 8 error 5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): metadata I/O error in "xfs_trans_read_buf_map" at daddr 0xf09d82c0 len 32 error 5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): metadata I/O error in "xfs_trans_read_buf_map" at daddr 0x98fd6a0 len 32 error 5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): metadata I/O error in "xfs_trans_read_buf_map" at daddr 0x4b1fde0 len 32 error 5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: nvme0n1: writeback error on inode 6619987520, offset 0, sector 6902965832

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): metadata I/O error in "xfs_trans_read_buf_map" at daddr 0xec33e180 len 32 error 5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_do_force_shutdown(0x2) called from line 1250 of file fs/xfs/xfs_log.c. Return address = 00000000147aed99

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): Log I/O Error Detected. Shutting down filesystem

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): Please unmount the filesystem and rectify the problem(s)

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): log I/O error -5

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:36 10Gbps kernel: nvme0n1: writeback error on inode 77133302, offset 0, sector 684881640

Mar 10 13:11:36 10Gbps kernel: XFS (nvme0n1): xfs_imap_to_bp: xfs_trans_read_buf() returned error -5.

Mar 10 13:11:39 10Gbps kernel: VFS: busy inodes on changed media or resized disk nvme0n1

Mar 10 13:11:39 10Gbps kernel: nvme nvme0: failed to set APST feature (-19)

Mar 10 13:11:39 10Gbps systemd[1]: Stopped target Local File Systems.
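To see how often a controller times out before it drops, the timeout lines in a log like the one above can be tallied. A minimal sketch, assuming the log lines keep the exact wording shown above (the names TIMEOUT_RE and tally_timeouts are hypothetical):

```python
import re
from collections import Counter

# Matches lines such as:
#   ... kernel: nvme nvme0: I/O 183 QID 3 timeout, aborting
TIMEOUT_RE = re.compile(
    r"nvme (nvme\d+): I/O \d+ QID (\d+) timeout, (aborting|reset controller)"
)


def tally_timeouts(lines):
    """Count NVMe I/O timeouts per (controller, action)."""
    counts = Counter()
    for line in lines:
        match = TIMEOUT_RE.search(line)
        if match:
            controller, _qid, action = match.groups()
            counts[(controller, action)] += 1
    return counts


sample = [
    "Mar 10 13:10:10 10Gbps kernel: nvme nvme0: I/O 183 QID 3 timeout, aborting",
    "Mar 10 13:10:10 10Gbps kernel: nvme nvme0: Abort status: 0x0",
    "Mar 10 13:10:34 10Gbps kernel: nvme nvme0: I/O 510 QID 1 timeout, reset controller",
]
print(tally_timeouts(sample))
```

A burst of "aborting" entries followed by "reset controller" matches the pattern seen in the log shortly before the device is removed.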

Analysis:

Most likely this is caused by intensive reads and writes. This is a CDN cache node, so the workload is read-heavy.

The NVMe temperature is relatively high. If that continues, the drive starts to throttle and then gradually fails.

Also, this is a consumer product from ADATA's XPG line, not an enterprise-grade drive.

Solution:

1. Replace it with a drive from Samsung's PRO series; the other two use Samsung products and have no problems.

2. Try to improve heat dissipation.

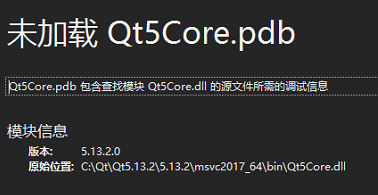

When releasing a build, I used windeployqt to collect the required libraries, but inadvertently ran it directly in C:\Qt\Qt5.13.2\5.13.2\msvc2017_64\bin, which overwrote Qt5Core.dll and caused debugging to fail.

It can be solved by restoring the original Qt5Core.dll.

I nearly made myself cry.

First, install portaudio:

brew install portaudio

Next, find the installation path of portaudio:

sudo find / -name "portaudio.h"

In my case, the header is at /opt/homebrew/Cellar/portaudio/19.7.0/include/portaudio.h

Finally, replace the portaudio path with your own and run the following command:

pip install --global-option='build_ext' --global-option='-I/opt/homebrew/Cellar/portaudio/19.7.0/include' --global-option='-L/opt/homebrew/Cellar/portaudio/19.7.0/lib' pyaudio
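Since the include and lib directories share one prefix, the command can be assembled from that prefix alone. A minimal sketch, assuming the directory found above contains include/ and lib/ (the helper name pyaudio_install_command is hypothetical):

```python
from pathlib import PurePosixPath


def pyaudio_install_command(portaudio_prefix):
    """Build the pip command from the directory that contains include/ and lib/."""
    prefix = PurePosixPath(portaudio_prefix)
    return [
        "pip", "install",
        "--global-option=build_ext",
        f"--global-option=-I{prefix / 'include'}",
        f"--global-option=-L{prefix / 'lib'}",
        "pyaudio",
    ]


cmd = pyaudio_install_command("/opt/homebrew/Cellar/portaudio/19.7.0")
print(" ".join(cmd))
# To run it:
#   import subprocess
#   subprocess.run(cmd, check=True)
```

Passing the arguments as a list avoids shell quoting problems if the path contains spaces.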

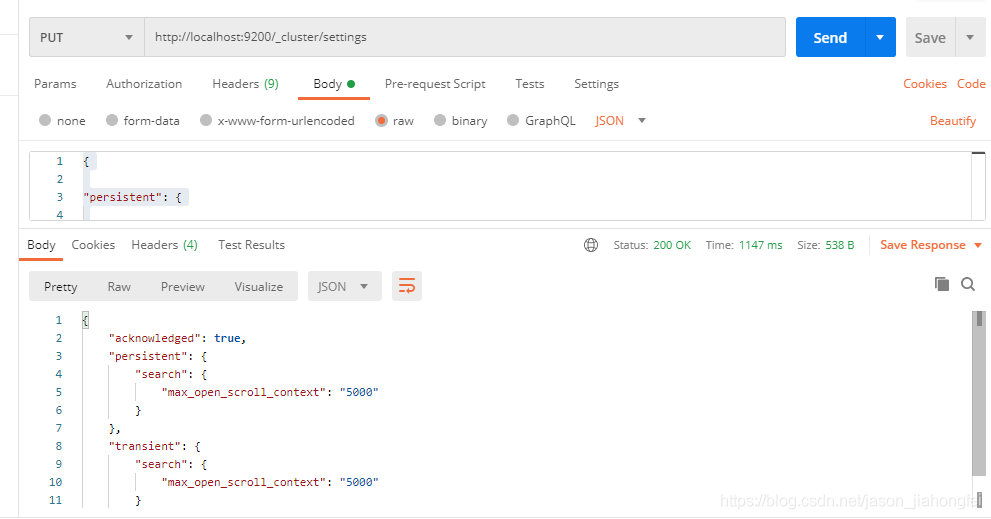

What happened

I installed Elasticsearch 7.13.3, uninstalled it, and then installed Elasticsearch 7.13.2. On startup it reported an error:

java.lang.IllegalStateException: cannot downgrade a node from version [7.13.3] to version [7.13.2]

at org.elasticsearch.env.NodeMetadata.upgradeToCurrentVersion(NodeMetadata.java:83) ~[elasticsearch-7.13.2.jar:7.13.2]

at org.elasticsearch.env.NodeEnvironment.loadNodeMetadata(NodeEnvironment.java:423) ~[elasticsearch-7.13.2.jar:7.13.2]

at org.elasticsearch.env.NodeEnvironment.<init>(NodeEnvironment.java:320) ~[elasticsearch-7.13.2.jar:7.13.2]

at org.elasticsearch.node.Node.<init>(Node.java:368) ~[elasticsearch-7.13.2.jar:7.13.2]

at org.elasticsearch.node.Node.<init>(Node.java:278) ~[elasticsearch-7.13.2.jar:7.13.2]

at org.elasticsearch.bootstrap.Bootstrap$5.<init>(Bootstrap.java:217) ~[elasticsearch-7.13.2.jar:7.13.2]

at org.elasticsearch.bootstrap.Bootstrap.setup(Bootstrap.java:217) ~[elasticsearch-7.13.2.jar:7.13.2]

at org.elasticsearch.bootstrap.Bootstrap.init(Bootstrap.java:397) [elasticsearch-7.13.2.jar:7.13.2]

at org.elasticsearch.bootstrap.Elasticsearch.init(Elasticsearch.java:159) [elasticsearch-7.13.2.jar:7.13.2]

at org.elasticsearch.bootstrap.Elasticsearch.execute(Elasticsearch.java:150) [elasticsearch-7.13.2.jar:7.13.2]

at org.elasticsearch.cli.EnvironmentAwareCommand.execute(EnvironmentAwareCommand.java:75) [elasticsearch-7.13.2.jar:7.13.2]

at org.elasticsearch.cli.Command.mainWithoutErrorHandling(Command.java:116) [elasticsearch-cli-7.13.2.jar:7.13.2]

at org.elasticsearch.cli.Command.main(Command.java:79) [elasticsearch-cli-7.13.2.jar:7.13.2]

at org.elasticsearch.bootstrap.Elasticsearch.main(Elasticsearch.java:115) [elasticsearch-7.13.2.jar:7.13.2]

at org.elasticsearch.bootstrap.Elasticsearch.main(Elasticsearch.java:81) [elasticsearch-7.13.2.jar:7.13.2]

Cause

Elasticsearch 7.13.3 was not uninstalled completely; some data remained under /var/lib/elasticsearch, and it needs to be deleted as well.

The versions of compileSdkVersion and buildToolsVersion in Gradle are too high and exceed what Android Studio supports.

Solution:

Send a PUT request to http://localhost:9200/_cluster/settings to set the value of search.max_open_scroll_context:

{
    "persistent": {
        "search.max_open_scroll_context": 5000
    },
    "transient": {
        "search.max_open_scroll_context": 5000
    }
}
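The settings body above can be sent with Python's standard library. A minimal sketch, assuming an unsecured node at http://localhost:9200 as in the URL above (the helper name build_scroll_limit_request is hypothetical; the request is only built here, not sent):

```python
import json
import urllib.request


def build_scroll_limit_request(limit, base_url="http://localhost:9200"):
    """Build (but do not send) a PUT request that sets search.max_open_scroll_context."""
    body = {
        "persistent": {"search.max_open_scroll_context": limit},
        "transient": {"search.max_open_scroll_context": limit},
    }
    return urllib.request.Request(
        url=f"{base_url}/_cluster/settings",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="PUT",
    )


request = build_scroll_limit_request(5000)
print(request.get_method(), request.full_url)
# To apply it against a running node:
#   with urllib.request.urlopen(request) as response:
#       print(response.read().decode("utf-8"))
```

A cluster with authentication or TLS enabled would need credentials and an https URL, which this sketch leaves out.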

Android Studio reports this error when running the app:

Manifest merger failed : Apps targeting Android 12 and higher are required to specify an explicit value for `android:exported` when the corresponding component has an intent filter defined. See https://developer.android.com/guide/topics/manifest/activity-element#exported for details.

Environment used: Android studio 4.2.2, Pixel 2 API 29

Solution:

Add android:exported to the activity in the AndroidManifest.xml file, e.g.:

<activity android:name=".MainActivity" android:exported="true">