Broker Load statement

LOAD

LABEL gaofeng_broker_load_HDD

(

DATA INFILE("hdfs://eoop/user/coue_data/hive_db/couta_test/ader_lal_offline_0813_1")

INTO TABLE ads_user

)

WITH BROKER "hdfs_broker"

(

"dfs.nameservices"="eadhadoop",

"dfs.ha.namenodes.eadhadoop" = "nn1,nn2",

"dfs.namenode.rpc-address.eadhadoop.nn1" = "h4:8000",

"dfs.namenode.rpc-address.eadhadoop.nn2" = "z7:8000",

"dfs.client.failover.proxy.provider.eadhadoop" = "org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider",

"hadoop.security.authentication" = "kerberos","kerberos_principal" = "ou3.CN",

"kerberos_keytab_content" = "BQ8uMTYzLkNPTQALY291cnNlXgAAAAFfVyLbAQABAAgCtp0qmxxP8QAAAAE="

);

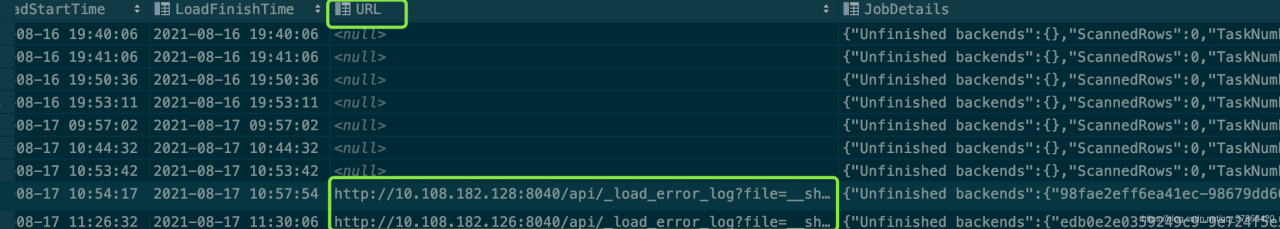

Error reported:

Task cancelled

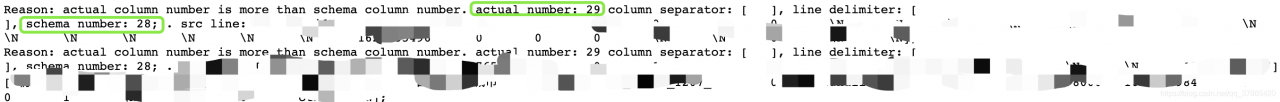

type:ETL_RUN_FAIL; msg:errCode = 2, detailMessage = No source file in this table(ads_user).
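To see this message without relying on a screenshot, the job can be looked up by its label. This is a minimal sketch, assuming the statement is run in the same database as the load job; the label is the one from the statement above.

```sql
-- Look up the load job by the label used in the LOAD statement.
-- The State and ErrorMsg columns should show the cancellation and its reason.
SHOW LOAD WHERE LABEL = "gaofeng_broker_load_HDD";
```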

Solution:

The data file path in the broker load statement is wrong: it must match files, not a directory. The path I used is the directory the table was exported to directly. That directory itself cannot be used in broker load, but the many files under it can.

So change the path from

hdfs://eoop/user/coue_data/hive_db/couta_test/ader_lal_offline_0813_1

to

hdfs://eoop/user/coue_data/hive_db/couta_test/ader_lal_offline_0813_1/*

and the load succeeds.
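For reference, the whole statement with the corrected path might look like the sketch below. Only the DATA INFILE line differs from the original; the broker name and all HDFS and Kerberos properties are copied unchanged from the statement at the top, and the label is assumed to be reused.

```sql
LOAD LABEL gaofeng_broker_load_HDD
(
    -- The trailing wildcard matches the files inside the export directory
    DATA INFILE("hdfs://eoop/user/coue_data/hive_db/couta_test/ader_lal_offline_0813_1/*")
    INTO TABLE ads_user
)
WITH BROKER "hdfs_broker"
(
    "dfs.nameservices" = "eadhadoop",
    "dfs.ha.namenodes.eadhadoop" = "nn1,nn2",
    "dfs.namenode.rpc-address.eadhadoop.nn1" = "h4:8000",
    "dfs.namenode.rpc-address.eadhadoop.nn2" = "z7:8000",
    "dfs.client.failover.proxy.provider.eadhadoop" = "org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider",
    "hadoop.security.authentication" = "kerberos",
    "kerberos_principal" = "ou3.CN",
    "kerberos_keytab_content" = "BQ8uMTYzLkNPTQALY291cnNlXgAAAAFfVyLbAQABAAgCtp0qmxxP8QAAAAE="
);
```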

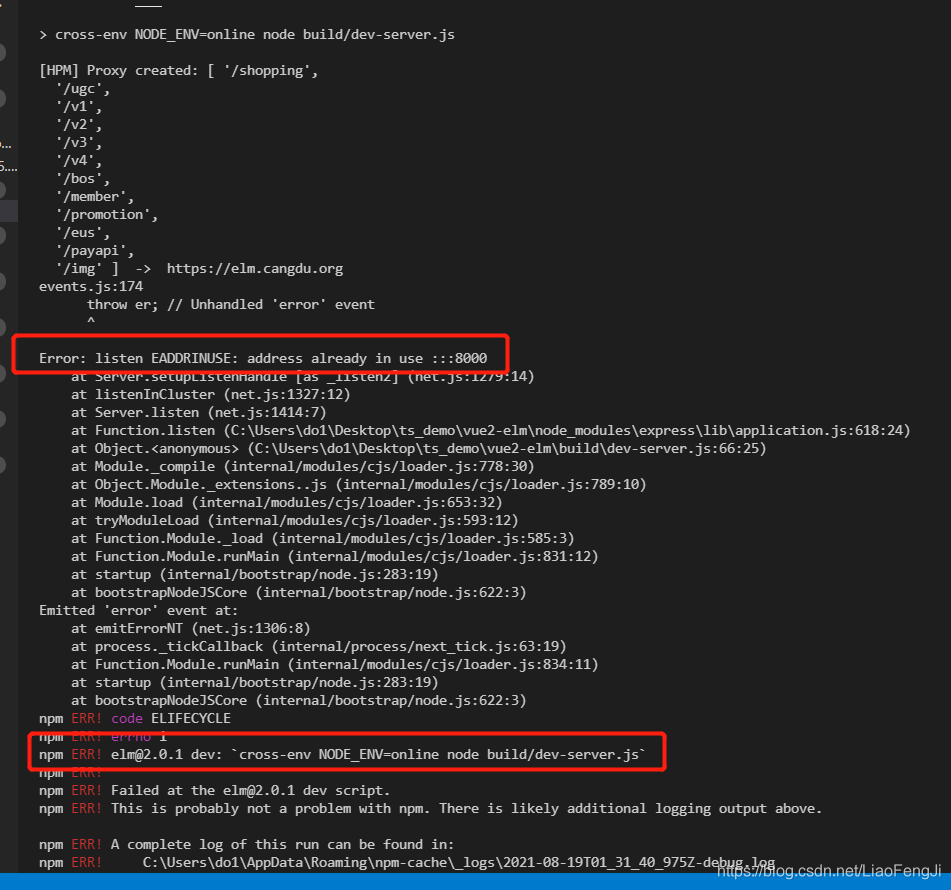

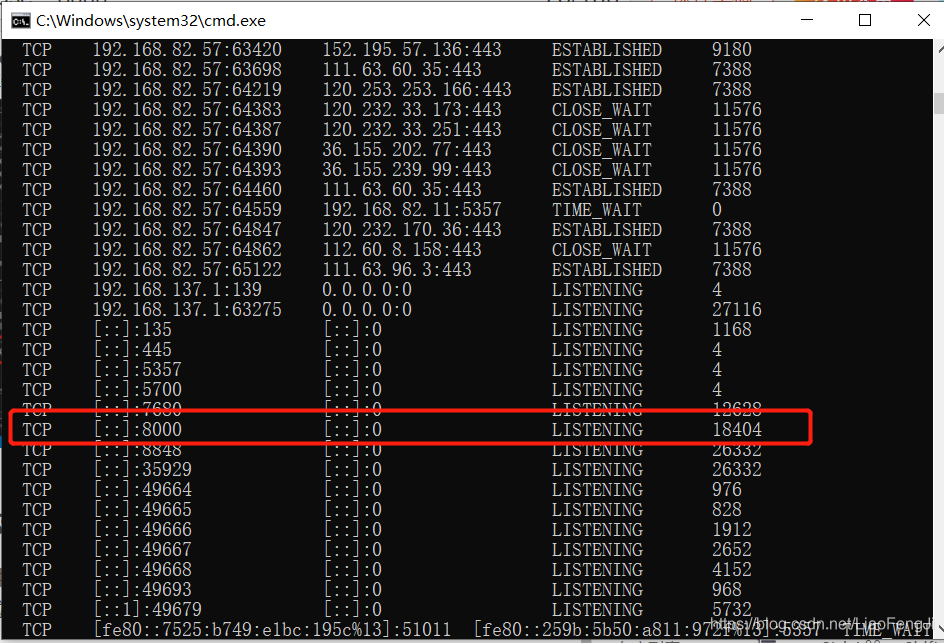

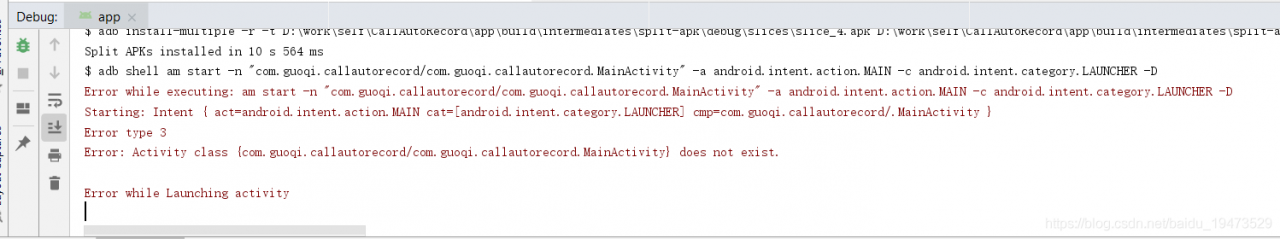

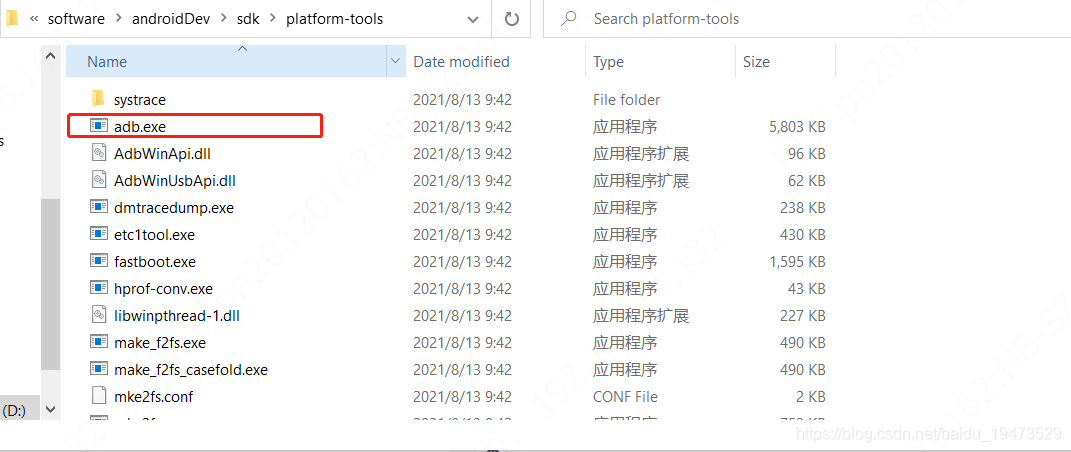

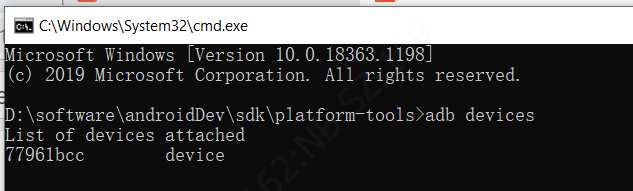

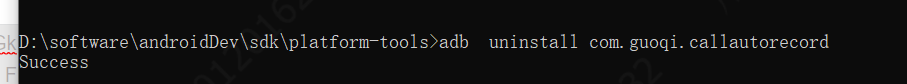
