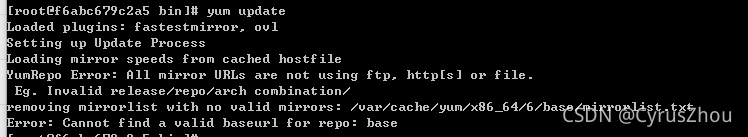

Error Message:

Loaded plugins: fastestmirror, ovl

Setting up Update Process

Loading mirror speeds from cached hostfile

YumRepo Error: All mirror URLs are not using ftp, http[s] or file.

Eg. Invalid release/repo/arch combination/

removing mirrorlist with no valid mirrors: /var/cache/yum/x86_64/6/base/mirrorlist.txt

Error: Cannot find a valid baseurl for repo: base

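Solution:

CentOS 6 has reached end of life, so its packages are no longer served from the regular mirrors and are only available from vault.centos.org. Point the repos at the vault instead of the mirrorlist: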

mv /etc/yum.repos.d/CentOS-Base.repo /etc/yum.repos.d/CentOS-Base.repo.old # Back up the original repo file

vi /etc/yum.repos.d/CentOS-Base.repo # Create a new /etc/yum.repos.d/CentOS-Base.repo with the following contents

[base]

name=CentOS-$releasever - Base

baseurl=https://vault.centos.org/6.10/os/$basearch/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-6

[updates]

name=CentOS-$releasever - Updates

baseurl=https://vault.centos.org/6.10/updates/$basearch/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-6

[extras]

name=CentOS-$releasever - Extras

baseurl=https://vault.centos.org/6.10/extras/$basearch/

gpgcheck=1

gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-6
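
If you would rather not edit the file by hand, the same repo file can be generated from a script. The following is only a sketch: `repo_section` and `write_vault_repo` are hypothetical helper names made up for this note (they are not part of yum), and the sketch assumes the three sections above are all you need.

```shell
#!/bin/sh
# Sketch only: repo_section and write_vault_repo are hypothetical helpers,
# not yum commands. They reproduce the repo file shown above.

# Print one repo section.
# $1 = section id, $2 = display label, $3 = directory under 6.10/
repo_section() {
    printf '[%s]\n' "$1"
    # Single quotes keep $releasever and $basearch literal so yum expands them.
    printf 'name=CentOS-$releasever - %s\n' "$2"
    printf 'baseurl=https://vault.centos.org/6.10/%s/$basearch/\n' "$3"
    printf 'gpgcheck=1\n'
    printf 'gpgkey=file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-6\n\n'
}

# Write the base, updates and extras sections to the file given as $1.
write_vault_repo() {
    {
        repo_section base Base os
        repo_section updates Updates updates
        repo_section extras Extras extras
    } > "$1"
}

# Usage (as root, after backing up the original file):
#   write_vault_repo /etc/yum.repos.d/CentOS-Base.repo
#   yum clean all    # drop the cached mirrorlist and metadata
#   yum makecache    # rebuild the cache from the vault URLs
```

After the file is in place, running `yum clean all` followed by `yum makecache` should clear the stale mirrorlist cache named in the error message and confirm that the new baseurl entries are reachable.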