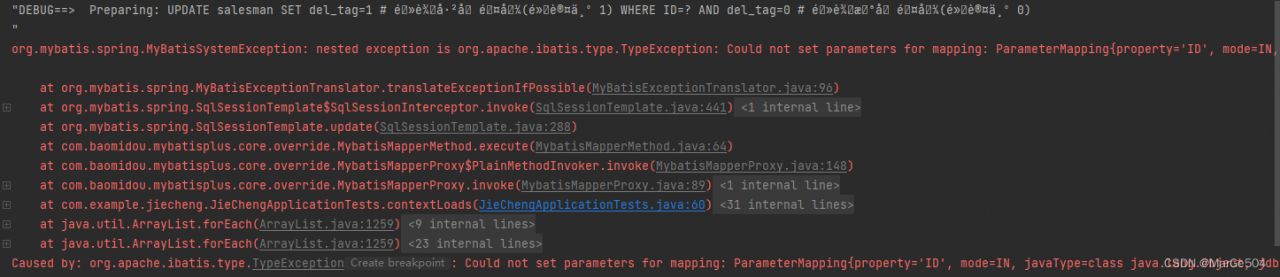

Error details

When using MyBatis-Plus with multiple data sources, the application fails at startup because the primary (master) data source cannot be found:

com.baomidou.dynamic.datasource.exception.CannotFindDataSourceException: dynamic-datasource can not find primary datasource

at com.baomidou.dynamic.datasource.DynamicRoutingDataSource.determinePrimaryDataSource(DynamicRoutingDataSource.java:91) ~[dynamic-datasource-spring-boot-starter-3.5.1.jar:3.5.1]

at com.baomidou.dynamic.datasource.DynamicRoutingDataSource.getDataSource(DynamicRoutingDataSource.java:120) ~[dynamic-datasource-spring-boot-starter-3.5.1.jar:3.5.1]

at com.baomidou.dynamic.datasource.DynamicRoutingDataSource.determineDataSource(DynamicRoutingDataSource.java:78) ~[dynamic-datasource-spring-boot-starter-3.5.1.jar:3.5.1]

at com.baomidou.dynamic.datasource.ds.AbstractRoutingDataSource.getConnection(AbstractRoutingDataSource.java:48) ~[dynamic-datasource-spring-boot-starter-3.5.1.jar:3.5.1]

......

Solution:

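① The multi-data-source dependency has been added, but multiple data sources are not actually configured or used. In that case, either remove the dependency or configure the data sources as described in ②.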

    <!-- This is the dependency version I use -->
    <dependency>
        <groupId>com.baomidou</groupId>
        <artifactId>dynamic-datasource-spring-boot-starter</artifactId>
        <version>3.5.1</version>
    </dependency>

    <!-- As given in the documentation -->
    <dependency>
        <groupId>com.baomidou</groupId>
        <artifactId>dynamic-datasource-spring-boot-starter</artifactId>
        <version>${version}</version>
    </dependency>

Multiple data source usage: use @DS to switch data sources.

@DS can be placed on a method or on a class. The nearest annotation wins: an annotation on a method takes precedence over an annotation on the class.

| Annotation | Result |
| --- | --- |
| no @DS | Default data source |
| @DS("databaseName") | databaseName can be a group name or the name of a specific database |
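The precedence rule in the table can be sketched in plain Java. This is only an illustration of the lookup order (method, then class, then default), not the library's implementation: the `DS` annotation here is a local stand-in declared so the example compiles without the starter on the classpath, and `UserService`, `PlainService`, and `resolve` are hypothetical names.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.lang.reflect.Method;

class DsProximitySketch {

    // Local stand-in for the library's @DS annotation (assumption: same shape, a single String value).
    @Retention(RetentionPolicy.RUNTIME)
    @Target({ElementType.TYPE, ElementType.METHOD})
    @interface DS {
        String value();
    }

    // Hypothetical service: a class-level annotation plus one method-level override.
    @DS("slave")
    static class UserService {
        public void listUsers() { }   // no method annotation, so the class-level "slave" applies

        @DS("master")
        public void saveUser() { }    // the method annotation takes precedence
    }

    // Hypothetical service without any @DS annotation.
    static class PlainService {
        public void ping() { }
    }

    // Lookup order: annotation on the method, then on the class, then the primary data source.
    static String resolve(Class<?> type, String methodName, String primary) {
        Method method;
        try {
            method = type.getMethod(methodName);
        } catch (NoSuchMethodException e) {
            throw new IllegalArgumentException("no public method named " + methodName, e);
        }
        DS onMethod = method.getAnnotation(DS.class);
        if (onMethod != null) {
            return onMethod.value();
        }
        DS onClass = type.getAnnotation(DS.class);
        if (onClass != null) {
            return onClass.value();
        }
        return primary;
    }

    public static void main(String[] args) {
        System.out.println(resolve(UserService.class, "saveUser", "master"));
        System.out.println(resolve(UserService.class, "listUsers", "master"));
        System.out.println(resolve(PlainService.class, "ping", "master"));
    }
}
```

In a real project the annotation would come from the starter and the switching would happen through its own interception mechanism; the sketch only shows which name ends up being chosen.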

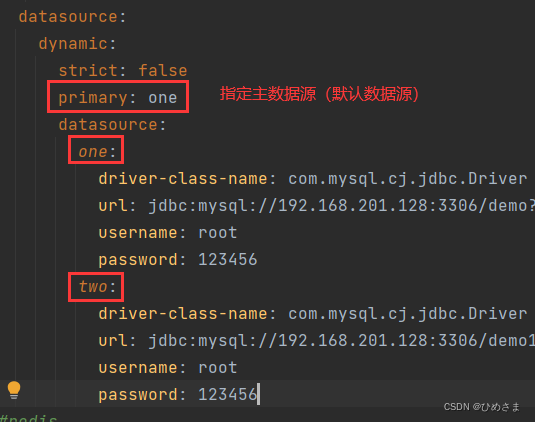

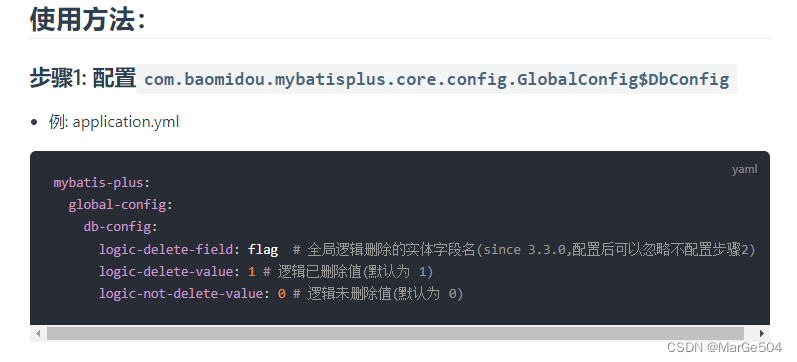

② Multiple data sources are used, but the primary data source is not specified

    spring:
      datasource:
        dynamic:
          primary: master # Sets the default data source or data source group; the default value is master
          strict: false # Strict matching of data sources, default false. true: throw an exception if the specified data source is not found; false: fall back to the default data source
          datasource:
            master:
              url: jdbc:mysql://xx.xx.xx.xx:3306/dynamic
              username: root
              password: 123456
              driver-class-name: com.mysql.jdbc.Driver # Can be omitted since 3.2.0, which supports SPI
            slave_1:
              url: jdbc:mysql://xx.xx.xx.xx:3307/dynamic
              username: root
              password: 123456
              driver-class-name: com.mysql.jdbc.
            slave_2:
              url: ENC(xxxxxx) # Built-in encryption; see the detailed documentation for usage
              username: ENC(xxxxxxxxxx)
              password: ENC(xxxxxxxxxx)
              driver-class-name: com.mysql.jdbc.
            # ...... omitted
    # The configuration above defines a default database, master, and a group, slave, with two member databases: slave_1 and slave_2
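To see why a missing or misnamed primary leads to the exception at the top, here is a simplified model of the routing rules described in the configuration comments. It is a sketch under assumptions, not the starter's code: `RoutingSketch` and its methods are hypothetical names, data sources are represented by their names only, and a group always returns its first member, whereas the real starter chooses a member with a load-balancing strategy.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class RoutingSketch {
    // name -> JDBC URL; the URL string stands in for a real DataSource object
    private final Map<String, String> dataSources = new LinkedHashMap<>();
    // group name -> member names, e.g. "slave" -> [slave_1, slave_2]
    private final Map<String, List<String>> groups = new LinkedHashMap<>();
    private final String primary;
    private final boolean strict;

    RoutingSketch(String primary, boolean strict) {
        this.primary = primary;
        this.strict = strict;
    }

    // Register a data source; the part of the name before the first underscore is treated as its group.
    void add(String name, String url) {
        dataSources.put(name, url);
        int idx = name.indexOf('_');
        if (idx > 0) {
            groups.computeIfAbsent(name.substring(0, idx), k -> new ArrayList<>()).add(name);
        }
    }

    // Resolve a requested name (for example the value of @DS) to a concrete data source name.
    String determine(String requested) {
        if (requested == null || requested.isEmpty()) {
            return determinePrimary();
        }
        if (groups.containsKey(requested)) {
            return groups.get(requested).get(0); // simplification: always the first member
        }
        if (dataSources.containsKey(requested)) {
            return requested;
        }
        if (strict) {
            throw new IllegalStateException("can not find datasource named " + requested);
        }
        return determinePrimary(); // strict = false: fall back to the default
    }

    // The primary may be a single data source or a group; if neither exists, startup cannot succeed.
    String determinePrimary() {
        if (dataSources.containsKey(primary)) {
            return primary;
        }
        if (groups.containsKey(primary)) {
            return groups.get(primary).get(0);
        }
        throw new IllegalStateException("can not find primary datasource: " + primary);
    }
}
```

With `primary` left at its default of `master` and only data sources named `one` and `two` registered, `determinePrimary()` in this model throws, which mirrors the situation behind the reported error.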

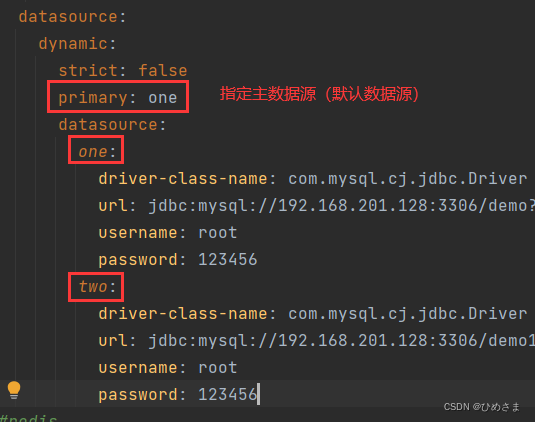

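③ Check carefully that the configuration is aligned (indented) correctly. YAML nesting is determined by indentation, so a misaligned key ends up under the wrong parent and the data sources are not found.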

    # Correct format
    spring:
      datasource:
        dynamic:
          strict: false
          primary: one
          datasource:
            one:
              driver-class-name: com.mysql.cj.jdbc.Driver
              url: jdbc:mysql://localhost:3306/demo?allowMultiQueries=true&zeroDateTimeBehavior=convertToNull&useUnicode=true&characterEncoding=utf-8&serverTimezone=Asia/Shanghai&useSSL=false
              username: root
              password: 123456
            two:
              driver-class-name: com.mysql.cj.jdbc.Driver
              url: jdbc:mysql://localhost:3306/demo1?allowMultiQueries=true&zeroDateTimeBehavior=convertToNull&useUnicode=true&characterEncoding=utf-8&serverTimezone=Asia/Shanghai&useSSL=false
              username: root
              password: 123456
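An alignment mistake can be spotted by looking at the flattened property keys that the YAML produces. The following is a rough sketch of such a check, assuming the keys have already been flattened into a map of dotted names; `DynamicConfigCheck` and its methods are hypothetical helpers, not part of Spring Boot or the starter.

```java
import java.util.Map;
import java.util.Set;
import java.util.TreeSet;

class DynamicConfigCheck {
    // Prefix under which each data source must appear when the YAML is indented correctly.
    static final String PREFIX = "spring.datasource.dynamic.datasource.";
    static final String PRIMARY_KEY = "spring.datasource.dynamic.primary";

    // Collect the data source names that are defined under the expected prefix.
    static Set<String> definedNames(Map<String, String> props) {
        Set<String> names = new TreeSet<>();
        for (String key : props.keySet()) {
            if (key.startsWith(PREFIX)) {
                String rest = key.substring(PREFIX.length());
                int dot = rest.indexOf('.');
                if (dot > 0) {
                    names.add(rest.substring(0, dot));
                }
            }
        }
        return names;
    }

    // True when the configured primary (default: master) matches one of the defined data sources.
    // Note: this sketch ignores group names, which the primary setting may also refer to.
    static boolean primaryIsDefined(Map<String, String> props) {
        String primary = props.getOrDefault(PRIMARY_KEY, "master");
        return definedNames(props).contains(primary);
    }
}
```

For the correct format above, the keys include `spring.datasource.dynamic.datasource.one.url`, so the check passes for `primary: one`. If the inner `datasource:` block were indented one level too shallow, the same entry would flatten to something like `spring.datasource.datasource.one.url` and the check would fail.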