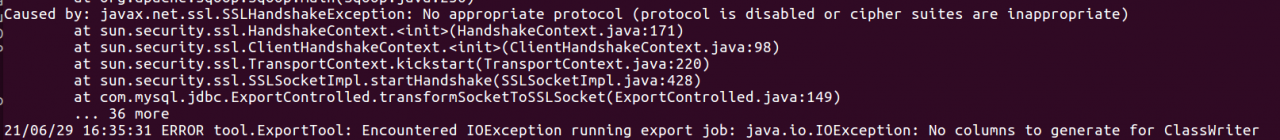

The error is as follows

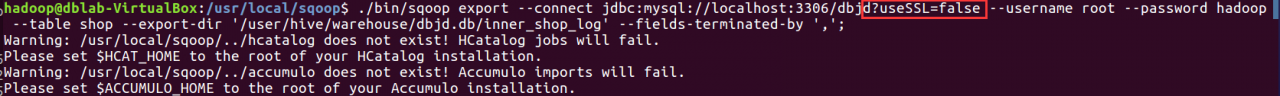

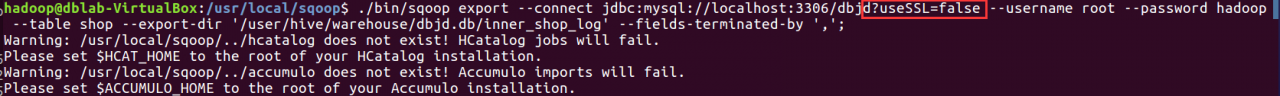

Solution: append useSSL=false to the MySQL connection URL, as shown in the figure below.

Then run the import again.
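As a rough illustration of the fix above, the following sketch appends the option to a JDBC URL. The host, port, and database name are hypothetical, and the helper `withSslDisabled` is not part of any library; it only shows where the parameter goes in the URL string.

```java
public class JdbcUrlExample {
    // Appends useSSL=false to a MySQL JDBC URL unless a useSSL option is already present.
    public static String withSslDisabled(String jdbcUrl) {
        if (jdbcUrl.contains("useSSL=")) {
            return jdbcUrl; // leave an explicit setting untouched
        }
        // The first option follows '?', later options follow '&'.
        String separator = jdbcUrl.contains("?") ? "&" : "?";
        return jdbcUrl + separator + "useSSL=false";
    }

    public static void main(String[] args) {
        // Hypothetical connection URLs, used only for illustration.
        System.out.println(withSslDisabled("jdbc:mysql://localhost:3306/demo"));
        System.out.println(withSslDisabled("jdbc:mysql://localhost:3306/demo?characterEncoding=utf8"));
    }
}
```

Disabling SSL is reasonable for a local or trusted network; for anything else, configuring certificates properly is likely the better choice.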

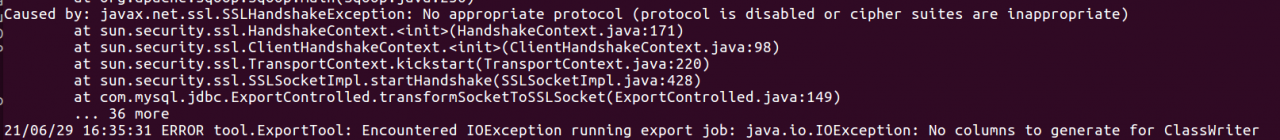


Modifying decompiled code is error-prone. It is recommended to make the change in the following way:

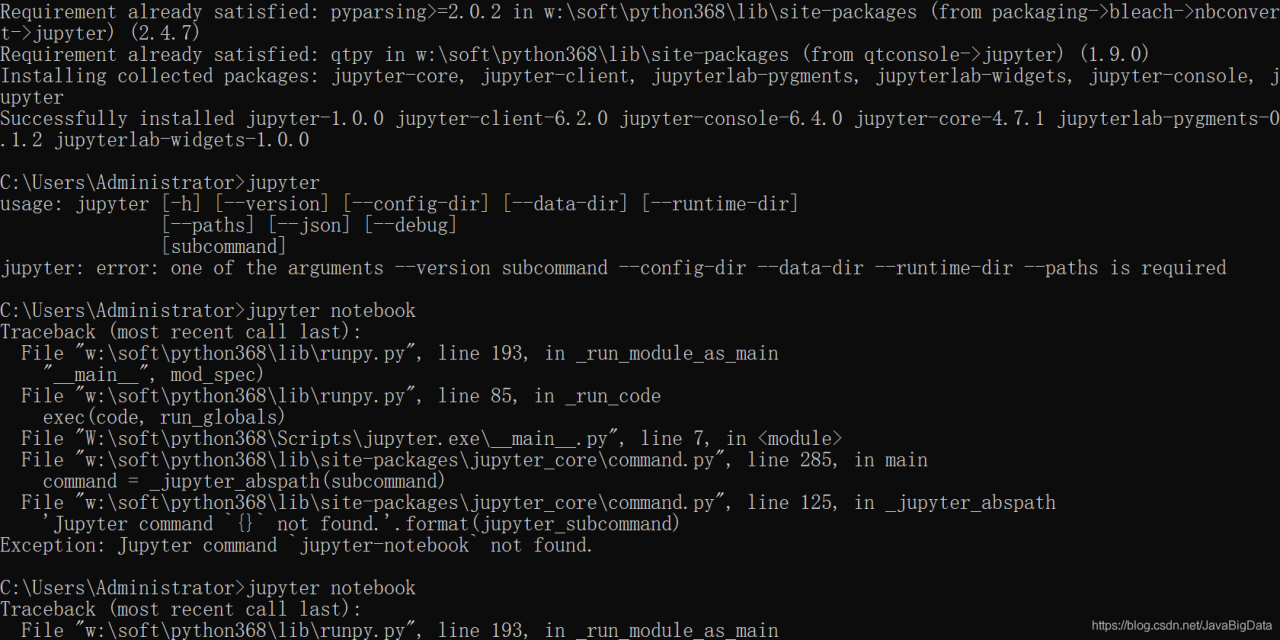

pip uninstall jupyter jupyter-client jupyter-console jupyter-core jupyterlab-pygments jupyterlab-widgets

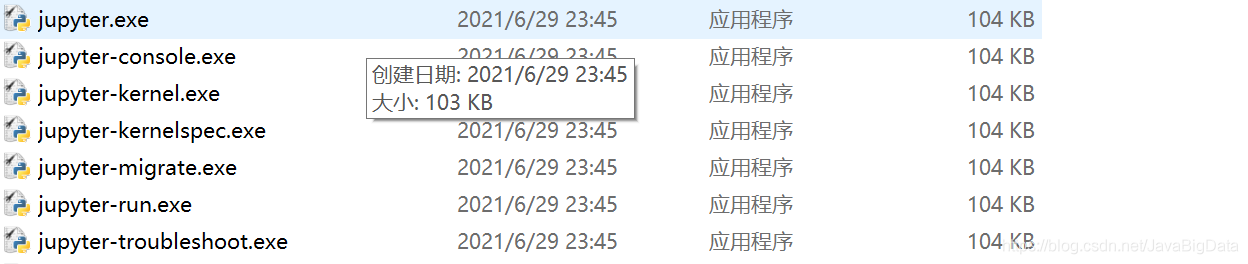

Delete the remaining Jupyter files in the Scripts directory.

Then reinstall with: pip install jupyter

Problem description: when using the scp command to copy a file to another server, the following error appears:

Permission denied, please try again.

Solution

1. Check whether the SSH server allows root login

Configuration file path: /etc/ssh/sshd_config

PermitRootLogin no (or without-password)

Change to:

PermitRootLogin yes

Restart the sshd service for the change to take effect.

2. Check whether you have sufficient permissions on the destination folder

Check whether the folder is writable, which user created it, and which user and group own it.

chmod 766 /usr/local/backup/

3. Check whether the SCP copy syntax is correct (whether the user name is correct/valid)

scp -r log [email protected] :/usr/local/backup

Please check the user name carefully

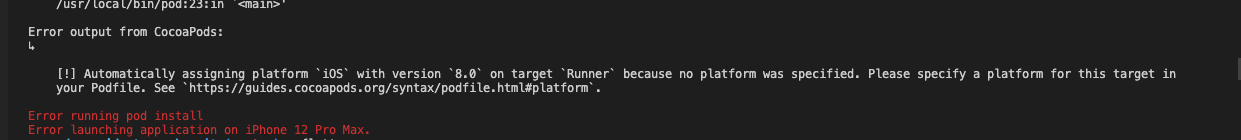

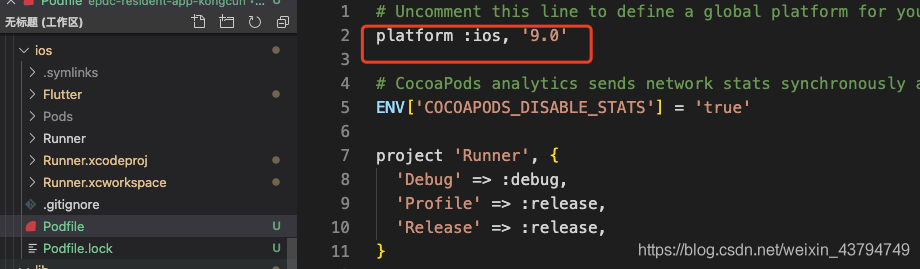

Environment: macOS. Running flutter run in VS Code reports the following error:

The relevant code had previously been commented out and has now been restored. The Podfile is generated automatically when the project runs on macOS, and running flutter run afterwards reports the error.

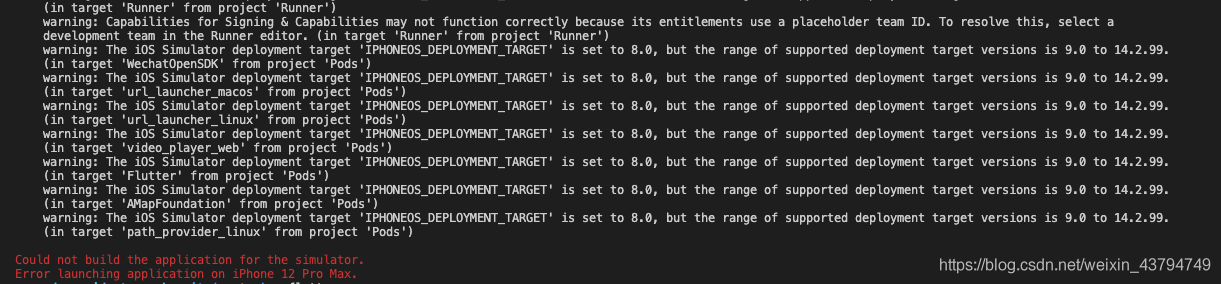

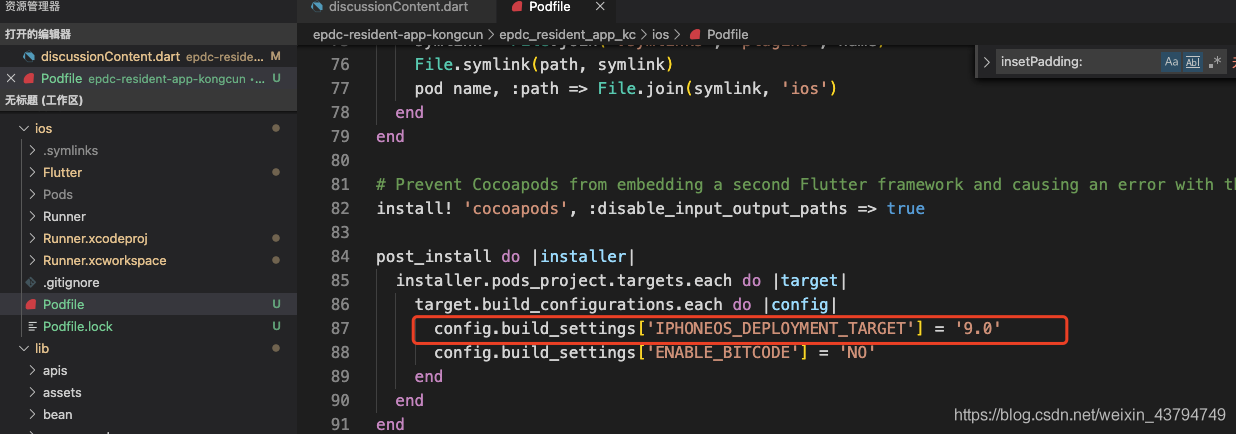

Go to the Podfile and add the following configuration:

    post_install do |installer|
      installer.pods_project.targets.each do |target|
        target.build_configurations.each do |config|
          config.build_settings['IPHONEOS_DEPLOYMENT_TARGET'] = '9.0'
          config.build_settings['ENABLE_BITCODE'] = 'NO'
        end
      end
    end

Then run flutter run again; the project starts successfully.

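PostgreSQL provides a developer option, zero_damaged_pages. When it is on, a page with a damaged header produces a warning instead of an error, and the page is zeroed out in memory so processing can continue. All rows on the damaged pages are lost, so use it only as a last resort for recovering the rest of the table.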

database$> SET zero_damaged_pages = on;

database$> VACUUM FULL damaged_table;

database$> REINDEX TABLE damaged_table;

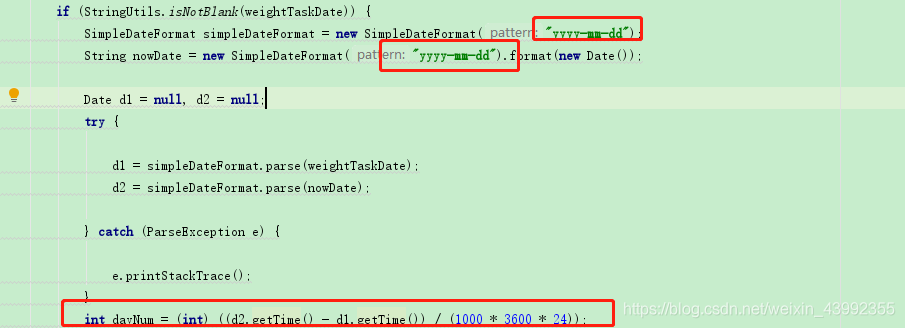

String to date:

SimpleDateFormat simpleDateFormat = new SimpleDateFormat("yyyy-MM-dd");

String nowDate = new SimpleDateFormat("yyyy-MM-dd").format(new Date());

The pattern must be specified and must match the input string (note that MM is the month, while mm is minutes); otherwise parsing fails with java.text.ParseException: Unparseable date.
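A minimal sketch of the parse direction, assuming the input uses the yyyy-MM-dd layout. The class and method names are made up for illustration; only SimpleDateFormat and its parse, format, and setLenient methods come from the JDK.

```java
import java.text.ParseException;
import java.text.SimpleDateFormat;
import java.util.Date;

public class DateParseExample {
    private static final String PATTERN = "yyyy-MM-dd"; // MM = month, mm would be minutes

    // Parses a day string; throws ParseException when the text does not match the pattern.
    public static Date parseDay(String text) throws ParseException {
        SimpleDateFormat format = new SimpleDateFormat(PATTERN);
        format.setLenient(false); // reject impossible dates such as 2021-02-30
        return format.parse(text);
    }

    // Convenience wrapper that reports whether the text can be parsed.
    public static boolean isValidDay(String text) {
        try {
            parseDay(text);
            return true;
        } catch (ParseException e) {
            return false;
        }
    }

    public static void main(String[] args) throws ParseException {
        Date parsed = parseDay("2021-06-29");
        System.out.println(new SimpleDateFormat(PATTERN).format(parsed));
        // A string in a different layout triggers the "Unparseable date" exception.
        System.out.println(isValidDay("29/06/2021"));
    }
}
```

SimpleDateFormat is not thread-safe, which is why the sketch creates a new instance per call rather than sharing one.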

Calculating the number of days between two dates, for reference:

int dayNum = (int) ((d2.getTime() - d1.getTime())/(1000 * 3600 * 24));
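The millisecond division above assumes every day is exactly 24 hours long, which can be off by one when the two Date values straddle a daylight saving change. As an alternative sketch (not the original author's approach), java.time on Java 8+ counts calendar days directly. The class and method names here are hypothetical.

```java
import java.time.LocalDate;
import java.time.temporal.ChronoUnit;

public class DayDifferenceExample {
    // Counts whole calendar days from start (inclusive) to end (exclusive).
    // Both arguments use the ISO layout yyyy-MM-dd, which LocalDate.parse accepts by default.
    public static long daysBetween(String start, String end) {
        LocalDate d1 = LocalDate.parse(start);
        LocalDate d2 = LocalDate.parse(end);
        return ChronoUnit.DAYS.between(d1, d2);
    }

    public static void main(String[] args) {
        System.out.println(daysBetween("2021-06-20", "2021-06-29")); // prints 9
    }
}
```

The result is negative when the end date is before the start date, matching the sign of the subtraction in the original expression.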

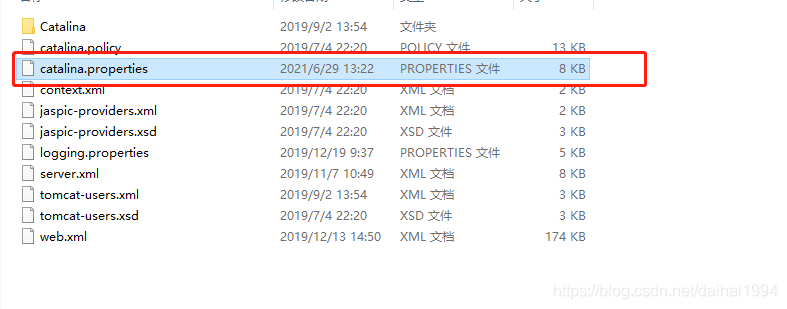

Problem:

Starting a Spring MVC project reports the following at startup:

org.apache.jasper.servlet.TldScanner.scanJars At least one JAR was scanned for TLDs yet contained no TLDs. Enable debug logging for this logger for a complete list of JARs that were scanned but no TLDs were found in them. Skipping unneeded JARs during scanning can improve startup time and JSP compilation time.

Solution:

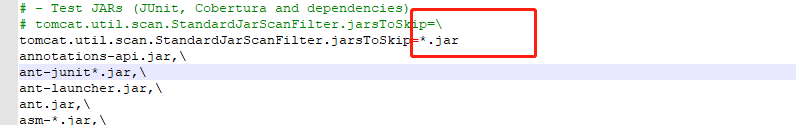

1. Find the catalina.properties file in the conf directory under your Tomcat installation path.

2. Change the value after the equals sign shown in the figure to *.jar (this is most likely the tomcat.util.scan.StandardJarScanFilter.jarsToSkip entry).

3. Then reconfigure your server and restart it.

2021-06-29T17:47:55,131 DEBUG [HiveServer2-Handler-Pool: Thread-52] retry.RetryInvocationHandler: Exception while invoking call #36 ClientNamenodeProtocolTranslatorPB.getFileInfo over null. Not retrying because try once and fail.

org.apache.hadoop.ipc.RemoteException: User: hive is not allowed to impersonate anonymous

at org.apache.hadoop.ipc.Client.getRpcResponse(Client.java:1491) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.ipc.Client.call(Client.java:1437) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.ipc.Client.call(Client.java:1347) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:228) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:116) ~[hadoop-common-3.0.0.jar:?]

at com.sun.proxy.$Proxy31.getFileInfo(Unknown Source) ~[?:?]

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.getFileInfo(ClientNamenodeProtocolTranslatorPB.java:874) ~[hadoop-hdfs-client-3.0.0.jar:?]

at sun.reflect.GeneratedMethodAccessor4.invoke(Unknown Source) ~[?:?]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[?:1.8.0_291]

at java.lang.reflect.Method.invoke(Method.java:498) ~[?:1.8.0_291]

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:422) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:165) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:157) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:95) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:359) ~[hadoop-common-3.0.0.jar:?]

at com.sun.proxy.$Proxy32.getFileInfo(Unknown Source) ~[?:?]

at org.apache.hadoop.hdfs.DFSClient.getFileInfo(DFSClient.java:1697) ~[hadoop-hdfs-client-3.0.0.jar:?]

at org.apache.hadoop.hdfs.DistributedFileSystem$29.doCall(DistributedFileSystem.java:1491) ~[hadoop-hdfs-client-3.0.0.jar:?]

at org.apache.hadoop.hdfs.DistributedFileSystem$29.doCall(DistributedFileSystem.java:1488) ~[hadoop-hdfs-client-3.0.0.jar:?]

at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.hdfs.DistributedFileSystem.getFileStatus(DistributedFileSystem.java:1503) ~[hadoop-hdfs-client-3.0.0.jar:?]

at org.apache.hadoop.fs.FileSystem.exists(FileSystem.java:1668) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.hive.ql.exec.Utilities.ensurePathIsWritable(Utilities.java:4486) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.session.SessionState.createRootHDFSDir(SessionState.java:760) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.session.SessionState.createSessionDirs(SessionState.java:701) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:627) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:586) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.session.HiveSessionImpl.open(HiveSessionImpl.java:179) ~[hive-service-3.1.2.jar:3.1.2]

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) ~[?:1.8.0_291]

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) ~[?:1.8.0_291]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[?:1.8.0_291]

at java.lang.reflect.Method.invoke(Method.java:498) ~[?:1.8.0_291]

at org.apache.hive.service.cli.session.HiveSessionProxy.invoke(HiveSessionProxy.java:78) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.session.HiveSessionProxy.access$000(HiveSessionProxy.java:36) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.session.HiveSessionProxy$1.run(HiveSessionProxy.java:63) ~[hive-service-3.1.2.jar:3.1.2]

at java.security.AccessController.doPrivileged(Native Method) ~[?:1.8.0_291]

at javax.security.auth.Subject.doAs(Subject.java:422) ~[?:1.8.0_291]

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1962) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hive.service.cli.session.HiveSessionProxy.invoke(HiveSessionProxy.java:59) ~[hive-service-3.1.2.jar:3.1.2]

at com.sun.proxy.$Proxy39.open(Unknown Source) ~[?:?]

at org.apache.hive.service.cli.session.SessionManager.createSession(SessionManager.java:425) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.session.SessionManager.openSession(SessionManager.java:373) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.CLIService.openSessionWithImpersonation(CLIService.java:195) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.thrift.ThriftCLIService.getSessionHandle(ThriftCLIService.java:472) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.thrift.ThriftCLIService.OpenSession(ThriftCLIService.java:322) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.rpc.thrift.TCLIService$Processor$OpenSession.getResult(TCLIService.java:1497) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hive.service.rpc.thrift.TCLIService$Processor$OpenSession.getResult(TCLIService.java:1482) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.thrift.ProcessFunction.process(ProcessFunction.java:39) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.thrift.TBaseProcessor.process(TBaseProcessor.java:39) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hive.service.auth.TSetIpAddressProcessor.process(TSetIpAddressProcessor.java:56) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.thrift.server.TThreadPoolServer$WorkerProcess.run(TThreadPoolServer.java:286) ~[hive-exec-3.1.2.jar:3.1.2]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149) ~[?:1.8.0_291]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624) ~[?:1.8.0_291]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_291]

2021-06-29T17:47:55,133 WARN [HiveServer2-Handler-Pool: Thread-52] service.CompositeService: Failed to open session

java.lang.RuntimeException: java.lang.RuntimeException: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.authorize.AuthorizationException): User: hive is not allowed to impersonate anonymous

at org.apache.hive.service.cli.session.HiveSessionProxy.invoke(HiveSessionProxy.java:89) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.session.HiveSessionProxy.access$000(HiveSessionProxy.java:36) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.session.HiveSessionProxy$1.run(HiveSessionProxy.java:63) ~[hive-service-3.1.2.jar:3.1.2]

at java.security.AccessController.doPrivileged(Native Method) ~[?:1.8.0_291]

at javax.security.auth.Subject.doAs(Subject.java:422) ~[?:1.8.0_291]

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1962) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hive.service.cli.session.HiveSessionProxy.invoke(HiveSessionProxy.java:59) ~[hive-service-3.1.2.jar:3.1.2]

at com.sun.proxy.$Proxy39.open(Unknown Source) ~[?:?]

at org.apache.hive.service.cli.session.SessionManager.createSession(SessionManager.java:425) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.session.SessionManager.openSession(SessionManager.java:373) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.CLIService.openSessionWithImpersonation(CLIService.java:195) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.thrift.ThriftCLIService.getSessionHandle(ThriftCLIService.java:472) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.thrift.ThriftCLIService.OpenSession(ThriftCLIService.java:322) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.hive.service.rpc.thrift.TCLIService$Processor$OpenSession.getResult(TCLIService.java:1497) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hive.service.rpc.thrift.TCLIService$Processor$OpenSession.getResult(TCLIService.java:1482) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.thrift.ProcessFunction.process(ProcessFunction.java:39) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.thrift.TBaseProcessor.process(TBaseProcessor.java:39) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hive.service.auth.TSetIpAddressProcessor.process(TSetIpAddressProcessor.java:56) ~[hive-service-3.1.2.jar:3.1.2]

at org.apache.thrift.server.TThreadPoolServer$WorkerProcess.run(TThreadPoolServer.java:286) ~[hive-exec-3.1.2.jar:3.1.2]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149) ~[?:1.8.0_291]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624) ~[?:1.8.0_291]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_291]

Caused by: java.lang.RuntimeException: org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.authorize.AuthorizationException): User: hive is not allowed to impersonate anonymous

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:651) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:586) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.session.HiveSessionImpl.open(HiveSessionImpl.java:179) ~[hive-service-3.1.2.jar:3.1.2]

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) ~[?:1.8.0_291]

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) ~[?:1.8.0_291]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[?:1.8.0_291]

at java.lang.reflect.Method.invoke(Method.java:498) ~[?:1.8.0_291]

at org.apache.hive.service.cli.session.HiveSessionProxy.invoke(HiveSessionProxy.java:78) ~[hive-service-3.1.2.jar:3.1.2]

... 21 more

Caused by: org.apache.hadoop.ipc.RemoteException: User: hive is not allowed to impersonate anonymous

at org.apache.hadoop.ipc.Client.getRpcResponse(Client.java:1491) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.ipc.Client.call(Client.java:1437) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.ipc.Client.call(Client.java:1347) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:228) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:116) ~[hadoop-common-3.0.0.jar:?]

at com.sun.proxy.$Proxy31.getFileInfo(Unknown Source) ~[?:?]

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.getFileInfo(ClientNamenodeProtocolTranslatorPB.java:874) ~[hadoop-hdfs-client-3.0.0.jar:?]

at sun.reflect.GeneratedMethodAccessor4.invoke(Unknown Source) ~[?:?]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[?:1.8.0_291]

at java.lang.reflect.Method.invoke(Method.java:498) ~[?:1.8.0_291]

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:422) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:165) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:157) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:95) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:359) ~[hadoop-common-3.0.0.jar:?]

at com.sun.proxy.$Proxy32.getFileInfo(Unknown Source) ~[?:?]

at org.apache.hadoop.hdfs.DFSClient.getFileInfo(DFSClient.java:1697) ~[hadoop-hdfs-client-3.0.0.jar:?]

at org.apache.hadoop.hdfs.DistributedFileSystem$29.doCall(DistributedFileSystem.java:1491) ~[hadoop-hdfs-client-3.0.0.jar:?]

at org.apache.hadoop.hdfs.DistributedFileSystem$29.doCall(DistributedFileSystem.java:1488) ~[hadoop-hdfs-client-3.0.0.jar:?]

at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.hdfs.DistributedFileSystem.getFileStatus(DistributedFileSystem.java:1503) ~[hadoop-hdfs-client-3.0.0.jar:?]

at org.apache.hadoop.fs.FileSystem.exists(FileSystem.java:1668) ~[hadoop-common-3.0.0.jar:?]

at org.apache.hadoop.hive.ql.exec.Utilities.ensurePathIsWritable(Utilities.java:4486) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.session.SessionState.createRootHDFSDir(SessionState.java:760) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.session.SessionState.createSessionDirs(SessionState.java:701) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:627) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hadoop.hive.ql.session.SessionState.start(SessionState.java:586) ~[hive-exec-3.1.2.jar:3.1.2]

at org.apache.hive.service.cli.session.HiveSessionImpl.open(HiveSessionImpl.java:179) ~[hive-service-3.1.2.jar:3.1.2]

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) ~[?:1.8.0_291]

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62) ~[?:1.8.0_291]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) ~[?:1.8.0_291]

at java.lang.reflect.Method.invoke(Method.java:498) ~[?:1.8.0_291]

at org.apache.hive.service.cli.session.HiveSessionProxy.invoke(HiveSessionProxy.java:78) ~[hive-service-3.1.2.jar:3.1.2]

... 21 more

Solution: add the following configuration to Hadoop's core-site.xml, which allows the hive user to impersonate other users from any host and any group:

    <property>
      <name>hadoop.proxyuser.hive.groups</name>
      <value>*</value>
    </property>
    <property>
      <name>hadoop.proxyuser.hive.hosts</name>
      <value>*</value>
    </property>

Reference: https://blog.csdn.net/github_38358734/article/details/77522798

When using MyBatis-Plus 3.2.0 with Spring Boot 2.1.1, the following error is reported:

Error attempting to get column from result set

1. The most common cause of this error is that the column type in the database does not match the field type in the entity class.

2. That was not my case. The entity class used @Builder without @AllArgsConstructor and @NoArgsConstructor, so it had no no-argument constructor.
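To make the second cause concrete, here is a dependency-free sketch. It uses plain Java instead of Lombok, with hypothetical class names, and only checks through reflection whether a class exposes a no-argument constructor. It does not reproduce MyBatis internals; it just shows the structural difference between the two kinds of entity.

```java
public class NoArgConstructorExample {
    // Roughly what an entity looks like when only a builder-style all-args constructor exists.
    public static class BuilderOnlyUser {
        final Long id;
        final String name;

        BuilderOnlyUser(Long id, String name) {
            this.id = id;
            this.name = name;
        }
    }

    // Roughly what the entity looks like once both constructors are declared.
    public static class MappedUser {
        Long id;
        String name;

        public MappedUser() {
        }

        public MappedUser(Long id, String name) {
            this.id = id;
            this.name = name;
        }
    }

    // Returns true when the class declares a constructor that takes no arguments.
    public static boolean hasNoArgConstructor(Class<?> type) {
        try {
            type.getDeclaredConstructor();
            return true;
        } catch (NoSuchMethodException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        System.out.println(hasNoArgConstructor(BuilderOnlyUser.class)); // prints false
        System.out.println(hasNoArgConstructor(MappedUser.class));      // prints true
    }
}
```

With Lombok, the equivalent of MappedUser would be an entity annotated with @Builder, @NoArgsConstructor, and @AllArgsConstructor together.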

1. The build directory and the source code directory are at the same level.

2. After the build directory is selected, the executable's directory is automatically set to the debug or release folder.

3. The debug or release folder is at the same level as the source code.

4. The working directory for Run is at the same level as the source code directory.

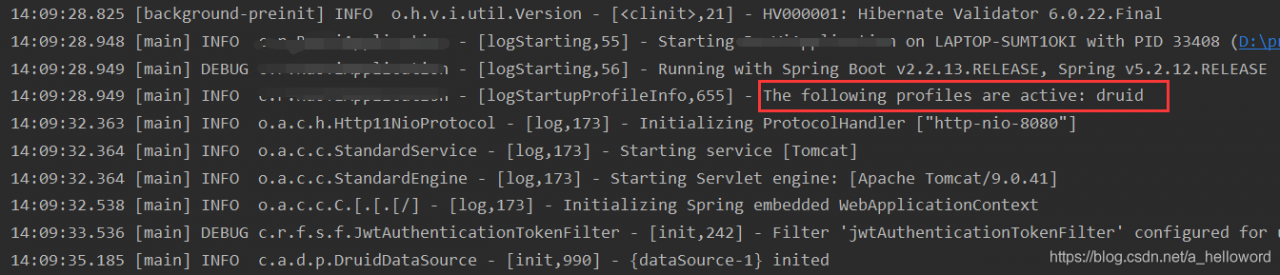

Launching a Spring Boot project today reported the following error:

Caused by: java.lang.IllegalArgumentException: Could not resolve placeholder

'spring.datasource.druid.initialSize' in value "${spring.datasource.druid.initialSize}"

First, confirm that the property exists in the configuration file and that its name is spelled correctly. If it does, check whether the configuration file is actually being loaded. Different environments usually have their own configuration files, so application.yml must specify which one to use:

    spring:
      profiles:
        active: druid

During startup, the log shows which profile is active. If nothing is printed, the setting has not taken effect.

If all of the above checks out, try Build -> Rebuild Project. Rebuilding fixed it for me.

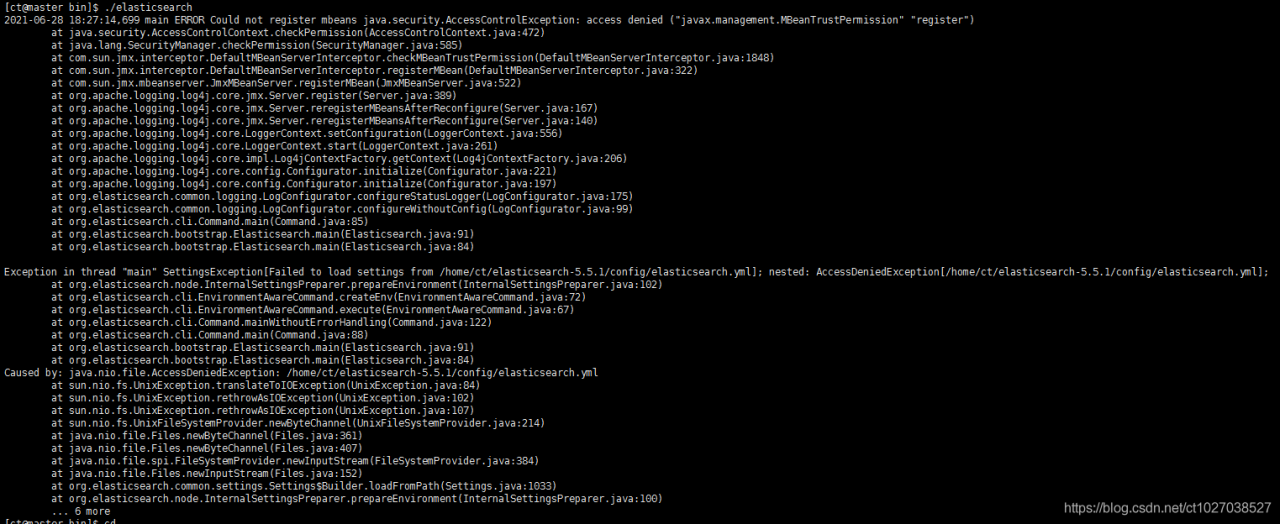

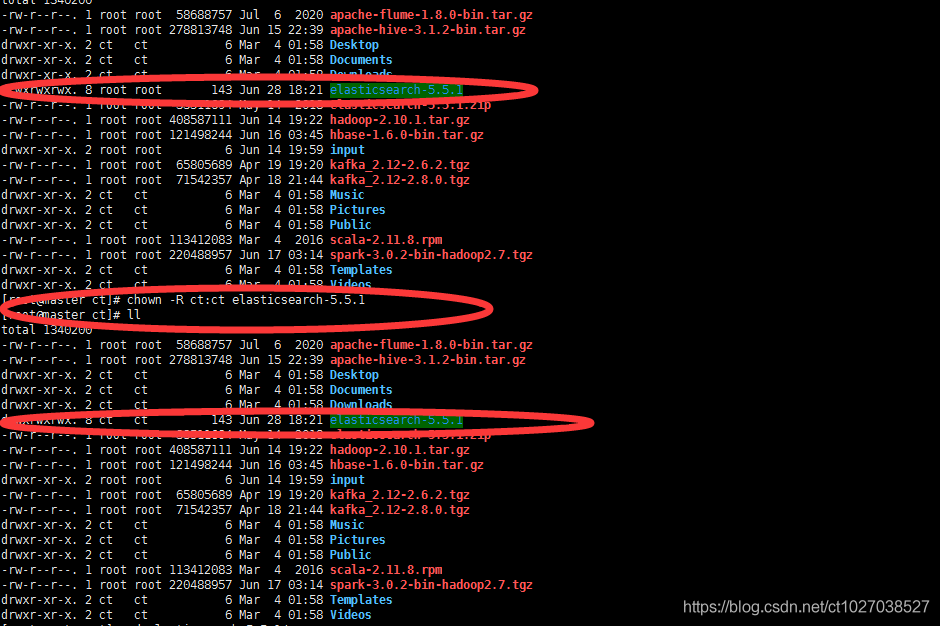

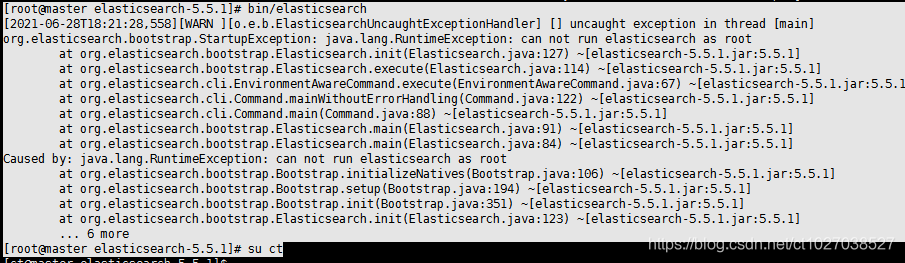

Error 1: By default Elasticsearch cannot be started as root; switch to a non-root user.

Error 2: The Elasticsearch installation directory belongs to the root group; change its ownership to a non-root user and group.