Adding the maven-jar-plugin to the POM file

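```xml
<build>
    <pluginManagement>
        <plugins>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-jar-plugin</artifactId>
                <version>2.4</version>
                <configuration>
                    <archive>
                        <manifest>
                            <!-- write a Class-Path entry listing the dependency jars into MANIFEST.MF -->
                            <addClasspath>true</addClasspath>
                            <!-- prefix for each Class-Path entry: dependencies are expected in lib/ next to the jar -->
                            <classpathPrefix>lib/</classpathPrefix>
                            <!-- fully qualified name of the class that contains main(); written as Main-Class -->
                            <mainClass>com.pro.main</mainClass>
                        </manifest>
                    </archive>
                </configuration>
            </plugin>
        </plugins>
    </pluginManagement>
</build>
```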
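With this configuration, maven-jar-plugin writes a `Main-Class` attribute and a `Class-Path` attribute into `META-INF/MANIFEST.MF`, the latter listing the project's dependency jars under the `lib/` prefix. As a rough illustration of that part of the manifest, the sketch below builds an equivalent one with `java.util.jar.Manifest`. The class name `ManifestSketch` and the dependency file names are hypothetical; the real plugin derives the entries from the project's dependencies and adds further attributes of its own.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.util.Arrays;
import java.util.List;
import java.util.jar.Attributes;
import java.util.jar.Manifest;

public class ManifestSketch {

    // Builds a manifest shaped like the one the plugin configuration produces:
    // Main-Class from <mainClass>, Class-Path from <classpathPrefix> + each dependency file name.
    static Manifest buildManifest(String mainClass, String classpathPrefix, List<String> dependencyJars) {
        Manifest manifest = new Manifest();
        Attributes attrs = manifest.getMainAttributes();
        attrs.put(Attributes.Name.MANIFEST_VERSION, "1.0"); // conventional first attribute of a manifest
        attrs.put(Attributes.Name.MAIN_CLASS, mainClass);
        StringBuilder classPath = new StringBuilder();
        for (String jar : dependencyJars) {
            if (classPath.length() > 0) {
                classPath.append(' '); // Class-Path entries are separated by spaces
            }
            classPath.append(classpathPrefix).append(jar);
        }
        attrs.put(Attributes.Name.CLASS_PATH, classPath.toString());
        return manifest;
    }

    public static void main(String[] args) throws IOException {
        // Hypothetical dependency file names, for illustration only
        Manifest manifest = buildManifest("com.pro.main", "lib/",
                Arrays.asList("hadoop-common-2.7.3.jar", "commons-io-2.4.jar"));
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        manifest.write(out);
        System.out.print(out.toString("UTF-8"));
    }
}
```

The `lib/` entries only help if the dependency jars are actually shipped in a `lib/` folder next to the jar, because `Class-Path` entries are resolved relative to the jar's location; the Hadoop classes themselves are supplied by the `hadoop jar` command at run time.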

Code in the main method of the driver class

```java
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IntWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class Submitter {

    public static void main(String[] args) throws Exception {
        if (args.length != 2) {
            System.err.println("Usage: Submitter <input path> <output path>");
            System.exit(-1);
        }

        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);

        // 1. Tell Hadoop which jar to ship to the cluster: the one containing this class
        job.setJarByClass(Submitter.class);

        // 2. Mapper and reducer implementation classes for this job
        //    (WordCountMapper and WordConutReduce are the project's own classes, not shown here)
        job.setMapperClass(WordCountMapper.class);
        job.setReducerClass(WordConutReduce.class);

        // 3. Key/value types emitted by the map phase
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(IntWritable.class);

        // 4. Key/value types emitted by the reduce phase (the final output)
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(IntWritable.class);

        // The job fails if the output directory already exists, so delete it first
        Path outputPath = new Path(args[1]);
        FileSystem fileSystem = outputPath.getFileSystem(conf); // file system that owns this path (e.g. HDFS)
        if (fileSystem.exists(outputPath)) {
            fileSystem.delete(outputPath, true); // true = delete recursively, including any files inside
        }

        // 5. Input data to process and directory to write the results to
        FileInputFormat.setInputPaths(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, outputPath);

        // 6. Number of reduce tasks to start
        job.setNumReduceTasks(1);

        // 7. Submit the job and wait for it; true prints progress to the console
        boolean success = job.waitForCompletion(true);
        System.exit(success ? 0 : 1);
    }
}
```

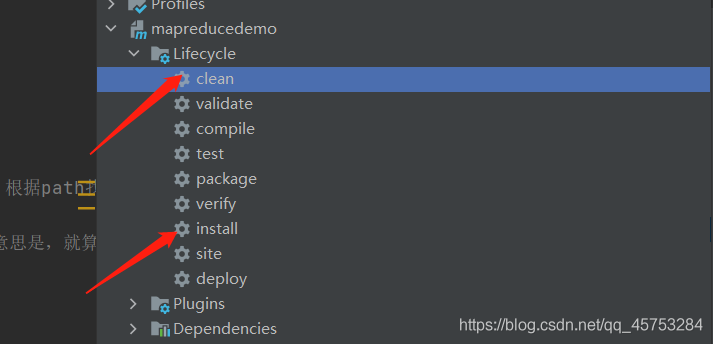
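The driver references `WordCountMapper` and `WordConutReduce`, which are not shown here. Assuming they implement the usual word count (the mapper emits `(word, 1)` for every word and the reducer sums the counts per word), the plain-Java sketch below simulates the map, shuffle, and reduce phases in memory, without Hadoop, to show what the job computes. It is an illustration rather than the project's actual classes; the class name `WordCountSimulation` and splitting on whitespace are assumptions.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

public class WordCountSimulation {

    // Map phase: emit (word, 1) for every whitespace-separated word in a line
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String word : line.trim().split("\\s+")) {
            if (!word.isEmpty()) {
                pairs.add(new SimpleEntry<>(word, 1));
            }
        }
        return pairs;
    }

    // Shuffle phase: group all values by key; the TreeMap keeps keys sorted, as Hadoop does before reducing
    static Map<String, List<Integer>> shuffle(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (Map.Entry<String, Integer> pair : pairs) {
            grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
        }
        return grouped;
    }

    // Reduce phase: sum the counts collected for each word
    static Map<String, Integer> reduce(Map<String, List<Integer>> grouped) {
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : grouped.entrySet()) {
            int sum = 0;
            for (int value : entry.getValue()) {
                sum += value;
            }
            counts.put(entry.getKey(), sum);
        }
        return counts;
    }

    static Map<String, Integer> wordCount(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            pairs.addAll(map(line));
        }
        return reduce(shuffle(pairs));
    }

    public static void main(String[] args) {
        // prints {hadoop=1, hello=2, maven=1}
        System.out.println(wordCount(Arrays.asList("hello hadoop", "hello maven")));
    }
}
```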

Package the project as a jar with Maven (for example with `mvn clean package`) and copy the jar to the Hadoop environment.
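Before copying the jar, it can help to confirm that its manifest has the expected `Main-Class` and that the driver class was actually packaged, since a missing class only shows up later as a `ClassNotFoundException`. The sketch below uses only `java.util.jar`; the class name `JarCheck` is hypothetical, and the jar path and class name are passed in as arguments.

```java
import java.io.IOException;
import java.util.jar.Attributes;
import java.util.jar.JarFile;
import java.util.jar.Manifest;

public class JarCheck {

    // Returns the Main-Class attribute from the jar's manifest, or null if there is none
    static String mainClassOf(String jarPath) throws IOException {
        try (JarFile jar = new JarFile(jarPath)) {
            Manifest manifest = jar.getManifest(); // null when the jar has no META-INF/MANIFEST.MF
            return manifest == null ? null : manifest.getMainAttributes().getValue(Attributes.Name.MAIN_CLASS);
        }
    }

    // Checks whether a class given by its fully qualified name was packaged into the jar
    static boolean containsClass(String jarPath, String className) throws IOException {
        try (JarFile jar = new JarFile(jarPath)) {
            return jar.getJarEntry(className.replace('.', '/') + ".class") != null;
        }
    }

    public static void main(String[] args) throws IOException {
        // args[0]: path to the built jar, args[1]: fully qualified class name to look for
        System.out.println("Main-Class: " + mainClassOf(args[0]));
        System.out.println(args[1] + " packaged: " + containsClass(args[0], args[1]));
    }
}
```

Maven writes the jar to `target/`, so a run might look like `java JarCheck target/mapreducedemo-1.0-SNAPSHOT.jar com.pro.Submitter`, where the package `com.pro` for `Submitter` is only an assumption.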

Upload the input text file to HDFS:

hadoop fs -put xxx.info /input

On the Hadoop machine, start the job with the following command (the two paths become `args[0]` and `args[1]` in `main`):

hadoop jar mapreducedemo-1.0-SNAPSHOT.jar /input /output
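With one reduce task, the results end up in a single file in the output directory, normally `part-r-00000`, which can be viewed with `hadoop fs -cat /output/part-r-00000`. The job does not set an output format, so the default `TextOutputFormat` writes one `key<TAB>value` line per word. If that file is copied locally (for example with `hadoop fs -get`), a sketch like the one below reads it back into a map; the class name `WordCountOutputReader` is hypothetical, and the file name and tab separator are assumed to be the Hadoop defaults.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.LinkedHashMap;
import java.util.Map;

public class WordCountOutputReader {

    // Parses "word<TAB>count" lines, the default TextOutputFormat layout
    static Map<String, Integer> parse(BufferedReader reader) throws IOException {
        Map<String, Integer> counts = new LinkedHashMap<>(); // keeps the file's (sorted) order
        String line;
        while ((line = reader.readLine()) != null) {
            int tab = line.lastIndexOf('\t');
            if (tab < 0) {
                continue; // skip lines without a separator
            }
            counts.put(line.substring(0, tab), Integer.parseInt(line.substring(tab + 1).trim()));
        }
        return counts;
    }

    public static void main(String[] args) throws IOException {
        // args[0]: local copy of the reducer output, e.g. part-r-00000
        try (BufferedReader reader = Files.newBufferedReader(Paths.get(args[0]), StandardCharsets.UTF_8)) {
            parse(reader).forEach((word, count) -> System.out.println(word + " -> " + count));
        }
    }
}
```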
