Version: cdh6.3.2

Flink version: 1.13.2

CDH hive version: 2.1.1

Error message:

java.lang.NoSuchMethodError: org.apache.parquet.hadoop.ParquetWriter$Builder.<init>(Lorg/apache/parquet/io/OutputFile;)V

at org.apache.flink.formats.parquet.row.ParquetRowDataBuilder.<init>(ParquetRowDataBuilder.java:55) ~[flink-parquet_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.formats.parquet.row.ParquetRowDataBuilder$FlinkParquetBuilder.createWriter(ParquetRowDataBuilder.java:124) ~[flink-parquet_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.formats.parquet.ParquetWriterFactory.create(ParquetWriterFactory.java:56) ~[flink-parquet_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.table.filesystem.FileSystemTableSink$ProjectionBulkFactory.create(FileSystemTableSink.java:624) ~[flink-table-blink_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.functions.sink.filesystem.BulkBucketWriter.openNew(BulkBucketWriter.java:75) ~[flink-table-blink_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.functions.sink.filesystem.OutputStreamBasedPartFileWriter$OutputStreamBasedBucketWriter.openNewInProgressFile(OutputStreamBasedPartFileWriter.java:90) ~[flink-table-blink_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.functions.sink.filesystem.BulkBucketWriter.openNewInProgressFile(BulkBucketWriter.java:36) ~[flink-table-blink_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.functions.sink.filesystem.Bucket.rollPartFile(Bucket.java:243) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.functions.sink.filesystem.Bucket.write(Bucket.java:220) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.functions.sink.filesystem.Buckets.onElement(Buckets.java:305) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.functions.sink.filesystem.StreamingFileSinkHelper.onElement(StreamingFileSinkHelper.java:103) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.table.filesystem.stream.AbstractStreamingWriter.processElement(AbstractStreamingWriter.java:140) ~[flink-table-blink_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.runtime.tasks.CopyingChainingOutput.pushToOperator(CopyingChainingOutput.java:71) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.runtime.tasks.CopyingChainingOutput.collect(CopyingChainingOutput.java:46) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.runtime.tasks.CopyingChainingOutput.collect(CopyingChainingOutput.java:26) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.operators.CountingOutput.collect(CountingOutput.java:50) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.operators.CountingOutput.collect(CountingOutput.java:28) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at StreamExecCalc$35.processElement(Unknown Source) ~[?:?]

at org.apache.flink.streaming.runtime.tasks.CopyingChainingOutput.pushToOperator(CopyingChainingOutput.java:71) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.runtime.tasks.CopyingChainingOutput.collect(CopyingChainingOutput.java:46) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.runtime.tasks.CopyingChainingOutput.collect(CopyingChainingOutput.java:26) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.operators.CountingOutput.collect(CountingOutput.java:50) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.operators.CountingOutput.collect(CountingOutput.java:28) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.table.runtime.operators.source.InputConversionOperator.processElement(InputConversionOperator.java:128) ~[flink-table-blink_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.runtime.tasks.CopyingChainingOutput.pushToOperator(CopyingChainingOutput.java:71) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.runtime.tasks.CopyingChainingOutput.collect(CopyingChainingOutput.java:46) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.runtime.tasks.CopyingChainingOutput.collect(CopyingChainingOutput.java:26) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.operators.CountingOutput.collect(CountingOutput.java:50) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.operators.CountingOutput.collect(CountingOutput.java:28) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.operators.StreamSourceContexts$ManualWatermarkContext.processAndCollectWithTimestamp(StreamSourceContexts.java:322) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.operators.StreamSourceContexts$WatermarkContext.collectWithTimestamp(StreamSourceContexts.java:426) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.connectors.kafka.internals.AbstractFetcher.emitRecordsWithTimestamps(AbstractFetcher.java:365) ~[flink-connector-kafka_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.connectors.kafka.internals.KafkaFetcher.partitionConsumerRecordsHandler(KafkaFetcher.java:183) ~[flink-connector-kafka_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.connectors.kafka.internals.KafkaFetcher.runFetchLoop(KafkaFetcher.java:142) ~[flink-connector-kafka_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumerBase.run(FlinkKafkaConsumerBase.java:826) ~[flink-connector-kafka_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.operators.StreamSource.run(StreamSource.java:110) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.api.operators.StreamSource.run(StreamSource.java:66) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

at org.apache.flink.streaming.runtime.tasks.SourceStreamTask$LegacySourceFunctionThread.run(SourceStreamTask.java:269) ~[flink-dist_2.11-1.13.2.jar:1.13.2]

2021-08-15 10:45:37,863 INFO org.apache.flink.runtime.resourcemanager.slotmanager.DeclarativeSlotManager [] - Clearing resource requirements of job e8f0af4bb984507ec9f69f07fa2df3d5

2021-08-15 10:45:37,865 INFO org.apache.flink.runtime.executiongraph.failover.flip1.RestartPipelinedRegionFailoverStrategy [] - Calculating tasks to restart to recover the failed task cbc357ccb763df2852fee8c4fc7d55f2_0.

2021-08-15 10:45:37,866 INFO org.apache.flink.runtime.executiongraph.failover.flip1.RestartPipelinedRegionFailoverStrategy [] - 1 tasks should be restarted to recover the failed task cbc357ccb763df2852fee8c4fc7d55f2_0.

2021-08-15 10:45:37,867 INFO org.apache.flink.runtime.executiongraph.ExecutionGraph

Following the guidance on the official Flink website, I added the flink-parquet dependency together with parquet-hadoop-1.11.1.jar and parquet-common-1.11.1.jar. The error above persisted: the constructor named in the stack trace still could not be found.

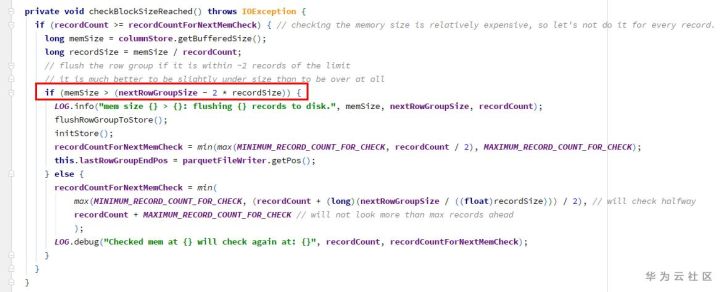

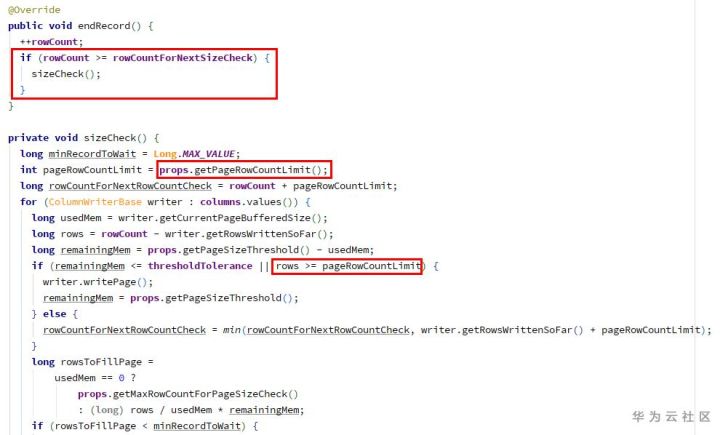

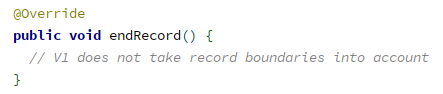

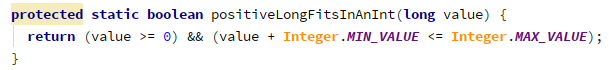

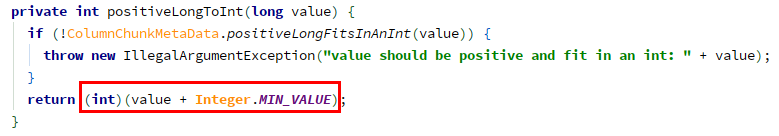

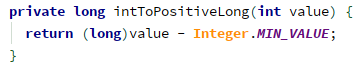

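Reason:

The Parquet classes bundled in CDH Hive's parquet-hadoop-bundle.jar are a different version from the ones flink-parquet expects. If the bundle's copy of `ParquetWriter$Builder` is loaded first, it has no constructor that takes an `OutputFile`, which is exactly what the `NoSuchMethodError` reports.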
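To confirm which jar actually supplies the conflicting class, a small reflection check can help. The following is only a minimal sketch, not part of Flink or Parquet: the class name `ClassOriginCheck` and its helper methods are hypothetical, and it assumes you run it with the same classpath as the failing job (for example, the jars in Flink's lib directory plus the Hive jars).

```java
import java.lang.reflect.Constructor;
import java.security.CodeSource;
import java.util.Arrays;

public class ClassOriginCheck {

    /** Returns the jar or directory a class was loaded from, or a marker if unknown. */
    static String locationOf(Class<?> cls) {
        CodeSource source = cls.getProtectionDomain().getCodeSource();
        if (source == null || source.getLocation() == null) {
            return "(bootstrap/unknown)";
        }
        return source.getLocation().toString();
    }

    /** True if the class declares a constructor with exactly these parameter type names. */
    static boolean hasConstructor(Class<?> cls, String... parameterTypeNames) {
        for (Constructor<?> c : cls.getDeclaredConstructors()) {
            String[] names = Arrays.stream(c.getParameterTypes())
                    .map(Class::getName)
                    .toArray(String[]::new);
            if (Arrays.equals(names, parameterTypeNames)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        // Defaults are taken from the NoSuchMethodError in the stack trace.
        String className = args.length > 0 ? args[0]
                : "org.apache.parquet.hadoop.ParquetWriter$Builder";
        String paramType = args.length > 1 ? args[1]
                : "org.apache.parquet.io.OutputFile";
        try {
            // Load without initializing; we only want to inspect the class.
            Class<?> cls = Class.forName(className, false,
                    Thread.currentThread().getContextClassLoader());
            System.out.println("Loaded from: " + locationOf(cls));
            System.out.println("Has constructor(" + paramType + "): "
                    + hasConstructor(cls, paramType));
        } catch (ClassNotFoundException | LinkageError e) {
            System.out.println("Could not inspect " + className + ": " + e);
        }
    }
}
```

If the reported location is parquet-hadoop-bundle.jar and the constructor check prints `false`, the Hive bundle is shadowing the Parquet classes that flink-parquet needs.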

**Solution:**

1. Flink already provides the flink-parquet package, which contains the matching Parquet dependencies, so it is enough to make sure that the dependencies provided by Flink are loaded first when the job runs. flink-parquet can be packaged and distributed together with the job code.

2. Because the package versions are inconsistent, you can also consider upgrading the corresponding component. Note that you cannot simply swap in a different version of parquet-hadoop-bundle.jar: a search of the Maven repository turned up no usable package. That leaves upgrading Hive or downgrading Flink.